Finding Local Linear Correlations in High Dimensional Data


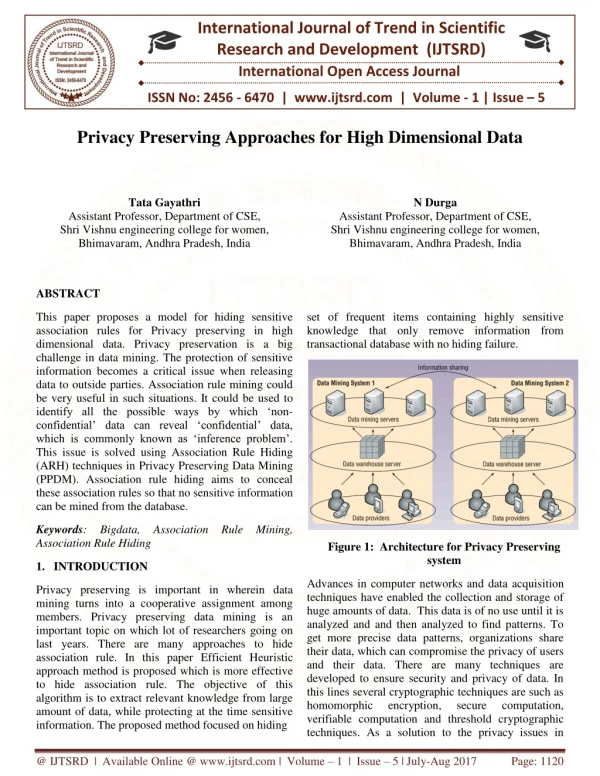

This work by Xiang Zhang, Feng Pan, and Wei Wang from the University of North Carolina at Chapel Hill explores the identification of local linear correlations within high-dimensional datasets. It highlights the limitations of existing methods such as feature selection, transformation, and subspace clustering. The authors introduce a formalized approach to identify strongly correlated feature subsets, emphasizing the monotonic properties of these subsets for efficient algorithm development. The study presents the CARE algorithm, designed to effectively select feature and point subsets that reveal strong correlations.


Presentation Transcript

Finding Local Linear Correlations in High Dimensional Data • Xiang Zhang, Feng Pan, Wei Wang • University of North Carolina at Chapel Hill • Speaker: Xiang Zhang

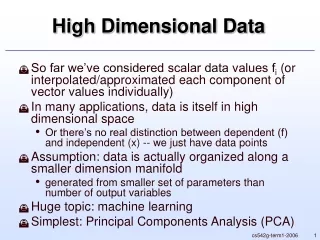

Finding Latent Patterns in High Dimensional Data • An important research problem with wide applications • biology (gene expression analysis) • customer transactions, and so on. • Common approaches • feature selection • feature transformation • subspace clustering

Existing Approaches • Feature selection • find a single representative subset of features that are most relevant for the data mining task at hand • Feature transformation • find a set of new (transformed) features that contain the information in the original data as much as possible • Principal Component Analysis (PCA) • Correlation clustering • find clusters of data points that may not exist in the axis parallel subspaces but only exist in the projected subspaces.

Motivation Example • linearly correlated genes • Question: How to find these local linear correlations (using existing methods)?

Applying PCA — Correlated? • PCA is an effective way to determine whether a set of features is strongly correlated • a few eigenvectors describe most variance in the dataset • small amount of variance represented by the remaining eigenvectors • small residual variance indicates strong correlation • A global transformation applied to the entire dataset
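The residual-variance test described on this slide can be sketched in a few lines. This is a minimal illustration, not code from the talk: the helper name `residual_variance_ratio` and the synthetic data are assumptions made for the example.

```python
import numpy as np

def residual_variance_ratio(X, k):
    """Share of total variance carried by the k smallest-eigenvalue directions."""
    cov = np.cov(X, rowvar=False)            # rows are points, columns are features
    eigvals = np.linalg.eigvalsh(cov)        # eigenvalues in ascending order
    eigvals = np.clip(eigvals, 0.0, None)    # guard against tiny negative round-off
    return eigvals[:k].sum() / eigvals.sum()

# Hypothetical data: three features with x3 ≈ x1 + x2, and three unrelated features.
rng = np.random.default_rng(0)
a, b = rng.normal(size=(2, 500))
correlated = np.column_stack([a, b, a + b + 0.01 * rng.normal(size=500)])
independent = rng.normal(size=(500, 3))

ratio_corr = residual_variance_ratio(correlated, k=1)
ratio_ind = residual_variance_ratio(independent, k=1)
print(ratio_corr, ratio_ind)
```

The correlated features should give a ratio close to zero, while the independent ones should give a ratio near one third, since each of three unrelated directions carries a similar share of the variance.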

Applying PCA – Representation? • The linear correlation is represented as the hyperplane that is orthogonal to the eigenvectors with the minimum variances • Figure labels: [1, -1, 1]; embedded linear correlations; linear correlations reestablished by full-dimensional PCA
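To make the hyperplane representation concrete, the sketch below recovers the coefficients of a linear relation from the minimum-variance eigenvector. The relation x1 - x2 + x3 ≈ 0 is a hypothetical example chosen so that the expected normal vector is [1, -1, 1]; it is not taken from the talk's dataset.

```python
import numpy as np

# Hypothetical data satisfying x1 - x2 + x3 ≈ 0 up to small noise.
rng = np.random.default_rng(1)
x1, x2 = rng.normal(size=(2, 400))
x3 = x2 - x1 + 0.01 * rng.normal(size=400)
X = np.column_stack([x1, x2, x3])

# eigh returns eigenvalues in ascending order, eigenvectors as columns.
eigvals, eigvecs = np.linalg.eigh(np.cov(X, rowvar=False))

normal = eigvecs[:, 0]          # direction with the minimum variance
normal = normal / normal[0]     # rescale so the first coefficient is 1 (removes sign ambiguity)
print(np.round(normal, 2))
```

The rescaled vector gives the coefficients of the hyperplane through the data mean on which the points approximately lie.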

Applying Bi-clustering or Correlation Clustering Methods • Applied to the linearly correlated genes • Correlation clustering • no obvious clustering structure • Bi-clustering • no strong pair-wise correlations

Revisiting Existing Work • Feature selection • finds only one representative subset of features • Feature transformation • performs one and the same feature transformation for the entire dataset • does not really eliminate the impact of any original attributes • Correlation clustering • projected subspaces are usually found by applying a standard feature transformation method, such as PCA

Local Linear Correlations - formalization • Idea: formalize local linear correlations as strongly correlated feature subsets • Determining if a feature subset is correlated • small residual variance • The correlation may not be supported by all data points -- noise, domain knowledge… • supported by a large portion of the data points

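Problem Formalization • Let F (m by n) be a submatrix of the dataset D (M by N) • Let $\{\lambda_i\}_{i=1}^{n}$ be the eigenvalues of the covariance matrix of F, arranged in ascending order • F is a strongly correlated feature subset if

$$\text{(1)}\quad \frac{\sum_{i=1}^{k}\lambda_i}{\sum_{i=1}^{n}\lambda_i} \le \varepsilon \qquad \text{and} \qquad \text{(2)}\quad \frac{m}{M} \ge \delta$$

• In (1), the numerator is the variance on the k eigenvectors having the smallest eigenvalues (residual variance) and the denominator is the total variance • In (2), m is the number of supporting data points and M is the total number of data points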
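A minimal sketch of the two-part test follows, assuming the conditions read as "residual variance ratio at most ε" and "fraction of supporting points at least δ". The function name `is_strongly_correlated` and the synthetic matrix `D` are hypothetical and serve only as illustration.

```python
import numpy as np

def is_strongly_correlated(F, M, k, eps, delta):
    """Check both conditions for an m-by-n submatrix F of an M-row dataset."""
    m, n = F.shape
    eigvals = np.linalg.eigvalsh(np.cov(F, rowvar=False))   # ascending order
    eigvals = np.clip(eigvals, 0.0, None)                   # remove round-off negatives
    residual = eigvals[:k].sum() / eigvals.sum()            # condition (1): residual variance share
    support = m / M                                         # condition (2): share of supporting points
    return bool(residual <= eps and support >= delta)

# Hypothetical dataset: features 0, 1, 2 are linearly related on the first 150 of 200 points.
rng = np.random.default_rng(3)
D = rng.normal(size=(200, 5))
D[:150, 2] = D[:150, 0] + D[:150, 1] + 0.01 * rng.normal(size=150)

F = D[:150][:, [0, 1, 2]]
result = is_strongly_correlated(F, M=D.shape[0], k=1, eps=0.01, delta=0.5)
unrelated = is_strongly_correlated(D[:, [0, 1, 3]], M=D.shape[0], k=1, eps=0.01, delta=0.5)
print(result, unrelated)
```

Here the submatrix covers 75% of the points, so it passes the support threshold of 0.5, and its residual variance is far below 0.01.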

Problem Formalization • Let F (m by n) be a submatrix of the dataset D (M by N) • k and ε together control the strength of the correlation • larger k, stronger correlation • smaller ε, stronger correlation • Figure: eigenvalues plotted against eigenvalue id, illustrating larger k and smaller ε

Goal • Goal: to find all strongly correlated feature subsets • Enumerate all sub-matrices? • Not feasible ($2^{M \times N}$ sub-matrices in total) • Efficient algorithm needed • Any property we can use? • Monotonicity of the objective function

Monotonicity • Monotonic w.r.t. the feature subset • If a feature subset is strongly correlated, all its supersets are also strongly correlated • Derived from the Interlacing Eigenvalue Theorem • Allows us to focus on finding the smallest feature subsets that are strongly correlated • Enables an efficient algorithm: no exhaustive enumeration needed
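The monotonicity claim can be checked numerically. The sketch below, on hypothetical synthetic data, grows a feature subset one feature at a time and records the residual variance ratio on a fixed set of points. By eigenvalue interlacing the k smallest eigenvalues cannot increase when a feature is added, while the total variance cannot decrease, so the ratio should never go up.

```python
import numpy as np

def residual_ratio(X, k):
    """Variance share of the k smallest-eigenvalue directions of X's covariance."""
    eigvals = np.clip(np.linalg.eigvalsh(np.cov(X, rowvar=False)), 0.0, None)
    return eigvals[:k].sum() / eigvals.sum()

# Hypothetical data: feature 2 is roughly the sum of features 0 and 1.
rng = np.random.default_rng(2)
X = rng.normal(size=(300, 6))
X[:, 2] = X[:, 0] + X[:, 1] + 0.05 * rng.normal(size=300)

k = 1
subset = [0, 1, 2]
# Ratios for the subset itself and for three nested supersets.
ratios = [residual_ratio(X[:, subset + extra], k)
          for extra in ([], [3], [3, 4], [3, 4, 5])]
print(ratios)
```

Any threshold ε met by the smallest subset is therefore also met by every superset in the chain.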

The CARE Algorithm • Selecting the feature subsets • Enumerate feature subsets from smaller size to larger size (DFS or BFS) • If a feature subset is strongly correlated, then its supersets are pruned (monotonicity of the objective function) • Further pruning possible (refer to paper for details)
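The enumeration with superset pruning might look like the following sketch. It is not the CARE implementation: it uses all data points (no point selection), walks subsets level by level with `itertools.combinations`, omits the further pruning mentioned on the slide, and the name `minimal_correlated_subsets` and the `max_size` cap are assumptions added for the example.

```python
from itertools import combinations
import numpy as np

def residual_ratio(X, k):
    """Variance share of the k smallest-eigenvalue directions of X's covariance."""
    eigvals = np.clip(np.linalg.eigvalsh(np.cov(X, rowvar=False)), 0.0, None)
    return eigvals[:k].sum() / eigvals.sum()

def minimal_correlated_subsets(X, k, eps, max_size):
    """Level-wise search for the smallest feature subsets with ratio <= eps."""
    found = []
    for size in range(k + 1, max_size + 1):          # a subset needs more than k features
        for subset in combinations(range(X.shape[1]), size):
            if any(set(f) <= set(subset) for f in found):
                continue                             # superset of a result: pruned by monotonicity
            if residual_ratio(X[:, list(subset)], k) <= eps:
                found.append(subset)
    return found

# Hypothetical data with two embedded relations: f2 ≈ f0 + f1 and f5 ≈ f3 - f4.
rng = np.random.default_rng(4)
X = rng.normal(size=(500, 6))
X[:, 2] = X[:, 0] + X[:, 1] + 0.05 * rng.normal(size=500)
X[:, 5] = X[:, 3] - X[:, 4] + 0.05 * rng.normal(size=500)

found = minimal_correlated_subsets(X, k=1, eps=0.01, max_size=4)
print(found)
```

Because supersets of a reported subset are skipped, the output contains only the minimal subsets; no pair of features reaches the threshold here, so the two triples are reported.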

Monotonicity • Non-monotonic w.r.t. the point subset • Adding a point to (or deleting a point from) the supporting point set can either increase or decrease the correlation among the features • Exhaustive enumeration infeasible: effective heuristic needed

The CARE Algorithm • Selecting the point subsets • A feature subset may be correlated only on a subset of the data points • If a feature subset is not strongly correlated on all data points, how to choose the proper point subset?

The CARE Algorithm • Successive point deletion heuristic • greedy algorithm: in each iteration, delete the point whose removal yields the maximum increase in the correlation among the subset of features • Inefficient: the objective function must be evaluated for every remaining data point in each iteration
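A rough sketch of the greedy heuristic is given below, assuming "correlation" is measured by the residual variance ratio, and that deletion stops once the ratio drops to ε or only a fraction δ of the points remains. The function name, the stopping rule, and the toy data are assumptions for illustration.

```python
import numpy as np

def residual_ratio(X, k):
    """Variance share of the k smallest-eigenvalue directions of X's covariance."""
    eigvals = np.clip(np.linalg.eigvalsh(np.cov(X, rowvar=False)), 0.0, None)
    return eigvals[:k].sum() / eigvals.sum()

def successive_point_deletion(X, k, eps, delta):
    """Greedily drop the point whose removal lowers the residual ratio the most."""
    M = X.shape[0]
    keep = np.arange(M)
    min_points = int(np.ceil(delta * M))
    while residual_ratio(X[keep], k) > eps and len(keep) > min_points:
        # One objective evaluation per remaining point: this is the costly part.
        trial = [residual_ratio(X[np.delete(keep, i)], k) for i in range(len(keep))]
        keep = np.delete(keep, int(np.argmin(trial)))
    return keep, residual_ratio(X[keep], k)

# Hypothetical data: 60 points near the line x2 = x1, plus 6 points far off it (indices 60..65).
rng = np.random.default_rng(5)
x1 = 2.0 * rng.normal(size=60)
inliers = np.column_stack([x1, x1 + 0.02 * rng.normal(size=60)])
outliers = np.array([[3, -3], [-3, 3], [2, -2], [-2, 2], [1.5, -1.5], [-1.5, 1.5]], dtype=float)
X = np.vstack([inliers, outliers])

keep, final_ratio = successive_point_deletion(X, k=1, eps=0.001, delta=0.8)
print(len(keep), final_ratio)
```

Each iteration recomputes an eigen-decomposition for every candidate deletion, which illustrates why the slide calls this heuristic inefficient.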

The CARE Algorithm • Distance-based point deletion heuristic • Let S1 be the subspace spanned by the k eigenvectors with the smallest eigenvalues • Let S2 be the subspace spanned by the remaining n-k eigenvectors • Intuition: Try to reduce the variance in S1 as much as possible while retaining the variance in S2 • Directly delete (1-δ)M points having large variance in S1 and small variance in S2 (refer to paper for details)
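The slide defers the exact deletion criterion to the paper, so the sketch below substitutes a simple scoring rule of its own: each point's squared projection onto S1, minus its squared projection onto S2, with both terms normalized by their averages. That rule, the function name, and the toy data are assumptions, not the authors' method. The point it illustrates is that a single eigen-decomposition suffices to pick all (1-δ)M deletions at once.

```python
import numpy as np

def residual_ratio(X, k):
    """Variance share of the k smallest-eigenvalue directions of X's covariance."""
    eigvals = np.clip(np.linalg.eigvalsh(np.cov(X, rowvar=False)), 0.0, None)
    return eigvals[:k].sum() / eigvals.sum()

def distance_based_deletion(X, k, delta):
    """Delete, in one pass, the points that lie far out in S1 but not in S2."""
    M = X.shape[0]
    centered = X - X.mean(axis=0)
    _, eigvecs = np.linalg.eigh(np.cov(X, rowvar=False))   # columns sorted by ascending eigenvalue
    d1 = ((centered @ eigvecs[:, :k]) ** 2).sum(axis=1)    # squared length within S1
    d2 = ((centered @ eigvecs[:, k:]) ** 2).sum(axis=1)    # squared length within S2
    score = d1 / d1.mean() - d2 / d2.mean()                # assumed scoring rule (illustrative only)
    n_keep = int(np.ceil(delta * M))
    keep = np.sort(np.argsort(score)[:n_keep])             # keep the lowest-scoring points
    return keep

# Hypothetical data: 60 points near the line x2 = x1, plus 6 points far off it (indices 60..65).
rng = np.random.default_rng(6)
x1 = 2.0 * rng.normal(size=60)
inliers = np.column_stack([x1, x1 + 0.02 * rng.normal(size=60)])
outliers = np.array([[3, -3], [-3, 3], [2, -2], [-2, 2], [1.5, -1.5], [-1.5, 1.5]], dtype=float)
X = np.vstack([inliers, outliers])

keep = distance_based_deletion(X, k=1, delta=0.9)
ratio_before = residual_ratio(X, 1)
ratio_after = residual_ratio(X[keep], 1)
print(len(keep), ratio_before, ratio_after)
```

Compared with successive deletion, this version evaluates the eigen-decomposition once rather than once per candidate point per iteration.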

The CARE Algorithm • A comparison between the two point deletion heuristics • Figure panels: successive; distance-based

Experimental Results (Synthetic) • Figure labels: linear correlation embedded; linear correlation reestablished; full-dimensional PCA; CARE

Experimental Results (Synthetic) • Figure labels: linear correlation embedded (hyperplane representation); pair-wise correlations

Experimental Results (Synthetic) Scalability evaluation

Experimental Results (Wage) • A comparison between the correlation clustering method and CARE (dataset (534×11) http://lib.stat.cmu.edu/datasets/CPS_85_Wages) • Figure labels: correlation clustering method & CARE; CARE only

Experimental Results • Linearly correlated genes (hyperplane representations) (220 genes for 42 mouse strains) • Hspb2: cellular physiological process • 2810453L12Rik: cellular physiological process • 1010001D01Rik: cellular physiological process • P213651: N/A • Nrg4: cell part • Myh7: cell part; intracellular part • Hist1h2bk: cell part; intracellular part • Arntl: cell part; intracellular part • Nrg4: integral to membrane • Olfr281: integral to membrane • Slco1a1: integral to membrane • P196867: N/A • Oazin: catalytic activity • Ctse: catalytic activity • Mgst3: catalytic activity • Mgst3: catalytic activity; intracellular part • Nr1d2: intracellular part; metal ion binding • Ctse: catalytic activity • Pgm3: metal ion binding • Ldb3: intracellular part • Sec61g: intracellular part • Exosc4: intracellular part • BC048403: N/A • Ptk6: membrane • Gucy2g: integral to membrane • Clec2g: integral to membrane • H2-Q2: integral to membrane • Hspb2: cellular metabolism • Sec61b: cellular metabolism • Gucy2g: cellular metabolism • Sdh1: cellular metabolism

Thank You ! Questions?