Clustering High Dimensional Data Using SVM



Clustering High Dimensional Data Using SVM Tsau Young Lin and Tam Ngo Department of Computer Science San José State University

Overview • Introduction • Support Vector Machine (SVM) • What is SVM • How SVM Works • Data Preparation Using SVD • Singular Value Decomposition (SVD) • Analysis of SVD • The Project • Conceptual Exploration • Result Analysis • Conclusion • Future Work

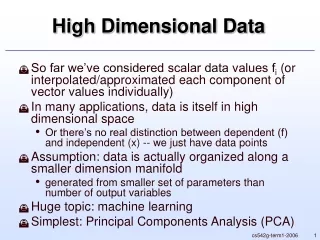

Introduction • World Wide Web • No. 1 place for information • contains billions of documents • impossible for humans to classify • Project’s Goals • Cluster documents • Reduce document size • Get reasonable results when compared to human classification

Support Vector Machine (SVM) • a supervised learning machine • outperforms many popular methods for text classification • used for bioinformatics, signature/handwriting recognition, image and text classification, pattern recognition, and e-mail spam categorization

Motivation for SVM • How do we separate these points? • with a hyperplane Source: Author’s Research

SVM Process Flow • Figure panels: Input Space, Feature Space, Input Space Source: DTREG

Convex Hulls Source: Bennett, K. P., & Campbell, C., 2000

Simple SVM Example • How would SVM separate these points? • use the kernel trick • Φ(X1) = (X1, X1^2) • The data becomes 2-dimensional Source: Author’s Research
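The mapping on this slide can be sketched in a few lines of Python. The 1-D inputs below (0 and 3 as positive, 1 and 2 as negative) are an assumption: they are read back from the mapped points that appear in the worked equations later in the deck, and the names `phi`, `positive`, and `negative` are illustrative only.

```python
def phi(x):
    """Map a 1-D point into 2-D feature space: phi(x) = (x, x^2)."""
    return (x, x * x)


# Assumed 1-D inputs, inferred from the mapped points used later in the deck.
# On the number line the negatives (1, 2) sit between the positives (0, 3),
# so no single threshold separates the two classes in 1-D.
positive = [0, 3]
negative = [1, 2]

positive_mapped = [phi(x) for x in positive]
negative_mapped = [phi(x) for x in negative]

print("positive:", positive_mapped)
print("negative:", negative_mapped)
```

After the mapping, the points lie on a parabola, and a straight line in the 2-D feature space can separate the two groups.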

Simple Points in Feature Space • All points here are support vectors. Source: Author’s Research

SVM Calculation • Positive: w · x + b = +1 • Negative: w · x + b = -1 • Hyperplane: w · x + b = 0 • find the unknowns, w and b • Expanding the equations: • w1x1 + w2x2 + b = +1 • w1x1 + w2x2 + b = -1 • w1x1 + w2x2 + b = 0

Use Linear Algebra to Solve for w and b • Positive plane, w1x1 + w2x2 + b = +1: • w1·0 + w2·0 + b = +1 • w1·3 + w2·9 + b = +1 • Negative plane, w1x1 + w2x2 + b = -1: • w1·1 + w2·1 + b = -1 • w1·2 + w2·4 + b = -1 • Solution is w1 = -3, w2 = 1, b = 1 • The SVM algorithm finds the solution that gives the hyperplane with the largest margin
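As a check on the arithmetic, the system can be solved mechanically. The sketch below is not how LIBSVM works internally; it is plain Gauss-Jordan elimination over exact fractions, applied to three of the four equations (three unknowns), with the fourth used as a consistency check. The helper name `solve_linear_system` is hypothetical.

```python
from fractions import Fraction


def solve_linear_system(rows):
    """Solve a square linear system by Gauss-Jordan elimination.

    Each row is [a1, ..., an, rhs]. Raises StopIteration if the system
    is singular (no non-zero pivot can be found).
    """
    n = len(rows)
    m = [[Fraction(v) for v in row] for row in rows]
    for col in range(n):
        # Find a row at or below the diagonal with a non-zero entry in this column.
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        pivot_value = m[col][col]
        m[col] = [v / pivot_value for v in m[col]]
        # Clear this column in every other row.
        for r in range(n):
            if r != col and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[col])]
    return [m[i][n] for i in range(n)]


# Support vectors in feature space with their class (+1 or -1), from the slide.
points = [((0, 0), 1), ((3, 9), 1), ((1, 1), -1), ((2, 4), -1)]

# Each point gives one equation: w1*x1 + w2*x2 + b*1 = y.
equations = [[x1, x2, 1, y] for (x1, x2), y in points[:3]]
w1, w2, b = solve_linear_system(equations)

# The fourth point was not used to solve; it should still satisfy its equation.
(x1, x2), y = points[3]
fourth_ok = (w1 * x1 + w2 * x2 + b == y)

print(w1, w2, b, fourth_ok)
```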

Use Solutions to Draw the Planes • Positive Plane: w · x + b = +1 • w1x1 + w2x2 + b = +1 • -3x1 + 1x2 + 1 = +1 • x2 = 3x1 • Negative Plane: w · x + b = -1 • w1x1 + w2x2 + b = -1 • -3x1 + 1x2 + 1 = -1 • x2 = -2 + 3x1 • Hyperplane: w · x + b = 0 • w1x1 + w2x2 + b = 0 • -3x1 + 1x2 + 1 = 0 • x2 = -1 + 3x1 Source: Author’s Research
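With w and b known, classifying a point reduces to checking the sign of w · x + b. The sketch below uses the slide's solution (w1 = -3, w2 = 1, b = 1). The margin formula 2 / ||w|| is the standard distance between the planes w · x + b = +1 and w · x + b = -1; the function names are illustrative.

```python
import math

W = (-3.0, 1.0)  # (w1, w2) from the slide
B = 1.0          # b from the slide


def decision_value(point):
    """Return w . x + b for a 2-D point in feature space."""
    return W[0] * point[0] + W[1] * point[1] + B


def classify(point):
    """Return +1 on or above the hyperplane, -1 below it."""
    return 1 if decision_value(point) >= 0 else -1


# Distance between the positive and negative planes.
margin_width = 2.0 / math.hypot(*W)

training_points = [(0, 0), (3, 9), (1, 1), (2, 4)]
predicted = [classify(p) for p in training_points]

print(predicted, round(margin_width, 4))
```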

Simple Data Separated by a Hyperplane Source: Author’s Research

LIBSVM and Parameter C • LIBSVM: a library for SVM, available in Java • C is very small: the SVM cares mostly about maximizing the margin, and points may end up on the wrong side of the plane. • C is very large: the SVM penalizes slack heavily, so it keeps the slack very small to make sure that all data points in each group are separated correctly.
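The trade-off controlled by C can be illustrated with the standard soft-margin objective, 0.5·||w||² + C·Σ slack, where each point's slack is max(0, 1 - y(w · x + b)). The sketch below is not LIBSVM's solver; it only evaluates that objective for two candidate hyperplanes on the four points from the earlier worked example. The "wide" candidate (the slide's solution scaled by one half) is a hypothetical choice made for illustration.

```python
def decision_value(w, b, point):
    """Return w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, point)) + b


def soft_margin_objective(w, b, labeled_points, c):
    """Evaluate 0.5 * ||w||^2 + C * (sum of hinge slack over all points)."""
    margin_term = 0.5 * sum(wi * wi for wi in w)
    total_slack = sum(
        max(0.0, 1.0 - y * decision_value(w, b, x)) for x, y in labeled_points
    )
    return margin_term + c * total_slack


POINTS = [((0, 0), 1), ((3, 9), 1), ((1, 1), -1), ((2, 4), -1)]

TIGHT_W, TIGHT_B = (-3.0, 1.0), 1.0  # the slide's solution: zero slack
WIDE_W, WIDE_B = (-1.5, 0.5), 0.5    # hypothetical: wider margin, every point has slack

for c in (0.1, 100.0):
    tight_cost = soft_margin_objective(TIGHT_W, TIGHT_B, POINTS, c)
    wide_cost = soft_margin_objective(WIDE_W, WIDE_B, POINTS, c)
    preferred = "wide margin" if wide_cost < tight_cost else "tight margin"
    print(f"C={c}: tight={tight_cost:.2f} wide={wide_cost:.2f} -> {preferred}")
```

With a small C the wider margin has the lower cost even though every point violates it; with a large C the slack dominates and the exact separation wins.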

Choosing Parameter C Source: LIBSVM

4 Basic Kernel Types • LIBSVM has implemented 4 basic kernel types: linear, polynomial, radial basis function, and sigmoid • 0 -- linear: u'*v • 1 -- polynomial: (gamma*u'*v + coef0)^degree • 2 -- radial basis function: exp(-gamma*|u-v|^2) • 3 -- sigmoid: tanh(gamma*u'*v + coef0) • We use the radial basis function kernel with a large parameter C for this project.
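The four formulas translate directly into code. The sketch below is a plain-Python transcription of the expressions on the slide, not LIBSVM's implementation; the function names and the sample vectors are illustrative.

```python
import math


def dot(u, v):
    """Inner product u'*v."""
    return sum(a * b for a, b in zip(u, v))


def linear_kernel(u, v):
    return dot(u, v)


def polynomial_kernel(u, v, gamma, coef0, degree):
    return (gamma * dot(u, v) + coef0) ** degree


def rbf_kernel(u, v, gamma):
    squared_distance = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * squared_distance)


def sigmoid_kernel(u, v, gamma, coef0):
    return math.tanh(gamma * dot(u, v) + coef0)


# Sample vectors chosen only for illustration.
u, v = (1.0, 2.0), (3.0, 4.0)
print(linear_kernel(u, v))
print(polynomial_kernel(u, v, gamma=1.0, coef0=1.0, degree=2))
print(rbf_kernel(u, v, gamma=0.5))
print(sigmoid_kernel(u, v, gamma=0.1, coef0=0.0))
```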

Data Preparation Using SVD • SVM is excellent for text classification, but it requires labeled documents for training • Singular Value Decomposition (SVD) • separates a matrix into three parts: left singular vectors, singular values, and right singular vectors • decomposes data such as images and text • reduces data size • We will use SVD to cluster

SVD Example of 4 Documents • D1: Shipment of gold damaged in a fire • D2: Delivery of silver arrived in a silver truck • D3: Shipment of gold arrived in a truck • D4: Gold Silver Truck Source: Garcia, E., 2006

Matrix A = U*S*V^T • Given a matrix A, we can factor it into three parts: U, S, and V^T. Source: Garcia, E., 2006

Using JAMA to Decompose Matrix A

$$
U = \begin{bmatrix}
0.3966 & -0.1282 & -0.2349 & 0.0941 \\
0.2860 & 0.1507 & -0.0700 & 0.5212 \\
0.1106 & -0.2790 & -0.1649 & -0.4271 \\
0.1523 & 0.2650 & -0.2984 & -0.0565 \\
0.1106 & -0.2790 & -0.1649 & -0.4271 \\
0.3012 & -0.2918 & 0.6468 & -0.2252 \\
0.3966 & -0.1282 & -0.2349 & 0.0941 \\
0.3966 & -0.1282 & -0.2349 & 0.0941 \\
0.2443 & -0.3932 & 0.0635 & 0.1507 \\
0.3615 & 0.6315 & -0.0134 & -0.4890 \\
0.3428 & 0.2522 & 0.5134 & 0.1453
\end{bmatrix}
$$

$$
S = \begin{bmatrix}
4.2055 & 0.0000 & 0.0000 & 0.0000 \\
0.0000 & 2.4155 & 0.0000 & 0.0000 \\
0.0000 & 0.0000 & 1.4021 & 0.0000 \\
0.0000 & 0.0000 & 0.0000 & 1.2302
\end{bmatrix}
$$

Source: JAMA (MathWorks and the National Institute of Standards and Technology (NIST))

Using JAMA to Decompose Matrix A

$$
V = \begin{bmatrix}
0.4652 & -0.6738 & -0.2312 & -0.5254 \\
0.6406 & 0.6401 & -0.4184 & -0.0696 \\
0.5622 & -0.2760 & 0.3202 & 0.7108 \\
0.2391 & 0.2450 & 0.8179 & -0.4624
\end{bmatrix}
$$

$$
V^T = \begin{bmatrix}
0.4652 & 0.6406 & 0.5622 & 0.2391 \\
-0.6738 & 0.6401 & -0.2760 & 0.2450 \\
-0.2312 & -0.4184 & 0.3202 & 0.8179 \\
-0.5254 & -0.0696 & 0.7108 & -0.4624
\end{bmatrix}
$$

• Matrix A can be reconstructed by multiplying the matrices: U*S*V^T Source: JAMA
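The reconstruction claim can be checked numerically with the values printed on these slides. The sketch below multiplies U, S, and the transpose of V in plain Python and rounds the result, which should give back the term counts of the four example documents. The row order of U (terms in alphabetical order: a, arrived, damaged, delivery, fire, gold, in, of, shipment, silver, truck) is an assumption inferred from the SVM input rows shown later in the deck.

```python
# Values copied from the slides (JAMA output, 4 decimal places).
U = [
    [0.3966, -0.1282, -0.2349, 0.0941],
    [0.2860, 0.1507, -0.0700, 0.5212],
    [0.1106, -0.2790, -0.1649, -0.4271],
    [0.1523, 0.2650, -0.2984, -0.0565],
    [0.1106, -0.2790, -0.1649, -0.4271],
    [0.3012, -0.2918, 0.6468, -0.2252],
    [0.3966, -0.1282, -0.2349, 0.0941],
    [0.3966, -0.1282, -0.2349, 0.0941],
    [0.2443, -0.3932, 0.0635, 0.1507],
    [0.3615, 0.6315, -0.0134, -0.4890],
    [0.3428, 0.2522, 0.5134, 0.1453],
]
SINGULAR_VALUES = [4.2055, 2.4155, 1.4021, 1.2302]  # diagonal of S
V = [
    [0.4652, -0.6738, -0.2312, -0.5254],  # D1
    [0.6406, 0.6401, -0.4184, -0.0696],   # D2
    [0.5622, -0.2760, 0.3202, 0.7108],    # D3
    [0.2391, 0.2450, 0.8179, -0.4624],    # D4
]


def reconstruct(u, singular_values, v):
    """Compute U * S * V^T, i.e. A[i][j] = sum_k U[i][k] * s[k] * V[j][k]."""
    rank = len(singular_values)
    return [
        [sum(u_row[k] * singular_values[k] * v_row[k] for k in range(rank))
         for v_row in v]
        for u_row in u
    ]


A_approx = reconstruct(U, SINGULAR_VALUES, V)
A_rounded = [[round(value) for value in row] for row in A_approx]

# Largest deviation from a whole number; small because the inputs are rounded.
max_error = max(
    abs(value - round(value)) for row in A_approx for value in row
)

for row in A_rounded:
    print(row)
```

Each column of the rounded matrix is one document and each row one term; for example, the "silver" row has a 2 in the D2 column because the word occurs twice in that document.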

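Rank 2 Approximation (Reduced U, S, and V Matrices)

$$
U’ = \begin{bmatrix}
0.3966 & -0.1282 \\
0.2860 & 0.1507 \\
0.1106 & -0.2790 \\
0.1523 & 0.2650 \\
0.1106 & -0.2790 \\
0.3012 & -0.2918 \\
0.3966 & -0.1282 \\
0.3966 & -0.1282 \\
0.2443 & -0.3932 \\
0.3615 & 0.6315 \\
0.3428 & 0.2522
\end{bmatrix}
\qquad
S’ = \begin{bmatrix}
4.2055 & 0.0000 \\
0.0000 & 2.4155
\end{bmatrix}
\qquad
V’ = \begin{bmatrix}
0.4652 & -0.6738 \\
0.6406 & 0.6401 \\
0.5622 & -0.2760 \\
0.2391 & 0.2450
\end{bmatrix}
$$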
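Truncating to rank k amounts to keeping the first k columns of U and V and the first k singular values. A minimal sketch, shown on V and the singular values only to keep it short; the helper name `truncate_columns` is hypothetical.

```python
def truncate_columns(matrix, k):
    """Keep only the first k columns of a matrix stored as a list of rows."""
    return [row[:k] for row in matrix]


V = [
    [0.4652, -0.6738, -0.2312, -0.5254],  # D1
    [0.6406, 0.6401, -0.4184, -0.0696],   # D2
    [0.5622, -0.2760, 0.3202, 0.7108],    # D3
    [0.2391, 0.2450, 0.8179, -0.4624],    # D4
]
SINGULAR_VALUES = [4.2055, 2.4155, 1.4021, 1.2302]

RANK = 2
V_reduced = truncate_columns(V, RANK)
S_reduced = SINGULAR_VALUES[:RANK]

# Each document is now described by 2 numbers instead of 11 term counts.
for name, row in zip(("D1", "D2", "D3", "D4"), V_reduced):
    print(name, row)
```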

Use Matrix V to Calculate Cosine Similarities • calculate the cosine similarity for each pair of documents, where Di’ is the row of V’ for document i • sim(Di’, Dj’) = (Di’ • Dj’) / (|Di’| |Dj’|) • example, calculate for D1’: • sim(D1’, D2’) = (D1’ • D2’) / (|D1’| |D2’|) • sim(D1’, D3’) = (D1’ • D3’) / (|D1’| |D3’|) • sim(D1’, D4’) = (D1’ • D4’) / (|D1’| |D4’|)

Result for Cosine Similarities • Example result for D1’: • sim(D1’, D2’) = ((0.4652 · 0.6406) + (-0.6738 · 0.6401)) / (sqrt(0.4652^2 + (-0.6738)^2) · sqrt(0.6406^2 + 0.6401^2)) = -0.1797 • sim(D1’, D3’) = ((0.4652 · 0.5622) + (-0.6738 · -0.2760)) / (sqrt(0.4652^2 + (-0.6738)^2) · sqrt(0.5622^2 + (-0.2760)^2)) = 0.8727 • sim(D1’, D4’) = ((0.4652 · 0.2391) + (-0.6738 · 0.2450)) / (sqrt(0.4652^2 + (-0.6738)^2) · sqrt(0.2391^2 + 0.2450^2)) = -0.1921 • D3 returns the highest value, so pair D1 with D3 • Do the same for D2, D3, and D4.
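The same calculation, repeated for every document, can be sketched as follows. The vectors are the rows of the truncated V matrix from the rank 2 approximation; the function names are illustrative.

```python
import math

# Rows of the truncated V matrix, one 2-D vector per document.
V_REDUCED = {
    "D1": (0.4652, -0.6738),
    "D2": (0.6406, 0.6401),
    "D3": (0.5622, -0.2760),
    "D4": (0.2391, 0.2450),
}


def cosine_similarity(a, b):
    """Return (a . b) / (|a| |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def best_match(name, vectors):
    """Return the other document with the highest cosine similarity."""
    others = (other for other in vectors if other != name)
    return max(
        others, key=lambda other: cosine_similarity(vectors[name], vectors[other])
    )


best = {name: best_match(name, V_REDUCED) for name in V_REDUCED}

for name, partner in best.items():
    score = cosine_similarity(V_REDUCED[name], V_REDUCED[partner])
    print(f"{name} pairs with {partner} (similarity {score:.4f})")
```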

Result of Simple Data Set • Most similar document for each document: D1: 3, D2: 4, D3: 1, D4: 2 • label 1 (documents 1 and 3): • D1: Shipment of gold damaged in a fire • D3: Shipment of gold arrived in a truck • label 2 (documents 2 and 4): • D2: Delivery of silver arrived in a silver truck • D4: Gold Silver Truck
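One way to turn the best-match pairs into labels is to treat each pair as a link and give every connected group of documents its own label. The slides do not spell out the grouping rule, so this is an assumption, and `label_clusters` is a hypothetical helper.

```python
def label_clusters(best_match):
    """Assign one label per connected group of best-match links.

    best_match maps each document to its most similar document.
    Labels are numbered from 1 in sorted document order.
    """
    # Build an undirected graph: a link exists if either side picked the other.
    neighbors = {}
    for doc, partner in best_match.items():
        neighbors.setdefault(doc, set()).add(partner)
        neighbors.setdefault(partner, set()).add(doc)

    labels = {}
    next_label = 1
    for doc in sorted(neighbors):
        if doc in labels:
            continue
        # Depth-first walk over everything reachable from this document.
        stack = [doc]
        while stack:
            current = stack.pop()
            if current in labels:
                continue
            labels[current] = next_label
            stack.extend(n for n in neighbors[current] if n not in labels)
        next_label += 1
    return labels


# Best matches as listed on the slide.
BEST_MATCH = {"D1": "D3", "D2": "D4", "D3": "D1", "D4": "D2"}
labels = label_clusters(BEST_MATCH)
print(labels)
```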

Check Cluster Using SVM • Now that we have the labels, we can use them to train the SVM • SVM input format on original data (one document per line: label, then index:value pairs):

1 1:1.00 2:0.00 3:1.00 4:0.00 5:1.00 6:1.00 7:1.00 8:1.00 9:1.00 10:0.00 11:0.00

2 1:1.00 2:1.00 3:0.00 4:1.00 5:0.00 6:0.00 7:1.00 8:1.00 9:0.00 10:2.00 11:1.00

1 1:1.00 2:1.00 3:0.00 4:0.00 5:0.00 6:1.00 7:1.00 8:1.00 9:1.00 10:0.00 11:1.00

2 1:0.00 2:0.00 3:0.00 4:0.00 5:0.00 6:1.00 7:0.00 8:0.00 9:0.00 10:1.00 11:1.00
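The input rows can be generated from the four example documents. The sketch below assumes the features are raw term counts over an alphabetically sorted, lowercased vocabulary, which reproduces the rows on this slide; the function names are illustrative. The LIBSVM format is sparse, so zero-valued features could also be left out; they are written here to match the slide.

```python
DOCUMENTS = [
    "Shipment of gold damaged in a fire",
    "Delivery of silver arrived in a silver truck",
    "Shipment of gold arrived in a truck",
    "Gold Silver Truck",
]
LABELS = [1, 2, 1, 2]  # cluster labels for D1..D4


def tokenize(text):
    """Lowercase and split on whitespace."""
    return text.lower().split()


# Alphabetical vocabulary over all documents (11 terms for this data set).
vocabulary = sorted({term for doc in DOCUMENTS for term in tokenize(doc)})


def to_libsvm_line(label, text):
    """Format one document as 'label index:count ...' with 1-based indices."""
    tokens = tokenize(text)
    features = " ".join(
        f"{index}:{tokens.count(term):.2f}"
        for index, term in enumerate(vocabulary, start=1)
    )
    return f"{label} {features}"


lines = [to_libsvm_line(label, doc) for label, doc in zip(LABELS, DOCUMENTS)]
print("\n".join(lines))
```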

Results from SVM’s Prediction • Figure: results from SVM’s prediction on original data Source: Author’s Research

Using Truncated V Matrix • We want to reduce the data size, so it is more practical to use the truncated V matrix • SVM input format (truncated V matrix):

1 1:0.4652 2:-0.6738

2 1:0.6406 2:0.6401

1 1:0.5622 2:-0.2760

2 1:0.2391 2:0.2450

SVM Result From Truncated V Matrix • Figure: results from SVM’s prediction on reduced data • Using the truncated V matrix gives better results. Source: Author’s Research

Document Vectors on a Graph • Figure: plotted points D1, D2, D3, and D4 Source: Author’s Research

Analysis of the Rank Approximation • Figure: cluster results from different rank approximations Source: Author’s Research

Program Process Flow • use the previous methods on larger data sets • compare the results with human classification

Conceptual Exploration • Reuters-21578 • a collection of newswire articles that have been human-classified by Carnegie Group, Inc. and Reuters, Ltd • most widely used data set for text categorization

Result Analysis • Clustering with SVD vs. Human Classification, First Data Set Source: Author’s Research

Result Analysis • Clustering with SVD vs. Human Classification, Second Data Set Source: Author’s Research

Result Analysis • the highest accuracy for SVD clustering is 81.5% • lower rank values seem to give better results • the SVM's prediction accuracy is about the same as that of SVD clustering
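An accuracy figure like this requires matching cluster labels to human categories, since cluster numbers are arbitrary. The slides do not say how the matching was done, so the sketch below is an assumption: for two clusters labeled 1 and 2 it scores both possible label assignments and keeps the better one. The label lists are made-up toy values, not results from the project.

```python
def two_cluster_accuracy(cluster_labels, human_labels):
    """Accuracy of a 2-way clustering against 2-way human labels.

    Both inputs must use the labels 1 and 2. Because cluster numbering is
    arbitrary, the score is the better of the direct and the swapped mapping.
    """
    if len(cluster_labels) != len(human_labels) or not cluster_labels:
        raise ValueError("label lists must be non-empty and of equal length")
    total = len(cluster_labels)
    direct = sum(c == h for c, h in zip(cluster_labels, human_labels))
    swapped = total - direct  # with exactly two labels, every mismatch flips to a match
    return max(direct, swapped) / total


# Toy labels for illustration only.
toy_cluster_labels = [1, 1, 2, 2, 1]
toy_human_labels = [2, 2, 1, 1, 1]
toy_accuracy = two_cluster_accuracy(toy_cluster_labels, toy_human_labels)
print(f"{toy_accuracy:.1%}")
```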

Result Analysis: Why might the results not be higher? • human classification is more subjective than a program • reducing many small clusters to only 2 clusters by computing the average may decrease the accuracy

Conclusion • Showed how SVM works • Explored the strengths of SVM • Showed how SVD can be used for clustering • Analyzed simple and complex data • the method seems to cluster data reasonably • Our method is able to: • reduce data size (by using the truncated V matrix) • cluster data reasonably • classify new data efficiently (based on SVM) • By combining known methods, we created a form of unsupervised SVM

Future Work • extend SVD to very large data sets that can only be stored in secondary storage • look for more efficient kernels for SVM

References • Bennett, K. P., & Campbell, C. (2000). Support Vector Machines: Hype or Hallelujah? ACM SIGKDD Explorations, Vol. 2, No. 2, 1-13. • Chang, C., & Lin, C. (2006). LIBSVM: a library for support vector machines. Retrieved November 29, 2006, from http://www.csie.ntu.edu.tw/~cjlin/libsvm • Cristianini, N. (2001). Support Vector and Kernel Machines. Retrieved November 29, 2005, from http://www.support-vector.net/icml-tutorial.pdf • Cristianini, N., & Shawe-Taylor, J. (2000). An Introduction to Support Vector Machines. Cambridge, UK: Cambridge University Press. • Garcia, E. (2006). SVD and LSI Tutorial 4: Latent Semantic Indexing (LSI) How-to Calculations. Retrieved November 28, 2006, from http://www.miislita.com/information-retrieval-tutorial/svd-lsi-tutorial-4-lsi-how-to-calculations.html • Guestrin, C. (2006). Machine Learning. Retrieved November 8, 2006, from http://www.cs.cmu.edu/~guestrin/Class/10701/ • Hicklin, J., Moler, C., & Webb, P. (2005). JAMA: A Java Matrix Package. Retrieved November 28, 2006, from http://math.nist.gov/javanumerics/jama/

References • Joachims, T. (1998). Text Categorization with Support Vector Machines: Learning with Many Relevant Features. http://www.cs.cornell.edu/People/tj/publications/joachims_98a.pdf • Joachims, T. (2004). Support Vector Machines. Retrieved November 28, 2006, from http://svmlight.joachims.org/ • Reuters-21578 Text Categorization Test Collection. Retrieved November 28, 2006, from http://www.daviddlewis.com/resources/testcollections/reuters21578/ • SVM - Support Vector Machines. DTREG. Retrieved November 28, 2006, from http://www.dtreg.com/svm.htm • Vapnik, V. N. (2000, 1995). The Nature of Statistical Learning Theory. Springer-Verlag New York, Inc.