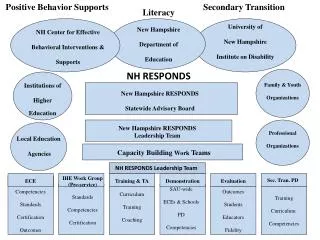

NH RESPONDS Evaluation Component

NH RESPONDS Evaluation Component
Pat Mueller and David Merves
October 6, 2008

Why Do We Collect Data and Evaluate?
• Somebody said you had to
• Inform instruction
• School improvement
• Local, state, and federal accountability
• Public information
• Choose/set policy
• Marketing
• Because that's what all the cool kids are doing…

State Improvement Grants and State Personnel Development Grants (SIG/SPDGs)
SIG/SPDGs are measured against:
• OSEP (Office of Special Education Programs) program performance measures
• NH RESPONDS performance measures

SPDG Program Performance Measures
• % of personnel receiving professional development (PD) on scientific- or evidence-based instructional practices.
• % of projects that have implemented PD/training activities aligned with improvement strategies in the State Performance Plan (SPP).
• % of PD/training activities provided that are based on scientific- or evidence-based instructional/behavioral practices.

SPDG Program Performance Measures (continued)
• % of PD/training activities that are sustained through ongoing and comprehensive practices (e.g., mentoring, coaching).
• % of SPDG projects that successfully replicate the use of scientifically based or evidence-based instructional/behavioral practices in schools.
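
The two activity-level measures above are simple proportions: the number of PD/training activities meeting a criterion, divided by the total number of activities, times 100. The Python sketch below assumes a hypothetical PD activity log in which each entry has already been coded yes/no for being evidence-based and for being sustained through follow-up such as mentoring or coaching. The class, field, and function names are illustrative only and are not part of the OSEP or NH RESPONDS reporting systems.

```python
from dataclasses import dataclass


@dataclass
class PDActivity:
    """One row of a hypothetical PD activity log (field names are illustrative)."""
    name: str
    evidence_based: bool  # based on scientific- or evidence-based practices?
    sustained: bool       # followed up with ongoing mentoring or coaching?


def percent(count: int, total: int) -> float:
    """Return count/total as a percentage, or 0.0 when there are no records."""
    return 100.0 * count / total if total else 0.0


def activity_measures(log: list[PDActivity]) -> dict[str, float]:
    """Compute the two '% of PD/training activities' measures for a log."""
    total = len(log)
    return {
        "pct_evidence_based": percent(sum(a.evidence_based for a in log), total),
        "pct_sustained": percent(sum(a.sustained for a in log), total),
    }


if __name__ == "__main__":
    # Hypothetical sample log; not real NH RESPONDS data.
    log = [
        PDActivity("PBIS team training", True, True),
        PDActivity("Literacy coaching kickoff", True, False),
        PDActivity("General orientation", False, False),
        PDActivity("Reading intervention workshop", True, True),
    ]
    print(activity_measures(log))  # {'pct_evidence_based': 75.0, 'pct_sustained': 50.0}
```

The personnel- and project-level measures follow the same pattern but use different denominators (all participating personnel, all SPDG projects), so they would need their own records.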

Steps for Conducting an Evaluation
1. Select the criteria and standards
2. Develop a logic model
3. Prepare an evaluation plan
4. Collect data
5. Analyze data and understand results
6. Communicate the findings

1. Define the Criteria to Be Evaluated
Terminology (program term: evaluation term)
• Goals: long-term outcomes or impact
• Objectives: short-term and intermediate outcomes
• Activities: outputs

Two Types of Evaluation Standards
• Process/Formative: assesses ongoing project activities
  • Begins at program implementation and continues throughout the program
  • Is the program being delivered as planned?
  • Is the program progressing toward its goals and objectives?

Two Types of Evaluation Standards (continued)
• Outcome/Summative: assesses the program's success and whether the program or initiative had an impact
  • Compares actual results to projected goals/objectives
  • Typically used for decision-making purposes
  • Important to look for unanticipated outcomes

2. Logic Models
• A logic model is a conceptual model that links an initiative's goals and objectives with its expected outputs and/or outcomes.
• There are numerous types of logic models.
• Logic models are one of many methods for illustrating the conceptual framework of an initiative.

3. Writing an Evaluation Plan
Components to include:
• Program goal/objectives
• Evaluation questions
• Performance indicators
• Data collection procedures
• Data analysis method
• Person responsible
• Timeline
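
One way to keep each row of an evaluation plan complete is to treat the components above as the fields of a record and flag any that are still blank. The Python sketch below is a hypothetical illustration: the class and function names and the sample row's contents are assumptions, not taken from the actual NH RESPONDS evaluation plan.

```python
from dataclasses import dataclass, fields


@dataclass
class EvaluationPlanRow:
    """One row of an evaluation plan; one field per component listed on the slide."""
    goal_or_objective: str
    evaluation_question: str
    performance_indicator: str
    data_collection: str
    analysis_method: str
    person_responsible: str
    timeline: str


def missing_components(row: EvaluationPlanRow) -> list[str]:
    """Return the names of components left blank, so gaps surface before data collection."""
    return [f.name for f in fields(row) if not getattr(row, f.name).strip()]


if __name__ == "__main__":
    # Hypothetical example row; wording is illustrative only.
    row = EvaluationPlanRow(
        goal_or_objective="Schools implement PBIS with fidelity",
        evaluation_question="Are participating schools implementing PBIS as designed?",
        performance_indicator="Share of schools meeting the fidelity criterion on the SET",
        data_collection="School-wide Evaluation Tool (SET)",
        analysis_method="",  # not yet decided
        person_responsible="Project evaluator",
        timeline="Annually",
    )
    print(missing_components(row))  # ['analysis_method']
```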

4. Collecting Data
• Process/formative data
  • Amount and type of PD provided
  • Satisfaction with and utility of PD provided
  • Products developed
  • These data tend to be gathered by those providing PD.
• Outcome/summative data
  • Reduced office discipline referrals
  • Reduced suspensions/expulsions
  • Improved reading scores
  • These data tend to be collected from the LEA or SEA (local or state education agency).

NH RESPONDS Data Collection Tools
• PD Activity Log completed by TA/PD providers
• Minutes
  • Leadership Team meetings
  • Workgroup meetings
  • School & District Improvement Team minutes/products
• Surveys/interviews/focus groups
  • Annual Participating Personnel Survey (March/April)
  • Workshop surveys

NH RESPONDS Data Collection Tools (continued)
• Fidelity instruments (for PBIS and Literacy)
  • Benchmarks of Quality
  • School-wide Evaluation Tool (SET)
• Existing data
  • Office discipline referrals
  • Suspension/expulsion data
  • Reading scores

5. Analyzing Data & Understanding Results
• SAU/school level
  • Analysis of student performance to improve instruction (e.g., reading scores)
  • Analysis of school-level data to improve safety and/or climate (e.g., SET results)
• Project/grant level
  • Analysis of formative data for program improvement purposes
  • Aggregate analysis of all outcome data to describe the impact of NH RESPONDS
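
For the project-level aggregate analysis, one simple approach is to compare each school's outcome data (for example, office discipline referrals) between a baseline year and a follow-up year, then pool the totals across schools. The Python sketch below assumes hypothetical per-school ODR counts and illustrative function names; it uses raw counts for simplicity, whereas a real analysis would likely normalize by enrollment or number of school days.

```python
def percent_change(before: float, after: float) -> float:
    """Percent change from a baseline value; a negative result means a reduction."""
    if before == 0:
        raise ValueError("baseline must be nonzero")
    return 100.0 * (after - before) / before


def summarize_odrs(odrs: dict[str, tuple[int, int]]) -> dict[str, float]:
    """odrs maps school -> (baseline-year ODRs, follow-up-year ODRs)."""
    summary = {school: percent_change(b, a) for school, (b, a) in odrs.items()}
    # Pooled change across all schools, computed from summed counts.
    total_before = sum(b for b, _ in odrs.values())
    total_after = sum(a for _, a in odrs.values())
    summary["ALL SCHOOLS"] = percent_change(total_before, total_after)
    return summary


if __name__ == "__main__":
    # Hypothetical counts; not real NH RESPONDS data.
    data = {"School A": (400, 300), "School B": (100, 110)}
    for school, change in summarize_odrs(data).items():
        print(f"{school}: {change:+.1f}%")
    # School A: -25.0%
    # School B: +10.0%
    # ALL SCHOOLS: -18.0%
```

Pooling totals weights larger schools more heavily: in the sample, the pooled change (-18.0%) differs from the simple average of the two school-level changes (-7.5%). Which figure to report is a choice the evaluation plan's data analysis method should state.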

6. Communicate the Findings (Reporting)
• Provide ongoing feedback to project management (what's working and what's not).
• Provide ongoing data to the NH Bureau on completion of objectives and success of project efforts.
• Provide an annual report to the U.S. Department of Education on completion of objectives and success of project efforts.

Evergreen Educational Consulting
WWW.EECVT.COM
EEC@GMAVT.NET
(802) 434-5607
WWW.SIGNETWORK.ORG