Exploring Categorical Associations in Data Analysis

Learn about associations between dependent and independent categorical variables, contingency tables, chi-squared tests, and measures of association strength. Understand statistical independence and expected frequencies for data analysis.

Presentation Transcript

Introduction to Data Analysis, March 17th, 2008: Associations between categorical variables

This week’s lecture • This week we change tack somewhat to look at dependent and independent categorical variables. • Contingency tables, and ideas of perfect dependence and independence. • Expected frequencies. • Chi-squared tests. • Measures of the strength of association between categorical variables. • Odds ratios. • Other measures of association. • Reading: A & F chapter 8

Some definitions… • Response variable: the variable about which comparisons are made. (a.k.a. Dependent) • Explanatory variable: the variable that defines the groups across which the response variable is compared. (a.k.a. Independent) • Associations: two variables are associated if the distribution of the response variable changes in some way as the value of the explanatory variable changes. • We’ve already seen this with differences of means/proportions.

Categorical Associations • This week we’re going to look at categorical dependent (and independent) variables. • If we have a categorical dependent variable (like social class, or vote choice) then our normal regression techniques don’t work. • Easy to tabulate these data and ‘eyeball’ relationships, but how do we measure association more rigorously? • We want to measure the independence of one variable from the other, and this is, to some extent, based on the simple tabulation.

Example for the day • This week we’re interested in the drinking habits of patrons of my local bar, Aromas. We sample 105 people. • In particular we’re interested in how men and women differ in what they normally drink. • Our dependent variable is thus type of drink normally consumed. Aromas has a limited range of drinks, so there are only three categories: ale (Sweet Water IPA), lager and white wine spritzers. • Our independent variable is sex.

Drinking at Aromas (1) • We want to create a contingency table. • This just displays the number of observations for each combination of outcomes over the categories of the variables. • Note that each of our observations falls into only one row and one column of this table. • The categories are exhaustive and exclusive. Exhaustive as you can only be a man or a woman, and you can only drink ale, lager or WWSs. Exclusive as you cannot be both a man and a woman, and you never mix your drinks. • We only use one independent variable here; next week we look at many independent variables.

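Drinking at Aromas (2) • Our contingency table has the dependent variable in the columns and the independent variable in the rows. [Table: contingency table of sex (rows) by preferred drink (columns), annotated with the row marginals and column marginals]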

Drinking at Aromas (3) • To see how favoured drink depends on sex, convert to percentages within rows. • 55/105 = 52% of people drink ale. • 53/69 = 77% of men drink ale. • 2/36 = 6% of women drink ale.
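
The row percentages above can be sketched in a few lines of Python. The full table did not survive in this transcript, so the cell counts below are an assumption: they are pieced together from the totals quoted in the lecture (105 people, 69 men, 36 women, 55 ale drinkers, 53 and 2 ale drinkers by sex) and from the expected frequencies and cell values shown on later slides.

```python
# Cell counts are a reconstruction (assumption), not copied from the slide:
# columns are ale, lager, white wine spritzer.
drinks = ["ale", "lager", "spritzer"]
observed = {
    "men": [53, 15, 1],
    "women": [2, 4, 30],
}

# Row marginals: how many men and how many women were sampled.
row_totals = {sex: sum(counts) for sex, counts in observed.items()}
# Column marginals: how many people prefer each drink.
col_totals = [sum(col) for col in zip(*observed.values())]
n = sum(row_totals.values())

# Conditional distribution of drink within each sex (percentages within rows).
row_pct = {
    sex: [100 * count / row_totals[sex] for count in counts]
    for sex, counts in observed.items()
}

for sex, pcts in row_pct.items():
    cells = ", ".join(f"{d}: {p:.0f}%" for d, p in zip(drinks, pcts))
    print(f"{sex} (n={row_totals[sex]}): {cells}")
print(f"all (n={n}): ale {100 * col_totals[0] / n:.0f}%")
```

Printing the sample size next to each row follows the advice that such tables should always report the number of cases the percentages are based on.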

Contingency tables • The two rows for women and men are called the conditional distributions for the dependent variable (drink type), and the set of proportions are the conditional probabilities. • These tables should include the sample size that you have (i.e. 36 women and 69 men). • Tables should not have unnecessary decimal places; 0-1 DPs are sufficient for samples of around 100. • But what we still want to know is, is there an association between sex and drinking preferences?

Statistical independence (1) • In order to make that judgement, we use a concept called statistical independence. • Two variables are statistically independent if the probability of falling into a particular column is independent of the row for the population. • e.g. if 70% of all of Aromas regulars (our population) drink ale, then we would expect 70% of women to drink ale and 70% of men to drink ale if sex is statistically independent of preferred drink. • Of course, we’ve got a sample…

Statistical independence (2) • We’re in the familiar situation of wanting to know something about a population, but we only have a sample. • Because we only have a sample, we don’t know whether a relationship that’s apparent in the observed data (women prefer white wine spritzers) is due to sampling variation or not. • Our null hypothesis (H0) is thus that sex and preferred drink are statistically independent. We test this against the alternative hypothesis (Ha) that they are statistically dependent. • The logic of this is thus very similar to comparing means; again we’re trying to reject the null hypothesis.

Expected frequencies (1) • So how do we test the null hypothesis? • If there’s no relationship then we should expect the proportion of women ale drinkers to be the same as the proportion of ale drinkers in the sample as a whole. • So one way of working out whether there are differences from the null hypothesis is to work out what the expected frequencies would be if the null hypothesis were correct. • We can then compare these expected frequencies with the actual observed frequencies and assess whether any differences from the null hypothesis are ‘big’ or ‘small’.

Expected frequencies (2) • So the expected frequency for women drinking ale is 55/105 of all women (36), which is 18.9 women. [Table: expected frequencies if H0 is true, showing the values 18.9, 6.5, 10.6, 20.4, 36.1 and 12.5, with 18.9 labelled as the expected frequency of women ale drinkers]
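
As a minimal sketch of the calculation above: each expected frequency is the row total times the column total, divided by the sample size. The observed cell counts are an assumption reconstructed from the figures quoted in the lecture, since the table itself is missing from this transcript.

```python
# Rows: men, women. Columns: ale, lager, white wine spritzer.
# Observed counts are reconstructed from the lecture's totals (assumption).
observed = [
    [53, 15, 1],
    [2, 4, 30],
]

row_totals = [sum(row) for row in observed]
col_totals = [sum(col) for col in zip(*observed)]
n = sum(row_totals)

# Under H0 (independence) every row has the same distribution over columns,
# so the expected count is row_total * column_proportion.
expected = [[r * c / n for c in col_totals] for r in row_totals]

for label, row in zip(["men", "women"], expected):
    print(label, [round(x, 1) for x in row])
```

The rounded values agree with the six expected frequencies shown on the slide, which is some reassurance that the reconstructed counts are the right ones.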

A single measure? • We can see that there are some big and/or small deviations from the null hypothesis, but how can we summarize them and assess their size? • Use something called the chi-squared statistic (or χ2). • This is (surprise, surprise) based on looking at the squared deviations from the expected frequencies. • Of course some deviations will be big just because the numbers are big, so we also divide the squared deviation by the expected frequency.

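Chi-square • We finally take all the squared deviations divided by the expected frequencies for each cell and add them all up: $\chi^2 = \sum \frac{(f_o - f_e)^2}{f_e}$, where $\chi^2$ is the chi-squared statistic, $f_o$ is the observed frequency and $f_e$ is the expected frequency in each cell.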

Working out chi-square • [Table: the contribution of each cell, using the expected frequencies 18.9, 6.5, 10.6, 20.4, 36.1 and 12.5] • And so on. If we add all these numbers up then our chi-square statistic = 78.1.
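
The sum can be checked with a short sketch. The function name `chi_squared` is illustrative, and the observed counts are an assumption reconstructed from the totals and expected frequencies quoted in the lecture.

```python
def chi_squared(observed):
    """Sum of (observed - expected)^2 / expected over all cells of a table."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    total = 0.0
    for i, row in enumerate(observed):
        for j, f_o in enumerate(row):
            f_e = row_totals[i] * col_totals[j] / n  # expected count under H0
            total += (f_o - f_e) ** 2 / f_e
    return total

# Rows: men, women. Columns: ale, lager, spritzer (reconstructed counts).
aromas = [[53, 15, 1], [2, 4, 30]]
chi2 = chi_squared(aromas)
print(round(chi2, 1))
```

Most of the total comes from the spritzer column, where the observed counts (1 man, 30 women) are furthest from what independence would predict.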

Is 78 big or small? • A ‘big’ number tells us that H0 is unlikely as the observed frequencies are ‘far away’ from the expected frequencies, but when is a big number big enough to reject the null hypothesis? • Well, if we took lots of samples and calculated χ2 for each, the values would follow a particular sampling distribution. • This is NOT normally distributed, but does follow a particular pattern (called, rather unimaginatively, the chi-squared probability distribution).

More sampling distributions • Before looking at the shape of the sampling distribution for χ2, we need to think a bit about how it will vary according to the size of the table. • Large tables (with many rows and columns) will tend to have a bigger value of χ2 just because there are more numbers to add up. We need to take this into account. • In fact the χ2 probability distribution is different depending on the number of cells, or more accurately something we call degrees of freedom.

Degrees of freedom (1) • Degrees of freedom are a common idea that you’ll meet again, and essentially refer to the number of ‘non-redundant’ pieces of information we have. • In this particular case, they refer to the number of cells that can vary once we know what the marginal distributions are (e.g. the number of men and women, and the number of people that prefer each drink).

Degrees of freedom (2) • In our case, we only have 2 degrees of freedom. • Why? Because once we know two cell numbers, we can work out all the rest. [Table: the contingency table with the marginals, showing the cell values 30, 15, 53 and 1]
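
A small sketch of why two cells are enough: with the marginals fixed, every other cell follows by subtraction. The degrees of freedom for a table are (rows - 1) × (columns - 1). The lager and spritzer column totals below are an assumption, inferred from the expected frequencies quoted earlier.

```python
row_totals = {"men": 69, "women": 36}
# Ale total is quoted in the lecture; lager and spritzer totals are inferred.
col_totals = {"ale": 55, "lager": 19, "spritzer": 31}

# Degrees of freedom for an r x c contingency table.
df = (len(row_totals) - 1) * (len(col_totals) - 1)

# Suppose we only know two cells in the men's row.
table = {("men", "ale"): 53, ("men", "lager"): 15}

# The last cell in the row is fixed by the row marginal.
table[("men", "spritzer")] = row_totals["men"] - 53 - 15
# Every cell in the women's row is fixed by the column marginals.
for drink, total in col_totals.items():
    table[("women", drink)] = total - table[("men", drink)]

print(df, table)
```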

χ2 distribution (1) When DF are low (v = 3), most of the χ2 statistics fall below 5. When DF are high (v = 10), most of the χ2 statistics fall above 5.

χ2 distribution (2) • In fact the mean of the sampling distribution is the number of DF, so as DF increases so does the mean of the distribution. • As the DF increases, the standard deviation of the sampling distribution also increases (it is the square root of twice the DF). • Regardless of its properties, we can use the sampling distribution (as we have previously with z-tests) to get a probability of the observations we’ve got occurring by chance.
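
These properties can be illustrated by simulation. The sketch below relies on a standard fact that the lecture does not state: a chi-squared variable with k degrees of freedom is the sum of k squared standard normal variables. The seed and the number of draws are arbitrary choices.

```python
import random
import statistics

def simulate_chi2(df, draws, rng):
    """Draw chi-squared values as sums of `df` squared standard normals."""
    return [sum(rng.gauss(0, 1) ** 2 for _ in range(df)) for _ in range(draws)]

rng = random.Random(2008)  # fixed seed so the run is repeatable
summary = {}
for df in (3, 10):
    sample = simulate_chi2(df, 20000, rng)
    summary[df] = {
        "mean": statistics.mean(sample),        # theory: df
        "sd": statistics.pstdev(sample),        # theory: sqrt(2 * df)
        "share_below_5": sum(x < 5 for x in sample) / len(sample),
    }

for df, stats in summary.items():
    print(df, {name: round(value, 2) for name, value in stats.items()})
```

With 3 DF most simulated values fall below 5, and with 10 DF most fall above it, in line with the previous slide.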

χ2 distribution (3) • Just like our z-test (or t-test) we ask the question “what is the probability of getting a value of χ2 at least this large if H0 is correct and there is no association between the two variables?”. • As before, the area under the curve beyond that value tells us the p-value. • The only difference is that the distribution (and hence p-value) depends on the DF.

χ2 distribution (4) • The table shows, for different values of DF, the χ2 values that would be exceeded by chance due to sampling variation with probabilities of 10%, 5%, 2.5% and 1%.

Back to Aromas • For our example the χ2 statistic was 78.1 with 2 degrees of freedom. • If we look at the table we can see that a value this large would occur by chance less than 1% of the time. • Indeed the probability of seeing a value this large if H0 were true is effectively zero, and we can reject the null hypothesis that there is no relationship between type of drink preferred and sex. • The χ2 test thus allows us to test for association between categorical variables. • For small sample sizes we use another test (which has similar logic) called Fisher’s exact test. Generally speaking, when any cell has an expected frequency of less than about 5 we should use this small sample test.
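
For the special case of 2 degrees of freedom the upper-tail probability of the chi-squared distribution has a simple closed form, exp(-x/2), so the table lookup above can be sketched without a statistics library. This shortcut is an assumption of the sketch and only holds for 2 DF; other DF need a proper chi-squared routine. The function names are illustrative.

```python
import math

def chi2_upper_tail_df2(x):
    """P(chi-squared >= x) when DF = 2 (closed form, valid for 2 DF only)."""
    return math.exp(-x / 2)

def chi2_critical_df2(alpha):
    """Value exceeded with probability alpha when DF = 2."""
    return -2 * math.log(alpha)

# Critical values for the four tail probabilities used in the table.
critical = {alpha: chi2_critical_df2(alpha) for alpha in (0.10, 0.05, 0.025, 0.01)}
p_value = chi2_upper_tail_df2(78.1)

for alpha, value in critical.items():
    print(f"alpha={alpha}: critical value {value:.2f}")
print(f"p-value for 78.1 with 2 DF: {p_value:.1e}")
```

The observed statistic is far beyond even the 1% critical value, which is why the p-value is described as effectively zero.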

Strength of association • So we know that women seem to prefer spritzers to real ale compared to men, but by how much? • While the χ2 test tells us that there is an association, it doesn’t tell us much about strength. • In particular, if we have really large sample sizes then the test will often show statistically significant association, even if the substantive association is weak. • This is easy to show with an example.

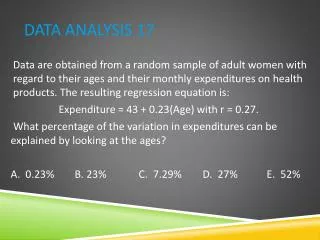

Large samples • Interested in the proportion of husbands and wives that are unfaithful to their spouses. • [Two tables with the same proportions but different sample sizes] • Larger sample: χ2 = 8.0 (p-value = 0.005). • Smaller sample: χ2 = 0.08 (p-value = 0.78). • For given proportions, larger samples will return higher values of χ2.
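
The effect of sample size can be reproduced with a sketch. The two tables did not survive in this transcript, so the counts below are hypothetical: 100 per group and 10,000 per group, each split 51% to 49%. They were chosen because they reproduce the two quoted χ2 values, and which spouse has the higher rate is arbitrary here. The p-value for 1 DF uses the identity P(χ2 ≥ x) = erfc(√(x/2)).

```python
import math

def chi_squared(observed):
    """Pearson chi-squared statistic for a table given as a list of rows."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    return sum(
        (f_o - row_totals[i] * col_totals[j] / n) ** 2
        / (row_totals[i] * col_totals[j] / n)
        for i, row in enumerate(observed)
        for j, f_o in enumerate(row)
    )

def p_value_df1(x):
    """Upper-tail probability of chi-squared with 1 DF."""
    return math.erfc(math.sqrt(x / 2))

# Rows: the two groups of spouses. Columns: unfaithful, faithful.
# Hypothetical counts with identical proportions (51% vs 49%).
small = [[51, 49], [49, 51]]
large = [[5100, 4900], [4900, 5100]]

chi_small, chi_large = chi_squared(small), chi_squared(large)
p_small, p_large = p_value_df1(chi_small), p_value_df1(chi_large)
print(round(chi_small, 2), round(p_small, 2))
print(round(chi_large, 2), round(p_large, 3))
```

Multiplying every cell by 100 multiplies χ2 by 100 while leaving the percentages untouched, which is why χ2 on its own is a poor guide to strength of association.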

Difference of proportions • For 2 by 2 tables it’s quite easy to measure strength of effect, and we often use something called the difference of proportions. • That’s just the difference in the proportion of people across the categories of the independent variable. • For infidelity, the difference is just 51% - 49%, or 2 percentage points. • We can apply the CIs for differences of proportions. • Often we use other measures though.
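
A rough sketch of the confidence interval for a difference of proportions, using the usual large-sample formula. The group sizes (100 and 10,000 per group) are hypothetical, since the transcript does not give them, and the function name is illustrative.

```python
import math

def diff_of_proportions_ci(p1, n1, p2, n2, z=1.96):
    """Large-sample 95% CI for p1 - p2 from two independent samples."""
    diff = p1 - p2
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return diff - z * se, diff + z * se

# Same 2-point difference, two hypothetical sample sizes per group.
small = diff_of_proportions_ci(0.51, 100, 0.49, 100)
large = diff_of_proportions_ci(0.51, 10_000, 0.49, 10_000)

print([round(x, 3) for x in small])
print([round(x, 3) for x in large])
```

With the smaller groups the interval comfortably includes zero. With the larger groups it excludes zero but remains narrow around 2 points, which shows a real but substantively small difference.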

Odds ratios (1) • Generally we use something called an odds ratio to look at strength of association. • Odds are closely related to probability, and are the bookmaker’s way of expressing how probable they think an event is.

Odds ratios (2) • e.g. the bookies think that the Bulldogs (UGA) have a 10% chance of winning their first round game in the NCAA tourney. • There is a 10% probability of success (a win). • There is a 90% (as 100% - 10% = 90%) probability of failure (not winning). • A failure is thus 9 times as likely as a success. • If we play the game again and again, we’d expect UGA to win once for every 9 losses. • The reason the bookmakers will offer “9-1 against” on UGA is that, on average, they will pay out $9 in winnings for every $9 they take in losing bets (assuming, unrealistically, that they don’t want to make a profit).
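
The conversion from probability to odds is simply p / (1 - p). A minimal sketch, using exact fractions to avoid rounding, with a $1 stake assumed for the fair-bet check:

```python
from fractions import Fraction

def odds_for(p):
    """Odds in favour of an event that has probability p."""
    return p / (1 - p)

p_win = Fraction(1, 10)

odds_win = odds_for(p_win)   # 1 win for every 9 losses
odds_against = 1 / odds_win  # "9-1 against"

# Bookmaker's average profit on a $1 stake at 9-1 against: they keep the
# stake when the team loses and pay out $9 in winnings when it wins.
stake, payout = 1, 9
expected_profit = (1 - p_win) * stake - p_win * payout

print(odds_win, odds_against, expected_profit)
```

An expected profit of zero is what makes 9-1 the fair price for a 10% chance. Real bookmakers shorten the odds to build in a margin.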

Odds ratios (3) • This is not only a top tip for the game, understanding odds is important for social scientists. • Wearing our social scientist hats we’re normally interested in odds ratios, that is the ratios of odds in one cell of a contingency table to another. • Let’s take the (classic) example of class voting and head back to the 1950s.

Class voting (1) • We have two classes (working and middle) and two parties (Labour and Conservative). The odds of voting Labour if you’re MC are 0.2/0.8 = 0.25 The odds of voting Labour if you’re WC are 0.6/0.4 = 1.5

Class voting (2) The odds of voting Labour if you’re MC are 0.2/0.8 = 0.25 The odds of voting Labour if you’re WC are 0.6/0.4 = 1.5 Odds ratio = 1.5/0.25 = 6

Class voting (3) • The odds ratio tells us how much greater (or smaller) the odds of ‘something happening’ are for one group than for another. • e.g. for our class voting example, the odds of voting Labour rather than Conservative are six times greater in the working class than they are in the middle class. • An odds ratio of 1 tells us there is no difference in the odds between the groups. • Equally, values far from 1 (in either direction) tell us the strength of association is large.

Odds ratios – bigger tables The odds of voting Labour if you’re WC are 0.6/0.4 = 1.5 The odds of voting Labour if you’re UC are 0.1/0.9 = 0.11 Odds ratio = 1.5/0.11 ≈ 13.6 (13.5 if the odds are not rounded first) So the odds of voting Labour rather than Conservative are over 13 times greater in the working class than they are in the upper class.
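
The class voting odds ratios can be reproduced with a short sketch using the Labour shares quoted on the slides (0.6, 0.2 and 0.1). The dictionary keys and function name are illustrative.

```python
def odds(p):
    """Odds of an outcome that has probability p."""
    return p / (1 - p)

# Share voting Labour (rather than Conservative) in each class.
labour_share = {"working": 0.6, "middle": 0.2, "upper": 0.1}

class_odds = {cls: odds(p) for cls, p in labour_share.items()}

# Odds ratios comparing the working class with each other class.
or_working_vs_middle = class_odds["working"] / class_odds["middle"]
or_working_vs_upper = class_odds["working"] / class_odds["upper"]

print({cls: round(o, 2) for cls, o in class_odds.items()})
print(round(or_working_vs_middle, 1), round(or_working_vs_upper, 1))
```

Without intermediate rounding the working class to upper class ratio comes out at 13.5. The figure of 13.6 arises from rounding the upper-class odds to 0.11 before dividing.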

Why odds? • We could just do this with differences of proportions couldn’t we? • Kind of, but there are some advantages… • In particular, you can multiply any row or column of a table by a positive number and the odds ratios will not change. • Why is this important? Well in our class voting example this means that one party becoming more popular in all classes does not affect levels of class voting. • Moreover the odds ratio is important for understanding regression models of categorical variables.
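
This invariance is easy to demonstrate. The counts below are hypothetical (100 voters per class, matching the 60/40 and 20/80 splits from the class voting example), and doubling the Labour column stands in for Labour becoming more popular in every class.

```python
def odds_ratio(table):
    """Odds ratio of a 2x2 table [[a, b], [c, d]]: (a/b) / (c/d)."""
    (a, b), (c, d) = table
    return (a * d) / (b * c)

def diff_of_proportions(table):
    """Difference between the rows in the share falling in column 1."""
    (a, b), (c, d) = table
    return a / (a + b) - c / (c + d)

# Rows: working class, middle class. Columns: Labour, Conservative.
base = [[60, 40], [20, 80]]
# Multiply the Labour column by 2.
boosted = [[2 * row[0], row[1]] for row in base]

print(odds_ratio(base), odds_ratio(boosted))
print(round(diff_of_proportions(base), 3), round(diff_of_proportions(boosted), 3))
```

The odds ratio is identical for both tables, while the difference of proportions shifts even though the relative class pattern has not changed.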

Ordinal data (1) • We can obviously use all this stuff for ordinal data, but we’d be missing the extra information that we have from the order of the categories. • There are a number of different measures of association for contingency tables with ordinal data. • Gamma, Kendall’s tau-b and Spearman’s rho are the most commonly used. • These are all based on a similar idea, and have relatively similar properties.

Ordinal data (2) • The logic behind how these measures work is based on the idea of concordant and discordant pairs. • A pair of observations is discordant if the subject that is high on one variable is low on the other (there’s a negative relationship). • A pair of observations is concordant if the subject that is high on one variable is high on the other (there’s a positive relationship). • The association is strong if there’s either lots of concordant pairs or lots of discordant pairs. • Lots of discordant pairs means a strong negative relationship. • Lots of concordant pairs means a strong positive relationship.

Ordinal data (3) • Start here, how many concordant pairs are there? Have 70*(20+30+10) = 4200 concordant pairs. • Move on to here, how many concordant pairs are there? Have another 20*(30+10) = 800 concordant pairs.
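
The counting rule used above (multiply each cell by the total of the cells below and to its right) can be sketched as a general function. The lecture's table is not recoverable from this transcript, so the 3 by 3 table below is purely hypothetical and the function name is illustrative. Rows and columns are assumed to be ordered from low to high.

```python
def count_pairs(table):
    """Count concordant and discordant pairs in an ordered contingency table."""
    concordant = discordant = 0
    n_rows, n_cols = len(table), len(table[0])
    for i in range(n_rows):
        for j in range(n_cols):
            # Compare cell (i, j) with every cell in a later (higher) row.
            for k in range(i + 1, n_rows):
                for l in range(n_cols):
                    if l > j:    # higher on both variables: concordant
                        concordant += table[i][j] * table[k][l]
                    elif l < j:  # higher on one, lower on the other: discordant
                        discordant += table[i][j] * table[k][l]
    return concordant, discordant

# Hypothetical table, not the one from the lecture.
example = [
    [4, 2, 1],
    [2, 3, 2],
    [1, 2, 5],
]
print(count_pairs(example))
```

Pairs that share a row or a column are tied on one variable and count as neither concordant nor discordant.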

Ordinal data (4) • If we added up all the concordant pairs, we’d have 5300. • If we added up all the discordant pairs we’d have only 500. • More pairs show a positive association than show a negative association. • i.e. for higher values of the class variable, people are more likely to agree with lowering taxes. • We need to standardize this measure (to take account of sample size).

Ordinal data (5) • Zero indicates no association, values close to +1 a positive association and values close to -1 a negative association. • For our data the gamma value is 0.83. • We can calculate a SE for this measure, and hence a p-value as well, allowing us to test whether this is a real association.
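
Gamma standardizes the pair counts as (C - D) / (C + D), where C and D are the numbers of concordant and discordant pairs. A minimal sketch using the totals quoted in the lecture (5300 and 500):

```python
def gamma(concordant, discordant):
    """Goodman and Kruskal's gamma from counts of concordant/discordant pairs."""
    return (concordant - discordant) / (concordant + discordant)

g = gamma(5300, 500)
print(round(g, 2))
```

Dividing by the total number of untied pairs is what bounds the measure between -1 (all pairs discordant) and +1 (all pairs concordant), whatever the sample size.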

Other measures of association • Finally there are other measures of association. A common type is proportional reduction in error (PRE) measures. • For nominal data these are Goodman and Kruskal’s tau and Goodman and Kruskal’s lambda. • These essentially measure how much better off we are when predicting the dependent variable by taking the independent variable into account. • This type of summary measure isn’t used much now, and the use of odds ratios and more sophisticated modelling techniques is definitely preferable.
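
As an illustration of the PRE idea, here is a sketch of Goodman and Kruskal's lambda for the Aromas example. Lambda compares the prediction errors made by always guessing the most popular drink with the errors made by guessing the most popular drink within each sex. The cell counts are an assumption reconstructed from the figures quoted earlier in the lecture.

```python
# Rows: sex. Columns: ale, lager, white wine spritzer (reconstructed counts).
observed = {"men": [53, 15, 1], "women": [2, 4, 30]}

col_totals = [sum(col) for col in zip(*observed.values())]
n = sum(col_totals)

# Ignoring sex: always predict the modal drink overall.
errors_without = n - max(col_totals)
# Using sex: predict the modal drink within each row.
errors_with = sum(sum(row) - max(row) for row in observed.values())

# Proportional reduction in prediction errors.
lam = (errors_without - errors_with) / errors_without
print(errors_without, errors_with, round(lam, 2))
```

For these counts, knowing a patron's sex cuts the number of prediction errors by a little over half.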

These aren’t proper models… • Indeed they’re not, so what we need to do is incorporate categorical dependent variables in a more general regression context. • Logistic regression uses binary dependent variables, and is linked to the idea of odds ratios and the χ2 statistic.