Building Multi-Petabyte Online Databases


Explore the technology and trends driving the growth of online databases to store vast amounts of data, key strategies for managing large-scale information, and the challenges ahead. Discover cost-effective storage solutions, the importance of parallelism, and the evolving landscape of data storage technologies. Learn about network advancements, the impact of human attention on data utilization, and the necessity of automatic parallelism in data processing. Stay informed on the latest developments shaping the future of data storage and management.




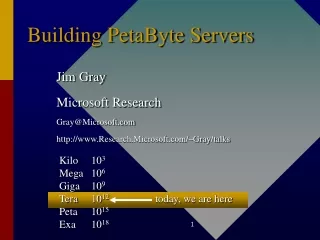

Building Multi-Petabyte Online Databases • Jim Gray • Microsoft Research • Gray@Microsoft.com • http://research.Microsoft.com/~Gray

Outline • Technology: • 1M$/PB: store everything online (twice!) • End-to-end high-speed networks • Gigabit to the desktop • Research driven by apps: • EOS/DIS • TerraServer • National Virtual Astronomy Observatory.

Reality Check • Good news • In the limit, processing & storage & network is free • Processing & network is infinitely fast • Bad news • Most of us live in the present. • People are getting more expensive. Management/programming cost exceeds hardware cost. • Speed of light not improving. • WAN prices have not changed much in last 8 years.

How Much Information Is There? • Soon everything can be recorded and indexed • Most data will never be seen by humans • Precious resource: human attention. Auto-summarization and auto-search are the key technology. • www.lesk.com/mlesk/ksg97/ksg.html • [Figure: scale from Kilo through Mega, Giga, Tera, Peta, Exa, Zetta to Yotta, marking A Book, A Photo, A Movie, All LoC books (words), All Books MultiMedia, and Everything Recorded] • Small prefixes by exponent: 3 milli, 6 micro, 9 nano, 12 pico, 15 femto, 18 atto, 21 zepto, 24 yocto

Trends: ops/s/$ Had Three Growth Phases • 1890-1945: mechanical, relay: 7-year doubling • 1945-1985: tube, transistor, ...: 2.3-year doubling • 1985-2000: microprocessor: 1.0-year doubling

Sort Speedup • Performance doubled every year for the last 15 years. • But now it is 100s or 1,000s of processors and disks • Got 40%/y (70x) from technology and 60%/y (1,000x) from parallelism (partition and pipeline) • See http://research.microsoft.com/barc/SortBenchmark/

Terabyte (Petabyte) Processing Requires Parallelism • At 10 MB/s: 1.2 days to scan • 1,000 x parallel: 100 seconds scan • Use 100 processors & 1,000 disks • Parallelism: use many little devices in parallel
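The two scan times on this slide follow from simple division. The sketch below assumes a 1 TB dataset in decimal units and perfectly even partitioning across devices, which is an idealization; the function name is illustrative only.

```python
def scan_seconds(dataset_bytes: float, bytes_per_second: float, ways: int = 1) -> float:
    """Time to read the whole dataset when it is split evenly across `ways` devices."""
    return dataset_bytes / (bytes_per_second * ways)

TB = 10**12
MB = 10**6

serial = scan_seconds(1 * TB, 10 * MB)           # one device at 10 MB/s
parallel = scan_seconds(1 * TB, 10 * MB, 1000)   # 1,000 devices, each at 10 MB/s

print(f"serial scan:    {serial / 86400:.2f} days")
print(f"1,000-way scan: {parallel:.0f} seconds")
```

Real systems fall short of this ideal because of skew and startup costs, but the three-orders-of-magnitude gap is the point of the slide.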

Parallelism Must Be Automatic • There are thousands of MPI programmers. • There are hundreds-of-millions of people using parallel database search. • Parallel programming is HARD! • Find design patterns and automate them. • Data search/mining has parallel design patterns.

Storage capacity beating Moore’s law • 4 k$/TB today (raw disk)

Cheap Storage • 4 k$/TB disks (16 x 60 GB disks @ 210$ each)

240 GB, 2k$ (now); 320 GB by year end. • 4 x 60 GB IDE (2 hot-pluggable) (1,100$) • SCSI-IDE bridge (200$) • Box: 500 MHz CPU, 256 MB SRAM, fan, power, Enet (700$) • Or 8 disks/box: 480 GB for ~3K$ (or 300 GB RAID)

Hot Swap Drives for Archive or Data Interchange • 35 MBps write (so can write N x 60 GB in 40 minutes) • 60 GB/overnite = ~N x 2 MB/second @ 19.95$/nite • [Figure labels: 17$, 200$]

Cheap Storage or Balanced System • Low-cost storage (2 x 1.5k$ servers): 7K$/TB; 2 x (1K$ system + 8 x 60 GB disks + 100 Mb Ethernet) • Balanced server (7k$/.64 TB) • 2 x 800 MHz (2k$) • 256 MB (200$) • 8 x 80 GB drives (2.8K$) • Gbps Ethernet + switch (1.5k$) • 11k$/TB, 22K$/RAIDed TB
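The balanced-server price can be rebuilt from the parts list. This is a rough check, not the author's own calculation: the part prices come from the slide, while reading "RAIDed" as two mirrored copies is an assumption made here to explain the doubling.

```python
# Part prices as listed on the slide (dollars).
parts = {
    "2 x 800 MHz CPUs": 2000,
    "256 MB RAM": 200,
    "8 x 80 GB drives": 2800,
    "Gbps Ethernet + switch": 1500,
}

raw_tb = 8 * 80 / 1000              # 0.64 TB of raw disk
parts_total = sum(parts.values())   # 6,500$, which the slide rounds up to 7k$

per_tb = 7000 / raw_tb              # uses the slide's rounded 7k$ figure
per_mirrored_tb = 2 * per_tb        # ASSUMPTION: "RAIDed" means mirrored (2 copies)

print(f"parts total:     {parts_total}$")
print(f"per raw TB:      {per_tb:,.0f}$")
print(f"per mirrored TB: {per_mirrored_tb:,.0f}$")
```

The results land close to the slide's 11k$/TB and 22K$/RAIDed TB.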

The “Absurd” Disk • 1 TB, 100 MB/s, 200 Kaps • 2.5 hr scan time (poor sequential access) • 1 access per second / 5 GB (VERY cold data) • It’s a tape!
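Both "absurd" figures can be derived from the three device specs on the slide. The sketch assumes decimal units and reads 200 Kaps as 200 small random accesses per second.

```python
capacity_bytes = 10**12            # 1 TB
bandwidth_bytes_per_s = 100 * 10**6   # 100 MB/s sequential
accesses_per_s = 200               # 200 Kaps

# Sequential scan of the full device.
scan_hours = capacity_bytes / bandwidth_bytes_per_s / 3600

# How many gigabytes share each access-per-second of random IO capacity.
gb_per_access_per_s = (capacity_bytes / 10**9) / accesses_per_s

print(f"full scan: {scan_hours:.1f} hours")
print(f"1 access per second per {gb_per_access_per_s:.0f} GB")
```

The scan comes out near 2.8 hours, in line with the slide's rounded 2.5 hr, and the access density matches the slide's one access per second per 5 GB.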

It’s Hard to Archive a Petabyte • It takes a LONG time to restore it. • At 1 GBps it takes 12 days! • Store it in two (or more) places online (on disk?). A geo-plex • Scrub it continuously (look for errors) • On failure, • use other copy until failure repaired, • refresh lost copy from safe copy. • Can organize the two copies differently (e.g.: one by time, one by space)
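The scrub-and-refresh policy on this slide can be sketched in a few lines. This is a toy illustration, not the design of any real system: the two sites are plain dictionaries, and the separate checksum catalog and the `scrub` function are assumptions introduced here.

```python
import hashlib

def checksum(block: bytes) -> str:
    return hashlib.sha256(block).hexdigest()

def scrub(copy_a: dict, copy_b: dict, catalog: dict) -> list:
    """Verify every block in both copies; refresh a bad block from the good copy.

    Returns the list of (copy_name, block_id) repairs that were made.
    """
    repairs = []
    for block_id, expected in catalog.items():
        ok_a = checksum(copy_a.get(block_id, b"")) == expected
        ok_b = checksum(copy_b.get(block_id, b"")) == expected
        if ok_a and not ok_b:
            copy_b[block_id] = copy_a[block_id]   # refresh lost copy from safe copy
            repairs.append(("B", block_id))
        elif ok_b and not ok_a:
            copy_a[block_id] = copy_b[block_id]
            repairs.append(("A", block_id))
        elif not ok_a and not ok_b:
            raise RuntimeError(f"block {block_id!r} is bad in both copies")
    return repairs

site_a = {"t0": b"tile-0", "t1": b"tile-1"}
site_b = dict(site_a)
catalog = {block_id: checksum(data) for block_id, data in site_a.items()}

site_b["t1"] = b"bit-rot"          # simulate silent corruption at one site
repairs = scrub(site_a, site_b, catalog)
print(repairs)
```

Continuous scrubbing matters because the alternative, a bulk restore, is so slow: a petabyte at 1 GBps is 10^6 seconds, or roughly 12 days.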

Disk vs Tape Guesstimates • Disk: 80 GB • 35 MBps • 5 ms seek time • 3 ms rotate latency • 4$/GB for drive, 3$/GB for ctlrs/cabinet • 4 TB/rack • 1 hour scan • Tape: 40 GB • 10 MBps • 10 sec pick time • 30-120 second seek time • 2$/GB for media, 8$/GB for drive+library • 10 TB/rack • 1 week scan • CERN: 200 TB, 3480 tapes, 2 col = 50 GB, rack = 1 TB = 12 drives • The price advantage of tape is narrowing, and the performance advantage of disk is growing. • At 10K$/TB, disk is competitive with nearline tape.

Gilder’s Law: 3x bandwidth/year for 25 more years • Today: • 10 Gbps per channel • 4 channels per fiber: 40 Gbps • 32 fibers/bundle = 1.2 Tbps/bundle • In lab 3 Tbps/fiber (400 x WDM) • In theory 25 Tbps per fiber • 1 Tbps = USA 1996 WAN bisection bandwidth • Aggregate bandwidth doubles every 8 months! 1 fiber = 25 Tbps
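The bandwidth figures on this slide multiply out as follows, and the two growth statements (3x per year, doubling every 8 months) turn out to be nearly the same rate. Only numbers from the slide are used; the variable names are illustrative.

```python
GBPS_PER_CHANNEL = 10
CHANNELS_PER_FIBER = 4
FIBERS_PER_BUNDLE = 32

fiber_gbps = GBPS_PER_CHANNEL * CHANNELS_PER_FIBER    # per-fiber bandwidth
bundle_tbps = fiber_gbps * FIBERS_PER_BUNDLE / 1000   # per-bundle bandwidth

# Doubling every 8 months compounds to 2^(12/8) per year.
yearly_growth = 2 ** (12 / 8)

print(f"per fiber:  {fiber_gbps} Gbps")
print(f"per bundle: {bundle_tbps:.2f} Tbps")
print(f"yearly growth from 8-month doubling: {yearly_growth:.2f}x")
```

The bundle works out to 1.28 Tbps, which the slide rounds to 1.2 Tbps.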

Sense of Scale • How fat is your pipe? • Fattest pipe on MS campus is the WAN! • 20 MBps: disk / ATM / OC3 • 90 MBps: PCI • 94 MBps: coast to coast • 300 MBps: OC48 = G2, or memcpy()

[Figure: map of the HSCC (High Speed Connectivity Consortium) coast-to-coast link, 5626 km, 10 hops. Sites labeled: Redmond/Seattle, WA; San Francisco, CA; New York; Arlington, VA. Participants labeled: Microsoft, Qwest, University of Washington, Pacific Northwest Gigapop, Information Sciences Institute, DARPA]

Networking • WANs are getting faster than LANs: G8 = OC192 = 10 Gbps is “standard” • Link bandwidth improves 4x per 3 years • Speed of light (60 ms round trip in US) • Software stacks have always been the problem: Time = SenderCPU + ReceiverCPU + bytes/bandwidth
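The transfer-time formula on this slide is easy to turn into a function. In the sketch below the formula is the slide's; the per-message CPU costs and the message size are hypothetical numbers chosen only to show how software overhead can swamp wire time.

```python
def transfer_seconds(nbytes: float, bandwidth_bytes_per_s: float,
                     sender_cpu_s: float, receiver_cpu_s: float) -> float:
    """Time = SenderCPU + ReceiverCPU + bytes/bandwidth."""
    return sender_cpu_s + receiver_cpu_s + nbytes / bandwidth_bytes_per_s

# HYPOTHETICAL workload: a 1,000-byte message on a 1 Gbps link (125 MB/s),
# with 100 microseconds of protocol-stack CPU time on each side.
nbytes = 1000
bandwidth = 125e6
cpu_per_side = 100e-6

wire = nbytes / bandwidth
total = transfer_seconds(nbytes, bandwidth, cpu_per_side, cpu_per_side)

print(f"wire time:  {wire * 1e6:.0f} microseconds")
print(f"total time: {total * 1e6:.0f} microseconds")
print(f"wire share: {wire / total:.1%}")
```

Under these assumed costs, a faster wire barely helps small messages; only cutting the CPU terms does.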

The Promise of SAN/VIA/Infiniband: 10x in 2 years • http://www.ViArch.org/ • Yesterday: • 10 MBps (100 Mbps Ethernet) • ~20 MBps tcp/ip saturates 2 cpus • round-trip latency ~250 µs • Now • Wires are 10x faster: Myrinet, Gbps Ethernet, ServerNet,… • Fast user-level communication • tcp/ip ~ 100 MBps 10% cpu • round-trip latency is 15 µs • 1.6 Gbps demoed on a WAN

What’s a Balanced System? • [Figure labels: System Bus, PCI Bus, PCI Bus]

Rules of Thumb in Data Engineering • Moore’s law -> an address bit per 18 months. • Storage grows 100x/decade (except 1000x last decade!) • Disk data of 10 years ago now fits in RAM (iso-price). • Device bandwidth grows 10x/decade – so need parallelism • RAM:disk:tape price is 1:10:30 going to 1:10:10 • Amdahl’s speedup law: maximum speedup is (S+P)/S, for serial part S and parallel part P • Amdahl’s IO law: bit of IO per instruction/second (TBps/10 TOP! 50,000 disks/10 TeraOP: 100 M$) • Amdahl’s memory law: byte per instruction/second (going to 10) (1 TB RAM per TOP: 1 TeraDollars) • PetaOps anyone? • Gilder’s law: aggregate bandwidth doubles every 8 months. • 5 Minute rule: cache disk data that is reused in 5 minutes. • Web rule: cache everything! • http://research.Microsoft.com/~gray/papers/MS_TR_99_100_Rules_of_Thumb_in_Data_Engineering.doc
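Amdahl's speedup law is worth a worked example. The sketch uses the usual textbook form, with S the serial time and P the parallelizable time: speedup on n processors is (S+P)/(S+P/n), which approaches (S+P)/S as n grows. The function names and the 1:99 split are illustrative choices.

```python
def speedup(serial: float, parallel: float, n: int) -> float:
    """Amdahl's law: only the parallel part shrinks as processors are added."""
    return (serial + parallel) / (serial + parallel / n)

def max_speedup(serial: float, parallel: float) -> float:
    """Limit of speedup() as n goes to infinity."""
    return (serial + parallel) / serial

# A job that is 1% serial and 99% parallelizable.
S, P = 1.0, 99.0
for n in (10, 100, 1000):
    print(f"{n:>5} processors: {speedup(S, P, n):6.1f}x")
print(f"upper bound: {max_speedup(S, P):.0f}x")
```

Even a 1% serial fraction caps the gain at 100x, which is why thousand-way parallelism needs workloads that partition almost completely.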

Scalability: Up and Out • “Scale Up” • Use “big iron” (SMP) • Cluster into packs for availability • “Scale Out”: clones & partitions • Use commodity servers • Add clones & partitions as needed

An Architecture for Internet Services? • Need to be able to add capacity • New processing • New storage • New networking • Need continuous service • Online change of all components (hardware and software) • Multiple service sites • Multiple network providers • Need great development tools • Change the application several times per year. • Add new services several times per year.

Premise: Each Site is a Farm • Buy computing by the slice (brick): • Rack of servers + disks. • Grow by adding slices • Spread data and computation to new slices • Two styles: • Clones: anonymous servers • Parts+Packs: Partitions fail over within a pack • In both cases, remote farm for disaster recovery
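The two farm styles differ mainly in how a request finds a server. The sketch below is one possible reading, not a description of any product: the class names, round-robin choice for clones, CRC-based partitioning, and the `down` set are all assumptions made for illustration.

```python
import itertools
import zlib

class CloneSet:
    """Anonymous, interchangeable servers: any clone can serve any request."""
    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def route(self, key: str) -> str:
        return next(self._cycle)   # round-robin; the key does not matter

class PartitionedPacks:
    """Each key lives in one partition; a partition's pack is [primary, standby, ...]."""
    def __init__(self, packs):
        self.packs = packs
        self.down = set()          # servers currently marked as failed

    def route(self, key: str) -> str:
        pack = self.packs[zlib.crc32(key.encode()) % len(self.packs)]
        for server in pack:        # fail over within the pack, in order
            if server not in self.down:
                return server
        raise RuntimeError("whole pack is down; fall back to the remote farm")

clones = CloneSet(["c1", "c2"])
clone_hits = [clones.route("any") for _ in range(3)]

farm = PartitionedPacks([["p0a", "p0b"], ["p1a", "p1b"]])
first = farm.route("tile:42")
farm.down.add(first)               # simulate the primary failing
second = farm.route("tile:42")
print(clone_hits, first, second)
```

Clones scale reads of replicated data; partitions scale data volume, and the pack supplies availability for each partition.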

Everyone Scales Out: What’s the Brick? • 1M$/slice • IBM S390? • Sun E 10,000? • 100 K$/slice • HPUX/AIX/Solaris/IRIX/EMC • 10 K$/slice • Utel / Wintel 4x • 1 K$/slice • Beowulf / Wintel 1x

Outline • Technology: • 1M$/PB: store everything online (twice!) • End-to-end high-speed networks • Gigabit to the desktop • Research driven by apps: • EOS/DIS • TerraServer • National Virtual Astronomy Observatory.

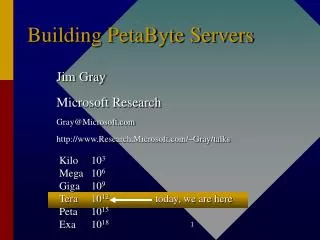

Interesting Apps • EOS/DIS • TerraServer • Sloan Digital Sky Survey • Scale: Kilo 10^3, Mega 10^6, Giga 10^9, Tera 10^12 (today, we are here), Peta 10^15, Exa 10^18

The Challenge -- EOS/DIS • Antarctica is melting -- 77% of fresh water liberated • sea level rises 70 meters • Chico & Memphis are beach-front property • New York, Washington, SF, LA, London, Paris • Let’s study it! Mission to Planet Earth • EOS: Earth Observing System (17B$ => 10B$) • 50 instruments on 10 satellites 1999-2003 • Landsat (added later) • EOS DIS: Data Information System: • 3-5 MB/s raw, 30-50 MB/s processed. • 4 TB/day, • 15 PB by year 2007

Designing EOS/DIS • Expect that millions will use the system (online). Three user categories: • NASA 500 -- funded by NASA to do science • Global Change 10 k -- other earth scientists • Internet 500 M -- everyone else: high school students, grain speculators, environmental impact reports • New applications => discovery & access must be automatic • Allow anyone to set up a peer node (DAAC & SCF) • Design for ad hoc queries, not standard data products: design for pull vs push • Computation demand is enormous (pull:push is 100:1)

Key Architecture Features • 2+N data center design • Scaleable OR-DBMS • Emphasize Pull vs Push processing • Storage hierarchy • Data Pump • Just in time acquisition

Obvious Point: EOS/DIS will be a cluster of SMPs • It needs 16 PB storage = 200K disks in current technology = 400K tapes in current technology • It needs 100 TeraOps of processing = 100K processors (current technology) and ~ 100 Terabytes of DRAM • Startup requirements are 10x smaller • smaller data rate • almost no re-processing work
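The device counts on this slide can be checked by division. The per-device capacities used here (80 GB per disk, 40 GB per tape) are taken from the Disk vs Tape slide earlier in the talk; treating them as the "current technology" meant here is an assumption.

```python
PB = 10**15
GB = 10**9

archive_bytes = 16 * PB
disks = archive_bytes // (80 * GB)    # 80 GB per disk
tapes = archive_bytes // (40 * GB)    # 40 GB per tape

# 100 TeraOps spread over 100K processors.
ops_per_processor = (100 * 10**12) // 100_000

print(f"disks: {disks:,}")
print(f"tapes: {tapes:,}")
print(f"ops/s per processor: {ops_per_processor:,}")
```

The processing requirement works out to about one GigaOp per processor.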

2+N data center design • duplex the archive (for fault tolerance) • let anyone build an extract (the +N) • Partition data by time and by space (store 2 or 4 ways). • Each partition is a free-standing OR-DBMS (similar to Tandem, Teradata designs). • Clients and Partitions interact via standard protocols • HTTP+XML

Data Pump • Some queries require reading ALL the data (for reprocessing) • Each Data Center scans ALL the data every 2 days. • Data rate 10 PB/day = 10 TB/node/day = 120 MB/s • Compute on demand small jobs • less than 100 M disk accesses • less than 100 TeraOps. • (less than 30 minute response time) • For BIG JOBS scan entire 15PB database • Queries (and extracts) “snoop” this data pump.
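The idea of queries "snooping" a data pump resembles what is often called a shared scan: one sequential pass feeds every registered query. The sketch below is a toy version with made-up records; the `DataPump` class and its methods are hypothetical names, not part of EOS/DIS.

```python
from typing import Callable, Iterable

class DataPump:
    """One sequential pass over the data, shared by every registered query."""
    def __init__(self) -> None:
        self._snoopers: list[Callable[[dict], None]] = []

    def snoop(self, on_record: Callable[[dict], None]) -> None:
        self._snoopers.append(on_record)

    def run(self, records: Iterable[dict]) -> int:
        scanned = 0
        for record in records:          # each record is read exactly once
            scanned += 1
            for on_record in self._snoopers:
                on_record(record)
        return scanned

# Made-up sample data standing in for the archive.
records = [
    {"day": 1, "region": "arctic", "temp_c": -30.0},
    {"day": 1, "region": "tropics", "temp_c": 27.5},
    {"day": 2, "region": "arctic", "temp_c": -28.0},
]

arctic_temps: list[float] = []
per_day: dict[int, int] = {}

def collect_arctic(record: dict) -> None:
    if record["region"] == "arctic":
        arctic_temps.append(record["temp_c"])

def count_per_day(record: dict) -> None:
    per_day[record["day"]] = per_day.get(record["day"], 0) + 1

pump = DataPump()
pump.snoop(collect_arctic)
pump.snoop(count_per_day)
scanned = pump.run(records)
print(scanned, arctic_temps, per_day)
```

The cost of the scan is paid once regardless of how many queries ride along, which is what makes a continuous full-archive scan affordable.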

Problems • Management (and HSM) • Design and Meta-data • Ingest • Data discovery, search, and analysis • Auto Parallelism • reorg-reprocess

What this system taught me • Traditional storage metrics • KAPS: KB objects accessed per second • $/GB: Storage cost • New metrics: • MAPS: megabyte objects accessed per second • SCANS: Time to scan the archive • Admin cost dominates (!!) • Auto parallelism is essential.

Outline • Technology: • 1M$/PB: store everything online (twice!) • End-to-end high-speed networks • Gigabit to the desktop • Research driven by apps: • EOS/DIS • TerraServer • National Virtual Astronomy Observatory.

Microsoft TerraServer: http://TerraServer.Microsoft.com/ • Build a multi-TB SQL Server database • Data must be • 1 TB • Unencumbered • Interesting to everyone everywhere • And not offensive to anyone anywhere • Loaded • 1.5 M place names from Encarta World Atlas • 7 M Sq Km USGS doq (1 meter resolution) • 10 M sq Km USGS topos (2m) • 1 M Sq Km from Russian Space agency (2 m) • On the web (world’s largest atlas) • Sell images with commerce server.

Microsoft TerraServer Background • Earth is 500 tera-meters square (tm²) • USA is 10 tm² • 100 tm² land in 70ºN to 70ºS • We have pictures of 6% of it: 3 tsm from USGS, 2 tsm from Russian Space Agency • Compress 5:1 (JPEG) to 1.5 TB • Slice into 10 KB chunks • Store chunks in DB • Navigate with Encarta™ Atlas globe, gazetteer, StreetsPlus™ in the USA • Someday: multi-spectral image of everywhere once a day / hour • [Figure: image pyramid of a .2x.2 km² tile, .4x.4 km² image, .8x.8 km² image, 1.6x1.6 km² image]

USGS Digital Ortho Quads (DOQ) • US Geological Survey • 6 terabytes (14 TB raw, but there is redundancy) • Most data not yet published • Based on a CRADA: TerraServer makes data available • [Figure: USGS “DOQ” coverage of the continental US, 1x1 meter, 6 TB; new data coming]

Russian Space Agency (SovInformSputnik) SPIN-2 (Aerial Images is worldwide distributor) • 1.5 meter geo-rectified imagery of (almost) anywhere • Almost equal-area projection • De-classified satellite photos (from 200 km) • More data coming (1 m) • Selling imagery on Internet. • Putting 2 tm² onto Microsoft TerraServer.

Hardware • [Figure labels: DS3; SPIN-2; Map Site Server; Internet Servers; 100 Mbps Ethernet switch; Web Servers] • 6 TB database server: AlphaServer 8400 4x400, 10 GB RAM, 324 StorageWorks disks, 10-drive tape library (STC Timber Wolf DLT7000)

Software • [Figure: TerraServer software architecture. Labels: Web Client (Internet browser, HTML, Java Viewer); The Internet; TerraServer Web Site (Internet Information Server 4.0, Active Server Pages, MTS, Image Server, TerraServer stored procedures, SQL Server 7, TerraServer DB); Microsoft Site Server EE; Microsoft Automap ActiveX Server; Automap Server; Image Delivery Application; Image Provider Site(s)]

BAD OLD Load • [Figure: the old load pipeline. Labels: DLT tape (“tar”, NTBackup); \Drop’N’; LoadMgr; LoadMgr DB; DoJob; Wait 4 Load; ESA; cutting machines (AlphaServer 4100s, 60 4.3 GB drives); ImgCutter; 100 Mbit Ether switch; \Images; TerraServer (AlphaServer 8400, Enterprise Storage Array with 3 x 108 9.1 GB drives, STC DLT tape library)] • Load steps: 10: ImgCutter • 20: Partition • 30: ThumbImg • 40: BrowseImg • 45: JumpImg • 50: TileImg • 55: Meta Data • 60: Tile Meta • 70: Img Meta • 80: Update Place

New Image Load and Update • [Figure: the new load pipeline. Labels: DLT tape (“tar”); staging disk; metadata; Load DB; Active Server Pages; Cut & Load Scheduling System; Image Cutter; Merge; Dither; Image Pyramid (from base); JPEG tiles; TerraLoader; ODBC Tx; TerraServer SQL DBMS]

TerraServer Daily Traffic, Jun 22, 1998 thru June 22, 1999 • [Figure: daily counts, 0 to 30M, of Sessions, Hits, Page Views, DB Queries, and Images, 6/22/98 to 6/22/99] • After a year: • 2 TB of data, 750 M records • 2.3 billion hits • 2.0 billion DB queries • 1.7 billion images sent (2 TB of download) • 368 million page views • 99.93% DB availability • 3rd design now online • Built and operated by team of 4 people
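The yearly totals are easier to judge once converted to everyday units. This sketch uses only the slide's numbers and assumes a 365-day year.

```python
HOURS_PER_YEAR = 365 * 24

availability = 0.9993
downtime_hours = (1 - availability) * HOURS_PER_YEAR

hits_per_day = 2.3e9 / 365
queries_per_page_view = 2.0e9 / 368e6

print(f"downtime:           {downtime_hours:.1f} hours/year")
print(f"average hits/day:   {hits_per_day / 1e6:.1f} million")
print(f"DB queries per page view: {queries_per_page_view:.1f}")
```

So 99.93% availability means roughly six hours of database downtime over the year.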

TerraServer Current Effort • Added USGS Topographic maps (4 TB) • The other 25% of the US DOQs (photos) • Adding digital elevation maps • Integrated with Encarta Online • Open architecture: publish XML and C# interfaces. • Adding multi-layer maps (with UC Berkeley) • High availability (4 node cluster with failover) • Geo-Spatial extension to SQL Server

Outline • Technology: • 1M$/PB: store everything online (twice!) • End-to-end high-speed networks • Gigabit to the desktop • Research driven by apps: • EOS/DIS • TerraServer • National Virtual Astronomy Observatory.

(inter) National Virtual Observatory • Almost all astronomy datasets will be online • Some are big (>>10 TB) • Total is a few Petabytes • Bigger datasets coming • Data is “public” • Scientists can mine these datasets • Computer Science challenge: organize these datasets and provide easy access to them.