A Robust Real Time Face Detection


Outline • AdaBoost – Learning Algorithm • Face Detection in real life • Using AdaBoost for Face Detection • Improvements • Demonstration

AdaBoost A short Introduction to Boosting (Freund & Schapire, 1999) Logistic Regression, AdaBoost and Bregman Distances (Collins, Schapire, Singer, 2002)

Boosting • The Horse-Racing Gambler Problem • Rules of thumb for a set of races • How should we choose the set of races in order to get the best rules of thumb? • How should the rules be combined into a single highly accurate prediction rule? • Boosting !

AdaBoost - the idea • AdaBoost combines many weak classifiers into one strong classifier • Initialize sample weights • For each cycle: • Find a classifier that performs well on the weighted sample • Increase the weights of misclassified examples • Return a weighted list of classifiers [Figure: example data plotted on IQ and shoe size axes]
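The loop above can be sketched in a few lines of Python. This is a minimal illustration, not the presenters' code: it assumes one-dimensional inputs, labels in {-1, +1}, and threshold "stumps" as the weak classifiers. The names `train_adaboost` and `predict` are hypothetical.

```python
import math

def train_adaboost(xs, ys, rounds):
    """xs: list of numbers (one feature per example); ys: labels in {-1, +1}."""
    n = len(xs)
    weights = [1.0 / n] * n                  # initialize sample weights uniformly
    ensemble = []                            # weighted list of (alpha, threshold, polarity)
    for _ in range(rounds):
        # Find the stump with the lowest weighted error on the current weights.
        best = None
        for thr in sorted(set(xs)):
            for pol in (+1, -1):
                preds = [pol if x < thr else -pol for x in xs]
                err = sum(w for w, p, y in zip(weights, preds, ys) if p != y)
                if best is None or err < best[0]:
                    best = (err, thr, pol, preds)
        err, thr, pol, preds = best
        err = min(max(err, 1e-10), 1 - 1e-10)    # keep the logarithm finite
        alpha = 0.5 * math.log((1 - err) / err)  # vote of this weak classifier
        ensemble.append((alpha, thr, pol))
        # Increase the weights of misclassified examples, then renormalize.
        weights = [w * math.exp(-alpha * y * p)
                   for w, p, y in zip(weights, preds, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict(ensemble, x):
    """Sign of the weighted vote of all stumps."""
    score = sum(a * (pol if x < thr else -pol) for a, thr, pol in ensemble)
    return 1 if score >= 0 else -1
```

For example, `train_adaboost([1, 2, 3, 4, 5, 6], [1, 1, 1, -1, -1, -1], 3)` returns three weighted stumps whose combined vote separates the two groups.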

AdaBoost - algorithm example [Figure labels: distribution, decision, step]

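AdaBoost – training error • Freund and Schapire (1997) proved that the training error of the final hypothesis is at most ∏_t 2√(ε_t(1 − ε_t)) = ∏_t √(1 − 4γ_t²) ≤ exp(−2 ∑_t γ_t²), where ε_t = 1/2 − γ_t is the weighted error of the t-th weak hypothesis • AdaBoost adapts to the error rates of the individual weak hypotheses • Hence the name: ADAptive Boosting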

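AdaBoost – generalization error • Freund and Schapire (1997) showed that, with high probability, the generalization error is at most P̂r[H(x) ≠ y] + Õ(√(T·d / m)), where P̂r is the empirical probability on the training sample, T is the number of boosting rounds, d is the VC-dimension of the weak hypothesis space, and m is the number of training examples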

AdaBoost – generalization error • The analysis implies that boosting will overfit if the algorithm is run for too many rounds • However, it was observed empirically that AdaBoost often does not overfit, even when run for thousands of rounds • Moreover, it was observed that the generalization error continues to decrease long after the training error has reached zero

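AdaBoost – generalization error • An alternative analysis was presented by Schapire et al. (1998) that fits the empirical findings: it bounds the generalization error in terms of the margins of the training examples, independently of the number of rounds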

AdaBoost – different point of view • We try to solve the problem of approximating the y’s using a linear combination of weak hypotheses • In other words, we are interested in finding a vector of parameters α such that f(x_i) = ∑_j α_j h_j(x_i) is a ‘good approximation’ of y_i • For classification problems we try to match the sign of f(x_i) to y_i

AdaBoost – different point of view • Sometimes it is advantageous to minimize some other (non-negative) loss function instead of the number of classification errors • For AdaBoost the loss function is the exponential loss ∑_i exp(−y_i f(x_i)) • This point of view was used by Collins, Schapire and Singer (2002) to demonstrate that AdaBoost converges to optimality

Face Detection in Monkeys There are cells that ‘detect faces’

Face Detection in Humans There are ‘processes of face detection’

Faces Are Special We humans analyze faces in a ‘different way’

Face Recognition in Humans We analyze faces ‘in a specific location’

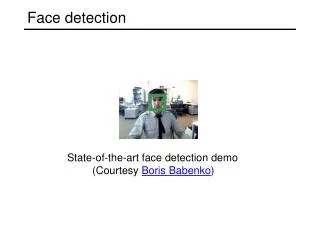

Robust Real-Time Face Detection (Viola and Jones, 2003)

Features Picture analysis, Integral Image

Features • The system classifies images based on the values of simple features • Three kinds of feature: two-rectangle, three-rectangle, four-rectangle • Value = ∑ (pixels in white area) - ∑ (pixels in black area)

Contrast Features [Figure: source image and result] • Notice that each feature is tied to a specific location in the sub-window

Features • Notice that each feature is tied to a specific location in the sub-window • Why features and not pixels? • Features encode domain knowledge • A feature-based system operates faster • Inspiration from human vision

Features • Later we will see that there are other features that can be used to implement an efficient face detector • The original system of Viola and Jones used only rectangle features

Computing Features • Given a detection resolution of 24x24, and size of ~200x200, the set of rectangle features is ~160,000 ! • We need to find a way to rapidly compute the features

Integral Image • Intermediate representation of the image • Computed in one pass over the original image

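Integral Image • With the origin (0,0) at the top-left corner, ii(x,y) is the sum of all pixels above and to the left of (x,y), inclusive • Recurrences: s(x,y) = s(x,y-1) + i(x,y) and ii(x,y) = ii(x-1,y) + s(x,y), where i is the original image and s is a running sum along y • Using the integral image representation one can compute the value of any rectangular sum in constant time • For example, the sum inside rectangle D can be computed as ii(4) + ii(1) - ii(2) - ii(3), where 4 is the bottom-right corner of D, 1 the top-left, and 2 and 3 the other two corners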
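The two recurrences and the four-corner lookup can be sketched as follows. This is an illustrative pure-Python version operating on a list of rows. The helper names `integral_image`, `rect_sum` and `two_rect_feature`, and the left-white/right-black layout of the two-rectangle feature, are assumptions made for the example.

```python
def integral_image(img):
    """img[y][x] is a pixel value. Returns ii with
    ii[y][x] = sum of img over all rows <= y and columns <= x."""
    h, w = len(img), len(img[0])
    ii = [[0] * w for _ in range(h)]
    for x in range(w):
        s = 0                                   # running sum down column x
        for y in range(h):
            s += img[y][x]                      # s(x,y) = s(x,y-1) + i(x,y)
            left = ii[y][x - 1] if x > 0 else 0
            ii[y][x] = left + s                 # ii(x,y) = ii(x-1,y) + s(x,y)
    return ii

def rect_sum(ii, x, y, w, h):
    """Sum of pixels in the rectangle with top-left (x, y), width w, height h,
    using four lookups: ii(4) + ii(1) - ii(2) - ii(3)."""
    def at(xx, yy):
        return ii[yy][xx] if xx >= 0 and yy >= 0 else 0
    x1, y1 = x + w - 1, y + h - 1
    return at(x1, y1) + at(x - 1, y - 1) - at(x1, y - 1) - at(x - 1, y1)

def two_rect_feature(ii, x, y, w, h):
    """White (left half) minus black (right half); the layout is an assumption."""
    half = w // 2
    return rect_sum(ii, x, y, half, h) - rect_sum(ii, x + half, y, half, h)
```

The integral image is built in one pass. After that, every rectangle sum costs four array reads regardless of the rectangle's size.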

Integral Image [Figure: corner weights (-1, +1, +2, -2, +1, -1) applied to integral image values at points (x,y) to evaluate rectangle features]

Building a Detector Cascading, training a cascade

Main Ideas • The features will be used as weak classifiers • We will concatenate several detectors serially into a cascade • We will boost (using a version of AdaBoost) a number of features to get ‘good enough’ detectors

Weak Classifiers • Weak classifier: the feature which best separates the examples • Given a sub-window (x), a feature (f), a threshold (Θ), and a polarity (p) indicating the direction of the inequality: h(x, f, p, Θ) = 1 if p·f(x) < p·Θ, and 0 otherwise [Figure label: probability for this threshold]

Weak Classifiers • A weak classifier is a combination of a feature and a threshold • We have K features • We have N thresholds where N is the number of examples • Thus there are KN weak classifiers

Weak Classifier Selection • For each feature, sort the examples based on feature value • For each element, evaluate the total sum of positive/negative example weights (T+/T-) and the sum of positive/negative weights below the current example (S+/S-) • The error for a threshold which splits the range between the current and previous example in the sorted list is e = min(A, B), where A = (S+) + (T-) - (S-) and B = (S-) + (T+) - (S+)

[Figure: worked example of the error calculation. Examples are sorted by feature value, each with a weight and a decision; the total positive/negative example weights and the sums of positive/negative weights below the current example give Error = min(A, B)]
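The single sorted pass described above can be sketched as follows. This is a minimal illustration for one feature, assuming labels of 1 (face) and 0 (non-face), and a polarity convention in which +1 means "values below the threshold are faces". The name `best_threshold` is hypothetical.

```python
def best_threshold(values, labels, weights):
    """values: feature value per example; labels: 1 = face, 0 = non-face;
    weights: current example weights. Returns (error, threshold, polarity)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    t_pos = sum(w for w, y in zip(weights, labels) if y == 1)   # T+
    t_neg = sum(w for w, y in zip(weights, labels) if y == 0)   # T-
    s_pos = s_neg = 0.0            # S+ and S-: weights below the current example
    best = (float("inf"), None, None)
    prev = None
    for i in order:
        v = values[i]
        if prev is None or v != prev:          # skip ties: no split between them
            thr = v if prev is None else (prev + v) / 2.0
            err_below_is_face = s_neg + (t_pos - s_pos)      # polarity +1
            err_above_is_face = s_pos + (t_neg - s_neg)      # polarity -1
            if err_below_is_face <= err_above_is_face:
                err, pol = err_below_is_face, 1
            else:
                err, pol = err_above_is_face, -1
            if err < best[0]:
                best = (err, thr, pol)
        if labels[i] == 1:
            s_pos += weights[i]
        else:
            s_neg += weights[i]
        prev = v
    return best
```

Because the running sums are updated incrementally, each feature needs one sort plus one linear scan to try every candidate threshold.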

Main Ideas: Cascading • We will concatenate several detectors serially into a cascade

Cascading • We start with simple classifiers which reject many of the negative sub-windows while detecting almost all positive sub-windows • A positive result from the first classifier triggers the evaluation of a second (more complex) classifier, and so on • A negative outcome at any point leads to the immediate rejection of the sub-window
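The early-rejection logic can be sketched as a short loop. The stages here are toy stand-ins (a sub-window is just a number and each stage is a simple comparison), and the names `cascade_classify` and `make_stage` are hypothetical. In the real detector each stage would be a boosted classifier over rectangle features.

```python
def cascade_classify(stages, window):
    """stages: list of callables ordered from cheapest to most complex.
    Each returns True (possibly a face) or False (definitely not)."""
    for stage in stages:
        if not stage(window):
            return False          # immediate rejection; later stages never run
    return True                   # accepted by every stage

def make_stage(name, min_value, log):
    """Toy stage that records its own evaluation in `log`."""
    def stage(window):
        log.append(name)
        return window >= min_value
    return stage

log = []
stages = [make_stage("simple", 1, log), make_stage("complex", 5, log)]
print(cascade_classify(stages, 0), log)   # False ['simple']
```

Most sub-windows in an image are not faces, so rejecting them in the first cheap stage is where the speed of the cascade comes from.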

Main Ideas: Boosting • We will boost (using a version of AdaBoost) a number of features to get ‘good enough’ detectors

Training a cascade • User selects values for: • Maximum acceptable false positive rate per layer • Minimum acceptable detection rate per layer • Target overall false positive rate • User gives a set of positive and negative examples
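The per-layer and overall rates are linked by multiplication: a sub-window must pass every layer, so the cascade's rate is the product of the per-layer rates. The sketch below uses illustrative values (0.3 false positive rate and 0.99 detection rate per layer) that are assumptions for the example, not settings taken from the slides. The function names are hypothetical.

```python
def overall_rate(per_layer_rate, layers):
    """Rate of the whole cascade if every layer has the same rate."""
    return per_layer_rate ** layers

def layers_needed(max_fp_per_layer, target_fp):
    """Smallest number of layers whose combined false positive rate
    is at or below the target, assuming each layer just meets its maximum."""
    n, rate = 0, 1.0
    while rate > target_fp:
        rate *= max_fp_per_layer
        n += 1
    return n

print(layers_needed(0.3, 1e-5))     # 10
print(overall_rate(0.3, 10))        # about 5.9e-06
print(overall_rate(0.99, 10))       # about 0.904
```

This is why the per-layer detection rate must be very close to 1: even 0.99 per layer compounds to roughly 0.9 over ten layers.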

Training a cascade (cont.) • While the target overall false positive rate is not met, add a layer: • While the false positive rate of the current layer is greater than the maximum per layer: • Train a classifier with n features using AdaBoost on the set of positive and negative examples, increasing n each time • Evaluate the current cascade classifier on a validation set • Decrease the layer's threshold until the detection rate of the current cascade reaches the minimum (this also raises the false positive rate) • If the overall target is still not met, evaluate the current cascade detector on a set of non-face images and put any false detections into the negative training set

Training Data Set • 4916 hand-labeled faces • Aligned to base resolution (24x24) • Non-faces for the first layer were collected from 9500 non-face images • Non-faces for subsequent layers were obtained by scanning the partial cascade across non-face images and collecting false positives (max 6000 for each layer)

Structure of the Detector • 38 layer cascade • 6060 features

Speed of final Detector • On a 700 MHz Pentium III processor, the face detector can process a 384 by 288 pixel image in about 0.067 seconds

Improvements Learning Object Detection from a Small Number of Examples: the Importance of Good Features (Levy & Weiss, 2004)

Improvements • Performance depends crucially on the features that are used to represent the objects (Levy & Weiss, 2004) • Good features imply: • Good results from small training databases • Better generalization abilities • Shorter (faster) classifiers

Edge Orientation Histogram • Invariant to global illumination changes • Captures geometric properties of faces • Domain knowledge represented: • The inner part of the face includes more horizontal edges than vertical • The ratio between vertical and horizontal edges is bounded • The area of the eyes includes mainly horizontal edges • The chin has more or less the same number of oblique edges on both sides

Edge Orientation Histogram • Called EOH • The EOH can be calculated using a variant of the Integral Image: • We find the gradients at the point (x,y) using Sobel masks • We calculate the orientation of the edge at (x,y) • We divide the edges into K bins • The result is stored in K matrices • We use the same idea of the Integral Image for the matrices
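The steps above can be sketched in pure Python. This is an illustrative version under several assumptions: the gradient magnitude is what gets stored in each bin matrix, orientations are folded into [0, π), border pixels are skipped, and no threshold is applied to weak edges. The name `eoh_integral_images` is hypothetical.

```python
import math

def eoh_integral_images(img, k_bins=4):
    """img[y][x] is a grey value. Returns k_bins integral images, one per
    orientation bin, so the edge energy of any rectangle and bin can be
    read with four lookups."""
    h, w = len(img), len(img[0])
    bins = [[[0.0] * w for _ in range(h)] for _ in range(k_bins)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Sobel masks for the horizontal (gx) and vertical (gy) gradients.
            gx = (img[y - 1][x + 1] + 2 * img[y][x + 1] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y][x - 1] - img[y + 1][x - 1])
            gy = (img[y + 1][x - 1] + 2 * img[y + 1][x] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y - 1][x] - img[y - 1][x + 1])
            mag = math.hypot(gx, gy)
            if mag == 0:
                continue                              # no edge at this pixel
            theta = math.atan2(gy, gx) % math.pi      # orientation in [0, pi)
            k = min(int(theta / math.pi * k_bins), k_bins - 1)
            bins[k][y][x] = mag                       # one matrix per bin
    integrals = []
    for b in bins:                                    # integral image per bin
        ii = [[0.0] * w for _ in range(h)]
        for y in range(h):
            row_sum = 0.0
            for x in range(w):
                row_sum += b[y][x]
                ii[y][x] = row_sum + (ii[y - 1][x] if y > 0 else 0.0)
        integrals.append(ii)
    return integrals
```

With the K integral images in place, the histogram of edge orientations inside any rectangle takes a constant number of lookups per bin, just as with rectangle features.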