Enhancing Grid Storage Elements: Status & Future Directions at GridPP7 Oxford

The presentation at GridPP7 in Oxford (30 June - 02 July 2003) discusses the current state and future of Storage Elements (SE) within grid computing. Topics include the integration of the DataGrid SE with WP2 Data Replication Services, the Storage Resource Manager (SRM) collaboration among laboratories such as CERN and FermiLab, and the SE's control, data transfer, and information interfaces. It also covers near-term priorities and future directions, including scalability and guaranteed reservations in SRM.


Presentation Transcript

Storage Element Status • GridPP7 Oxford, 30 June – 02 July 2003

Contents • What is an SE? • The SE Today • What will it provide Really Soon Now™? • What is left for future developments?

Collaborations • DataGrid Storage Element • Integrate with WP2 Data Replication Services (Reptor, Optor) • Jobs running on worker nodes in a ComputingElement cluster may read or write files to an SE • SRM – Storage Resource Manager • Collaboration between Lawrence Berkeley, FermiLab, Jefferson Lab, CERN, Rutherford Appleton Lab

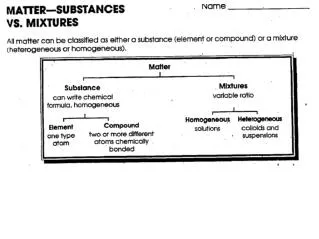

EU DataGrid Storage Element • Grid Interface to Mass Storage Systems (MSS) • Flexible and extensible • Provides additional services for underlying MSS • E.g. access control, file metadata,…

Control Interface • E.g. • cache file: stage file in from mass storage and place in disk cache • register existing file • SRM style interface • Interface was written before we had the SRM v.1 WSDL • Web service interface

More about SRM • Users know Site File Names (SFN) or Physical File Names (PFN) • E.g. lxshare0408.cern.ch/bongo/mumble • [Diagram: SE with disk cache and MSS; message labels "cache file" and "request id"]

More about SRM • Client queries the status of a request • Better that client polls than server callbacks • Server (ideally) able to give time estimate • [Diagram: SE with disk cache and MSS; message labels "status?" and “not ready, try later”]

More about SRM • When request is ready, client gets a Transfer URL (TURL) • E.g. gsiftp://lxshare0408.cern.ch/flatfiles/01/data/16bd30e2a899b7321baf00146acbe953 • [Diagram: SE with disk cache and MSS; message labels "status?" and "ready: TURL"]

More about SRM • Client accesses the file in the SE’s disk cache using (usually) non-SE tools • [Diagram: SE with disk cache and MSS; tool label "globus-url-copy"]

More about SRM • Finally, client informs SE that data transfer is done • This is required for cache management etc. • [Diagram: SE with disk cache and MSS; message labels "done" and "ok"]
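The request, poll, transfer, and done sequence described in the "More about SRM" slides can be sketched from the client side as follows. This is a minimal illustration against an in-memory mock, not the SE's actual web service interface: `MockSE`, its method names, the `se.example.org` host, and the TURL layout are all assumptions made for the example.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Request:
    sfn: str            # Site File Name the client asked for
    polls_left: int     # simulated staging delay, counted in status polls
    done: bool = False


class MockSE:
    """In-memory stand-in for an SE control interface (hypothetical API)."""

    def __init__(self, staging_polls=2):
        self._ids = itertools.count(1)
        self._requests = {}
        self._staging_polls = staging_polls

    def cache_file(self, sfn):
        """Ask the SE to stage a file into its disk cache; returns a request id."""
        request_id = next(self._ids)
        self._requests[request_id] = Request(sfn, self._staging_polls)
        return request_id

    def status(self, request_id):
        """Return ("not ready", None) while staging, then ("ready", turl)."""
        req = self._requests[request_id]
        if req.polls_left > 0:
            req.polls_left -= 1
            return "not ready", None
        # Made-up TURL layout; a real SE chooses its own cache path.
        return "ready", f"gsiftp://se.example.org/cache/{request_id:08d}"

    def done(self, request_id):
        """Client reports the transfer finished so the cache entry can be released."""
        self._requests[request_id].done = True
        return "ok"


def fetch(se, sfn, transfer, max_polls=10):
    """Run the client-side sequence: request, poll, transfer, done."""
    request_id = se.cache_file(sfn)
    for _ in range(max_polls):
        state, turl = se.status(request_id)
        if state == "ready":
            break
        # A real client would sleep here, ideally for the server's time estimate.
    else:
        raise TimeoutError(f"request {request_id} not ready after {max_polls} polls")
    transfer(turl)  # e.g. hand the TURL to globus-url-copy
    return se.done(request_id), turl
```

Calling `fetch(MockSE(), "lxshare0408.cern.ch/bongo/mumble", print)` walks through all four steps, with `print` standing in for the real transfer tool. The client drives the polling loop itself, which matches the slides' preference for client polling over server callbacks.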

Data transfer interface • GridFTP • The standard data transfer protocol in SRM collaboration • Some SEs will be NFS mounted • Caching and pinning still required before the file is accessed via NFS • Easy to add new data transfer protocols • E.g. http, ftp, https,…
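One way to picture why adding a transfer protocol is cheap: if the client (or SE) dispatches on the TURL scheme, a new protocol is one more registered function. The sketch below is an assumption about how such a dispatch could look, not the SE's code; the registry, the `protocol` decorator, and `command_for` are hypothetical names. It only builds command lines and does not run them.

```python
from urllib.parse import urlsplit

# Hypothetical registry: TURL scheme -> function building a copy command.
TRANSFER_HANDLERS = {}


def protocol(scheme):
    """Decorator that registers a command builder for one TURL scheme."""
    def register(func):
        TRANSFER_HANDLERS[scheme] = func
        return func
    return register


@protocol("gsiftp")
def gridftp_command(turl, dest):
    # globus-url-copy takes a source URL and a destination URL.
    return ["globus-url-copy", turl, f"file://{dest}"]


@protocol("file")
def nfs_command(turl, dest):
    # NFS-mounted SE: the TURL path is directly readable once cached and pinned.
    return ["cp", urlsplit(turl).path, dest]


def command_for(turl, dest):
    """Pick the command builder that matches the TURL's scheme."""
    scheme = urlsplit(turl).scheme
    try:
        builder = TRANSFER_HANDLERS[scheme]
    except KeyError:
        raise ValueError(f"unsupported transfer protocol: {scheme!r}") from None
    return builder(turl, dest)
```

Supporting http or https in this sketch would mean registering one more builder; `command_for` itself does not change.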

Information Interface • Can publish into MDS • Can publish (via GIN) into R-GMA • Using GLUE schema for StorageElement • http://hepunx.rl.ac.uk/edg/wp3/documentation/doc/schemas/Glue-SE.html • Also a file metadata function as part of the control interface

Implementation • XML messages passed between applications • Functionality implemented in handlers • A handler is a specialised program reading a message, parsing it, and returning a message • Each handler does one specific thing

Handlers processing request • [Diagram: the Request Manager passes a request along a time axis through Handler 1 (User lookup), Handler 2 (File lookup), and so on to Handler n (Return)]

Architecture • The request contains the sequence of names of handlers • As each handler processes the request, it calls a library that moves the name to an audit section • The library allows easy access to global data • Handlers may also have handler-specific data in the XML • Storing the XML output from each handler as the request gets processed makes it easy to debug the SE • [Diagram: request XML with a handler sequence (handler1 to handler5), Global Data, and an audit section]
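The handler-chain idea on the Implementation and Architecture slides can be sketched as below. The XML layout (`<sequence>`, `<audit>`, `<global>`), the handler names, and the placeholder values they write are assumptions for illustration; the slides do not give the SE's actual message schema.

```python
import xml.etree.ElementTree as ET

# Hypothetical request message: pending handlers, an empty audit trail,
# and global data shared by all handlers.
EXAMPLE_REQUEST = (
    "<request>"
    "<sequence><handler name='user_lookup'/><handler name='file_lookup'/></sequence>"
    "<audit/>"
    "<global><sfn>lxshare0408.cern.ch/bongo/mumble</sfn></global>"
    "</request>"
)


def next_handler(request):
    """Name of the next pending handler, or None when the sequence is empty."""
    first = request.find("./sequence/handler")
    return None if first is None else first.get("name")


def finish(request):
    """Library call: move the current handler's entry from <sequence> to <audit>."""
    sequence = request.find("sequence")
    entry = sequence[0]
    sequence.remove(entry)
    request.find("audit").append(entry)


def user_lookup(request):
    # Placeholder result; a real handler would consult the gridmap file.
    ET.SubElement(request.find("global"), "local_user").text = "pool001"
    finish(request)


def file_lookup(request):
    # Placeholder result; a real handler would query the SE's file catalogue.
    ET.SubElement(request.find("global"), "location").text = "mss"
    finish(request)


HANDLERS = {"user_lookup": user_lookup, "file_lookup": file_lookup}


def process(xml_text):
    """Run each named handler in order, keeping the XML after every step."""
    request = ET.fromstring(xml_text)
    snapshots = []
    name = next_handler(request)
    while name is not None:
        HANDLERS[name](request)
        snapshots.append(ET.tostring(request, encoding="unicode"))
        name = next_handler(request)
    return request, snapshots
```

`process(EXAMPLE_REQUEST)` returns the final request plus one XML snapshot per handler. Comparing consecutive snapshots shows exactly what each handler changed, which is the debugging benefit the slide describes.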

Mapping to local user • SE runs as unprivileged user with a highly constrained setuid executable to map to local users • Mapping done using gridmap file, using pooled accounts if available • Needed for access to some (many) mass storage systems
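The gridmap lookup can be sketched as follows, assuming the common grid-mapfile layout of a quoted certificate DN followed by a local account, with a leading dot marking a pooled-account prefix. The DNs, account names, and the `PoolMapper` class are hypothetical, and the in-memory lease table is a simplification of how pool leases are persisted in a real deployment. The setuid step itself is not shown.

```python
import shlex

# Hypothetical gridmap contents: one static mapping and two pooled mappings.
EXAMPLE_GRIDMAP = '''
# "certificate DN" local-account
"/C=UK/O=eScience/CN=Static User" suser
"/C=UK/O=eScience/CN=Pool User One" .atlas
"/C=UK/O=eScience/CN=Pool User Two" .atlas
'''


def parse_gridmap(text):
    """Parse gridmap lines into {DN: account or '.poolprefix'}."""
    mapping = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        dn, account = shlex.split(line)  # shlex handles the quoted DN
        mapping[dn] = account
    return mapping


class PoolMapper:
    """Resolve a DN to a local user, leasing pooled accounts on first use."""

    def __init__(self, mapping, pools):
        self._mapping = mapping
        # Copy the lists so leasing does not mutate the caller's data.
        self._free = {prefix: list(names) for prefix, names in pools.items()}
        self._leases = {}

    def local_user(self, dn):
        entry = self._mapping[dn]  # KeyError: DN is not in the gridmap file
        if not entry.startswith("."):
            return entry  # static mapping
        if dn not in self._leases:
            # IndexError here means the pool has run out of accounts.
            self._leases[dn] = self._free[entry[1:]].pop(0)
        return self._leases[dn]
```

With `PoolMapper(parse_gridmap(EXAMPLE_GRIDMAP), {"atlas": ["atlas001", "atlas002"]})`, repeated lookups for the same DN return the same pooled account, while a second DN receives the next free one.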

Deployment • MSS working: CERN (Castor and disk); RAL (Atlas DataStore and disk) • Testing: CC-IN2P3 (HPSS via RFIO); ESA/ESRIN (testing disk, then plan tape MSS (AMS)); NIKHEF (disk) • Others: SARA (building from source on SGI); INSA/WP10 (to build support for DICOM servers); UAB (Castor, testing)

Installation • RPMs and source available • Source compiles with gcc 2.95.x and 3.2.3 • Configures using LCFG-ng • Tools available to build and install SEs without LCFG: ./configure --mss-type=disk; make; make install

Priorities for September • WP2 TrustManager adopted for security, but it still needs to be configured via LCFG • Currently building proper queuing system • SRM v.1.1 interface • Access control – use GACL • Improved disk cache management (including pinning) • VOMS support • SRM v2.1 interface (partial support)

Future Directions • Guaranteed reservations • SRM2 recommendations: volatile, durable, permanent files and space • Full SRM v2.1 • Scalability • Scalability will be achieved by making a single SE distributed • Not hard to do