Inferential Statistics 1

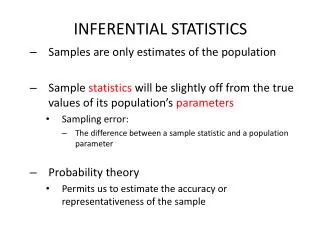

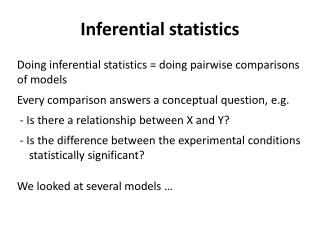



Inferential Statistics 1 Jan 17, 2014

Concepts for Today • Hypothesis, Null hypothesis • Research question • Null is the hypothesis of “no relationship” • Normal Distribution • Bell curve • Standard normal distribution has a mean of “0” and a standard deviation of “1” • “Alpha .05” • The percent error we are willing to tolerate: if the null hypothesis were true and the experiment were repeated 100 times, we would expect about 5 of those runs to show a “significant” result by chance alone. • “P” Value • The probability of getting a result at least as extreme as the one observed if the null hypothesis is true; it is compared against the alpha level. • Student’s t-test • Used to test for significant differences between two mean values, or a difference of a mean from a specific level, such as zero: hypothesis testing • Confidence Intervals • What is the plausible range for the true population value, based on the data we have?

Census versus Sample • Can you sample every person in a population? • In most cases, it is impossible to survey every person in a population to come up with a distribution of data, so we can never know for sure what the distribution looks like. • So we use a sample. • A sample is drawn and we hope that the sample is representative of the population. (e.g. Sheldon knocking) • Size of sample is based in large part on the amount of variability in the population, not the “size” of the population. • Generally, when n is greater than or equal to about 30, we assume the sample mean follows an approximately normal distribution. • This is based in part on a concept you may recall called the “Central Limit Theorem”: the distribution of sample means is approximately normal regardless of whether the population being sampled is normal.
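The Central Limit Theorem statement above can be checked with a small simulation. This is only a sketch: the exponential population, the seed and the number of repeats are assumptions chosen for illustration, not part of the original slides.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable (arbitrary choice)

def sample_means(n, repeats):
    """Draw `repeats` samples of size n from a skewed (exponential) population
    and return the mean of each sample."""
    return [
        statistics.fmean(random.expovariate(1.0) for _ in range(n))
        for _ in range(repeats)
    ]

means = sample_means(n=30, repeats=5000)

# The exponential(1) population has mean 1 and standard deviation 1, so the
# CLT predicts sample means centred near 1 with spread near 1 / sqrt(30).
print("mean of sample means:", round(statistics.fmean(means), 3))
print("sd of sample means:  ", round(statistics.stdev(means), 3))
print("predicted sd:        ", round(1 / 30 ** 0.5, 3))
```

Even though the population is strongly skewed, the sample means should cluster symmetrically around the population mean, which is what the n of about 30 rule of thumb relies on.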

Normal Distribution • When we talk about a “normal distribution” you can picture the Bell Curve • A normal distribution is defined by its mean and std. dev. • The mean is a measure of location (where is the mean?) • The std. dev. is a measure of spread or variation. • A normal distribution underlying our data is a requirement for many statistical procedures. • Lit Eval Note: When you suspect that data may not be normally distributed, you need to look very closely at the statistics used.

The Standard Normal Distribution • Standard Normal Curve (Z - Distribution) • Has a mean of “0” and a std. dev. of “1” • The area under the whole distribution is 1 • The AUC between any two points can be interpreted as the relative frequency of the values included between those points • Understanding this concept is essential to understanding p and alpha. • Z = (X – μ) / σ • Where: X is the variable to be standardized, μ is the population mean and σ is the population standard deviation

Example: Transformation of a distribution with a mean of 50 and a standard deviation of 5
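As a rough illustration of this transformation, the sketch below standardizes a few raw scores from a distribution with mean 50 and standard deviation 5. The function name `z_score` and the raw scores are assumptions made for the example.

```python
def z_score(x, mu, sigma):
    """Standardize x: how many standard deviations it lies from the mean."""
    return (x - mu) / sigma

mu, sigma = 50, 5  # mean and standard deviation from the slide

# Illustrative raw scores (assumed, not from the slides)
for x in (40, 45, 50, 55, 60):
    print(x, "->", z_score(x, mu, sigma))
```

A raw score of 60 maps to Z = 2.0 and the mean itself maps to Z = 0.0, so the transformed distribution has mean 0 and standard deviation 1.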

Application: Consider the distribution of SAT scores in a population of students. What is the proportion of persons having SAT scores between 500 and 650?

Example: Normal Distribution of SAT scores • Population mean μ=500 • Standard deviation σ=100 • About 68% of SAT scores fall between 400 and 600 • Approximately 95% of scores fall between 300 and 700
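The question about scores between 500 and 650 can be answered from the area under the normal curve. A minimal sketch using Python's standard library, assuming the scores are exactly normal with μ=500 and σ=100:

```python
from statistics import NormalDist

sat = NormalDist(mu=500, sigma=100)  # parameters from the slide

def proportion_between(dist, low, high):
    """Area under the curve between low and high."""
    return dist.cdf(high) - dist.cdf(low)

p_500_650 = proportion_between(sat, 500, 650)
p_400_600 = proportion_between(sat, 400, 600)
p_300_700 = proportion_between(sat, 300, 700)

print(f"500 to 650: {p_500_650:.4f}")
print(f"400 to 600: {p_400_600:.4f}")
print(f"300 to 700: {p_300_700:.4f}")
```

A score of 650 is 1.5 standard deviations above the mean, so the first answer is the area between Z = 0 and Z = 1.5, roughly 43% of students.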

[Figure: Distribution 1 and Distribution 2] What happens if we do not have a normal distribution of data? Is it possible to draw conclusions about areas under the curve, as we did with the Z distribution, a symmetrical distribution? It depends: we often still do, depending on how far from normal the distribution is. Rule of thumb: n of at least 30.

Alpha • If the null hypothesis were true and we ran the experiment 100 times with an alpha level of .05, we would expect about 95 of those runs to give a result consistent with the null, and only about 5 to give a result extreme enough to be called significant by chance alone.

Alpha • The probability of concluding there is a difference between groups when there really is no difference between them (i.e., you found one of those times when Sheldon knocked something other than his normal 3 times). This is also referred to as a type I error. A result is usually considered statistically significant if its p-value is less than alpha, which is conventionally set at 5%.

Alpha level: 0.05 or bust? So what happens when alpha is .05 and the p-value is .0512: do you conclude the study has obtained non-significant results? What are the implications of having steadfast rules for statistical significance?

P-value • The observed level of statistical significance. A value of p<0.05 means that, if the null hypothesis were true, a result at least this extreme would occur by chance less than 1 time in 20; such a result is significant at alpha = 0.05. The smaller the p-value, the stronger the evidence against the null hypothesis. Alpha is fixed in advance and does not change, whereas p-values depend on the actual value of the statistic in question.

What is the difference between an alpha level and a p-value? When we do research, we set a relatively conservative standard that a researcher must meet in order to claim that s/he has discovered some phenomenon or answered some question. The standard is the alpha level, usually set at .05. This means we may reject the null hypothesis only if the observed data are so unusual that, were the null true, they would have occurred by chance at most 5% of the time. The smaller the alpha, the more stringent the test (the less likely it is to find a statistically significant result). Once the alpha level has been set, a statistic (like t or Z) is computed. Each statistic has an associated probability value called a p-value: the probability of observing a statistic at least that extreme by chance, given the sampling distribution under the null. Alpha sets the standard for statistical significance, yes or no: whether or not we can reject the null hypothesis. The p-value indicates how extreme the data actually are.

Alpha, Type I and Type II Errors • Alpha is the probability of a Type I error: • The error of rejecting the null hypothesis if it is really true – or saying something is significant when it is not. • We found a difference when there is not really a difference. • With this kind of error, a drug that does not work could get to market. Sometimes referred to as a false positive.
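To see what "alpha is the probability of a Type I error" means in practice, the sketch below simulates many experiments in which the null hypothesis is true and counts how often it is wrongly rejected. The sample size, number of experiments, seed and the use of a Z test with known standard deviation are all assumptions made for illustration.

```python
import random
from statistics import NormalDist, fmean

random.seed(1)  # fixed seed so the sketch is repeatable (arbitrary choice)

ALPHA = 0.05
Z_CRIT = NormalDist().inv_cdf(1 - ALPHA / 2)  # two-sided cutoff, about 1.96

def false_positive_rate(n=25, experiments=4000):
    """Simulate experiments in which the null is TRUE (population mean 0,
    known sd 1) and return the fraction that wrongly reject it."""
    rejections = 0
    for _ in range(experiments):
        sample = [random.gauss(0, 1) for _ in range(n)]
        z = fmean(sample) / (1 / n ** 0.5)  # (sample mean - 0) / standard error
        if abs(z) > Z_CRIT:
            rejections += 1
    return rejections / experiments

rate = false_positive_rate()
print("observed Type I error rate:", rate)
```

The observed rate should land close to 0.05: about 1 experiment in 20 finds a "difference" that is not really there.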

Alpha, Type I and Type II Errors • Type II error (beta): • The probability of concluding that there is no difference between treatment groups when there really is a difference • The error of failing to reject the null hypothesis and concluding no difference, when it is actually false and there is a difference. • Failing to recognize a real difference. Sometimes referred to as a false negative. • With this type of error, we could keep a potentially life-saving drug off the market.

Which is worse? Type I or Type II error? In research, we generally set the levels in advance: alpha (type I) at 0.05 and beta (type II) at 0.20. What does this tell us?

Statistical Power • The ability of a study to detect a significant difference between treatment groups; the probability that a study will have a statistically significant result (p<0.05) when a true difference exists. Power = 1 – beta (the false-negative rate). By convention, adequate study power is usually set at 0.8 (80%). This corresponds to a beta of 0.2 (a false-negative rate of 20%). Power increases as sample size increases. The power of a study should be stated in the methods section of a study report.

Statistical Power • The probability of correctly rejecting the null hypothesis: finding a difference when there really is one. • It has become common to see power reported in clinical studies. • Think of it as your “confidence” in your results. • Power is 1 – (Type II error rate) • So, it’s 1 – the chance of missing a real difference = the probability of detecting it. • Typically we see Alpha 0.05, Beta 0.20, Power 80% • While alpha = 0.05 is treated as an absolute by most statistical experts, power is not. • Power analysis is used in sample size planning and can be used for hypothesis testing

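Statistical Power • To calculate power all you need is: • Desired alpha level • An estimate of how big the effect is in the population. • Estimate of the variability. • The sample size (or, when planning a study, fix the desired power and solve for the sample size instead).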
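The ingredients listed above, together with the sample size, are enough for a rough power calculation. The sketch below uses a normal approximation for a two-sided comparison of two group means; the function name `approx_power` and all of the numbers (a 5 mmHg effect, a standard deviation of 10, equal group sizes) are hypothetical, and real studies would normally use a t-based calculation in a statistics package.

```python
from statistics import NormalDist

def approx_power(effect, sd, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-group comparison of means
    using the normal approximation (the far rejection tail is ignored)."""
    z = NormalDist()
    se = sd * (2 / n_per_group) ** 0.5   # standard error of the difference
    z_crit = z.inv_cdf(1 - alpha / 2)    # cutoff set by alpha
    return z.cdf(abs(effect) / se - z_crit)

# Hypothetical inputs: 5 mmHg effect, sd of 10 mmHg
for n in (20, 40, 64, 100):
    print(n, "per group -> power about", round(approx_power(5, 10, n), 2))
```

With these made-up inputs, power climbs as the groups get larger and reaches roughly 80% at about 64 subjects per group, which illustrates why sample size planning starts from alpha, effect size and variability.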

Power: What do you think? • Suppose you calculate that you need 1800 subjects to achieve 80% power for a hypertension study you are conducting. After completing the study you end up with 1788 subjects. What implications does this have for the quality of your results? Is the study flawed because you did not achieve sufficient power? (You missed your goal by 12 subjects.) • Effect size and variability of your results will dictate the power. Depending on the size of these two variables, you may still have reached sufficient power. • Even if you did not reach .80 or 80%, what are the implications?

In General: • Test statistics take the form of: Test statistic = (some measure of difference) / (some measure of variation)

Z score and t score • Student’s t-test is one of many test statistics. • The t distribution is very similar to the Z distribution, except that its shape, and so the area under the curve beyond a given value, depends on the sample size (degrees of freedom). • Z vs. t computationally: • Z = (x1 – x2) / s.d. • t = (x1 – x2) / (s.d. / sqrt n)

t-test • One of the most common statistical tests you will see. • Compares mean scores, or compares a mean score to a fixed value, e.g.: • Average change in blood pressure is more than 10 mmHg • Average difference in blood pressure change between drug A and drug B is zero • Basically, a t-test is a Z score, adjusted for sample size.

t-tests • Generally, all test statistics take on the form of “some difference divided by some measure of variation” • For the t-test, it is: t = (sample mean – hypothesized value) / (s / sqrt n). However: • The actual test statistic (the calculation) varies with the nature of your study, depending on whether you have “dependent” or “independent” samples • Dependent samples are also referred to as “within-subjects” or “repeated measures”, or simply: the same subjects are present in both groups being compared. • Independent samples come from two separate, unrelated groups. • Dependence or independence of the samples affects the variability, and this is accounted for in the calculations of the different tests (dependent and independent t-tests) • Look for recognition of this issue as this usually means the authors know what they are doing!
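The difference between the dependent and independent calculations can be sketched as follows. The blood pressure readings are made up for illustration, the function names are hypothetical, and the independent version assumes equal variances (a pooled estimate); it is not taken from the slides.

```python
from statistics import fmean, stdev, variance

def paired_t(before, after):
    """Dependent (paired) t: a one-sample t on the within-subject differences."""
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    return fmean(diffs) / (stdev(diffs) / n ** 0.5)

def independent_t(group1, group2):
    """Independent two-sample t using a pooled variance (equal variances assumed)."""
    n1, n2 = len(group1), len(group2)
    pooled = ((n1 - 1) * variance(group1) + (n2 - 1) * variance(group2)) / (n1 + n2 - 2)
    se = (pooled * (1 / n1 + 1 / n2)) ** 0.5
    return (fmean(group1) - fmean(group2)) / se

# Made-up systolic blood pressures (mmHg) for five subjects
before = [150, 142, 160, 155, 148]
after = [144, 139, 152, 150, 141]

t_paired = paired_t(before, after)             # treats readings as same subjects
t_independent = independent_t(after, before)   # (wrongly) treats them as unrelated

print("paired t:     ", round(t_paired, 2))
print("independent t:", round(t_independent, 2))
```

Both statistics use the same mean difference, but the paired version removes between-subject variability from the denominator, so its t value is much larger in magnitude. That is why choosing the wrong test for the study design can change the conclusion.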

Example: Hypothesis Testing • Assume: • Random sample of 100 students taking statistics exam in 2012 • Mean score=86, standard deviation=25 • From 2000 to 2012, mean score was 80 (population mean) • Question: Did 2012’s students do significantly better on the test than previous years? • Null form: There is no difference between the 2012 students’ test scores and those of previous years.

Example (cont.) • Assume the variable test score has a normal distribution • Step 1: • Hypotheses: • Null Hypothesis = H0: μ equals 80 • Alternative Hypothesis = HA: μ does not equal 80 (or μ > 80?) • In this example, should we use a 1-sided or 2-sided test? • Step 2: • Decide on what percent of error we are willing to tolerate (alpha) for incorrectly rejecting our H0 • This is the Type I error - (i.e., incorrectly saying that this year’s students did better when in fact they did not) • Let’s say, we are willing to accept a 5% Type I error or α=0.05

Example (cont.) • Step 3: • Based on accepted level of α and sample size, determine the critical value of the t statistic above which H0 will be rejected • For α=0.05 and n=100 (99 degrees of freedom), • Critical value (c.v.)=1.66 (from t-distribution table, next slide, one-sided or 1-tailed test)

Example Continued • Step 4: Calculate the t statistic t = (sample mean – population mean) / ( s / sqrt n ) t = (86 -80) / (25 / sqrt 100) = 2.4 • Step 5: Since 2.4 (t-calc) > 1.66 (t-table) the hypothesis that the two means are equal is rejected. • Or, the means are different based on this result.
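Steps 4 and 5 can be reproduced in a few lines. This is a sketch of the slide's arithmetic only: the function name `one_sample_t` is hypothetical, and the critical value 1.66 is copied from the slide rather than computed.

```python
from math import sqrt

def one_sample_t(sample_mean, pop_mean, s, n):
    """t = (sample mean - population mean) / (s / sqrt(n))"""
    return (sample_mean - pop_mean) / (s / sqrt(n))

T_CRIT = 1.66  # one-sided critical value for alpha = 0.05, taken from the slide

t_calc = one_sample_t(sample_mean=86, pop_mean=80, s=25, n=100)
reject_null = t_calc > T_CRIT

print("t statistic:", round(t_calc, 2))
print("reject H0:  ", reject_null)
```

The standard error is 25 / 10 = 2.5, so a 6-point difference is 2.4 standard errors above the historical mean, which exceeds the cutoff.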

How much confidence do we have in the measurement of our mean? • Confidence interval (C.I.) is an interval around our mean that indicates the reliability of the mean • C.I. = mean ± t (s / sqrt n) • For large samples, t is close to the Z values used below • 95% CI of μ = mean ± 1.96 (s / sqrt n) • 95% of all sample means fall within 1.96 standard errors of the population mean • 99% CI of μ = mean ± 2.58 (s / sqrt n) • 99% of all sample means fall within 2.58 standard errors of the population mean

Example • Assume: • Sample size n=100 • Sample mean age = 54.85 years • Sample standard deviation = 5.50 • 95% CI = 54.85 ± 1.96 (5.5 / sqrt 100) = 54.85 ± 1.08 = (53.77, 55.93) • We are 95% confident that this range captures the true mean population age • There is a 5% chance that the interval misses the true mean: 2.5% that the mean lies above 55.93 and 2.5% that it lies below 53.77 • My question was always: “Where does the 1.96 come from?”
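One way to answer the question about 1.96: it is the point on the standard normal curve that leaves 2.5% of the area in each tail. The sketch below recovers that multiplier and recomputes the interval; the function name `z_confidence_interval` is hypothetical and the calculation uses the large-sample Z multiplier rather than a t value.

```python
from math import sqrt
from statistics import NormalDist

def z_confidence_interval(mean, s, n, level=0.95):
    """Large-sample confidence interval: mean +/- z * (s / sqrt(n))."""
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)  # leaves (1 - level) / 2 in each tail
    margin = z * s / sqrt(n)
    return mean - margin, mean + margin

z_95 = NormalDist().inv_cdf(0.975)  # multiplier for a 95% interval
z_99 = NormalDist().inv_cdf(0.995)  # multiplier for a 99% interval
low, high = z_confidence_interval(54.85, 5.5, 100)

print("95% multiplier:", round(z_95, 3))
print("99% multiplier:", round(z_99, 3))
print("95% CI:", round(low, 2), "to", round(high, 2))
```

Raising the confidence level pushes the cutoff further into the tails, which is why the 99% interval uses a larger multiplier and is wider.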

Application of t-test: Short-term outcomes of an employer-sponsored diabetes management program at an ambulatory care pharmacy clinic. Yoder et al. American Journal of Health-System Pharmacy