User Interfaces for Information Access

User Interfaces for Information Access Marti Hearst IS202, Fall 2006

Outline • What do people search for? • Why is supporting search difficult? • What works in search interfaces? • When does search result grouping work? • What about social tagging and search?

A Spectrum of Information Needs • Question/Answer: What is the typical height of a giraffe? • Browse and Build: What are some good ideas for landscaping my client’s yard? • Text Data Mining: What are some promising untried treatments for Raynaud’s disease?

Questions and Answers • What is the height of a typical giraffe? • The result can be a simple answer, extracted from existing web pages. • Can specify with keywords or a natural language query • However, most search engines are not set up to handle questions properly. • Get different results using a question vs. keywords

Classifying Queries • Query logs only indirectly indicate a user’s needs • One set of keywords can mean various different things • “barcelona” • “dog pregnancy” • “taxes” • Idea: pair each logged query with the search result the user clicked on. • “taxes” followed by a click on tax forms • Study performed on AltaVista logs • Author noted afterwards that Yahoo logs appear to have a different query balance. Rose & Levinson, Understanding User Goals in Web Search, Proceedings of WWW’04

Classifying Web Queries Rose & Levinson, Understanding User Goals in Web Search, Proceedings of WWW’04

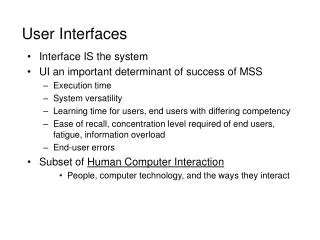

Why is Supporting Search Difficult? • Everything is fair game • Abstractions are difficult to represent • The vocabulary disconnect • Users’ lack of understanding of the technology

Everything is Fair Game • The scope of what people search for is all of human knowledge and experience. • Other interfaces are more constrained (word processing, formulas, etc) • Interfaces must accommodate human differences in: • Knowledge / life experience • Cultural background and expectations • Reading / scanning ability and style • Methods of looking for things (pilers vs. filers)

Abstractions Are Hard to Represent • Text describes abstract concepts • Difficult to show the contents of text in a visual or compact manner • Exercise: • How would you show the preamble of the US Constitution visually? • How would you show the contents of Joyce’s Ulysses visually? How would you distinguish it from Homer’s The Odyssey or McCourt’s Angela’s Ashes? • The point: it is difficult to show text without using text

Vocabulary Disconnect • If you ask a set of people to describe a set of things there is little overlap in the results.

Lack of Technical Understanding • Most people don’t understand the underlying methods by which search engines work.

People Don’t Understand Search Technology A study of 100 randomly-chosen people found: • 14% never type a URL directly into the address bar • Several tried to use the address bar, but did it wrong • Put spaces between words • Combinations of dots and spaces • “nursing spectrum.com” “consumer reports.com” • Several use the search form with no spaces • “plumber’slocal9” “capitalhealthsystem” • People do not understand the use of quotes • Only 16% use quotes • Of these, some use them incorrectly • Around all of the words, making results too restrictive • “lactose intolerance –recipies” • Here the – excludes the recipes • People don’t make use of “advanced” features • Only 1 used “find in page” • Only 2 used Google cache Hargittai, Classifying and Coding Online Actions, Social Science Computer Review 22(2), 2004, 210-227.
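The quote and minus behaviour described above can be illustrated with a minimal sketch. It assumes a simplified query syntax (straight double quotes for phrases, a leading hyphen for exclusion) and plain substring matching; the function names and the three page titles are hypothetical, and real search engines parse and match queries in more elaborate ways.

```python
import re

# Either an optionally negated "quoted phrase" or an optionally negated bare word.
TOKEN = re.compile(r'(-?)"([^"]+)"|(-?)(\S+)')

def parse_query(query):
    """Split a query into required and excluded terms (phrases stay whole)."""
    required, excluded = [], []
    for match in TOKEN.finditer(query):
        neg_phrase, phrase, neg_word, word = match.groups()
        if phrase is not None:
            (excluded if neg_phrase else required).append(phrase.lower())
        else:
            (excluded if neg_word else required).append(word.lower())
    return required, excluded

def matches(text, query):
    """True if text contains every required term and no excluded term."""
    required, excluded = parse_query(query)
    haystack = text.lower()
    return (all(term in haystack for term in required)
            and not any(term in haystack for term in excluded))

# Made-up page titles, for illustration only.
PAGES = [
    "Living with lactose intolerance: symptoms and diet",
    "Lactose intolerance recipes for dessert",
    "Intolerance of lactose in adults",
]

phrase_minus = [matches(p, '"lactose intolerance" -recipes') for p in PAGES]
plain_words = [matches(p, 'lactose intolerance') for p in PAGES]
all_quoted = [matches(p, '"lactose intolerance symptoms"') for p in PAGES]
```

In this toy setting, quoting every word turns the whole query into a single phrase that none of the pages contains verbatim, which is the "too restrictive" outcome noted in the study.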

People Don’t Understand Search Technology Without appropriate explanations, most of 14 people had strong misconceptions about: • ANDing vs ORing of search terms • Some assumed an ANDing search engine indexed a smaller collection; most had no explanation at all • For empty results for query “to be or not to be” • 9 of 14 could not give an explanation that remotely resembled stop word removal • For term order variation “boat fire” vs. “fire boat” • Only 5 out of 14 expected different results • Understanding was vague, e.g.: • “Lycos separates the two words and searches for the meaning, instead of what’re your looking for. Google understands the meaning of the phrase.” Muramatsu & Pratt, “Transparent Queries: Investigating Users’ Mental Models of Search Engines”, SIGIR 2001.
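The three behaviours participants struggled with can be shown in a toy matcher. This is a sketch under stated assumptions, not how any particular engine worked: the stop word list, the documents, and the function names are all made up, and matching is a pure bag of words that ignores term order.

```python
# Toy illustration only: the stop word list and documents are made up.
STOP_WORDS = {"to", "be", "or", "not", "the", "a", "of"}

def tokenize(text):
    """Lowercase and split on whitespace; no stemming or punctuation handling."""
    return text.lower().split()

def content_terms(query):
    """Drop stop words before matching."""
    return [t for t in tokenize(query) if t not in STOP_WORDS]

def search(query, docs, mode="and"):
    """Return ids of docs matching all (AND) or any (OR) content terms."""
    terms = content_terms(query)
    if not terms:
        return []  # every term was a stop word, so nothing is left to match
    combine = all if mode == "and" else any
    return [doc_id for doc_id, text in docs.items()
            if combine(t in tokenize(text) for t in terms)]

DOCS = {
    1: "fire boat crews respond to harbor blaze",
    2: "boat fire destroys marina",
    3: "fire safety tips",
}

and_hits = search("boat fire", DOCS, mode="and")    # narrower result set
or_hits = search("boat fire", DOCS, mode="or")      # broader result set
empty_hits = search("to be or not to be", DOCS)     # all terms removed
same_bag = search("boat fire", DOCS) == search("fire boat", DOCS)
```

ANDing narrows the results over the same collection, rather than searching a smaller one. A query made only of stop words comes back empty. Because this sketch ignores word order, "boat fire" and "fire boat" return the same documents; an engine that weighs phrases or term proximity would rank them differently.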

What Works for Search Interfaces? • Query term highlighting • in results listings • in retrieved documents • Sorting of search results according to important criteria (date, author) • Grouping of results according to well-organized category labels (see Flamenco) • DWIM only if highly accurate: • Spelling correction/suggestions • Simple relevance feedback (more-like-this) • Certain types of term expansion • So far: not really visualization Hearst et al: Finding the Flow in Web Site Search, CACM 45(9), 2002.

Highlighting Query Terms • Boldface or color • Adjacency of terms with relevant context is a useful cue.

Figure: query term hits highlighted using the Google toolbar (terms shown include Microsoft, US Blackout, PGA).
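Query term highlighting in a result snippet can be sketched in a few lines. This is a minimal illustration, assuming whole-word, case-insensitive matching and asterisks as a stand-in for boldface; the `highlight` function and the sample snippet are hypothetical.

```python
import re

def highlight(text, query, open_mark="**", close_mark="**"):
    """Wrap whole-word, case-insensitive matches of each query term in markers."""
    terms = [re.escape(t) for t in query.split() if t]
    if not terms:
        return text
    # Longest terms first so that a longer term wins over a shorter prefix.
    terms.sort(key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(terms) + r")\b", re.IGNORECASE)
    # A function replacement keeps the marker strings free of escape handling.
    return pattern.sub(lambda m: open_mark + m.group(0) + close_mark, text)

# Made-up snippet, for illustration only.
snippet = "Microsoft blamed the US blackout on a software fault."
marked = highlight(snippet, "blackout microsoft")
```

The original capitalization of each hit is preserved, so the highlighted text still reads as the document wrote it.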

Small Details Matter • UIs for search especially require great care in small details • In part due to the text-heavy nature of search • A tension between more information and introducing clutter • How and where to place things is important • People tend to scan or skim • Only a small percentage reads instructions

Small Details Matter • UIs for search especially require endless tiny adjustments • In part due to the text-heavy nature of search • Example: • In an earlier version of the Google Spellchecker, people didn’t always see the suggested correction • Used a long sentence at the top of the page: “If you didn’t find what you were looking for …” • People complained they got results, but not the right results. • In reality, the spellchecker had suggested an appropriate correction. • Interview with Marissa Mayer by Mark Hurst: http://www.goodexperience.com/columns/02/1015google.html

Small Details Matter • The fix: • Analyzed logs, saw people didn’t see the correction: • clicked on first search result, • didn’t find what they were looking for (came right back to the search page), • scrolled to the bottom of the page, did not find anything • and then complained directly to Google • Solution was to repeat the spelling suggestion at the bottom of the page. • More adjustments: • The message is shorter, and different on the top vs. the bottom • Interview with Marissa Mayer by Mark Hurst: http://www.goodexperience.com/columns/02/1015google.html

Using DWIM • DWIM – Do What I Mean • Refers to systems that try to be “smart” by guessing users’ unstated intentions or desires • Examples: • Automatically augment my query with related terms • Automatically suggest spelling corrections • Automatically load web pages that might be relevant to the one I’m looking at • Automatically file my incoming email into folders • Pop up a paperclip that tells me what kind of help I need. • THE CRITICAL POINT: • Users love DWIM when it really works • Users DESPISE it when it doesn’t

DWIM that Works • Amazon’s “customers who bought X also bought Y” • And many other recommendation-related features

DWIM Example: Spelling Correction/Suggestion • Google’s spelling suggestions are highly accurate • But this wasn’t always the case. • Google introduced a version that wasn’t very accurate. People hated it. They pulled it. (According to a talk by Marissa Mayer of Google.) • Later they introduced a version that worked well. People love it. • But don’t get too pushy. • For a while if the user got very few results, the page was automatically replaced with the results of the spelling correction • This was removed, presumably due to negative responses • Information from a talk by Marissa Mayer of Google

Query Reformulation • Query reformulation: • After receiving unsuccessful results, users modify their initial queries and submit new ones intended to more accurately reflect their information needs. • Web search logs show that searchers often reformulate their queries • A study of 985 Web user search sessions found • 33% went beyond the first query • Of these, ~35% retained the same number of terms while 19% had 1 more term and 16% had 1 fewer Spink, Jansen & Ozmutlu, Use of query reformulation and relevance feedback by Excite users, Internet Research 10(4), 2000
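The term-count breakdown reported above suggests a simple way to label consecutive queries in a session. The following is a sketch of that kind of tally, assuming whitespace-separated terms; the function name and the sample session are hypothetical and are not taken from the study.

```python
def term_count_change(previous_query, next_query):
    """Label a reformulation by how the number of terms changed."""
    delta = len(next_query.split()) - len(previous_query.split())
    if delta == 0:
        return "same number of terms"
    if delta == 1:
        return "one more term"
    if delta == -1:
        return "one fewer term"
    return "other"

# Made-up session, for illustration only.
session = ["giraffe height", "giraffe size", "typical giraffe size", "giraffe size"]
labels = [term_count_change(a, b) for a, b in zip(session, session[1:])]
```

Counting terms says nothing about whether the wording changed, so a session that swaps one word for another still lands in the "same number of terms" bucket.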

Query Reformulation • Many studies show that if users engage in relevance feedback, the results are much better. • In one study, participants did 17-34% better with RF • They also did better if they could see the RF terms than if the system did it automatically (DWIM) • But the effort required for doing so is usually a roadblock. Koenemann & Belkin, A Case for Interaction: A Study of Interactive Information Retrieval Behavior and Effectiveness, CHI’96

Query Reformulation • What happens when a web search engine suggests new terms? • Web log analysis study using the Prisma term suggestion system: Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Query Reformulation Study • Feedback terms were displayed to 15,133 user sessions. • Of these, 14% used at least one feedback term • For all sessions, 56% involved some degree of query refinement • Within this subset, use of the feedback terms was 25% • By user id, ~16% of users applied feedback terms at least once on any given day • Looking at a 2-week session of feedback users: • Of the 2,318 users who used it once, 47% used it again in the same 2-week window. • Comparison was also done to a baseline group that was not offered feedback terms. • Both groups ended up making a page-selection click at the same rate. Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.
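The 25% figure follows from the two percentages before it. A quick check, assuming every session that used a feedback term also counts as a refinement session (variable names are illustrative):

```python
share_using_feedback = 0.14   # share of all sessions that used a feedback term
share_refining = 0.56         # share of all sessions with any query refinement

# Feedback use as a share of the refinement sessions only.
feedback_share_among_refiners = share_using_feedback / share_refining
```

So about one in four sessions that refined a query did so with a suggested term, and the remaining refinements were typed by hand.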

Query Reformulation Study • Other observations • Users prefer refinements that contain the initial query terms • Presentation order does have an influence on term uptake Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Query Reformulation Study • Types of refinements Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Prognosis: Query Reformulation • Researchers have always known it can be helpful, but the methods proposed for user interaction were too cumbersome • Had to select many documents and then do feedback • Had to select many terms • Was based on statistical ranking methods which are hard for people to understand • Indirect Relevance Feedback can improve general ranking (see section on social search)

The Need to Group • Interviews with lay users often reveal a desire for better organization of retrieval results • Useful for suggesting where to look next • People prefer links over generating search terms* • But only when the links are for what they want *Ojakaar and Spool, Users Continue After Category Links, UIETips Newsletter, http://world.std.com/~uieweb/Articles/, 2001