Reward processing (1)

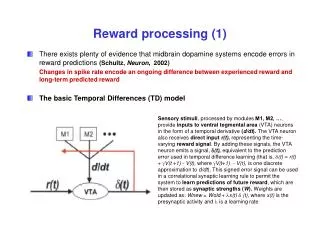

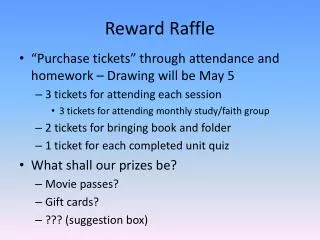

Reward processing (1). There exists plenty of evidence that midbrain dopamine systems encode errors in reward predictions (Schultz, Neuron , 2002) Changes in spike rate encode an ongoing difference between experienced reward and long-term predicted reward

Reward processing (1)

E N D

Presentation Transcript

Reward processing (1) • There exists plenty of evidence that midbrain dopamine systems encode errors in reward predictions (Schultz, Neuron, 2002) Changes in spike rate encode an ongoing difference between experienced reward and long-term predicted reward • The basic Temporal Differences (TD) model Sensory stimuli, processed by modules M1, M2, …, provide inputs to ventral tegmental area (VTA) neurons in the form of a temporal derivative (d/dt). The VTA neuron also receives direct input r(t), representing the time-varying reward signal. By adding these signals, the VTA neuron emits a signal, (t), equivalent to the prediction error used in temporal difference learning (that is, (t) = r(t) + V(t +1) - V(t), where V(t+1) - V(t), is one discrete approximation to d/dt). This signed error signal can be used in a correlational synaptic learning rule to permit the system to learn predictions of future reward, which are then stored as synaptic strengths (W). Weights are updated as: Wnew = Wold + x(t) (t), where x(t) is the presynaptic activity and is a learning rate

Reward processing (2) • The reward prediction error signal, encoded as phasic changes in dopamine delivery, is suspected to directly bias action selection. (Ej.: there are heavy dopaminergic projections to neural targets like the dorsal striatum thought to participate in the sequencing and selection of motor action.) (A) Action selection (decision making) model that uses a reward prediction error to bias action choice. Two actions, 1 and 2, are available to the system, and in analogy with reward predictions learned for sensory cues, each action has an associated weight that is modified using the prediction error signal. The weights are used as a drive or incentive to take one of the two available actions. (B) Once an action is selected and an immediate reward received, the associated weight is updated.

From reward signals to valuation signals (1) • “The way the brain encodes “predictions” and “expectancy” is still an open question.” (Engel, Fries, & Singer, Nature Reviews Neuroscience, 2001) • Montague & Berns (Neuron, 2002) suggest that the orbitofrontal system (OFS) computes an ongoing valuation of rewards, punishments, and their predictors. By providing a common valuation scale for diverse stimuli, this system emits a signal useful for comparing the value of future events: a signal required for decision-making algorithms. • Any valuation scheme for reward predictors must take two important principles into account: • A dynamical estimate of the future cannot be exact. As time passes, the uncertainty (error) in the estimate will accumulate. • The value of a fixed return diminishes as a function of the time to payoff, that is, the time from now until the reward arrives.

From reward signals to valuation signals (2) • A diffusion approach is suggested to describe the accumulation of uncertainty with time in a future reward: • Let n be “now”, x a time in the future. Let be the estimated value of the reward in time x. A new estimation, adjusted to account for the time uncertainty would be: where and D is a constant. • An exponential decrease of the value of a reward predictor with time is proposed. where qis the probability per unit time that an event occurs in Δtthat is more valuable than the current value of the reward predictor.

From reward signals to valuation signals (3) • The scheme that combines these two principles is called the predictor-valuation model. This model, with its “stay-on-a-choice-or-switch” argument results in a procedure for continuously deciding whether the current value of a predictor is worth continued processing (investment). A hypothesis is that the orbito-frontal cortex and striatum are the likely sites to participate in such an important valuation function.

Reward signals and top-down processing • “Reward signals are thought to gate learning processes that optimize functional connections between prefrontal [cortex] and lower-order sensorimotor [neural] assemblies.” (Engel, Fries, & Singer, Nature Reviews Neuroscience, 2001)

Reward processing • There exists plenty of evidence that midbrain dopamine systems encode errors in reward predictions (Schultz, Neuron, 2002) Changes in spike rate encode an ongoing difference between experienced reward and long-term predicted reward • The reward prediction error signal, encoded as phasic changes in dopamine delivery, is suspected to directly bias action selection. (Ej.: there are heavy dopaminergic projections to neural targets like the dorsal striatum thought to participate in the sequencing and selection of motor action.) • Montague & Berns (Neuron, 2002) suggest that the orbitofrontal system (OFS) computes an ongoing valuation of rewards, punishments, and their predictors. By providing a common valuation scale for diverse stimuli, this system emits a signal useful for comparing the value of future events: a signal required for decision-making algorithms. • “Reward signals are thought to gate learning processes that optimize functional connections between prefrontal [cortex] and lower-order sensorimotor [neural] assemblies.” (Engel, Fries, & Singer, Nature Reviews Neuroscience, 2001)

Top-Down Influences: Attention, Memory Sensory processing STIMULI REWARD PREDICTION ERROR VENTRAL TEGMENTAL AREA (VTA) BEHAVIOUR UPDATING B E H A V I O U R REWARD PROXIES (PREDICTORS) Continuous Prediction Valuation REWARD I N N E R W O R L D O U T E R W O R L D Reward processing

Reward processing (References) • Schultz, W., (2002). Getting formal with dopamine and reward. Neuron, Vol.36, Oct., 241–263. • Montague, P.R., and Berns, G.S. (2002). Neural economics and biological substrates of valuation. Neuron Vol.36, 265–284. • Usher, M. and McClelland, J.L., (2001).The time course of perceptual choice: The leaky, competing accumulator model. Psychological Review, Vol.108, 550-592. • Engel, A.K., Fries, P., and Singer, W. (2001). Dynamic predictions: oscillations and synchrony in top-down processing. Nature Reviews Neuroscience, Vol.2, 704-716. • Fiorillo CD, Tobler PN, Schultz W. (2003) Discrete Coding of Reward Probability and Uncertainty by Dopamine Neurons. Science, Vol.299, 21March. • Breiter, H.C., Aharon, I., Kahneman, D., Dale, A., and Shizgal, P. (2001). Functional imaging of neural responses to expectancy andexperience of monetary gains and losses. Neuron, Vol.30, 619–639. • Montague, P.R., and Sejnowksi, T.J. (1994). The predictive brain: temporal coincidence and temporal order in synaptic learning mechanisms. Learning & Memory, Vol.1, 1–33 • O'Doherty,J.P., Dayan, P., Friston, K., Critchley, H., and Dolan, R.J. (2003) Temporal Difference Models and Reward-Related Learning in the Human Brain, Neuron, Vol. 38, 329–337, April 24