Advanced Coarse-Grained Coherence Techniques for Multiprocessor Systems

This presentation by Mikko H. Lipasti of the University of Wisconsin-Madison covers coarse-grained coherence techniques for multiprocessor systems. After outlining the difficulty of keeping caches coherent, it describes Broadcast Snoop Reduction and Stealth Prefetching, which cut unnecessary broadcasts and optimize memory access. The techniques aim to improve performance and power efficiency by reducing latency and snoop activity, and they rely on structures such as Region Coherence Arrays to track coherence at a larger granularity.

Presentation Transcript

Coarse-Grained Coherence • Mikko H. Lipasti, Associate Professor, Electrical and Computer Engineering, University of Wisconsin – Madison • Joint work with: Jason Cantin, IBM (Ph.D. ’06); Natalie Enright Jerger; Prof. Jim Smith; Prof. Li-Shiuan Peh (Princeton) • http://www.ece.wisc.edu/~pharm

Motivation • Multiprocessors are commonplace • Historically, glass house servers • Now laptops, soon cell phones • Most common multiprocessor • Symmetric processors w/coherent caches • Logical extension of time-shared uniprocessors • Easy to program, reason about • Not so easy to build Mikko Lipasti-University of Wisconsin

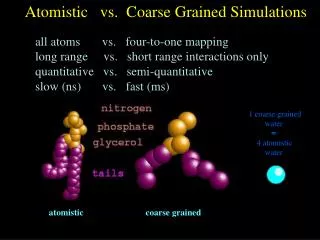

Coherence Granularity • Track each individual word • Too much overhead • Track larger blocks • 32B – 128B common • Less overhead, exploit spatial locality • Large blocks cause false sharing • Solution: use multiple granularities • Small blocks: manage local read/write permissions • Large blocks: track global behavior • [Diagram labels: P0–P7]

Coarse-Grained Coherence • Initially • Identify non-shared regions • Decouple obtaining coherence permission from data transfer • Filter snoops to reduce broadcast bandwidth • Later • Enable aggressive prefetching • Optimize DRAM accesses • Customize protocol, interconnect to match

Coarse-Grained Coherence • Optimizations lead to • Reduced memory miss latency • Reduced cache-to-cache miss latency • Reduced snoop bandwidth • Fewer exposed cache misses • Elimination of unnecessary DRAM reads • Power savings on bus, interconnect, caches, and in DRAM • World peace and end to global warming

Coarse-Grained Coherence Tracking • Memory is divided into coarse-grained regions • Aligned, power-of-two multiple of cache line size • Can range from two lines to a physical page • A cache-like structure is added to each processor for monitoring coherence at the granularity of regions • Region Coherence Array (RCA)
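The region definition above can be illustrated with a little address arithmetic. This is a minimal sketch, not the talk's implementation: the 64B line size matches the simulated system described later in the talk, while the 16-lines-per-region figure and the function names are assumptions chosen for illustration.

```python
LINE_BYTES = 64          # cache line size (the simulated system uses 64B lines)
LINES_PER_REGION = 16    # assumed power-of-two multiple, giving 1KB regions

REGION_BYTES = LINE_BYTES * LINES_PER_REGION


def region_base(addr: int) -> int:
    """Return the aligned base address of the region containing addr."""
    # Regions are aligned and power-of-two sized, so masking the low bits suffices.
    return addr & ~(REGION_BYTES - 1)


def line_in_region(addr: int) -> int:
    """Return the index of addr's cache line within its region."""
    return (addr % REGION_BYTES) // LINE_BYTES


if __name__ == "__main__":
    addr = 0x12345
    print(hex(region_base(addr)), line_in_region(addr))
```

Because regions are aligned powers of two, the region tag is just the high-order address bits, which keeps an RCA lookup as cheap as a cache tag lookup.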

Region Coherence Arrays • Each entry has an address tag, state, and count of lines cached by the processor • The region state indicates if the processor and / or other processors are sharing / modifying lines in the region • Customize policy/protocol/interconnect to exploit region state
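The entry layout described above (tag, state, line count) can be sketched as a small data structure. The state names below are a hypothetical, simplified set chosen for illustration; the talk does not enumerate the actual region states. Using the line count to decide whether an entry can be replaced cheaply is likewise an assumption about one plausible use of the count.

```python
from dataclasses import dataclass
from enum import Enum


class RegionState(Enum):
    # Hypothetical, simplified state set (not taken from the talk).
    INVALID = 0            # nothing known about the region
    NON_SHARED = 1         # no other processor caches lines of the region
    EXTERNALLY_CLEAN = 2   # other processors may hold unmodified lines
    EXTERNALLY_DIRTY = 3   # other processors may hold modified lines


@dataclass
class RCAEntry:
    tag: int                                  # region address tag
    state: RegionState = RegionState.INVALID  # region coherence state
    line_count: int = 0                       # lines of this region cached locally

    def line_allocated(self) -> None:
        """Call when a line from this region is brought into the cache."""
        self.line_count += 1

    def line_evicted(self) -> None:
        """Call when a line from this region leaves the cache."""
        if self.line_count == 0:
            raise ValueError("no cached lines to evict")
        self.line_count -= 1

    def is_empty(self) -> bool:
        """True when the processor caches no lines from this region."""
        return self.line_count == 0
```

An empty entry is a natural replacement victim, since dropping it does not require removing any cached lines.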

Talk Outline • Motivation • Overview of Coarse-Grained Coherence • Techniques • Broadcast Snoop Reduction [ISCA 2005] • Stealth Prefetching [ASPLOS 2006] • Power-Efficient DRAM Speculation • Hybrid Circuit Switching • Virtual Proximity • Circuit-switched snooping • Research Group Overview

Unnecessary Broadcasts

Broadcast Snoop Reduction • Identify requests that don’t need a broadcast • Send data requests directly to memory w/o broadcasting • Reducing broadcast traffic • Reducing memory latency • Avoid sending non-data requests externally
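The filtering decision above can be sketched as a function of the region state. This is a simplified illustration under assumed state names (the talk does not list them), not the protocol from the ISCA 2005 paper: a request skips the broadcast when the region state already guarantees that no other cache needs to see it.

```python
from enum import Enum


class RegionState(Enum):
    # Hypothetical, simplified state set (not taken from the talk).
    INVALID = 0
    NON_SHARED = 1
    EXTERNALLY_CLEAN = 2
    EXTERNALLY_DIRTY = 3


def needs_broadcast(state: RegionState, is_write: bool) -> bool:
    """Decide whether a request must be broadcast to other processors."""
    if state is RegionState.NON_SHARED:
        # No other processor caches lines of the region: go straight to memory.
        return False
    if state is RegionState.EXTERNALLY_CLEAN and not is_write:
        # Other copies are unmodified, so memory holds valid data for a read.
        return False
    # Unknown region state, possible dirty copies elsewhere, or a write
    # that must invalidate other copies: broadcast as usual.
    return True
```

In this sketch the first request to a region always broadcasts, because its snoop response is what establishes the region state that later requests rely on.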

Simulator Evaluation • PHARMsim: near-RTL but written in C • Execution-driven simulator built on top of SimOS-PPC • Four 4-way superscalar out-of-order processors • Two-level hierarchy with split L1, unified 1MB L2 caches, and 64B lines • Separate address / data networks, similar to Sun Fireplane

Workloads • Scientific • Ocean, Raytrace, Barnes • Multiprogrammed • SPECint2000_rate, SPECint95_rate • Commercial (database, web) • TPC-W, TPC-B, TPC-H • SPECweb99, SPECjbb2000

Broadcasts Avoided

Execution Time

Summary • Eliminates nearly all unnecessary broadcasts • Reduces snoop activity by 65% • Fewer broadcasts • Fewer lookups • Provides modest speedup

Prefetching in Multiprocessors • Prefetching • Anticipate future reference, fetch into cache • Many prefetching heuristics possible • Current systems: next-block, stride • Proposed: skip pointer, content-based • Some/many prefetched blocks are not used • Multiprocessor complications • Premature or unnecessary prefetches • Permission thrashing if blocks are shared • Separate study [ISPASS 2006]

Stealth Prefetching • Lines from non-shared regions can be prefetched stealthily and efficiently • Without disturbing other processors • Without downgrades, invalidations • Without preventing them from obtaining exclusive copies • Without broadcasting prefetch requests • Fetched from DRAM with low overhead

Stealth Prefetching • After a threshold number of L2 misses (2), the rest of the lines from a region are prefetched • These lines are buffered close to the processor for later use (Stealth Data Prefetch Buffer) • After accessing the RCA, requests may obtain data from the buffer as they would from memory • To access data, the region must be in a valid state and a broadcast must be unnecessary for coherent access
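The trigger described above can be sketched as a small model. Only the miss threshold of 2 comes from the slide; the class name, the 16-line region size, and the `non_shared` flag (standing in for an RCA lookup that says no broadcast is needed) are assumptions made for illustration.

```python
LINES_PER_REGION = 16   # assumed region size in lines
MISS_THRESHOLD = 2      # L2 misses to a region before prefetching (from the slide)


class StealthPrefetcher:
    """Toy model of the prefetch trigger and the stealth data prefetch buffer."""

    def __init__(self) -> None:
        self.missed = {}         # region id -> set of line indices that missed
        self.prefetched = set()  # regions whose remaining lines were fetched
        self.buffer = set()      # (region, line) pairs held in the buffer

    def on_l2_miss(self, region: int, line: int, non_shared: bool) -> list:
        """Record a demand miss; return the line indices prefetched, if any."""
        lines = self.missed.setdefault(region, set())
        lines.add(line)
        if (not non_shared or region in self.prefetched
                or len(lines) < MISS_THRESHOLD):
            return []
        # Fetch every line of the region that has not already been demanded.
        to_fetch = [i for i in range(LINES_PER_REGION) if i not in lines]
        self.buffer.update((region, i) for i in to_fetch)
        self.prefetched.add(region)
        return to_fetch

    def hit_in_buffer(self, region: int, line: int, non_shared: bool) -> bool:
        """Buffered data is usable only while no broadcast is required."""
        return non_shared and (region, line) in self.buffer
```

For example, misses to lines 0 and 3 of a non-shared region cause the other 14 lines to be buffered, and a later request to line 5 can be served from the buffer without any broadcast.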

L2 Misses Prefetched

Speedup

Summary • Stealth Prefetching can prefetch data: • Stealthily: • Only non-shared data prefetched • Prefetch requests not broadcast • Aggressively: • Large regions prefetched at once, 80-90% timely • Efficiently: • Piggybacked onto a demand request • Fetched from DRAM in open-page mode

Power-Efficient DRAM Speculation • Modern systems overlap the DRAM access with the snoop, speculatively accessing DRAM before snoop response • Trading DRAM bandwidth for latency • Wasting power • Approximately 25% of DRAM requests are reads that speculatively access DRAM unnecessarily • [Timeline diagram labels: Broadcast Req, Snoop Tags, Send Resp; DRAM Read, Xmit Block]

DRAM Operations

Power-Efficient DRAM Speculation • Direct memory requests are non-speculative • Lines from externally-dirty regions likely to be sourced from another processor’s cache • Region state can serve as a prediction • Need not access DRAM speculatively • Initial requests to a region (state unknown) have a lower but significant probability of obtaining data from other processors’ caches
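Using the region state as a prediction can be sketched as a simple policy function for read requests. The state names and the returned labels are illustrative assumptions. The slide does not say how requests to regions of unknown state are handled, so that choice is exposed as a parameter rather than fixed.

```python
from enum import Enum


class RegionState(Enum):
    # Hypothetical, simplified state set (not taken from the talk).
    UNKNOWN = 0            # first request to the region
    NON_SHARED = 1
    EXTERNALLY_CLEAN = 2
    EXTERNALLY_DIRTY = 3


def dram_read_policy(state: RegionState, speculate_on_unknown: bool = True) -> str:
    """Choose how a read request should treat the DRAM access."""
    if state in (RegionState.NON_SHARED, RegionState.EXTERNALLY_CLEAN):
        # Memory holds valid data; a direct request is non-speculative.
        return "direct"
    if state is RegionState.EXTERNALLY_DIRTY:
        # Data will likely come from another cache: wait for the snoop response.
        return "delay"
    # Unknown state: data may or may not come from another processor's cache.
    return "speculate" if speculate_on_unknown else "delay"
```

Delaying the DRAM read costs latency only when the prediction is wrong and memory has to supply the data after all.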

Useless DRAM Reads

Useful DRAM Reads

DRAM Reads Performed/Delayed

Summary • Power-Efficient DRAM Speculation: • Can reduce DRAM reads by 20%, with less than 1% degradation in performance • 7% slowdown with nonspeculative DRAM • Nearly doubles interval between DRAM requests, allowing modules to stay in low-power modes longer

Chip Multiprocessor Interconnect • Options • Buses: don’t scale • Crossbars: too expensive • Rings: too slow • Packet-switched mesh • Attractive for all the same 1990’s DSM reasons • Scalable • Low latency • High link utilization

CMP Interconnection Networks • But… • Cables/traces are now on-chip wires • Fast, cheap, plentiful • Short: 1 cycle per hop • Router latency adds up • 3-4 cycles per hop • Store-and-forward • Lots of activity/power • Is this the right answer?

Circuit-Switched Interconnects • Communication patterns • Spatial locality to memory • Pairwise communication • Circuit-switched links • Avoid switching/routing • Reduce latency • Save power? • Poor utilization! Maybe OK

Router Design • Switches consist of • Configurable crossbar • Configuration memory • 4-stage router pipeline exposes only 1 cycle if circuit-switched (CS) • Can also act as packet-switched network • Design details in [CA Letters ’07]
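The latency argument can be made concrete with back-of-the-envelope arithmetic. The 4-stage pipeline, the single exposed cycle on a circuit, and the 1-cycle wire hop come from the slides; treating the wire cycle as additional to the router cycles, and ignoring contention and circuit setup, are simplifying assumptions of this sketch.

```python
ROUTER_STAGES = 4     # packet-switched router pipeline depth (from the slide)
CS_ROUTER_CYCLES = 1  # cycles exposed per router on an established circuit
WIRE_CYCLES = 1       # link traversal per hop ("1 cycle per hop")


def path_latency(hops: int, circuit_switched: bool) -> int:
    """Cycles to traverse `hops` hops under a contention-free model."""
    per_router = CS_ROUTER_CYCLES if circuit_switched else ROUTER_STAGES
    return hops * (per_router + WIRE_CYCLES)


if __name__ == "__main__":
    for hops in (1, 3, 6):
        print(hops, path_latency(hops, False), path_latency(hops, True))
```

Under these assumptions a 6-hop path takes 30 cycles packet-switched and 12 cycles on an established circuit, and the gap grows linearly with distance.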

Protocol Optimization • Initial 3-hop miss establishes CS path • Subsequent miss requests • Sent directly on CS path to predicted owner • Also in parallel to home node • Predicted owner sources data early • Directory acks update to sharing list • Benefits • Reduced 3-hop latency • Less activity, less power

Hybrid Circuit Switching (1) • Hybrid Circuit Switching improves performance by up to 7%

Hybrid Circuit Switching (2) • Positive interaction in co-designed interconnect & protocol • More circuit reuse => greater latency benefit

Summary • Hybrid Circuit Switching: • Routing overhead eliminated • Still enables high bandwidth when needed • Co-designed protocol • Optimize cache-to-cache transfers • Substantial performance benefits • To do: power analysis

Server Consolidation on CMPs • CMP as consolidation platform • Simplify system administration • Save power, cost and physical infrastructure • Study combinations of individual workloads in full system environment • Micro-coded hypervisor schedules VMs • See “An Evaluation of Server Consolidation Workloads for Multi-Core Designs” in IISWC 2007 for additional details • Nugget: shared LLC a big win

Virtual Proximity • Interactions between VM scheduling, placement, and interconnect • Goal: placement agnostic scheduling • Best workload balance • Evaluate 3 scheduling policies • Gang, Affinity and Load Balanced • HCS provides virtual proximity

Scheduling Algorithms • Gang Scheduling • Co-schedules all threads of a VM • No idle-cycle stealing • Affinity Scheduling • VMs assigned to neighboring cores • Can steal idle cycles across VMs sharing core • Load Balanced Scheduling • Ready threads assigned to any core • Any/all VMs can steal idle cycles • Over time, VM fragments across chip

Load balancing wins with fast interconnect • Affinity scheduling wins with slow interconnect • HCS creates virtual proximity

Virtual Proximity Performance • HCS able to provide virtual proximity

As physical distance (hop count) increases, HCS provides significantly lower latency

Summary • Virtual Proximity [in submission] • Enables placement agnostic hypervisor scheduler • Results: • Up to 17% better than affinity scheduling • Idle cycle reduction: 84% over gang and 41% over affinity • Low-latency interconnect mitigates increase in L2 cache conflicts from load balancing • L2 misses up by 10% but execution time reduced by 11% • A flexible, distributed address mapping combined with HCS outperforms a localized affinity-based memory mapping by an average of 7%

Circuit-Switched Snooping (1) • Scalable, efficient broadcasting on unordered network • Remove latency overhead of directory indirection • Extend point-to-point circuit-switched links to trees • Low latency multicast via circuit-switched tree • Help provide performance isolation as requests do not share same communication medium

Circuit-Switched Snooping (2) • Extend Coarse Grain Coherence Tracking (CGCT) • Remove unnecessary broadcasts • Convert broadcasts to multicasts • Effective in Server Consolidation Workloads • Very few coherence requests to globally shared data