Importance Sampling

Importance Sampling ICS 276 Fall 2007 Rina Dechter

Outline • Gibbs Sampling • Advances in Gibbs sampling • Blocking • Cutset sampling (Rao-Blackwellisation) • Importance Sampling • Advances in Importance Sampling • Particle Filtering

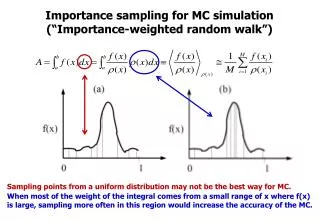

Importance Sampling Theory • Given a distribution Q, called the proposal distribution, such that P(Z=z, e) > 0 ⇒ Q(Z=z) > 0 • The ratio w(Z=z) = P(Z=z, e) / Q(Z=z) is called the importance weight
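The role of the importance weight can be seen from the standard identity below, written here as a sketch. The sum runs over assignments z to the non-evidence variables Z, and z^(1), ..., z^(N) denote N samples drawn from Q; this sample notation is an assumption and does not come from the slide.

```latex
\begin{align*}
P(e) &= \sum_{z} P(z, e)
      = \sum_{z} \frac{P(z, e)}{Q(z)}\, Q(z)
      = \mathbb{E}_{Q}\big[ w(Z) \big],
\qquad w(z) = \frac{P(z, e)}{Q(z)}, \\
\hat{P}(e) &= \frac{1}{N} \sum_{j=1}^{N} w\big(z^{(j)}\big),
\qquad z^{(j)} \sim Q .
\end{align*}
```

The condition P(Z=z, e) > 0 ⇒ Q(Z=z) > 0 is what allows dividing by Q(z) for every term that contributes to the sum.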

Importance Sampling Theory • Underlying principle: approximate the average over a set of numbers by the average over a sampled subset of those numbers

Importance Sampling (Informally) • Express the problem as computing the average over a set of real numbers • Sample a subset of the real numbers • Approximate the true average by the sample average • True average: average of (0.11, 0.24, 0.55, 0.77, 0.88, 0.99) = 0.59 • Sample average over 2 samples: average of (0.24, 0.77) = 0.505
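The arithmetic on this slide can be checked with a short Python sketch. The helper names, the seed, and the choice to draw subsets uniformly without replacement are assumptions made for illustration only.

```python
import random

values = [0.11, 0.24, 0.55, 0.77, 0.88, 0.99]


def average(xs):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(xs) / len(xs)


def sample_average(xs, n, rng):
    """Average of n values drawn uniformly at random without replacement."""
    return average(rng.sample(xs, n))


true_avg = average(values)           # the slide's true average, 0.59
slide_avg = average([0.24, 0.77])    # the slide's two-sample subset, 0.505

# A single two-sample average can be far off, but averaging many such
# estimates lands close to the true average (the estimator is unbiased).
rng = random.Random(0)               # fixed seed, an arbitrary choice
estimates = [sample_average(values, 2, rng) for _ in range(10000)]
mean_of_estimates = average(estimates)
```

Here the subset is drawn uniformly; importance sampling replaces the uniform draw with a proposal distribution Q and corrects for it with weights.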

How to generate samples from Q • Express Q in product form: • Q(Z) = Q(Z1) Q(Z2|Z1) ... Q(Zn|Z1,...,Zn-1) • Sample along the order Z1,...,Zn • Example: • Q(Z1) = (0.2, 0.8) • Q(Z2|Z1) = (0.2, 0.8, 0.1, 0.9) • Q(Z3|Z1,Z2) = Q(Z3|Z1) = (0.5, 0.5, 0.3, 0.7)

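How to sample from Q • Q(Z1) = (0.2, 0.8) • Q(Z2|Z1) = (0.2, 0.8, 0.1, 0.9) • Q(Z3|Z1,Z2) = Q(Z3|Z1) = (0.5, 0.5, 0.3, 0.7) • The domain of each variable is {0,1} • Generate a random number between 0 and 1 • Which value to select for Z1? • [Figure: the interval from 0 to 1, split at 0.2, with the two segments labeled by the values of Z1]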

How to sample from Q? • Each Sample Z=z • Sample Z1=z1 from Q(Z1) • Sample Z2=z2 from Q(Z2|Z1=z1) • Sample Z3=z3 from Q(Z3|Z1=z1) • Generate N such samples
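The sampling steps above can be sketched in Python as follows. The slides do not say how the table entries are ordered, so this sketch assumes each pair lists (probability of value 0, probability of value 1) and that conditional tables list the Z1=0 row first; the function names are also hypothetical.

```python
import random

# Assumed layout: (Q(var=0), Q(var=1)); conditional tables keyed by Z1's value.
Q_Z1 = (0.2, 0.8)
Q_Z2_GIVEN_Z1 = {0: (0.2, 0.8), 1: (0.1, 0.9)}
Q_Z3_GIVEN_Z1 = {0: (0.5, 0.5), 1: (0.3, 0.7)}


def sample_binary(dist, rng):
    """Draw u uniformly from [0, 1); return 0 if u < dist[0], otherwise 1."""
    return 0 if rng.random() < dist[0] else 1


def sample_from_q(rng):
    """Sample (z1, z2, z3) along the order Z1, Z2, Z3."""
    z1 = sample_binary(Q_Z1, rng)
    z2 = sample_binary(Q_Z2_GIVEN_Z1[z1], rng)
    z3 = sample_binary(Q_Z3_GIVEN_Z1[z1], rng)
    return (z1, z2, z3)


def q_probability(z):
    """Q(z) as the product of the three factors."""
    z1, z2, z3 = z
    return Q_Z1[z1] * Q_Z2_GIVEN_Z1[z1][z2] * Q_Z3_GIVEN_Z1[z1][z3]


def generate_samples(n, seed=0):
    """Generate n independent samples from Q."""
    rng = random.Random(seed)
    return [sample_from_q(rng) for _ in range(n)]
```

Under the assumed layout, a random number below 0.2 selects Z1=0 and anything else selects Z1=1; `q_probability` returns the value of Q(z) needed later as the denominator of the importance weight.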

Likelihood weighting • Q = prior distribution = the CPTs of the Bayesian network

Likelihood weighting example • Network nodes and CPTs: Smoking (S): P(S) • lung Cancer (C): P(C|S) • Bronchitis (B): P(B|S) • X-ray (X): P(X|C,S) • Dyspnoea (D): P(D|C,B) • P(S, C, B, X, D) = P(S) P(C|S) P(B|S) P(X|C,S) P(D|C,B)

Likelihood weighting example • Network nodes and CPTs: Smoking (S): P(S) • lung Cancer (C): P(C|S) • Bronchitis (B): P(B|S) • X-ray (X): P(X|C,S) • Dyspnoea (D): P(D|C,B) • Q = prior: Q(S,C,D) = Q(S) Q(C|S) Q(D|C,B=0) = P(S) P(C|S) P(D|C,B=0) • Sample S=s from P(S) • Sample C=c from P(C|S=s) • Sample D=d from P(D|C=c,B=0)

Difference between estimating P(E=e) and P(Xi=xi|E=e) • The estimate of P(E=e) is unbiased • The estimate of P(Xi=xi|E=e) is only asymptotically unbiased
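The two estimators are commonly written as below. This is a sketch in assumed notation: z^(1), ..., z^(N) are samples from Q, w is the importance weight, and the indicator is 1 when sample z^(j) assigns Xi = xi and 0 otherwise. The second estimator is a ratio of two sample averages, which is why it is unbiased only in the limit.

```latex
\begin{align*}
\hat{P}(e) &= \frac{1}{N}\sum_{j=1}^{N} w\big(z^{(j)}\big),
& \mathbb{E}_{Q}\big[\hat{P}(e)\big] &= P(e), \\
\hat{P}(x_i \mid e) &=
  \frac{\sum_{j=1}^{N} w\big(z^{(j)}\big)\,\mathbb{1}\big[z^{(j)} \text{ assigns } X_i = x_i\big]}
       {\sum_{j=1}^{N} w\big(z^{(j)}\big)},
& \hat{P}(x_i \mid e) &\xrightarrow[N \to \infty]{} P(x_i \mid e).
\end{align*}
```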

Research Issues in Importance Sampling • Better proposal distribution: • Likelihood weighting (Fung and Chang, 1990; Shachter and Peot, 1990) • AIS-BN (Cheng and Druzdzel, 2000) • Iterative Belief Propagation (Yuan and Druzdzel, 2003) • Iterative Join Graph Propagation and variable ordering (Gogate and Dechter, 2005)

Research Issues in Importance Sampling (Cheng and Druzdzel 2000) • Adaptive Importance Sampling

Adaptive Importance Sampling • General case: • Given k proposal distributions • Draw N samples from each distribution • Approximate P(e)
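The general case can be illustrated with the Python sketch below. The slide's formula for combining the k estimates is not reproduced in the transcript, so this sketch assumes a plain unweighted average of the per-proposal estimates; the target values and the three proposals are hypothetical, and the proposals are fixed here rather than adapted between rounds.

```python
import random

# Hypothetical target: JOINT[z] plays the role of P(Z=z, e); P(e) is their sum.
JOINT = {0: 0.02, 1: 0.10, 2: 0.18}

# Three hypothetical proposal distributions over the same domain (k = 3).
PROPOSALS = [
    {0: 1 / 3, 1: 1 / 3, 2: 1 / 3},
    {0: 0.1, 1: 0.3, 2: 0.6},
    {0: 0.5, 1: 0.25, 2: 0.25},
]


def draw(dist, rng):
    """Draw one value from a discrete distribution given as {value: prob}."""
    values = list(dist)
    return rng.choices(values, weights=[dist[v] for v in values])[0]


def estimate_with_proposal(q, n, rng):
    """Average importance weight JOINT[z] / q[z] over n samples from q."""
    total = 0.0
    for _ in range(n):
        z = draw(q, rng)
        total += JOINT[z] / q[z]
    return total / n


def estimate_p_evidence(proposals, n, seed=0):
    """Unweighted average of the k per-proposal estimates (an assumption)."""
    rng = random.Random(seed)
    per_proposal = [estimate_with_proposal(q, n, rng) for q in proposals]
    return sum(per_proposal) / len(per_proposal), per_proposal
```

Each per-proposal estimate is unbiased for P(e), so their average is too; a weighted combination that favours lower-variance proposals is another possible design.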

Cutset importance sampling (Gogate and Dechter, 2005; Bidyuk and Dechter, 2006) • Divide the set of variables into two parts: • Cutset (C) • Remaining variables (R)

Dynamic Belief Networks (DBNs) • [Figure: the Bayesian network at time t (nodes Xt, Yt) linked by transition arcs to the Bayesian network at time t+1 (nodes Xt+1, Yt+1)] • [Figure: unrolled DBN for t=0 to t=10, with nodes X0, X1, X2, ..., X10 and Y0, Y1, Y2, ..., Y10]

Query • Compute P(X0:t | Y0:t) or P(Xt | Y0:t) • Example: P(X0:10 | Y0:10) or P(X10 | Y0:10) • Hard over a long time period • Approximate! Sample!

Particle Filtering (PF) • = “condensation” • = “sequential Monte Carlo” • = “survival of the fittest” • PF can handle any type of probability distribution, non-linearity, and non-stationarity • Particle filters are powerful sampling-based inference/learning algorithms for DBNs

Particle Filtering On white board
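Since the whiteboard material is not part of the transcript, the Python sketch below shows one common variant, a bootstrap particle filter, for estimating P(Xt | Y0:t) in a two-state DBN. It is not a record of what was presented; the model numbers and function names are hypothetical. Each step propagates particles through the transition model, weights them by the likelihood of the observation, and resamples in proportion to the weights.

```python
import random

# Hypothetical two-state model (not from the slides).
PRIOR = [0.5, 0.5]                       # P(X0 = x)
TRANSITION = [[0.9, 0.1], [0.2, 0.8]]    # TRANSITION[x][x2] = P(X_{t+1}=x2 | X_t=x)
EMISSION = [[0.8, 0.2], [0.3, 0.7]]      # EMISSION[x][y] = P(Y_t=y | X_t=x)


def draw(dist, rng):
    """Draw an index from a discrete distribution given as a list."""
    return rng.choices(range(len(dist)), weights=dist)[0]


def particle_filter(observations, n_particles, seed=0):
    """Return estimates of P(X_t = 1 | y_0, ..., y_t) for each t."""
    rng = random.Random(seed)
    particles = [draw(PRIOR, rng) for _ in range(n_particles)]
    estimates = []
    for t, y in enumerate(observations):
        if t > 0:
            # Propagate each particle through the transition model.
            particles = [draw(TRANSITION[x], rng) for x in particles]
        # Weight each particle by the likelihood of the observation.
        weights = [EMISSION[x][y] for x in particles]
        total = sum(weights)
        weight_on_1 = sum(w for x, w in zip(particles, weights) if x == 1)
        estimates.append(weight_on_1 / total)
        # Resample in proportion to the weights ("survival of the fittest").
        particles = rng.choices(particles, weights=weights, k=n_particles)
    return estimates


def exact_filter(observations):
    """Exact forward filtering, usable as a check on small models."""
    belief = PRIOR[:]
    result = []
    for t, y in enumerate(observations):
        if t > 0:
            belief = [sum(belief[x] * TRANSITION[x][x2] for x in (0, 1))
                      for x2 in (0, 1)]
        belief = [belief[x] * EMISSION[x][y] for x in (0, 1)]
        norm = sum(belief)
        belief = [b / norm for b in belief]
        result.append(belief[1])
    return result
```

On a model this small the exact filter is cheap, so `exact_filter` serves only as a reference; the particle filter becomes useful when the state space is too large to enumerate.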