Visual Illusions and Neural Networks

Explore how visual illusions trick perception and learn how neural networks function in interpreting patterns and achieving goals. Discover the models of neurons and artificial neural networks as explained in Lecture 3. Gain insights into linear separability and threshold logic units in neural networks.

Presentation Transcript

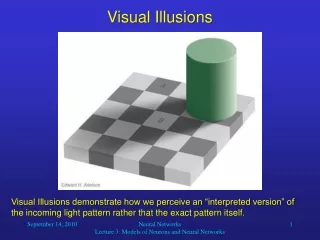

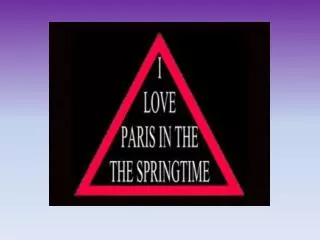

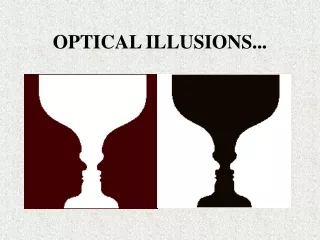

Visual Illusions
Visual illusions demonstrate how we perceive an “interpreted version” of the incoming light pattern rather than the exact pattern itself.
Neural Networks Lecture 3: Models of Neurons and Neural Networks

Visual Illusions
Here we see that the squares A and B from the previous image actually have the same luminance but are interpreted differently in their visual context.

How do NNs and ANNs work?
• NNs are able to learn by adapting their connectivity patterns so that the organism improves its behavior in terms of reaching certain (evolutionary) goals.
• The strength of a connection, or whether it is excitatory or inhibitory, depends on the state of a receiving neuron’s synapses.
• The NN achieves learning by appropriately adapting the states of its synapses.

An Artificial Neuron
[Figure: neuron $i$ receives the inputs $x_1, x_2, \dots, x_n$ through synapses with weights $W_{i,1}, W_{i,2}, \dots, W_{i,n}$. The weighted inputs form the net input signal, from which the neuron computes its output $x_i$.]

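The Activation Function
• One possible choice is a threshold function:
$$f_i(\mathrm{net}_i(t)) = \begin{cases} 1 & \text{if } \mathrm{net}_i(t) \ge \theta \\ 0 & \text{otherwise} \end{cases}$$
The graph of this function looks like this:
[Figure: step function plotting $f_i(\mathrm{net}_i(t))$ against $\mathrm{net}_i(t)$, jumping from 0 to 1.]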
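The threshold function can be sketched in a few lines of Python. This is a minimal illustration, assuming the neuron outputs 1 when the net input is at or above the threshold; the names `threshold_activation` and `theta` are hypothetical and do not come from the lecture.

```python
def threshold_activation(net: float, theta: float = 0.0) -> int:
    """Return 1 if the net input reaches the threshold theta, else 0.

    Assumption: a net input exactly equal to theta yields 1.
    """
    return 1 if net >= theta else 0


# Evaluate the step function at a few sample net inputs.
for net in (-1.0, 0.0, 0.5, 2.0):
    print(net, threshold_activation(net, theta=0.5))
```

Plotting the returned values against `net` would give the step shape described on the slide.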

Binary Analogy: Threshold Logic Units (TLUs)
TLUs in technical systems are similar to the threshold neuron model, except that TLUs only accept binary inputs (0 or 1).
Example: a TLU with inputs $x_1, x_2, x_3$, weights $w_1 = 1$, $w_2 = 1$, $w_3 = -1$, and threshold $\theta = 1.5$ computes $x_1 \wedge x_2 \wedge \neg x_3$.
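To see which Boolean function the example unit computes, its truth table can be enumerated. The sketch below reads the value 1.5 as the threshold (named `THETA` here, an assumption) and assumes the unit outputs 1 when the weighted sum is at or above it; the helper `tlu` is a hypothetical name.

```python
from itertools import product


def tlu(inputs, weights, theta):
    """Threshold logic unit: 1 if the weighted input sum reaches theta."""
    net = sum(w * x for w, x in zip(weights, inputs))
    return 1 if net >= theta else 0


WEIGHTS = (1, 1, -1)  # w1, w2, w3 from the example
THETA = 1.5           # assumed to be the threshold

# Evaluate the unit on all eight binary input combinations.
truth_table = {
    bits: tlu(bits, WEIGHTS, THETA) for bits in product((0, 1), repeat=3)
}
for bits, out in truth_table.items():
    print(bits, "->", out)
```

Under these assumptions the only input that produces 1 is x1 = 1, x2 = 1, x3 = 0, so the unit behaves like x1 AND x2 AND NOT x3.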

Binary Analogy: Threshold Logic Units (TLUs)
Yet another example: which weights $w_1$, $w_2$ and threshold $\theta$ make a single TLU with inputs $x_1$ and $x_2$ compute $x_1$ XOR $x_2$?
Impossible! TLUs can only realize linearly separable functions.
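A short derivation shows why no choice of parameters works. It assumes a two-input TLU that outputs 1 exactly when $w_1 x_1 + w_2 x_2 \ge \theta$; the symbol $\theta$ for the threshold is an assumption about the notation.

```latex
\begin{align*}
\text{XOR}(0,0) = 0 &\;\Rightarrow\; 0 < \theta \\
\text{XOR}(1,0) = 1 &\;\Rightarrow\; w_1 \ge \theta \\
\text{XOR}(0,1) = 1 &\;\Rightarrow\; w_2 \ge \theta \\
\text{XOR}(1,1) = 0 &\;\Rightarrow\; w_1 + w_2 < \theta
\end{align*}
% Adding the second and third conditions gives w_1 + w_2 >= 2*theta.
% Since theta > 0 by the first condition, 2*theta > theta, so
% w_1 + w_2 > theta, which contradicts the fourth condition.
```

The four conditions cannot hold at the same time, so a single unit of this kind cannot produce the XOR truth table.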

Linear Separability
• A function $f: \{0, 1\}^n \to \{0, 1\}$ is linearly separable if the space of input vectors yielding 1 can be separated from those yielding 0 by a linear surface (hyperplane) in n dimensions.
• Examples (two dimensions):
[Figure: two plots over the binary inputs $x_1$ and $x_2$, with each corner marked by its output. One plot is labeled "linearly separable", the other "linearly inseparable".]

Linear Separability
• To explain linear separability, let us consider the function $f: \mathbb{R}^n \to \{0, 1\}$ with
$$f(x_1, x_2, \dots, x_n) = \begin{cases} 1 & \text{if } \sum_{i=1}^{n} w_i x_i \ge \theta \\ 0 & \text{otherwise} \end{cases}$$
where $x_1, x_2, \dots, x_n$ represent real numbers. This is exactly the function that our threshold neurons use to compute their output from their inputs.

Linear Separability
Input space in the two-dimensional case (n = 2):
[Figure: three plots of the $(x_1, x_2)$ plane, each axis running from -3 to 3, each split by a straight line into a region of output 1 and a region of output 0. The panels use $w_1 = 1, w_2 = 2, \theta = 2$; $w_1 = -2, w_2 = 1, \theta = 2$; and $w_1 = -2, w_2 = 1, \theta = 1$.]
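The three parameter sets can be tried on a handful of points to see how each one splits the plane. This is a sketch under two assumptions: the third number in each set is the threshold, and the neuron outputs 1 when $w_1 x_1 + w_2 x_2$ is at or above it. The sample points and the name `threshold_neuron` are illustrative choices, not part of the lecture.

```python
def threshold_neuron(x, w, theta):
    """Return 1 if the weighted sum of x reaches theta, else 0."""
    net = sum(wi * xi for wi, xi in zip(w, x))
    return 1 if net >= theta else 0


# (weights, threshold) for the three panels; theta is an assumed reading.
PARAMETER_SETS = [((1, 2), 2), ((-2, 1), 2), ((-2, 1), 1)]

# Hypothetical sample points inside the plotted range of -3 to 3.
SAMPLE_POINTS = [(0, 0), (0, 1), (0, 2), (2, 0), (-1, 0), (-2, -1)]

results = {}
for w, theta in PARAMETER_SETS:
    results[(w, theta)] = [threshold_neuron(p, w, theta) for p in SAMPLE_POINTS]
    print("w =", w, "theta =", theta, "outputs =", results[(w, theta)])
```

In each case the boundary between the two regions is the straight line $w_1 x_1 + w_2 x_2 = \theta$. Changing only the threshold (second versus third set) shifts that line without rotating it.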

Linear Separability
• So by varying the weights and the threshold, we can realize any linear separation of the input space into a region that yields output 1, and another region that yields output 0.
• As we have seen, a two-dimensional input space can be divided by any straight line.
• A three-dimensional input space can be divided by any two-dimensional plane.
• In general, an n-dimensional input space can be divided by an (n-1)-dimensional plane or hyperplane.
• Of course, for n > 3 this is hard to visualize.

Linear Separability
• Of course, the same applies to our original function f of the TLU using binary input values.
• The only difference is the restriction in the input values.
• Obviously, we cannot find a straight line to realize the XOR function:
[Figure: the four binary input points in the $(x_1, x_2)$ plane. The points (0, 1) and (1, 0) yield output 1, while (0, 0) and (1, 1) yield output 0.]
In order to realize XOR with TLUs, we need to combine multiple TLUs into a network.
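One way to get a feel for this restriction is to list which two-input Boolean functions a single TLU can produce. The sketch below searches a small, hypothetical grid of weights and thresholds (`WEIGHT_CHOICES` and `THETA_CHOICES` are illustrative, not from the lecture) and assumes the unit outputs 1 when the weighted sum is at or above the threshold. A finite search illustrates the point but does not prove it.

```python
from itertools import product

# All binary input pairs in the order (0,0), (0,1), (1,0), (1,1).
INPUTS = list(product((0, 1), repeat=2))

# Hypothetical search grid for the parameters of a single TLU.
WEIGHT_CHOICES = (-1, 0, 1)
THETA_CHOICES = (-1.5, -0.5, 0.5, 1.5)


def tlu_truth_table(w1, w2, theta):
    """Outputs of one TLU on all four inputs, as a tuple."""
    return tuple(1 if w1 * x1 + w2 * x2 >= theta else 0 for x1, x2 in INPUTS)


# Collect every distinct truth table reachable on the grid.
realizable = {
    tlu_truth_table(w1, w2, theta)
    for w1, w2, theta in product(WEIGHT_CHOICES, WEIGHT_CHOICES, THETA_CHOICES)
}

XOR = (0, 1, 1, 0)
XNOR = (1, 0, 0, 1)
print(len(realizable), "of 16 functions found")
print("XOR found:", XOR in realizable, "| XNOR found:", XNOR in realizable)
```

On this grid the search reaches 14 of the 16 possible truth tables; the two it misses are XOR and its complement, which are the two functions that are not linearly separable.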

Multi-Layered XOR Network
[Figure: a multi-layered network of TLUs that computes $x_1$ XOR $x_2$ from the inputs $x_1$ and $x_2$. The labels that survive are connection weights of 1 and -1 and three thresholds of 0.5.]
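A network of this kind can be sketched as follows. The wiring is an assumption: it is one assignment that uses only weights of 1 and -1 and thresholds of 0.5, and it may differ from the exact layout of the slide. The names `tlu` and `xor_network` are hypothetical.

```python
def tlu(inputs, weights, theta):
    """Threshold logic unit: 1 if the weighted input sum reaches theta."""
    net = sum(w * x for w, x in zip(weights, inputs))
    return 1 if net >= theta else 0


def xor_network(x1, x2):
    """Two hidden TLUs feeding one output TLU (assumed wiring)."""
    h1 = tlu((x1, x2), (1, -1), 0.5)   # fires only for x1 = 1, x2 = 0
    h2 = tlu((x1, x2), (-1, 1), 0.5)   # fires only for x1 = 0, x2 = 1
    return tlu((h1, h2), (1, 1), 0.5)  # fires if either hidden unit fires


for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, "->", xor_network(x1, x2))
```

Each hidden unit realizes a linearly separable function on its own; the output unit then combines them, which is what a single TLU cannot do directly.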

Capabilities of Threshold Neurons
• What can threshold neurons do for us?
• To keep things simple, let us consider such a neuron with two inputs:
[Figure: neuron $i$ with inputs $x_1$ and $x_2$, weights $W_{i,1}$ and $W_{i,2}$, and output $x_i$.]
The computation of this neuron can be described as the inner product of the two-dimensional vectors $x$ and $w_i$, followed by a threshold operation.

The Net Input Signal
• The net input signal is the sum of all inputs after passing the synapses:
$$\mathrm{net}_i(t) = \sum_{j=1}^{n} w_{i,j}\, x_j(t)$$
This can be viewed as computing the inner product of the vectors $w_i$ and $x$:
$$\mathrm{net}_i(t) = \lVert w_i \rVert \cdot \lVert x \rVert \cdot \cos\varphi$$
where $\varphi$ is the angle between the two vectors.
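The two views of the net input, as a weighted sum and as a product of vector lengths and the cosine of the enclosed angle, can be compared numerically. The sketch below uses made-up two-dimensional vectors and measures the angle independently with `math.atan2`; the function names and the symbol `phi` are illustrative assumptions.

```python
import math


def net_input(w, x):
    """Weighted sum of the inputs, i.e. the inner product of w and x."""
    return sum(wj * xj for wj, xj in zip(w, x))


def norm(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(c * c for c in v))


# Hypothetical weight and input vectors (two-dimensional).
w = (2.0, 1.0)
x = (1.0, 3.0)

# Angle between the vectors, measured from their directions in the plane.
phi = math.atan2(x[1], x[0]) - math.atan2(w[1], w[0])

weighted_sum = net_input(w, x)
geometric = norm(w) * norm(x) * math.cos(phi)
print(weighted_sum, geometric)
```

Both expressions agree up to floating-point rounding, which is why the sign of the net input depends only on whether the angle between the two vectors is below or above 90 degrees.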

Capabilities of Threshold Neurons
• Let us assume that the threshold $\theta = 0$ and illustrate the function computed by the neuron for sample vectors $w_i$ and $x$:
[Figure: the vectors $w_i$ and $x$ plotted by first and second vector component, with a dotted line dividing the plane.]
Since the inner product is non-negative for $-90° \le \varphi \le 90°$, where $\varphi$ is the angle between $w_i$ and $x$, in this example the neuron’s output is 1 for any input vector $x$ to the right of or on the dotted line, and 0 for any other input vector.

Capabilities of Threshold Neurons
• By choosing appropriate weights $w_i$ and threshold $\theta$ we can place the line dividing the input space into regions of output 0 and output 1 in any position and orientation.
• Therefore, our threshold neuron can realize any linearly separable function $\mathbb{R}^n \to \{0, 1\}$.
• Although we only looked at two-dimensional input, our findings apply to any dimensionality n.
• For example, for n = 3, our neuron can realize any function that divides the three-dimensional input space along a two-dimensional plane.