Old Client Memory Issues

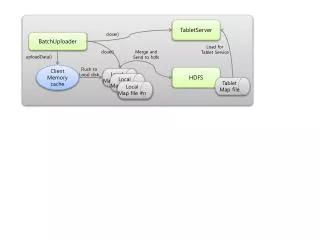

Old Client Memory Issues. ATLAS FAX Meeting September 23, 2013 Andrew Hanushevsky, SLAC http://xrootd.org. What The XRootD Client Does. Allocates a memory cache for each open file By this is zero but the proxy server default is 512MB This is historical but unfortunately still true

Old Client Memory Issues

E N D

Presentation Transcript

ATLAS FAX Meeting, September 23, 2013
Andrew Hanushevsky, SLAC
http://xrootd.org

What The XRootD Client Does
• Allocates a memory cache for each open file
    • By default this is zero, but the proxy server default is 512MB
    • This is historical but, unfortunately, still true
• Uses a lazy thread model
    • Each open file may wind up using a thread
    • This is normally the case for heavy reading and writing
• Reclaims idle threads in the background
    • A scan is made every 30 seconds
    • The scan competes with other activities
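
To see why the per-file cache mostly hurts the proxy, here is a rough upper-bound calculation in C. It is a sketch under stated assumptions, not a measurement: it assumes the 512MB proxy default applies to every open file (treated here as 512 MiB), that every cache fills completely, and that each open file also gets its own thread whose stack reserves 8 MiB (a common Linux default, not a figure from these slides). The function name `worst_case_client_bytes` is hypothetical.

```c
#include <stdint.h>

#define MIB (UINT64_C(1) << 20)

/* Hypothetical helper: worst-case address space tied up by the old client.
 * Assumptions (illustration only):
 *   - every open file has its own read cache of cache_mib MiB
 *     (512 for the proxy default quoted above, 0 for the plain client);
 *   - every open file also has its own thread whose stack reserves
 *     stack_mib MiB (8 is a common Linux default);
 *   - every cache fills completely. */
uint64_t worst_case_client_bytes(uint64_t open_files,
                                 uint64_t cache_mib,
                                 uint64_t stack_mib)
{
    return open_files * (cache_mib + stack_mib) * MIB;
}
```

With 100 open files through a proxy, the bound is 100 × 520 MiB, roughly 51 GiB; with the cache set to zero, only the 800 MiB of stack reservations remains.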

What Are The Effects?
• Largely immaterial for normal analysis jobs
    • These jobs do not use the client heavily
    • Such jobs use the TTreeCache, not the client cache
• Can provoke high memory usage in concentrated uses
    • Typically the proxy server, which uses the client
• Active proxy servers can see high memory usage due to
    • The default cache allocation policy
    • Slow thread stack de-allocation
• The above is exacerbated by SL6 memory allocation
    • Several bugs in the glibc malloc routines
    • The last one was presumably fixed on 3/14/13
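
Much of the SL6 effect comes from glibc's per-thread malloc arenas (introduced in glibc 2.10; SL6 ships glibc 2.12). The C sketch below estimates the virtual address space those arenas can reserve. It assumes 64-bit glibc defaults: without MALLOC_ARENA_MAX the arena cap is 8 × the number of cores, and every arena other than the main (brk-based) one reserves its heap in 64 MiB mmap regions. It also assumes the worst case where each thread lands in its own arena. The helper names are hypothetical, and the result is reserved address space, not resident memory.

```c
#include <stdint.h>

#define MIB (UINT64_C(1) << 20)
#define ARENA_HEAP_BYTES (64 * MIB)   /* assumed heap reservation, 64-bit glibc */

/* Hypothetical helper: default arena cap when MALLOC_ARENA_MAX is unset,
 * assuming 64-bit glibc (8 arenas per core). */
unsigned default_arena_cap(unsigned cores)
{
    return 8u * cores;
}

/* Hypothetical helper: address space reserved by secondary arenas in the
 * worst case where each of `threads` threads ends up in its own arena,
 * limited by `cap`. The main arena grows with brk and is not counted;
 * every other arena reserves at least one 64 MiB region. */
uint64_t secondary_arena_reserve_bytes(unsigned threads, unsigned cap)
{
    unsigned arenas = threads < cap ? threads : cap;   /* arenas in use */
    unsigned secondary = arenas > 1 ? arenas - 1 : 0;  /* exclude main arena */
    return (uint64_t)secondary * ARENA_HEAP_BYTES;
}
```

On a 16-core proxy with a few hundred client threads, that is up to 127 × 64 MiB, about 7.9 GiB of virtual memory. A cap of 1 removes the secondary arenas entirely, and a cap of 4 limits them to 192 MiB.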

What Are The Mitigations?
• These largely apply only to the proxy server
• Add the following to the config file
    • pss.setopt ReadCacheSize 0
• Set the following environment variable before starting the proxy
    • MALLOC_ARENA_MAX=1
    • Normally exported in /etc/sysconfig/xrootd
    • Be very careful to apply it only to the proxy!
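
Because /etc/sysconfig/xrootd may be read by more than one xrootd instance, it can be worth checking which running processes actually picked up MALLOC_ARENA_MAX. On Linux, a process's initial environment is exposed as NUL-separated `NAME=value` entries in /proc/<pid>/environ. The C sketch below reads such a file and looks up one variable; both function names are hypothetical, and reading another user's process typically requires root.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: reads up to cap bytes of an environ file such as
 * "/proc/1234/environ". Returns the byte count, or -1 if it cannot be opened. */
long read_environ_file(const char *path, char *buf, size_t cap)
{
    FILE *f = fopen(path, "rb");
    if (f == NULL)
        return -1;
    size_t n = fread(buf, 1, cap, f);
    fclose(f);
    return (long)n;
}

/* Hypothetical helper: returns the value of `name` in a NUL-separated
 * environment block of len bytes, or NULL if the variable is absent. */
const char *env_block_lookup(const char *buf, size_t len, const char *name)
{
    size_t name_len = strlen(name);
    size_t i = 0;

    while (i < len) {
        const char *entry = buf + i;
        /* Each entry ends at the next NUL (or at the end of the buffer). */
        const char *nul = memchr(entry, '\0', len - i);
        size_t entry_len = nul ? (size_t)(nul - entry) : len - i;

        /* Match "name=" exactly; only return NUL-terminated values. */
        if (nul != NULL && entry_len > name_len && entry[name_len] == '='
            && strncmp(entry, name, name_len) == 0)
            return entry + name_len + 1;

        i += entry_len + 1;   /* skip past the terminating NUL */
    }
    return NULL;
}
```

Running the lookup against both the proxy's PID and the data server's PID should show MALLOC_ARENA_MAX=1 only for the proxy.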

Will That Really Work?
• Apparently not in RH 6.2 (glibc-2.12-1.47.el6.x86_64)
    • See https://bugzilla.redhat.com/show_bug.cgi?id=799327
• So, also try setting MALLOC_CHECK_=1
    • Unfortunately, this will use a lot more CPU
• You can read the gruesome details at
    • https://www.ibm.com/developerworks/community/blogs/kevgrig/entry/linux_glibc_2_10_rhel_6_malloc_may_show_excessive_virtual_memory_usage?lang=en

What About XRootD Itself?
• If you see memory bloat, we recommend
    • MALLOC_ARENA_MAX=4
    • Normally exported in /etc/sysconfig/xrootd
• We have seen this issue at SLAC and elsewhere
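
The environment variable is the mechanism recommended here for XRootD. For a program whose source you control, glibc also exposes the same limit through mallopt() with the M_ARENA_MAX parameter (declared in <malloc.h>, glibc 2.10 and later). A minimal sketch with a hypothetical wrapper name; it is glibc-specific and is meant to run early, before worker threads begin allocating, since it does not shrink arenas that already exist.

```c
#include <malloc.h>

/* Hypothetical wrapper: in-process counterpart of exporting
 * MALLOC_ARENA_MAX=max_arenas before startup (glibc-specific).
 * Call it before worker threads start allocating.
 * Returns 1 on success and 0 on error, as mallopt() does. */
int cap_malloc_arenas(int max_arenas)
{
    return mallopt(M_ARENA_MAX, max_arenas);
}
```

Calling cap_malloc_arenas(4) at the top of main() mirrors the MALLOC_ARENA_MAX=4 recommendation.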