Automated analysis of Spatial Grids

730 likes | 1.1k Views

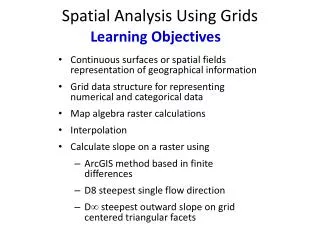

Automated analysis of Spatial Grids. Valliappa.Lakshmanan@noaa.gov Part of the short course on Artificial Intelligence at the American Meteorological Society Annual Meeting, Jan. 2006, Atlanta. Information from grids. Observed data are often on grids Satellite imagery Ground-based radar

Automated analysis of Spatial Grids

E N D

Presentation Transcript

Automated analysis of Spatial Grids Valliappa.Lakshmanan@noaa.gov Part of the short course on Artificial Intelligence at the American Meteorological Society Annual Meeting, Jan. 2006, Atlanta. lakshman@ou.edu

Information from grids • Observed data are often on grids • Satellite imagery • Ground-based radar • Other data can be gridded • You may need to extract information from these grids • Is there a boundary? • Where are the troughs? lakshman@ou.edu

Spatial grids as images • Our spatial grids are often two dimensional • Automated extraction of information from images is a long-established field in machine intelligence • We can treat spatial grids as images • And apply image processing techniques to our data. lakshman@ou.edu

Image processing terminology lakshman@ou.edu

Other terms for this field • There are subtle differences between image processing and • Data mining • Relationships between identified signatures • Knowledge discovery • A human draws the inferences • Computer Vision • Pattern recognition when it doesn’t work lakshman@ou.edu

Limitations of image processing • Scenarios where pattern recognition succeeds: • Highly controlled environments • Assembly lines, robotics • Photography • Red, green, blue channels can be assumed independent • IR channels can not! • Objects have sharp boundaries • Storms, etc. do not • The most mature sub-fields • Filters (smoothing, edge finding) • Segmentation lakshman@ou.edu

Workflow of AI applications • Artificial Intelligence applications that operate on spatial data usually follow these steps: • Gridding: take spatial data and make it a locally uniform resolution grid • Filtering: smoothing, pattern matching, etc. • Edge finding: find areas of drastic spatial change • Segmentation: find contiguous areas of constant value • Feature extraction: find features based on edges/regions • Classification: classify features using a rule base, neural network, support vector machine, etc. • Normally done with all the features from all your data fields • This will be covered by the other speakers lakshman@ou.edu

A note on convention • Image processing uses matrix notation • (0,0) is the top-left corner of the image • (0,1) is the first row, second column. • As long as you don’t think of the first index as “x” and the second index as “y”, you will be okay • In what direction does the first index increase? Answer: South or downwards lakshman@ou.edu

Image processing assumptions • Traditionally image processing assumes a Markov random process • Two pixels are correlated if they are adjacent to each other • If you have pixels: a b c • a and b are correlated as are b and c • But the correlation between a and c is captured solely by the correlation between a-b and b-c • No “second-order” correlation term. • A simplifying assumption that usually causes no problems. • If your field is random (no correlation at all) or if the correlation is second-order image processing techniques may not work lakshman@ou.edu

Image processing assumptions • An assumption that could cause problems: • The field has uniform resolution • Projected spatial grids are not uniform resolution. • Locally, most projected grids can be assumed uniform. • Pixels 10 km apart don’t vary that much in size. • Be careful with global operators on projected grids • Segmentation is a global operation • Filtering, edge finding, etc. are fine. • Filters are local operations. lakshman@ou.edu

Radar data • Radar data are NOT uniform resolution • Size of a “pixel” varies with range • Dramatic changes • Two options: • Use varying-size windows for local operators • Hard to keep track! • Convert to a Cartesian grid before applying image processing techniques • Will cause problems for local operators at far ranges where data are “repeated” • This is an issue with most projected data as well lakshman@ou.edu

Missing Data • Some times, you will have spatial data where some pixels are missing • Can you treat this data as some value? • Say zero? • Will simplify your task considerably • Can dramatically speed up operations • If not, you can vary the formulae to use only those pixels for which you have data • Filtering results may have higher quality • Operations will be much slower lakshman@ou.edu

Summary: Spatial grids • To treat spatial grids as images, • Operate on the raw, un-projected data • Less chance of repeated values • For “gridded” data, operate on grids at the resolution closest to half the spacing between observations. • Change “missing” data to background value if available. • Use local operators (e.g: filters) freely • Be careful with global operations (e.g: segmentation) • Convert computed features (such as area) to projected systems lakshman@ou.edu

Gridding From insitu observations to grids lakshman@ou.edu

In-situ observations • Some environmental data are measured by in-situ instruments • Not remotely sensed • These measurements are at geographically dispersed points • Need to be converted into grids lakshman@ou.edu

Gridding observations • In-situ observations have to be interpolated on to grids to process as images • What is the pixel resolution? • What interpolation techniques are available? lakshman@ou.edu

Pixel resolution • If the chosen pixel resolution is too coarse, lose some of the observations • If the chosen pixel resolution is too fine, strong gradients may not be apparent • A good rule of thumb: • Choose pixel resolution to be half the mean distance between observation points lakshman@ou.edu

Interpolation methods • Interpolation methods: • Cressman analysis • Barnes analysis • Kriging lakshman@ou.edu

Cressman analysis • The value at each pixel of grid: • Every observation gets weighted based on its distance from grid point • R is the “radius of influence” • Higher the R, the more distant points are considered lakshman@ou.edu

Barnes analysis • The problem with a Cressman analysis: • Even if a pixel is collocated with an observation, the final value of pixel will be different • Due to (small) effect of pixels further away • Barnes analysis tries to account for this lakshman@ou.edu

Barnes analysis equation • Barnes also changed the Cressman interpolation function to be exponential • Smoother than simple polynomials lakshman@ou.edu

Barnes analysis: the technique • Perform Cressman analysis on obs • Compute errors at observation points • Perform Cressman analysis on errors • Give the error field a weight • Add the two fields • One pass of Barnes analysis • Can repeat Barnes analysis • N-pass Barnes analysis • Closer and closer to the value of the observations at the observation points. lakshman@ou.edu

Kriging • Kriging is a principled method of interpolation • Compute correlation between observations • Use variogram to determine scaling parameter • Works only if the observations are very close to each other • M. A. Oliver and R. Webster "Kriging: a method of interpolation for geographical information system", Int’l. J. Geographical Information Systems, 1990, VOL. 4, No. 3, 313-332 lakshman@ou.edu

Convolution Filters A surprisingly powerful local operation lakshman@ou.edu

Smoothing: an intuitive look • How would you smooth the image on the top right? • So that the edges aren’t quite so ragged? • To get an image like bottom right? lakshman@ou.edu

Averaging is a smoothing operation • The most obvious way to smooth is to replace each pixel by a local average: • I used k=3 in the WDSS-II filter “average” • http://www.wdssii.org/ • The dataset I’m using for demonstration is available online. See: • http://cimms.ou.edu/~lakshman/aitut/ lakshman@ou.edu

How it works • What does this equation mean? • For every pixel x,y, look in a 2D neighborhood, add up all the values and divide by the number of pixels. • (2k+1) is the neighborhood size • k is the half-size • Because the neighborhood size needs to be odd, most software will ask you for the half-size, k lakshman@ou.edu

Convolution • Another way to think about smoothing: • At each pixel, you assign weights to the neighboring pixels. • And compute a weighted average. • W is a (2k+1)x(2k+1) matrix shown on right. • Called the convolution matrix or kernel • Technically, this is cross-correlation, but if you are using symmetric kernels (as we almost always will be), cross-correlation and convolution are the same thing. lakshman@ou.edu

Smoothing kernels • The “boxcar” kernel • Poor characteristics in transition areas. • More spatial relocation of maxima • Smudges more • A better convolution kernel is where the kernel function drops off with distance. • A Gaussian convolution kernel offers the best space/frequency trade off • Frequency = raggedness • Space = location • You can smooth a lot more effectively because the features don’t move or smudge as much. Gauss:9 Average:3 lakshman@ou.edu

Gaussian Kernel • x and y range from –k to k. • Since the Gaussian has infinite range, you need to choose k appropriately. • Choosing k to be 3 * largest sigma is a reasonable choice. • The sigmas are the scale • Divide by total weight (The W above doesn’t add up to 1) lakshman@ou.edu

Convolution kernels • By changing the weights in the convolution kernel, you can extract many features. lakshman@ou.edu

Matching filters • In general, make your convolution kernel be the feature you are trying to extract. • The above kernel returns large magnitudes for thin vertical lines • But not just thin vertical lines • Need to normalize by smoothed value also • Can use the matched filter idea to find objects lakshman@ou.edu

Scale and rotation • Convolution filters are: • Scale dependent • What if the gradient is 5 pixels apart? • Need a matching filter with columns 5 apart! • Orientation dependent • What if the boundary is horizontal? • Need a matching filter with that orientation. • Remember typical usage? • Do you know the size of a machine part before hand? • How about a squall line? lakshman@ou.edu

Scale and orientation • If you specify your convolution filter as Gaussian functions, it is easy to specify rotation and scale. • x and y range from –k to k. • Since the Gaussian has infinite range, you need to choose k appropriately. • Choosing k to be 3 * largest sigma is a reasonable choice. • Theta is the orientation from x-axis (anti-clockwise from south) • The sigmas are the scale (use same sigma for circular kernels; different sigmas for elliptical ones) • Divide by total weight (The W above doesn’t add up to 1) lakshman@ou.edu

Speed • The larger the scale of interest, the larger the half-size of the kernel. • Will take a long time to compute. • Since we need to compute weighted average at every pixel. • Two speed ups possible • Convolution can be performed in Fourier Transform space. • Convolution can be performed using separable filters. lakshman@ou.edu

Convolution with Fourier Transforms • To convolve with Fourier Transforms: • Compute 2D FFT of original image • Can not have missing data • Fill with background value somehow • Compute 2D FFT of convolution kernel • The convolution kernel has to be same size as image (pad with zeros) • Multiply the FFT values pixel-by-pixel • Take inverse 2D FFT of result. • The larger your kernel, the more dramatic the speed up. • Can be 100-200 times faster. • Seconds instead of hours! • Resources • fftw is a free, nice Fourier Transform library. • Lakshmanan, V: 2000: Speeding up a large scale filter. J. of Oc. and Atm. Tech., 17, 468-473 lakshman@ou.edu

Convolution with separable filters • What if you have missing data? • Can not use FFT method • Problem with radar data in particular. • If the convolution filter is “separable”: • Compute weighted average row-wise on original image. • Compute weighted average on the row-wise result column-by-column. • If your filter was 129x129, 60 times faster than doing the standard way. lakshman@ou.edu

What is a separable filter • A filter is separable if • For example, if the convolution filter is a simple Gaussian: • If you want to vary orientation, see: • Lakshmanan, V 2004: A separable filter for directional smoothing. IEEE Geosc. and Remote Sensing Letters, 1, 192-195 lakshman@ou.edu

Edge finding • The “line-finding” filter is not a robust way to find edges • Similar problems to using boxcar for smoothing • Use Laplacian of a Gaussian (Canny) • Differentiate the smoothing kernel twice. • Convolve image with that kernel • Look for zero crossings in the convolution result. • J. Canny, ``A computational approach to edge detection", IEEE Trans. on Pattern Analysis and Machine Intelligence, 8(6),pp679-698 (1986) lakshman@ou.edu

Multi scale or orientation analysis • Use filter banks: • To perform multi scale (multi resolution) or orientation analysis • A bunch of filters at different scales and orientations • Combine their results by a weighted average or maximum • Drawbacks: • Repeated convolutions on original image. • Results of the filters are not related. • Can get very expensive • Simplifications possible: • We can steer the results based on a few filters • The resulting features are related • The squall line contains storm cells • So far, we have only considered image-to-image • The resulting images are related. • Use wavelets lakshman@ou.edu

Wavelets • Wavelet analysis • breaks up images • smoother and smoother results on the right (s0,s1) • The lost “detail” information on the left • 3 images of the size of the corresponding s • Can not use any old filter • So that s1 is half the size of s0 • Convolution filter has to be a wavelet function • Haar function (boxcar) • Daubechies p-functions • Many others • Fast Wavelet transformations exist • Use wavelets only if you need this decomposition • Otherwise, use simple multi scale analysis with lots of Gaussian filters. • Gaussian filters have lots of nice properties. • Plus everybody knows what they are! lakshman@ou.edu

Texture • In convolution, we compute weighted average of the original image’s pixels • Can also do other operations inside neighborhood • Texture operators • Operators based on relative ordering lakshman@ou.edu

Texture operators • Texture is a good way to distinguish different objects • Not just on data value, but on distribution of data values • Lakshmanan, V., V. DeBrunner, and R. Rabin, 2000: Texture-based segmentation of satellite weather imagery. Int'l Conference on Image Processing, Vancouver, 732-735. • 2nd and 3rd order statistics • Variance, standard deviation • Homogeneity • Entropy • Shannon’s measure of information content • Bin the data values and form a histogram • P is the fraction of pixels in each bin lakshman@ou.edu

Percent filters • Operators based on sorting • Take the median value • Excellent smoothing filter • Optimal when you have speck noise • Take the maximum value • Effect of dilating the field spatially • Minimum erodes the field spatially • Use percentiles • 50% is the median (maximum noise reduction) • 0% is the min (heavily noise dependent) • 100% is the max • Intermediate values trade off on noise reduction lakshman@ou.edu

Dilation and Erosion filters Using the second-highest or second-lowest value in the neighborhood is an excellent dilation/erosion filter. Implemented this way in WDSSII. dilate erode orig lakshman@ou.edu

Filter Banks and Neural Networks • Classification using NN • Use filter banks • Convolution filters • Texture operators • Percentile operators • Each pixel provides a pattern • May have to select pixels – not use all of them • Since a 1000x1000 image has 1 million patterns. • But is only data case lakshman@ou.edu

NN random error Effect of post processing QCNN • Features computed in local neighborhoods of radar gates • Classified by NN • Returns [0,1] • Is it perfect? • If we use this directly as a mask, there will be holes in the result! • Lakshmanan, V., A. Fritz, T. Smith, K. Hondl, and G. J. Stumpf, 2005: An automated technique to quality control radar reflectivity data. J. Applied Meteorology, subm. Original Effect of Pre-processing (don’t care) QC’ed lakshman@ou.edu

NN random error Effect of post processing Actual QC technique Original Effect of Pre-processing (don’t care) QC’ed lakshman@ou.edu

Images to Objects • So far, we have looked at ways of processing images • Result is still image • Bunch of pixels • Need to group pixels together into objects • Classify, track, etc. these objects if possible. lakshman@ou.edu

Segmentation From images to objects lakshman@ou.edu