Navigating Privacy in the Digital Age


Exploring the nuances of online privacy with examples like HTTP cookies, Google Street View, and Facebook. Discusses gray areas, security concerns, and user awareness.

Presentation Transcript

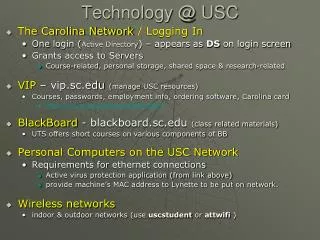

Privacy • USC CSci499 • Dr. Genevieve Bartlett, USC/ISI

Privacy • The state or condition of being free from observation. Not really possible today…at least not on the internet.

Privacy • The right of people to choose freely under what circumstances and to what extent they will reveal themselves, their attitude, and their behavior to others.

Privacy is not black and white • Lots of grey areas and points for discussion • What seems private to you may not seem private to me • Three examples to start us off: • HTTP Cookies • Google Street View • Facebook

HTTP cookies: What are they? • Cookies = small text files • Received from a server, stored on your machine • Mostly used on the web • Purpose: HTTP is stateless, so cookies maintain state on top of it • E.g., keeping the contents of your “shopping cart” while you browse a site
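
A minimal sketch of the shopping-cart idea, using Python's standard `http.cookies` module. The cookie name `cart`, the `|` separator, and the helper names are assumptions made for illustration; real sites usually store only a session ID in the cookie and keep the cart on the server.

```python
from http.cookies import SimpleCookie


def server_response(cart_items):
    """Build the Set-Cookie value a server might send (hypothetical cart format)."""
    cookie = SimpleCookie()
    cookie["cart"] = "|".join(cart_items)  # assumed encoding: items joined by "|"
    cookie["cart"]["path"] = "/"
    return cookie["cart"].OutputString()


def read_cart(cookie_header):
    """Parse the Cookie header the browser sends back on its next request."""
    cookie = SimpleCookie()
    cookie.load(cookie_header)
    if "cart" not in cookie:
        return []
    value = cookie["cart"].value
    return value.split("|") if value else []


# The server sets the cookie; the browser echoes the name=value part back later.
set_cookie = server_response(["unicorn-poster", "glitter"])
echoed_by_browser = set_cookie.split(";")[0]
print(read_cart(echoed_by_browser))
```

HTTP itself remembers nothing between the two requests; the state lives entirely in the value the browser stores and returns.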

HTTP cookies: 3rd party cookies • You visited your favorite site unicornsareawesome.com • unicornsareawesome.com pulls ads from lameads.com • You get a cookie from lameads.com, even though you never visited lameads.com • lameads.com can track your browsing habits every time you visit any page with ads from lameads.com… those might be a lot of pages
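
The tracking described on this slide can be simulated in a few lines. This is a toy model, not how any real ad network is implemented: the `AdServer` and `Browser` classes and the second site name are hypothetical, and a real tracker would learn the embedding page from the request (for example the Referer header) rather than from a function argument.

```python
import uuid
from collections import defaultdict


class AdServer:
    """Toy stand-in for lameads.com: sets one cookie, logs every page embedding its ads."""

    def __init__(self):
        self.history = defaultdict(list)  # cookie id -> pages where an ad was loaded

    def serve_ad(self, cookie_id, embedding_page):
        if cookie_id is None:  # first contact: hand out a fresh identifier
            cookie_id = uuid.uuid4().hex
        self.history[cookie_id].append(embedding_page)
        return cookie_id


class Browser:
    """Toy browser that stores one cookie per domain and sends it back automatically."""

    def __init__(self):
        self.cookies = {}  # domain -> cookie value

    def visit(self, page, ad_server, ad_domain="lameads.com"):
        # Loading the page also loads the embedded ad, which carries the ad domain's cookie.
        self.cookies[ad_domain] = ad_server.serve_ad(self.cookies.get(ad_domain), page)


ads = AdServer()
me = Browser()
for page in ["unicornsareawesome.com/home", "example-news.test/politics", "unicornsareawesome.com/shop"]:
    me.visit(page, ads)

print(dict(ads.history))  # one cookie id mapped to every page visited
```

The user never typed lameads.com, yet the ad server ends up with a single identifier tied to the full list of pages.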

HTTP cookies: Grey Area? • 3rd party cookies allow ad servers to personalize your ads = more useful to you. Good! • But • You chose to go to unicornsareawesome.com = you are OK with unicornsareawesome.com knowing how you use their site • Nowhere did you choose to let lameads.com monitor your browsing habits

Short Discussion: • Collusion: tool to track these 3rd party cookies • TED talk on “Tracking the Trackers” • http://www.ted.com/talks/gary_kovacs_tracking_the_trackers.html

Google Street View: What is it? • Google cars drive around and take 360° panoramic pictures. • Images are stitched together and can be browsed through on the Internet

Google Street View: Grey Area • Expectation of privacy? • I’m in public, I can expect people will see me • Expectations? • Picture linked to location • Searchable • Widely available • Available for a long time to come

Facebook: What is it? • Social networking site • Connect with friends • Share pictures, interests (“likes”)

Facebook: Grey Area • Who uses Facebook data and how is data used? • 4.7 million liked a page about health conditions or treatments. Insurance agents? • 4.8 million shared information about dates of vacations. Burglars? • 2.6 million discussed recreational use of alcohol. Employers?

Facebook: More Grey • Security issues with Facebook • Confusion over privacy settings • Sudden changes in default privacy settings • Facebook tracks browsing habits, even if a user isn’t logged in (third-party cookies) • Facebook sells user information to ad agencies and behavioral trackers

Why start with these examples? • 3 examples: HTTP cookies, Google Street View, Facebook • Lots more “everyday” examples • Users gain benefits by sharing data • Tons of data generated, widely shared, accessible, and stored (for how long?) • Are users really aware of how their data is used and by whom?

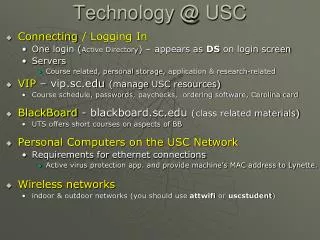

Today’s Agenda • Privacy and Privacy & Security • How do we “safely” share private data? • Privacy and Inferred Information • Privacy and Social Networks • How do we design a system with privacy in mind?

Examples of private information • Tons of information can be gained from Internet use: • Behavior • E.g., Person X reads reddit.com at work. • Preferences • E.g., Person Y likes high-heel shoes and uses Apple products. • Associations • E.g., Person X and Person Y are friends. • PPI (private, personal/protected information) • Credit card numbers, SSNs, nicknames, addresses • PII (personally identifying information) • E.g., your age + your address = I know who you are, even if I’m not given your name.
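
The “age + address” point can be checked mechanically: count how many records share each combination of fields and see which people end up alone in their group. A small sketch with made-up records; the field names and the helper `unique_combinations` are assumptions for illustration.

```python
from collections import Counter


def unique_combinations(records, fields):
    """Return the records that no other record matches on the given fields."""
    def key(record):
        return tuple(record[f] for f in fields)

    counts = Counter(key(r) for r in records)
    return [r for r in records if counts[key(r)] == 1]


# Made-up data: no names anywhere.
people = [
    {"age": 34, "street": "Elm St"},
    {"age": 34, "street": "Oak Ave"},
    {"age": 51, "street": "Elm St"},
    {"age": 51, "street": "Oak Ave"},
]

print(len(unique_combinations(people, ["age"])))            # nobody is unique by age alone
print(len(unique_combinations(people, ["street"])))         # nobody is unique by street alone
print(len(unique_combinations(people, ["age", "street"])))  # everyone is unique by the pair
```

Neither field identifies anyone by itself, but together they single out every person in this toy dataset.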

How do we achieve privacy? • policy + security mechanisms • + law + ethics + trust • Anonymity & Anonymization mechanisms • Make each user indistinguishable from the next • Remove PPI & PII • Aggregate information

Who wants private info? • Governments – surveillance • Businesses – targeted advertising, following trends • Attackers – monetize information or cause havoc • Researchers – medical, behavioral, social, computer

Who has private info? • You and me • End-users • Customers • Patients • Businesses • Protect mergers, product plans, investigations • Government & law enforcement • National security • Criminal investigations

Privacy and Security • Security enables privacy • Data is only as safe as the system it’s on • Sometimes security is at odds with privacy • E.g., security requires authentication, but privacy is achieved through anonymity • E.g., TSA pat-down at the airport

Privacy and Privacy & Security • How do we “safely” share private data? • Privacy and Inferred Information • Privacy and Social Networks • How do we design a system with privacy in mind?

Why do we want to share? • Share existing data sets: • Research • Companies • Buy data from each other • Check out each other’s assets before mergers/buyouts • Start a new dataset: • Mutually beneficial relationships • Share data with me and you can use this service

Sharing everything? • Easy, but what are the ramifications? • Legal/policy may limit what can be shared/collected • IRBs: Institutional Review Boards • HIPAA & HITECH: Health Insurance Portability and Accountability Act; Health Information Technology for Economic and Clinical Health Act • Future use and protection of data?

Mechanisms for limited sharing • Remove really sensitive stuff (sanitization) • PPI & PII (private/personal information & personally identifying information) • Without a crystal ball, this is hard • Anonymization • Replace information to limit the ability to tie entities to meaningful identities • Aggregation • Remove PII by only collecting/releasing statistics

Anonymization Example • Network trace: PAYLOAD All sorts of PII and PPI in there!

Anonymization Example • Network trace: PAYLOAD Routing information: IP addresses, TCP flags/options, OS fingerprinting

Anonymization Example • Network trace: PAYLOAD Remove IPs? Anonymize IPs?

Anonymization Example • Network trace: PAYLOAD Removing IPs severely limits what you can do with the data. Instead, replace each IP with a consistent label that still distinguishes hosts but is not the original address. IP1 = A, IP2 = B, etc.
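
The “IP1 = A, IP2 = B” replacement can be sketched as a consistent mapping: the same address always gets the same label, so traffic patterns survive while the addresses do not. The function name, the label format `H1, H2, …`, and the sample addresses (from the documentation range 192.0.2.0/24) are assumptions for illustration.

```python
def anonymize_ips(flows):
    """Replace each IP in (src, dst) pairs with a stable label, in order of first appearance."""
    mapping = {}  # real IP -> label; must be kept secret or discarded after use
    anonymized = []
    for src, dst in flows:
        for ip in (src, dst):
            if ip not in mapping:
                mapping[ip] = f"H{len(mapping) + 1}"
        anonymized.append((mapping[src], mapping[dst]))
    return anonymized, mapping


trace = [
    ("192.0.2.10", "192.0.2.80"),
    ("192.0.2.11", "192.0.2.80"),
    ("192.0.2.10", "192.0.2.53"),
]
anonymized, secret_mapping = anonymize_ips(trace)
print(anonymized)
```

A researcher can still see that one host talked to two servers and that two hosts share a server, which is the kind of analysis that simply deleting the IPs would rule out. Whether labels alone are enough protection depends on what else is in the trace.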

Aggregation Example • “Fewer U.S. Households Have Debt, But Those Who Do Have More, Census Bureau Reports”

Methods can be bad or good • Just because someone uses aggregation or anonymization doesn’t mean the data is safe • Example: • Release aggregate stats of people’s favorite color?
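
One way aggregation can go wrong, sketched with the favorite-color example: if a group contains a single person, the “statistic” for that group is just that person's answer. The grouping by department, the sample data, and the `min_group_size` threshold are assumptions added for illustration; the slides do not say how the colors would be grouped.

```python
from collections import Counter, defaultdict


def color_counts_by_group(records, min_group_size=1):
    """Count favorite colors per group, dropping groups smaller than min_group_size."""
    groups = defaultdict(Counter)
    for group, color in records:
        groups[group][color] += 1
    return {
        group: dict(counts)
        for group, counts in groups.items()
        if sum(counts.values()) >= min_group_size
    }


# Made-up survey: (department, favorite color)
survey = [("sales", "blue"), ("sales", "red"), ("sales", "blue"), ("legal", "green")]

print(color_counts_by_group(survey))                    # "legal" has one member: their answer is exposed
print(color_counts_by_group(survey, min_group_size=3))  # small groups suppressed before release
```

Suppressing small groups is only one possible safeguard, and it does not make an aggregate release safe in general.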

Privacy and Privacy & Security • How do we “safely” share private data? • Privacy and Inferred Information • Privacy and Social Networks • How do we design a system with privacy in mind?

What is Inferred? • Take 2 sources of information, correlate data • X + Y = …. • Example: Google Street View + what my car looks like + where I live = you know where I was back in November

Another example • Paula Broadwell, who had an affair with CIA director David Petraeus, similarly took extensive precautions to hide her identity. She never logged in to her anonymous e-mail service from her home network. Instead, she used hotel and other public networks when she e-mailed him. The FBI correlated hotel registration data from several different hotels, and hers was the common name.
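
The correlation in this story amounts to a set intersection: take the guest list for each hotel on the relevant night and keep only the names that appear in all of them. A sketch with entirely made-up names; the function `common_guests` is hypothetical and says nothing about how the actual investigation was carried out.

```python
def common_guests(guest_lists):
    """Return the names that appear on every guest list."""
    sets = [set(guests) for guests in guest_lists]
    return set.intersection(*sets) if sets else set()


# Made-up registration data for three hotels on the nights e-mails were sent.
hotel_a = ["Avery Quinn", "Jordan Blake", "Sam Rivera"]
hotel_b = ["Casey Lin", "Avery Quinn", "Morgan Diaz"]
hotel_c = ["Avery Quinn", "Riley Chen"]

print(common_guests([hotel_a, hotel_b, hotel_c]))
```

Each list on its own reveals little; the overlap is what identifies one person.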

Another example: Netflix & IMDB • Netflix prize: Netflix released an anonymized dataset of movie ratings • Researchers at the University of Texas correlated it with public IMDB ratings and undid the anonymization for some users
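
A heavily simplified sketch of this kind of linkage: score each public profile by how many (movie, rating) pairs it shares with an anonymized record and pick the best overlap. This is not the researchers' actual method, which handled approximate matches; all titles, names, and the `best_match` helper are made up for illustration.

```python
def best_match(anon_ratings, public_profiles):
    """Return (name, overlap) for the public profile sharing the most (movie, rating) pairs."""
    if not public_profiles:
        return None, 0
    scores = {name: len(anon_ratings & ratings) for name, ratings in public_profiles.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# One record from the "anonymized" release: no name, only ratings.
anon_user = {("Movie A", 5), ("Movie B", 2), ("Movie C", 4), ("Movie D", 1)}

# Public reviews posted under real or recognizable names.
public = {
    "film_fan_42": {("Movie A", 5), ("Movie B", 2), ("Movie C", 4)},
    "casual_viewer": {("Movie A", 3), ("Movie E", 4)},
}

print(best_match(anon_user, public))
```

Once a record is linked to a name, every other rating in that record, including ones never posted publicly, is tied to the same person.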

Privacy and Privacy & Security • How do we “safely” share private data? • Privacy and Inferred Information • Privacy and Social Networks • How do we design a system with privacy in mind?

What is social networking data? • Associations • Not what you say, but who you talk to (slide graphic text: “OMG NEW BOYFRIEND”)

Why is social data interesting? • From a privacy point of view: • Guilt by association • Eg. Government very interested • Phone records (US) • Facebook activity (Iran)

Computer Communication • Computer communication = social network • What sites/servers you visit/use = information on your relationship with those sites/servers • Never mind the content… How often you visit and who you visit may reveal a lot! (diagram: You ↔ unicornsareawesome.com)
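
A short sketch of how much the “who and how often” alone gives away. The observer here sees only (source, destination) pairs, never any content; the flow records, the second destination name, and the helper `visit_profile` are made up for illustration.

```python
from collections import Counter


def visit_profile(flows, client):
    """Count how often one client contacted each destination, using metadata only."""
    return Counter(dst for src, dst in flows if src == client)


# Made-up flow records as an on-path observer might log them: (source, destination).
observed = [
    ("you", "unicornsareawesome.com"),
    ("you", "clinic-appointments.test"),
    ("you", "unicornsareawesome.com"),
    ("roommate", "unicornsareawesome.com"),
    ("you", "unicornsareawesome.com"),
]

print(visit_profile(observed, "you").most_common())
```

No payload was inspected, yet the counts already describe habits and relationships.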

How do we provide privacy? • Of course encrypt content (payload)! • But: Network/transport layer = no encryption • (for now) • Anyone along the path can see source and destination… so now what?

Onion Routing • General idea: bounce connection through a bunch of machines
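
A toy sketch of the layering behind this idea: the sender wraps the message once per relay, and each relay can remove only its own layer, learning the next hop and nothing more. The XOR “cipher” below is a placeholder and is NOT secure; real onion routing uses proper cryptography and key exchange. The relay names, keys, and function names are all hypothetical.

```python
import hashlib
import json


def _keystream(key, length):
    """Derive a pseudo-random byte stream from a key (illustration only)."""
    out, counter = b"", 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def toy_crypt(key, data):
    """XOR data with the keystream. Encrypts and decrypts; NOT real encryption."""
    return bytes(a ^ b for a, b in zip(data, _keystream(key, len(data))))


def wrap(message, destination, relays):
    """Build the onion. relays is a list of (name, key) in path order."""
    next_hop, blob = destination, message.encode()
    for name, key in reversed(relays):  # innermost layer belongs to the last relay
        layer = json.dumps({"next": next_hop, "data": blob.hex()}).encode()
        blob = toy_crypt(key, layer)
        next_hop = name
    return blob


def peel(key, blob):
    """What one relay does: remove its layer, learn only the next hop."""
    layer = json.loads(toy_crypt(key, blob))
    return layer["next"], bytes.fromhex(layer["data"])


relays = [("relay1", b"k1"), ("relay2", b"k2"), ("relay3", b"k3")]
blob = wrap("hello", "unicornsareawesome.com", relays)
for name, key in relays:
    next_hop, blob = peel(key, blob)
    print(f"{name} forwards to {next_hop}")
print(blob.decode())
```

In this sketch only the first relay is handed the packet by the sender, and only the last relay sees the destination, so no single relay knows both ends. The last relay also sees the message itself unless the content is encrypted end to end.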

Don’t we bounce around already? Not actually what happens……

Don’t we bounce around already? Closer to what actually happens.

Don’t we bounce around already? • Yes, we route packets through a series of routers • BUT this doesn’t protect the privacy of who’s talking to whom… • Why? PAYLOAD