Exploring Data Relationships: Scatterplots, Correlation, and Regression Analysis


This guide delves into the essential concepts of data relationships among two or more variables, particularly focusing on response and explanatory variables. It covers scatterplots to visually assess associations, the calculation of correlation coefficients to measure linear relationships, and the formulation of regression lines to predict outcomes. Key concepts include the distinction between correlation and causation, the significance of outliers, and the importance of context in interpreting relationships. With practical examples such as SAT scores and health metrics, it provides a comprehensive overview of analyzing data effectively.

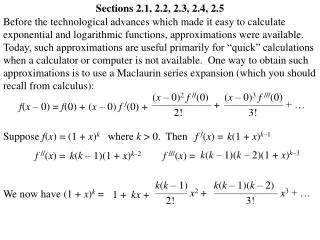

Sections 2.1-2.2 Looking at Data-Relationships

Data with two or more variables: • Response vs Explanatory variables • Scatterplots • Correlation • Regression line

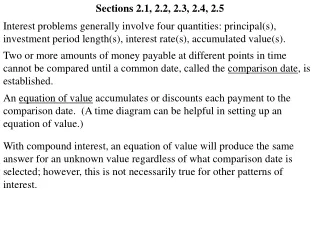

Association between a pair of variables • Association: Some values of one variable tend to occur more often with certain values of the other variable • Both variables measured on same set of individuals • Examples: • Height and weight of same individual • Smoking habits and life expectancy • Age and bone-density of individuals

Causation? • Caution: Other variables often lurk in the background and produce spurious associations • Shorter women have lower risk of heart attack • Countries with more TV sets have better life expectancy rates • More deaths occur when ice cream sales peak • Just explore association or investigate a causal relationship?

Preliminaries: • Who are the individuals observed? • What variables are present? • Quantitative or categorical? • Association measures depend on types of variables • Response variable measures outcome of interest • Explanatory variable explains and sometimes causes changes in response variable

Examples • Different amount of alcohol given to mice, body temperature noted (belief: drop in body temperature with increasing amount of alcohol) • Response variable? • Explanatory variable? • SAT scores used to predict college GPA • Response variable? • Explanatory variable?

Examples • Does fidgeting keep you slim? • Some people don’t gain weight even when they overeat. Perhaps fidgeting and other “nonexercise activity” (NEA) explains why. Here is the data: • We want to plot Y vs. X • Which is Y? • Which is X?

Things to look for on a scatterplot: • Form (linear, curved, exponential, parabolic) • Direction: • Positive Association: Y increases as X increases • Negative Association: Y decreases as X increases • Strength: Do the points follow the form closely, or are they scattered? • Outliers: deviations from the overall relationship • Let’s look again…

Example: State mean SAT math score plotted against the percent of seniors taking the exam

Adding a categorical variable or grouping • May enhance understanding of the data • The categorical variable here is region: • “e” is for northeastern states • “m” is for midwestern states • All other states excluded

Other things: • Plotting different categories via different symbols may throw light on data • Read examples 2.7-2.9 for more examples of scatterplots • Existence of a relationship does not imply causation • SAT math and SAT verbal scores have a strong relationship • But a person’s intelligence is causing both • The relationship does not have to hold true for every subject; there is random variation

2.2 Correlation • Linear relationships are quite common • Correlation coefficient r measures strength and direction of a linear relationship between two quantitative variables X and Y • Data structure: (X,Y) pairs measured on n individuals • Weight and blood pressure • Age and bone-density

Correlation (r) • Lies between -1 and 1 • Switching the roles of X and Y leaves r unchanged • Unit free: unaffected by a change of units (a linear transformation with positive slope) in X or Y • Positive correlation means positive association • Negative correlation means negative association • X and Y should both be quantitative • r near 0 implies a weak (or no) linear relationship; r close to +1 or -1 indicates a strong linear pattern
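The properties listed above can be checked numerically. The following is a minimal sketch assuming NumPy is available; the data values and the helper name `corr` are hypothetical and are not taken from the slides.

```python
import numpy as np

# Hypothetical paired measurements (illustration only, not from the slides).
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.1, 2.9, 4.2, 4.8, 6.1, 6.9])

def corr(u, v):
    """Pearson correlation coefficient r between two 1-D arrays."""
    return float(np.corrcoef(u, v)[0, 1])

r_xy = corr(x, y)                              # X explanatory, Y response
r_yx = corr(y, x)                              # roles switched
r_rescaled = corr(2.54 * x + 10.0, 0.001 * y)  # change of units in both variables
r_flipped = corr(-x, y)                        # multiplying by a negative flips the sign

print(r_xy, r_yx, r_rescaled, r_flipped)
```

Switching roles and changing units leave r unchanged, while multiplying one variable by a negative constant reverses its sign but not its magnitude.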

Formula: $r = \frac{1}{n-1} \sum_{i=1}^{n} \left(\frac{x_i - \bar{x}}{s_x}\right)\left(\frac{y_i - \bar{y}}{s_y}\right)$ • Calculation: • Usually by software or calculator • Calculate means and standard deviations of the data • Standardize X and Y: • subtract the respective mean • divide by the corresponding standard deviation • Take the product X(standardized)*Y(standardized) for each subject • Add up the products and divide by n-1
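The calculation steps above can be sketched in a few lines. This is an illustrative sketch assuming NumPy; the function name `correlation` and the sample data are hypothetical.

```python
import numpy as np

def correlation(x, y):
    """Compute r by standardizing X and Y, multiplying, summing, dividing by n-1."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    # ddof=1 gives the sample standard deviation (divisor n-1).
    zx = (x - x.mean()) / x.std(ddof=1)
    zy = (y - y.mean()) / y.std(ddof=1)
    return float(np.sum(zx * zy) / (n - 1))

# Hypothetical data for illustration only.
x = [61.0, 64.0, 66.0, 69.0, 72.0, 75.0]
y = [120.0, 135.0, 150.0, 148.0, 170.0, 185.0]

r_by_hand = correlation(x, y)
r_numpy = float(np.corrcoef(x, y)[0, 1])  # library result for comparison
print(r_by_hand, r_numpy)
```

The hand calculation and `np.corrcoef` should agree up to floating-point rounding.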

Issues: • r is affected by outliers • Captures only the strength of the linear relationship • Y and X can have a very strong non-linear relationship while r is close to zero • r = +1 or -1 only when the points lie exactly on a straight line (for example, Y = 2X + 3) • SAS program: correlation.doc • proc corr is the procedure
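Two of these issues are easy to see with constructed data. The sketch below assumes NumPy and uses made-up values: a perfect parabola, where Y is completely determined by X yet r is essentially zero, and the exact line Y = 2X + 3, where r equals 1.

```python
import numpy as np

x = np.arange(-5, 6, dtype=float)  # -5, -4, ..., 5 (symmetric about 0)

# Strong non-linear relationship: Y is an exact function of X.
y_curve = x ** 2
r_curve = float(np.corrcoef(x, y_curve)[0, 1])

# Perfect linear relationship from the slide: Y = 2X + 3.
y_line = 2 * x + 3
r_line = float(np.corrcoef(x, y_line)[0, 1])

print(r_curve, r_line)
```

Because the x values are symmetric about zero, the positive and negative products cancel for the parabola, which is why r fails to detect the relationship.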

Summary • Scatterplots: look for form, direction, strength, outliers • Correlation: Numerical measure capturing direction and strength of a linear relationship • Sign of r: direction • Magnitude of r: strength • Always: Plot the data, look at other descriptive measures along with the correlation

Sections 2.3-2.4 Looking at Data-Relationships

2.3 Regression Line • Straight line that best describes how the response variable y changes when the explanatory variable x changes • The roles of Y and X are distinct and cannot be switched • Equation of straight line: y = a + b x • a is the intercept (where it crosses the y-axis) • b is the slope (rate) • Procedure • Calculate the best a and b for your data • Find the line that best fits your data • Use this line to predict y for different values of x

Example: Regression line for NEA data. We can predict the mean fat gain at 400 calories

Prediction and Extrapolation • Fitted line for NEA data: Pred. fat gain = 3.505 – 0.00344(NEA) • Prediction at 400 calories: Pred. fat gain = 3.505 – 0.00344*400 = 2.13 kg • So a person whose NEA increases by 400 calories while overeating has a predicted fat gain of 2.13 kilograms.

Prediction and Extrapolation • Warning: Extrapolation (predicting beyond the range of the data) is dangerous! • Prediction at 1500 calories: Pred. fat gain = 3.505 – 0.00344*1500 = -1.66 kg • So for an NEA increase of 1500 calories when overeating, the prediction is a loss of 1.66 kilograms of fat • Not trustworthy • Far outside the range of the data
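The two predictions above can be reproduced with a small helper that also flags extrapolation. This is a sketch, not part of the original slides: the function names are hypothetical, and the range bounds are an assumption built only from the two NEA values quoted in these slides (-57 and 580); they should be replaced by the actual minimum and maximum of the data.

```python
# Fitted coefficients quoted on the slides.
INTERCEPT = 3.505
SLOPE = -0.00344

def predict_fat_gain(nea):
    """Predicted fat gain (kg) for a given NEA increase (calories)."""
    return INTERCEPT + SLOPE * nea

def is_extrapolation(nea, x_min, x_max):
    """True when nea lies outside the observed range [x_min, x_max]."""
    return nea < x_min or nea > x_max

# ASSUMPTION: placeholder bounds taken from NEA values mentioned in the slides,
# not the true range of the data set.
X_MIN, X_MAX = -57.0, 580.0

pred_400 = predict_fat_gain(400)
pred_1500 = predict_fat_gain(1500)

for nea in (400, 1500):
    flag = "extrapolation" if is_extrapolation(nea, X_MIN, X_MAX) else "within range"
    print(nea, predict_fat_gain(nea), flag)
```

Separating the range check from the prediction keeps the arithmetic visible while making it explicit that the line itself gives no warning when it is used outside the data.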

Least Squares Regression (LSR) Line • The line which makes the sum of squares of the vertical distances of the data points from the line as small as possible • y is the observed (actual) response • ŷ is the predicted response by using the line • Residuals • Error in prediction • y – ŷ
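To illustrate the least squares criterion, the sketch below fits a line to made-up data and confirms that nudging the intercept or slope only increases the sum of squared residuals. It assumes NumPy; the data and the helper `sum_squared_residuals` are hypothetical.

```python
import numpy as np

# Hypothetical data, for illustration only.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([1.2, 2.9, 5.1, 6.8, 9.2, 10.9])

def sum_squared_residuals(a, b):
    """Sum of squared vertical distances of the points from the line y = a + b*x."""
    residuals = y - (a + b * x)
    return float(np.sum(residuals ** 2))

# np.polyfit with degree 1 returns [slope, intercept] of the least squares line.
b_hat, a_hat = np.polyfit(x, y, 1)
best = sum_squared_residuals(a_hat, b_hat)

# Every nearby line tried here should do worse than the least squares line.
nudged = [
    sum_squared_residuals(a_hat + da, b_hat + db)
    for da in (-0.5, 0.0, 0.5)
    for db in (-0.2, 0.0, 0.2)
    if (da, db) != (0.0, 0.0)
]
print(best, min(nudged))
```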

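Formula for Least Squares Regression line • Given (explanatory x, response y) data with means $\bar{x}$ and $\bar{y}$, standard deviations $s_x$ and $s_y$, and correlation $r$: • Slope: $b = r \, s_y / s_x$ • Intercept: $a = \bar{y} - b \, \bar{x}$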

Using the formula: • Slope: b = r * s_y/s_x = -0.7786 * 1.1389/257.66 = -0.00344 • Intercept: a = (mean of y) – slope * (mean of x) = 2.388 – (-0.00344)*324.8 = 3.505 • Regression line: Predicted fat gain = 3.505 – 0.00344*cal, that is, ŷ = 3.505 – 0.00344x
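The arithmetic above can be reproduced from the summary statistics quoted on the slide. This is a minimal sketch; the variable names are hypothetical. Note that the slide rounds the slope before computing the intercept, so the sketch does the same to match it.

```python
# Summary statistics for the NEA data as quoted on the slide.
r = -0.7786       # correlation between NEA and fat gain
s_x = 257.66      # standard deviation of x (NEA, calories)
s_y = 1.1389      # standard deviation of y (fat gain, kg)
mean_x = 324.8
mean_y = 2.388

slope = r * s_y / s_x             # b = r * s_y / s_x
slope_rounded = round(slope, 5)   # the slide reports the slope to 5 decimals

# Intercept computed with the rounded slope, as on the slide.
intercept = mean_y - slope_rounded * mean_x

# Carrying the unrounded slope through gives a slightly different intercept
# (about 3.506), a rounding effect rather than an error.
intercept_unrounded = mean_y - slope * mean_x

print(slope_rounded, round(intercept, 3), round(intercept_unrounded, 3))
```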

Example: Predicted values and Residuals • Predicted fat gain for observation 2 (-57 cal.) ŷ2 = 3.505 – 0.00344*(-57) = 3.70108 kg • Observed fat gain: y2 = 3.0 kg • Residual or error in prediction = y2 - ŷ2 = 3.0 – 3.70108 = -0.70108 kg
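The same calculation for observation 2, written as a short sketch with hypothetical function names; the coefficients and the observed values are the ones quoted on the slides.

```python
INTERCEPT = 3.505
SLOPE = -0.00344

def predicted(x):
    """Predicted response on the fitted line for explanatory value x."""
    return INTERCEPT + SLOPE * x

def residual(y_observed, x):
    """Residual = observed y minus predicted y."""
    return y_observed - predicted(x)

x2, y2 = -57.0, 3.0          # observation 2: NEA change (cal), fat gain (kg)
y2_hat = predicted(x2)
e2 = residual(y2, x2)

print(y2_hat, e2)
```

A negative residual means the observed fat gain lies below the fitted line, so the line over-predicts for this subject.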

Residual practice • Residual is yi – ŷi • For NEA data observation 14 has NEA = x14 = 580 • Find the predicted value, ŷ14 • Find the residual, y14 - ŷ14

Properties of regression line • Cannot switch Y and X • Passes through the point (mean of x, mean of y) • Physical interpretation of the slope b: • with a one-unit increase in X, how much does Y change on average? • Example: NEA data: with a 1 calorie increase in NEA, fat gain changes by -0.00344 kg • How about a 100 calorie increase in NEA?

Properties (cont.) • Sign of slope (b) is sign of correlation (r) • captures the direction of linear association • Slope b is affected by scale change but not by a shift (adding or subtracting a constant from all data points) • Convert: X from months to years • Let’s say the slope is 5, when using months • What would the slope be if we used years for X instead? • If Y increases by 5 per month, it’ll increase by ? per year?
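The effect of a scale change versus a shift can be checked on made-up data. The sketch below assumes NumPy; the values are hypothetical, constructed so that Y rises by roughly 5 per month.

```python
import numpy as np

# Hypothetical data: X in months, Y rising by about 5 units per month.
x_months = np.array([0.0, 6.0, 12.0, 18.0, 24.0, 30.0, 36.0])
noise = np.array([1.0, -2.0, 0.5, 1.5, -1.0, 0.5, -0.5])
y = 5.0 * x_months + 2.0 + noise

# np.polyfit(..., 1) returns [slope, intercept].
b_months = np.polyfit(x_months, y, 1)[0]

# Scale change: express X in years instead of months.
b_years = np.polyfit(x_months / 12.0, y, 1)[0]

# Shift: add a constant to every X value.
b_shifted = np.polyfit(x_months + 100.0, y, 1)[0]

print(b_months, b_years, b_shifted)
```

Rescaling X by a factor of 1/12 multiplies the slope by 12, whereas shifting X changes only the intercept and leaves the slope as it was.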

Using software • SAS will compute the least squares regression line, but you have to know where to find the estimates in the output • Residuals and predicted values are also printed • SAS program: regression.doc • the regression procedure is proc reg • We will do a deeper analysis of regression in chapter 10

Correlation and Regression • In correlation, X and Y are interchangeable, NOT so in regression. • Slope (b) depends on correlation (r) • R²: Coefficient of Determination • Square of correlation • Fraction of variation in y explained by LSR line • Higher R² suggests better fit • Example: R² = 0.6062 for NEA data • This means that 60.62% of the variation in fat gain is explained by your fitted regression line with x = NEA.
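The link between R² and r can be verified directly. This sketch assumes NumPy and uses hypothetical data to compare "1 minus residual variation over total variation" with the squared correlation; the final lines square the NEA correlation quoted earlier in the slides (r = -0.7786).

```python
import numpy as np

# Hypothetical data, for illustration only.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
y = np.array([9.5, 8.1, 8.4, 6.2, 6.0, 4.4, 3.9, 2.2])

slope, intercept = np.polyfit(x, y, 1)
y_hat = intercept + slope * x

ss_total = float(np.sum((y - y.mean()) ** 2))  # total variation in y
ss_resid = float(np.sum((y - y_hat) ** 2))     # variation left unexplained by the line
r_squared = 1.0 - ss_resid / ss_total          # fraction explained

r = float(np.corrcoef(x, y)[0, 1])

# NEA data: squaring the correlation from the slides.
nea_r_squared = (-0.7786) ** 2

print(r_squared, r ** 2, round(nea_r_squared, 4))
```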

R²: another example • Explains the part of the variation of y which comes from the linear relationship between y and x, in this case between Height and Age • [Figure: two scatterplots. Less spread, tight fit: R² = 0.989. More scatter, more error in prediction: R² = 0.849]

2.4 Caveats about correlation & regression • Residuals can tell us whether we have a good fit • Residual = observed y - predicted y • Residual plot: plot of residuals vs x • Used to assess the fit of regression line • Residuals add up to zero and have a mean of zero • Thus, a fit is considered good if the plot shows a random spread of points about the zero line with no systematic pattern
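The zero-sum property of least squares residuals can be confirmed numerically. The sketch below assumes NumPy and uses hypothetical data; the residuals it computes are the values one would plot against x in a residual plot.

```python
import numpy as np

# Hypothetical data, for illustration only.
x = np.array([2.0, 4.0, 5.0, 7.0, 8.0, 10.0, 12.0])
y = np.array([3.1, 4.9, 6.2, 7.8, 9.1, 11.4, 12.6])

slope, intercept = np.polyfit(x, y, 1)   # least squares line
predicted = intercept + slope * x
residuals = y - predicted                # observed y minus predicted y

residual_sum = float(residuals.sum())
residual_mean = float(residuals.mean())

# Each (x, residual) pair is one point on the residual plot.
for xi, ei in zip(x, residuals):
    print(xi, round(float(ei), 3))
print(residual_sum, residual_mean)
```

Both the sum and the mean come out at zero up to floating-point rounding, so the horizontal line at zero is the natural reference in a residual plot.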

Residual plot • Scatterplot of residuals against explanatory variable • Helps assess the fit of regression line

Outliers and influential observations • Outlier: an observation that lies outside the pattern of the other observations • Y-outliers: large residual • X-outliers: often influential in regression • Influential points: deleting such a point changes your statistical analysis drastically • They pull the regression line towards themselves • Least squares regression is NOT robust to the presence of outliers

Example: Gesell data • r = 0.4819 • Subject 15: • Y-outlier • Far from line • High residual • Subject 18: • X-outlier • Close to line • Small residual

Example: Gesell data • r = 0.4819 • Drop 15: • r = 0.5684 • Drop 18: • r = 0.3837 • Both have some influence, but neither seems excessive
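The drop-one comparison above can be sketched as a leave-one-out calculation. The Gesell data are not reproduced in this transcript, so the example below uses made-up values with one deliberate x-outlier; the function name `r_without_each_point` is hypothetical, and NumPy is assumed.

```python
import numpy as np

def r_without_each_point(x, y):
    """Return the correlation recomputed with each observation dropped in turn."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    results = []
    for i in range(len(x)):
        keep = np.arange(len(x)) != i      # boolean mask excluding point i
        results.append(float(np.corrcoef(x[keep], y[keep])[0, 1]))
    return results

# Hypothetical data (NOT the Gesell data); the last point is an x-outlier.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 20.0])
y = np.array([2.0, 1.5, 3.5, 3.0, 5.0, 4.5, 12.0])

r_all = float(np.corrcoef(x, y)[0, 1])
r_dropped = r_without_each_point(x, y)

print("all points:", r_all)
for i, r_i in enumerate(r_dropped, start=1):
    print("drop subject", i, "->", r_i)
```

Comparing each leave-one-out value with the full-data r shows which observations move the result most, which is the comparison made for subjects 15 and 18.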

Causation • Association does not imply causation! • An association between x and y, even if it is very strong, is not itself good evidence that changes in x actually cause changes in y. • Causation: Variable X directly causes a change in Variable Y • Example: • X = plant food • Y = plant’s growth

Common Response • Other variables may affect the relationship between X and Y • Beware of lurking variables • Example: for children, • X = height • Y = Math Score • Z = Age

Confounding • Other variables may affect the relationship between X and Y • Can’t separate effects of X and Z on Y • Example: • X = number years of education • Y = income • Z = ??