ReverseTesting: An Efficient Framework to Select Amongst Classifiers under Sample Selection Bias

240 likes | 331 Views

ReverseTesting: An Efficient Framework to Select Amongst Classifiers under Sample Selection Bias. Wei Fan IBM T.J.Watson Ian Davidson SUNY Albany. Sampling process. Where Sample Selection Bias Comes From?. Universe of Examples: Joint probability distribution P(x,y) = P(y|x) P(x)

ReverseTesting: An Efficient Framework to Select Amongst Classifiers under Sample Selection Bias

E N D

Presentation Transcript

ReverseTesting: An Efficient Framework to Select Amongst Classifiers under Sample Selection Bias Wei Fan IBM T.J.Watson Ian Davidson SUNY Albany

Sampling process Where Sample Selection Bias Comes From? Universe of Examples: Joint probability distribution P(x,y) = P(y|x) P(x) DM models this universe Training Data Question: Is the training data a good sample of the universe? Algorithm x Model y

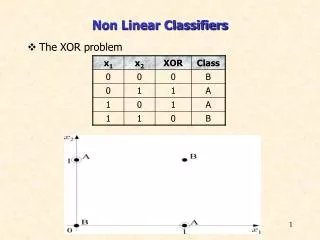

Universe of Examples Two classes: red and green red: f2>f1 green: f2<=f1

Unbiased & Biased Samples Biased Sample: less likely to sample points close to decision boundary Rather Unbiased Sample: evenly distributed

Trained from Unbiased Sample Trained from Biased Sample Single Decision Tree Error = 2.9% Error = 7.9%

Trained from Unbiased Sample Trained from Biased Sample Random Decision Tree Error = 3.1% Error = 4.1%

What can we observe? • Sample Selection Bias does affect modeling. • Some techniques are more sensitive to bias than others. • Models’ accuracy do get affected. • One important question: • How to choose amongst the best classification algorithm, given potentiallybiased dataset?

Ubiquitous Problem • Fundamental assumption: training data is an unbiased sample from the universe of examples. • Catalogue: • Purchase history is normally only based on each merchant’s own data • However, may not be representative of a population that may potentially purchase from the merchant.. • Drug Testing: • Fraud Detection: • Other examples (see Zadrozny’04 and Smith and Elkan’04)

Effect of Bias on Model Construction • Inductive model: • P(y|x,M): non-trivial dependency on the constructed model M. • Recall that P(y|x) is the true conditional probability “independent” from any modeling techniques. • In general, P(y|x,M) != P(y|x). • If the model M is the “correct model”, sample selection bias doesn’t affect learning. (Fan,Davidson,Zadrozny, and Yu’05) • Otherwise, it does. • Key Issues: • for real-world problems, we normally do not know the relationship between P(y|x,M) and P(y|x). • No exact idea about where the bias comes from.

Re-Capping Our focus • How to choose amongst the best classification algorithm, given potentiallybiased dataset? • No information on the exactly how the data is biased • No information on if the learners are affected by the bias. • No information on true model, P(y|x)

Failure of Traditional Methods • Given sample section bias, cross-validation based methods are a bad indicator of which methods are the most accurate. • Results come next.

ReverseTesting • Basic idea: how to use testing data’s feature vector x’s to help ordering different models even when their true labels y are not known.

MA MBA MAA Labeled test data MBB MB MAB A A DA B B DB Basic Procedure Train Test Train Estimate the performance of MA and MB based on the order of MAA, MAB, MBA and MBB

Rule • If “A’s labeled test data” can construct “more accurate models”for both algorithm A and B evaluated on labeled training data, then A is expected to be more accurate. • If MAA > MAB and MBA > MBB then choose A • Similarly, • If MAA < MAB and MBA < MBB then choose B • Otherwise, undecided.

Heuristics of ReverseTesting • Assume that: • A is more accurate than B • Use both A and B labeled data to train two models. • Using A’s data is likely to train a more accurate model than B’s data.

Why CV won’t work? Sparse Region

CV under-estimate in sparse regions • 1. Examples in sparse regions are under represented in CV’s averaged results. • Comparing those examples near the decision boundary • A model performs badly in these under sample regions are not accurately • estimated in cross-validation. • 2. CV could also create “biased folds” in these “sparse” regions. • Their estimate on biased region itself could also be unreliable. • 3. No information on how a model behaves on “feature vectors” not represented in • the training data.

Decision Boundary of one fold in 10-fold CV 1-fold Full Training Data

Desiderata in ReverseTesting • Not reduce the size of “sparse regions” as 10-fold CV does • Not use “training model” or something close to training model. • Utilize “feature vectors” not present in the training dataset.

C45 Decision Boundary C45 can never learn such a model from training data RDT labeled data C45 labeled data RDT Data C45 labeled data Training Data

RDT Decision Boundary C45 labeled data RDT labeled data

Model Comparison • “Feature vectors in testing data” change the “decision boundary. • The model constructed by algorithm A from A’s own labeled data != original “training model”. • A’s “inductive bias” is represented in B’s space. • “Use the changed boundary to include more emphasis on these sparse regions for both A and B re-trained on the two labeled test datasets.

Summary • Sample Selection bias is a ubiquitous problem for DM and ML in practice. • For most applications and modeling, techniques, sample selection bias does affect accuracy. • Given sample selection bias, CV based method is bad at estimating order. • ReverseTesting can do a much better job. • Future work: • not only orders but also estimates accuracy.