Machine Learning Hidden Markov Model

Machine Learning Hidden Markov Model. Darshana Pathak University of North Carolina at Chapel Hill Research Seminar – November 14, 2012. Disclaimer. All the information in the following slides assumes that “There is a GREAT human mind behind every computer program.”.

Presentation Transcript

Machine Learning: Hidden Markov Model. Darshana Pathak, University of North Carolina at Chapel Hill. Research Seminar – November 14, 2012

Disclaimer All the information in the following slides assumes that “There is a GREAT human mind behind every computer program.”

What is Machine Learning? • Making computers learn a given task from experience. • “Field of study that gives computers the ability to learn without being explicitly programmed.” (Arthur Samuel, 1959)

Why Machine Learning? • Human learning is terribly slow! (?) • 6 years to start school, then around 20 more years to become a cognitive/computer scientist... • Linear programming, calculus, Gaussian models, optimization techniques and so on…

Why Machine Learning? • There is no copy process in human beings; computers offer ‘one-trial learning’, because what one program has learned can be copied. • Computers can be programmed to learn. Both humans and computer programs make errors, but a program's error is predictable and can be measured.

Some more reasons… • Growing flood of electronic data: machines can digest huge amounts of data, which is not possible for humans. • Supporting computational power is also growing! • Data mining to help improve decisions • Study of medical records for diagnosis • Speech/handwriting/face recognition • Autonomous driving, robots

Important Distinction • Machine learning focuses on prediction, based on known properties learned from the training data. • Data mining focuses on the discovery of (previously) unknown properties in the data. • Example: Purchase history/behavior of a customer.

Hidden Markov Model

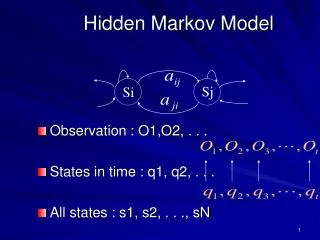

Hidden Markov Model - HMM • A Markov model with hidden states. • Markov model: a stochastic model that assumes the Markov property. • Stochastic model: a model of a system governed by a stochastic (random) process.

HMM – Stochastic model • Stochastic process vs. deterministic process. • A stochastic process is the probabilistic counterpart of a deterministic process. • Examples: • Games involving dice and cards, coin tosses • Speech, audio, video signals • Brownian motion • Medical data of patients • Typing behavior (related to my project)

HMM – Markov Model • Markov model: a stochastic model that assumes the Markov property. • Markov property: the memoryless property. • Future states of the process depend only upon the present state, • and not on the sequence of events that preceded it.

Funny example of Markov chain • 0 – Home; 4 – Destination • 1,2,3 corners;
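The figure for this slide is not in the transcript, so the following is only a sketch of one way such a chain could behave. It assumes a walker on positions 0 to 4 who stops on reaching home (0) or the destination (4) and otherwise moves one position left or right with equal probability. The function name `walk`, the 0.5 step probability, and the stopping rule are all assumptions, not taken from the slide. The point it illustrates is the Markov property: the next position is drawn using only the current position.

```python
import random

HOME, DESTINATION = 0, 4   # end points named on the slide; 1, 2, 3 are corners


def walk(start, rng, p_forward=0.5):
    """Simulate one walk until it reaches HOME or DESTINATION.

    Assumption: from any corner the walker steps +1 with probability
    p_forward and -1 otherwise. The next position depends only on the
    current position, never on the earlier path (Markov property).
    """
    position = start
    path = [position]
    while position not in (HOME, DESTINATION):
        step = 1 if rng.random() < p_forward else -1
        position += step
        path.append(position)
    return path


rng = random.Random(0)      # fixed seed so the run is repeatable
path = walk(2, rng)
print(path)
```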

Hidden Markov Model - HMM • A Markov model with hidden states – Partially observable system.

HMM • The Markov process is hidden; we can see only the sequence of output symbols (observations).

HMM: Simple Example • Determine the average annual temperature at a particular location over a series of years (in the past, before thermometers were invented). • 2 annual temperatures: Hot – H and Cold – C. • There is a correlation between the size of tree growth rings and temperature. • We can observe tree ring size. • Temperature is unobserved – hidden.

HMM – Formation of problem • 2 hidden states – H and C • 3 observed states – tree ring sizes: Small – S, Medium – M, Large – L. • The model is specified by the state transition probabilities, the observation matrix and the initial state distribution. • All matrices are row stochastic (every row sums to 1).
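As a minimal sketch, the model for this example can be written down and checked in Python. The numeric values are not stated on this slide. Eight of them are the factors that appear in the worked product for P(HHCC) later in the talk, and the remaining four are filled in on the assumption that every row sums to 1. They agree with the example in Mark Stamp's tutorial listed in the references. The names `STATES`, `SYMBOLS`, `PI`, `A`, `B` and `is_row_stochastic` are illustrative.

```python
import math

STATES = ("H", "C")          # hidden states: hot, cold
SYMBOLS = ("S", "M", "L")    # observations: small, medium, large ring

PI = [0.6, 0.4]              # initial state distribution over (H, C)

A = [[0.7, 0.3],             # transitions from H to (H, C)
     [0.4, 0.6]]             # transitions from C to (H, C)

B = [[0.1, 0.4, 0.5],        # P(S), P(M), P(L) given H
     [0.7, 0.2, 0.1]]        # P(S), P(M), P(L) given C


def is_row_stochastic(matrix, tol=1e-9):
    """True if every entry is a probability and every row sums to 1."""
    return all(
        all(0.0 <= p <= 1.0 for p in row)
        and math.isclose(sum(row), 1.0, abs_tol=tol)
        for row in matrix
    )


# The initial distribution is treated as a one-row matrix.
checks = {name: is_row_stochastic(m)
          for name, m in (("pi", [PI]), ("A", A), ("B", B))}
print(checks)
```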

HMM – Formation of problem • Consider a 4-year sequence. • We observe the series of tree rings S, M, S, L. Encoding S = 0, M = 1, L = 2 gives O = (0, 1, 0, 2). • We need to determine the temperature (H or C) for these 4 years, i.e. the most likely state sequence of the Markov process given the observations.

HMM – Formation of problem • $X = (x_0, x_1, x_2, x_3)$: hidden state sequence • $O = (O_0, O_1, O_2, O_3)$: observation sequence • $A$ = state transition probability matrix $(a_{ij})$ • $B$ = observation probability matrix $(b_j(k))$

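HMM – Formation of problem • $a_{ij} = P(\text{state } q_j \text{ at } t+1 \mid \text{state } q_i \text{ at } t)$ • $b_j(k) = P(\text{observation } k \text{ at } t \mid \text{state } q_j \text{ at } t)$ • Probability of state sequence $X$ together with the observations $O$: $P(X) = \pi_{x_0}\, b_{x_0}(O_0)\, a_{x_0,x_1}\, b_{x_1}(O_1)\, a_{x_1,x_2}\, b_{x_2}(O_2)\, a_{x_2,x_3}\, b_{x_3}(O_3)$ • $P(HHCC) = 0.6(0.1)(0.7)(0.4)(0.3)(0.7)(0.6)(0.1) \approx 0.000212$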
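The product above can be checked numerically. The sketch below evaluates it for HHCC and then, as a brute-force illustration only, for all 16 possible state sequences. The parameter values are the factors in the slide's product plus the entries implied by row stochasticity; the dictionary layout and the name `joint_probability` are illustrative choices.

```python
from itertools import product

STATES = "HC"
PI = {"H": 0.6, "C": 0.4}
A = {"H": {"H": 0.7, "C": 0.3},
     "C": {"H": 0.4, "C": 0.6}}
B = {"H": {"S": 0.1, "M": 0.4, "L": 0.5},
     "C": {"S": 0.7, "M": 0.2, "L": 0.1}}


def joint_probability(states, observations):
    """pi_{x0} b_{x0}(O0) times the product of a_{x(t-1),x(t)} b_{x(t)}(O_t)."""
    prob = PI[states[0]] * B[states[0]][observations[0]]
    for prev, cur, obs in zip(states, states[1:], observations[1:]):
        prob *= A[prev][cur] * B[cur][obs]
    return prob


OBS = "SMSL"                                   # observed ring sizes
p_hhcc = joint_probability("HHCC", OBS)

# Enumerate every length-4 state sequence; feasible only for tiny examples.
scores = {"".join(x): joint_probability(x, OBS)
          for x in product(STATES, repeat=len(OBS))}
best = max(scores, key=scores.get)

print(f"P(HHCC) = {p_hhcc:.6f}")               # P(HHCC) = 0.000212
print(f"most likely: {best} ({scores[best]:.6f})")  # most likely: CCCH (0.002822)
```

Enumeration grows as 2^T with the sequence length T, which is why dynamic programming is normally used for longer sequences.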

Applying HMM to Error Generation • Real-world data sets contain erroneous data. • Typing errors are very common: • Insertion • Deletion • Replacement • Is there any way to determine the most probable sequence or pattern of errors made by a typist?

Applying HMM to Error Generation • Examples: 1. BRIDGETT and BRIDGETTE 2. WILLIAMS and WILIAMS 3. LATONYA and LATOYA 4. FREEMAN and FREEMON
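To make the three error types concrete for these name pairs, here is a small sketch that aligns each pair and lists the non-matching operations. It uses `difflib.SequenceMatcher` from the Python standard library, which is a generic sequence-matching heuristic and not an HMM; it is swapped in here only to show what an insertion, deletion or replacement looks like. Treating the first name of each pair as the input and the second as the typed output is an assumption, and `edit_operations` is a hypothetical helper name.

```python
from difflib import SequenceMatcher

PAIRS = [
    ("BRIDGETT", "BRIDGETTE"),
    ("WILLIAMS", "WILIAMS"),
    ("LATONYA", "LATOYA"),
    ("FREEMAN", "FREEMON"),
]


def edit_operations(source, target):
    """Return (operation, source_chars, target_chars) for each mismatch."""
    matcher = SequenceMatcher(None, source, target, autojunk=False)
    return [
        (tag, source[i1:i2], target[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"          # keep only insert / delete / replace
    ]


results = {pair: edit_operations(*pair) for pair in PAIRS}
for (source, target), ops in results.items():
    print(f"{source} -> {target}: {ops}")
```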

Applying HMM to Error Generation • Sequence of characters/Alignment Problem

HMM & Error Generation • Hidden states: pointer positions • Observations: output character sequence • Problems: • Finding the path - Given an input character sequence, an output character sequence and an HMM, determine the most probable operation sequence. • Training - Given n pairs of input and output sequences, find the model that maximizes the probability of the output. • Likelihood - Given the input, the output and the model, determine the likelihood of the observed sequence.
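The slides do not give the parameters of the typing-error HMM, so the sketch below illustrates two of these problems on the tree-ring example instead: the forward algorithm for the likelihood problem and the Viterbi algorithm for the path-finding problem. The training problem is not shown. The parameter values are those implied by the worked product for P(HHCC) and row stochasticity; the function names are illustrative.

```python
STATES = "HC"
PI = {"H": 0.6, "C": 0.4}
A = {"H": {"H": 0.7, "C": 0.3},
     "C": {"H": 0.4, "C": 0.6}}
B = {"H": {"S": 0.1, "M": 0.4, "L": 0.5},
     "C": {"S": 0.7, "M": 0.2, "L": 0.1}}


def forward_likelihood(observations):
    """Likelihood problem: P(O | model), summed over all state paths."""
    alpha = {s: PI[s] * B[s][observations[0]] for s in STATES}
    for obs in observations[1:]:
        alpha = {s: sum(alpha[r] * A[r][s] for r in STATES) * B[s][obs]
                 for s in STATES}
    return sum(alpha.values())


def viterbi(observations):
    """Path problem: the single most probable state path and its probability."""
    delta = {s: PI[s] * B[s][observations[0]] for s in STATES}
    paths = {s: s for s in STATES}
    for obs in observations[1:]:
        new_delta, new_paths = {}, {}
        for s in STATES:
            # Best predecessor for ending in state s at this step.
            prev = max(STATES, key=lambda r: delta[r] * A[r][s])
            new_delta[s] = delta[prev] * A[prev][s] * B[s][obs]
            new_paths[s] = paths[prev] + s
        delta, paths = new_delta, new_paths
    last = max(STATES, key=delta.get)
    return paths[last], delta[last]


likelihood = forward_likelihood("SMSL")
path, path_prob = viterbi("SMSL")
print(f"P(O) = {likelihood:.6f}")
print(f"best path = {path}, probability = {path_prob:.6f}")
```

Both functions do work proportional to the sequence length times the square of the number of states, instead of enumerating every state sequence.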

References • Why should machines learn? – Herbert A. Simon, Department of Computer Science and Psychology, Carnegie-Mellon University, C.I.P. # 425 • http://en.wikipedia.org/wiki/Machine_learning • http://en.wikipedia.org/wiki/Hidden_Markov_model • A Revealing Introduction to Hidden Markov Models – Mark Stamp, Department of Computer Science, San Jose State University