Hidden Markov Model

Hidden Markov Model. Three Holy Questions of Markov. EHSAN KHODDAM MOHAMMADI. Markov Model (1/). Used when modeling a sequence of random variables that aren’t independent the value of each variable depends only on the previous element in the sequence

Hidden Markov Model

E N D

Presentation Transcript

Hidden Markov Model Three Holy Questions of Markov EHSAN KHODDAM MOHAMMADI

Markov Model (1/) • Used when modeling a sequence of random variables that • aren’t independent • the value of each variable depends only on the previous element in the sequence • In other words, in a Markov model, future elements are conditionally independent of the past elements given the present element

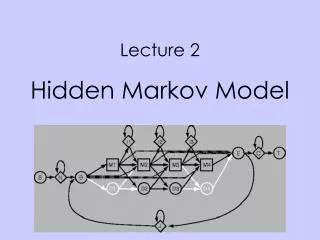

Probabilistic Automata • Actually HMM is an Automata • States • Probabailstic rules for state-transition • State-Emission • Formal definition will come later • In an HMM, you don’t know the state sequence that the model passes through, but only some probabilistic function of it • You Model the underlying Dynamics of a process which generating surface events, You don’t know what’s going on but you could predict it!

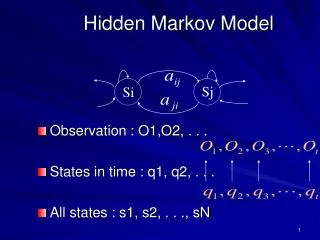

Markov model (2/) • X = (X1, …, XT): a sequence of random variables, Taking values in some finite set S = {s1, …, sN} or the state space • Markov properties • limited horizon: • P(Xt+1 = sk| X1,t) = P(Xt+1 = sk| Xt) • time invariant (stationary): • P(Xt+1 = Si| Xt = sj) = P(X2 = si| X1 = sj) • X: a Markov chain

Markov Model (3/) • Transition matrix, A = [ aij ] aij = P(Xt+1 = si| Xt = sj) • Initial state probabilities, Π = [ πi ] πi = P(X1 = si) • Probability of a Markov chain X = (X1, …, XT) P(X1, …, XT) = P(X1) P(X2|X1) … P(XT|XT-1) πX1aX2.X1 … aXTXT-1 = πX1Πt=1,T-1aXtXt-1

Crazy Soft Drink Machine 0.3 0.7 0.5 0.5

Three Holy Questions! • 1. Given a model = (A, B,П), how do we efficiently compute how likely a certain observation is, that is P(O|μ)? • 2. Given the observation sequence O and a model μ how do we choose a state sequence X1, …, XT, that best explains the observations? • 3. Given an observation sequence O, and a space of possible models found by varying the model parameters = (A, B, П), how do we find the model that best explains the observed data?

The Return of Crazy Machine • What is the probability of seeing the output sequence {lem, ice_t} if the machine always starts off in the cola preferring state?

Solutions to Holy Questions! • Forward Algorithm(D.P.) • Viterbi Algorithm (D.P.) • Baum-Welch Algorithm (EM optimization)

REFERENCE • “Foundations Of Statistical Natural Language Processing”, Ch 9, Manning & Schutze , 2000 • “Hidden Markov Models for Time Series - An Introduction Using R”, Zucchini, 2009 • “NLP 88 Class lectures” , CSE, Shiraz University, Dr. Fazli, 2009