Analysis of Variance


ANOVA • Probably the most popular analysis in psychology • Why? • Ease of implementation • Allows for analysis of several groups at once • Allows analysis of interactions of multiple independent variables.

Assumptions • As before, if the assumptions of the test are not met we may have problems in its interpretation • The usual suspects: • Independence of observations • Normally distributed variables of measure • Homogeneity of Variance

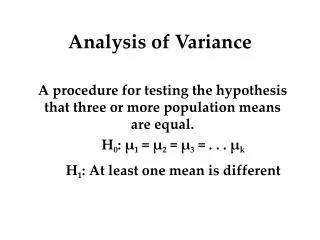

Null hypothesis • H0: μ1 = μ2 = … = μk • H1: not H0 • ANOVA will tell us whether the means differ in some way • As the assumptions specify that the shape and dispersion should be equal, the only way left for the groups to differ is in terms of means • However, we will have to do multiple comparisons to get the specifics

Why not multiple t-tests? • ANOVA allows for an easy way to look at interactions • The probability of a Type I error goes up with multiple tests • With α = .05 and three tests, the probability of making no Type I error is .95 × .95 × .95 = .857, so the probability of making at least one Type I error is 1 − .857 = .143 • In general, the probability of at least one Type I error = 1 − (1 − α)^c, where c is the number of tests • Note: some argue that each analysis could be taken as separate and independent of all others
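The arithmetic above generalizes to any number of tests. A minimal Python sketch, assuming the tests are independent (the function name is illustrative, not from the slides):

```python
def familywise_error(alpha: float, c: int) -> float:
    """Probability of at least one Type I error across c independent tests."""
    return 1 - (1 - alpha) ** c

# Three groups need three pairwise t-tests; ten tests are shown for contrast.
for c in (1, 3, 10):
    print(c, round(familywise_error(0.05, c), 3))
```

With three tests this reproduces the .143 figure worked out by hand.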

Review • With an independent samples t-test we looked to see if two groups had different means • With this formula we found the difference between the groups and divided by the variability within the groups by calculation of their respective variances which we then pooled together.

Comparison to t-test • In this sense with our t-statistic we have a ratio of the difference between groups to the variability within the groups (individual scores from group means) • Total variability comes from: • Differences between groups • Differences within groups • A similar approach is taken for ANOVA as well

ts and Fs • Note that the t-test is just a special case (2-group) of Analysis of Variance • t² = F
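The t² = F relationship can be checked numerically. The sketch below uses made-up scores for two groups and hypothetical helper names; it computes the pooled-variance t and the one-way F by hand rather than through a statistics package.

```python
from statistics import mean, variance

def pooled_t(a, b):
    """Independent-samples t using the pooled variance."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / (sp2 * (1 / na + 1 / nb)) ** 0.5

def one_way_f(*groups):
    """One-way ANOVA F ratio: MS treatment divided by MS error."""
    scores = [x for g in groups for x in g]
    grand = mean(scores)
    ss_treat = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_error = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_treat = len(groups) - 1
    df_error = len(scores) - len(groups)
    return (ss_treat / df_treat) / (ss_error / df_error)

a = [5, 7, 6, 9, 8]  # made-up scores, group A
b = [3, 4, 6, 5, 2]  # made-up scores, group B
t = pooled_t(a, b)
f = one_way_f(a, b)
print(round(t ** 2, 6), round(f, 6))  # the two values should agree
```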

Computation • Sums of squares • Treatment • Error • Total (Treatment + Error) • SSTotal = sum of squared deviations of the scores about the grand mean • SSTreat = sum of squared deviations of each group mean from the grand mean, weighted by the group n • SSerror = the rest, i.e. SSTotal – SSTreat • Equivalently, the sum of squared deviations of the scores about their own group mean

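SSTotal = $\sum_{j}\sum_{i}(X_{ij}-\bar{X}_{..})^2$ • SSTreat = $\sum_{j} n_j(\bar{X}_{j}-\bar{X}_{..})^2$ • SSerror = $\sum_{j}\sum_{i}(X_{ij}-\bar{X}_{j})^2$ • Here $X_{ij}$ is score i in group j, $\bar{X}_{j}$ is the mean of group j, $\bar{X}_{..}$ is the grand mean, and $n_j$ is the size of group j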

The more the sample means differ, the larger will be the between-samples variation

Example • Ratings for a reality TV show involving former WWF stars, people randomly abducted from the street, and a couple of orangutans • 1) 18-25 group: 7 4 6 8 6 6 2 9, Mean = 6, SD = 2.2 • 2) 25-45 group: 5 5 3 4 4 7 2 2, Mean = 4, SD = 1.7 • 3) 45+ group: 2 3 2 1 2 1 3 2, Mean = 2, SD = .76 • SSTreat = 8(6-4)² + 8(4-4)² + 8(2-4)² = 64 • SSTotal = (7-4)² + (4-4)² + (6-4)² … + (3-4)² + (2-4)² = 122 • SSerror = SSTotal – SSTreat = 58 • For SSerror we could also have added the variances (2.2² + 1.7² + .76²) and multiplied by n-1 (7), which gives 58 up to rounding.
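The sums of squares in this example can be reproduced directly from the raw ratings. A minimal sketch using only the Python standard library (variable names are illustrative):

```python
from statistics import mean

groups = {
    "18-25": [7, 4, 6, 8, 6, 6, 2, 9],
    "25-45": [5, 5, 3, 4, 4, 7, 2, 2],
    "45+":   [2, 3, 2, 1, 2, 1, 3, 2],
}

scores = [x for g in groups.values() for x in g]
grand_mean = mean(scores)

# Every score about the grand mean
ss_total = sum((x - grand_mean) ** 2 for x in scores)
# Each group mean about the grand mean, weighted by group size
ss_treat = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups.values())
# Every score about its own group mean
ss_error = sum((x - mean(g)) ** 2 for g in groups.values() for x in g)

print(ss_treat, ss_error, ss_total)
```

Because SSTreat weights each group by its own size, the same code works when the groups have unequal n.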

Now what? • Well now we just need df and we’re set to go. • dftreat = k – 1, where k is the number of groups (each group mean deviating from the grand mean) • dferror = N – k (loss of a degree of freedom for each group mean) • dftotal = N – 1 (loss of a degree of freedom from using the grand mean in the calculation), or just add the other two.

The F Ratio • The F ratio has a sampling distribution like the t did • That is, estimates of F vary depending on exactly which sample you draw • Again, this sampling distribution has known properties given the df that can be looked up in a table/provided by computer so we can test hypotheses

Next • Construct an ANOVA table: • MS refers to the Mean Squares which are found by dividing the SS by their respective df. Since both of the SS values are summed values they are influenced by the number of scores that were summed (for example, SStreat used the sum of only 3 different values (the group means) compared to SSerror, which used the sum of 24 different values). To eliminate this bias we can calculate the average sum of squares (known as the mean squares, MS). • Our F ratio (or F statistic) is the ratio of the two MS values.
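As a rough illustration, the table for the ratings example can be built from the sums of squares already computed (SStreat = 64, SSerror = 58, k = 3 groups, N = 24 scores). The layout below is only one possible arrangement of the table.

```python
ss = {"Treatment": 64, "Error": 58}
k, n_total = 3, 24

df = {"Treatment": k - 1, "Error": n_total - k}
ms = {source: ss[source] / df[source] for source in ss}  # mean squares
f_ratio = ms["Treatment"] / ms["Error"]

print(f"{'Source':<10}{'SS':>6}{'df':>4}{'MS':>8}{'F':>8}")
print(f"{'Treatment':<10}{ss['Treatment']:>6}{df['Treatment']:>4}"
      f"{ms['Treatment']:>8.2f}{f_ratio:>8.2f}")
print(f"{'Error':<10}{ss['Error']:>6}{df['Error']:>4}{ms['Error']:>8.2f}")
print(f"{'Total':<10}{sum(ss.values()):>6}{sum(df.values()):>4}")
```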

Finally • To look for statistical significance, check your table at 2 and 21 degrees of freedom at your chosen alpha level
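In place of a printed table, the critical value and p-value can be computed. The sketch below relies on a closed form that holds only when the numerator df is exactly 2, as it is here; for other designs a table or a statistics package is needed. The function names are hypothetical.

```python
def f_upper_tail_df1_2(f: float, df_error: int) -> float:
    """P(F >= f) for an F distribution with 2 and df_error degrees of freedom.

    Valid only for numerator df = 2, where the tail area has a closed form.
    """
    return (1 + 2 * f / df_error) ** (-df_error / 2)

def f_critical_df1_2(alpha: float, df_error: int) -> float:
    """Critical F at the given alpha for 2 and df_error degrees of freedom."""
    return df_error / 2 * (alpha ** (-2 / df_error) - 1)

df_error = 21
f_ratio = (64 / 2) / (58 / df_error)  # MS treatment / MS error from the example

f_crit = f_critical_df1_2(0.05, df_error)
p_value = f_upper_tail_df1_2(f_ratio, df_error)
print(round(f_crit, 2), round(f_ratio, 2), p_value)
```

The observed F is well beyond the critical value at alpha = .05, so the null hypothesis is rejected.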

Interpretation • There is some statistically significant difference among the group means. • F is the ratio of the variation explained by the model to the variation explained by nonsystematic factors, i.e. the experimental effect relative to individual differences in performance. • If F < 1, it must represent a non-significant effect. • An F-ratio less than 1 means that MSe is greater than MSt, which in real terms means that there is more nonsystematic than systematic variance. • This is why you will sometimes see just F < 1 reported

F-ratio and p-value • Between Groups SS: effect of the experimental manipulation plus error • Within Groups SS: error • The F-ratio is essentially the ratio of the two, after allowance has been made for n (number of respondents, number of experimental conditions) • The greater the effect of the experimental manipulation, the larger F will be, all else being equal • The p-value is the probability of obtaining an F-ratio as large as or larger than the one observed through sampling error alone, assuming the null hypothesis is true • As always, p(D|H0)

Interpretation • So they are different in some fashion, what else do we know? • Nada. • This is the limit of the Analysis of Variance at this point. All that can be said is that there is some difference among the means of some kind. The details require further analyses which will be covered later.

Unequal n • Want equal ns if at all possible • If not, SStreat has to weight each group mean by its own n; the formula as presented before already does this, so it holds for both scenarios. • The more discrepant the ns are, the more trouble we may have generalizing the results, especially if there are violations of our assumptions. • Minor differences (you know what those are, right?) are not going to be a big deal.

Violations of assumptions • When we violate our assumption of homogeneity of variance other options become available. • Levene’s is the standard test of this for ANOVA as well though there are others. • Levene’s is considered to be conservative, and so even if close to p = .05 you should probably go with a corrective measure. • Especially be concerned with unequal n
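The slides do not show how Levene's statistic is computed. In its original mean-based form it is a one-way ANOVA F on the absolute deviations of each score from its group mean, which can be sketched as follows (helper names are illustrative, and the data are the ratings from the earlier example):

```python
from statistics import mean

def one_way_f(groups):
    """One-way ANOVA F ratio for a list of groups."""
    scores = [x for g in groups for x in g]
    grand = mean(scores)
    ss_treat = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_error = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_treat = len(groups) - 1
    df_error = len(scores) - len(groups)
    return (ss_treat / df_treat) / (ss_error / df_error)

def levene_w(groups):
    """Levene's W: ANOVA F on absolute deviations from each group mean."""
    abs_dev = [[abs(x - mean(g)) for x in g] for g in groups]
    return one_way_f(abs_dev)

ratings = [
    [7, 4, 6, 8, 6, 6, 2, 9],
    [5, 5, 3, 4, 4, 7, 2, 2],
    [2, 3, 2, 1, 2, 1, 3, 2],
]
w = levene_w(ratings)
print(round(w, 3))  # compare against F with k - 1 and N - k df
```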

HoV violation • Options: • Kruskal-Wallis • Welch procedure • Brown-Forsythe • See Tomarken & Serlin (1986) for a comparison of these measures, which can be used when the HoV assumption is violated

Welch’s F and B-F • When you report the df, round up and add a squiggly (~) • According to Tomarken & Serlin, Welch’s is probably more powerful in most heterogeneity of variance situations • It depends on the situation, but Welch’s tends to perform better generally
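For reference, a sketch of the usual textbook form of Welch's F, in which each group mean is weighted by n divided by the group variance. The function name is hypothetical and the data are the ratings from the earlier example; in practice a statistics package would supply this along with the p-value.

```python
from statistics import mean, variance

def welch_anova(groups):
    """Return (F, df1, df2) for Welch's heteroscedastic one-way ANOVA."""
    k = len(groups)
    n = [len(g) for g in groups]
    m = [mean(g) for g in groups]
    w = [nj / variance(g) for nj, g in zip(n, groups)]  # precision weights
    w_sum = sum(w)
    grand = sum(wj * mj for wj, mj in zip(w, m)) / w_sum  # weighted grand mean
    numer = sum(wj * (mj - grand) ** 2 for wj, mj in zip(w, m)) / (k - 1)
    lam = sum((1 - wj / w_sum) ** 2 / (nj - 1) for wj, nj in zip(w, n))
    denom = 1 + 2 * (k - 2) / (k ** 2 - 1) * lam
    df2 = (k ** 2 - 1) / (3 * lam)  # usually not a whole number
    return numer / denom, k - 1, df2

ratings = [
    [7, 4, 6, 8, 6, 6, 2, 9],
    [5, 5, 3, 4, 4, 7, 2, 2],
    [2, 3, 2, 1, 2, 1, 3, 2],
]
f_welch, df1, df2 = welch_anova(ratings)
print(round(f_welch, 2), df1, round(df2, 2))
```

The error df comes out fractional, which is why it gets rounded when reported.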

Example output violation of HoV Regular F

Violation of normality • When normality is a concern, we can transform the data or use nonparametric techniques (e.g. bootstrapped estimates) • The Kruskal-Wallis we just looked at is a nonparametric one-way ANOVA on the ranked values of the dependent variable
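The link between Kruskal-Wallis and ANOVA on ranks can be made concrete. In the sketch below, the scores are ranked across all groups (ties get the average rank) and the tie-corrected H is computed as (N − 1) × SSbetween / SStotal on those ranks. Function names are illustrative.

```python
def average_ranks(values):
    """Rank values from 1..N, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg
        i = j + 1
    return ranks

def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H via sums of squares on the ranks."""
    flat = [x for g in groups for x in g]
    ranks = average_ranks(flat)
    n_total = len(flat)
    grand = (n_total + 1) / 2  # mean of all ranks
    ss_total = sum((r - grand) ** 2 for r in ranks)
    ss_between, start = 0.0, 0
    for g in groups:
        r = ranks[start:start + len(g)]
        ss_between += len(g) * (sum(r) / len(g) - grand) ** 2
        start += len(g)
    return (n_total - 1) * ss_between / ss_total

ratings = [
    [7, 4, 6, 8, 6, 6, 2, 9],
    [5, 5, 3, 4, 4, 7, 2, 2],
    [2, 3, 2, 1, 2, 1, 3, 2],
]
h = kruskal_wallis_h(ratings)
print(round(h, 3))  # compare against chi-square with k - 1 df
```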

Gist • Approach One-way ANOVA much as you would a t-test. • Same assumptions and interpretation taken to 3 or more groups • One would report similar info: • Effect sizes, confidence intervals, graphs of means etc. • With ANOVA one must run planned comparisons or post hoc analyses (next time) to get to the good stuff as far as interpretation • Turn to robust options in the face of yucky data and/or violations of assumptions • E.g. using trimmed means