Neural Networks Chapter 9

360 likes | 583 Views

Neural Networks Chapter 9. Joost N. Kok Universiteit Leiden. Unsupervised Competitive Learning. Competitive learning Winner-take-all units Cluster/Categorize input data Feature mapping. 1. 2. 3. Unsupervised Competitive Learning. winner. output. input (n-dimensional).

Neural Networks Chapter 9

E N D

Presentation Transcript

Neural NetworksChapter 9 Joost N. Kok Universiteit Leiden

Unsupervised Competitive Learning • Competitive learning • Winner-take-all units • Cluster/Categorize input data • Feature mapping

1 2 3 Unsupervised Competitive Learning

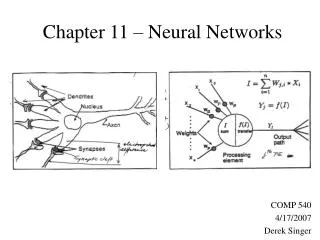

winner output input (n-dimensional) Unsupervised Competitive Learning

Simple Competitive Learning • Winner: • Lateral inhibition

Simple Competitive Learning • Update weights for winning neuron

Simple Competitive Learning • Update rule for all neurons:

Graph Bipartioning • Patterns: edges = dipole stimuli • Two output units

Simple Competitive Learning • Dead Unit Problem Solutions • Initialize weights tot samples from the input • Leaky learning: also update the weights of the losers (but with a smaller h) • Arrange neurons in a geometrical way: update also neighbors • Turn on input patterns gradually • Conscience mechanism • Add noise to input patterns

Vector Quantization • Classes are represented by prototype vectors • Voronoi tessellation

Learning Vector Quantization • Labelled sample data • Update rule depends on current classification

Adaptive Resonance Theory • Stability-Plasticity Dilemma • Supply of neurons, only use them if needed • Notion of “sufficiently similar”

Adaptive Resonance Theory • Start with all weights = 1 • Enable all output units • Find winner among enabled units • Test match • Update weights

Feature Mapping • Geometrical arrangement of output units • Nearby outputs correspond to nearby input patterns • Feature Map • Topology preserving map

i i w w After learning Before learning Self Organizing Map • Determine the winner (the neuron of which the weight vector has the smallest distance to the input vector) • Move the weight vector w of the winning neuron towards the input i

Self Organizing Map • Impose a topological order onto the competitive neurons (e.g., rectangular map) • Let neighbors of the winner share the “prize” (The “postcode lottery” principle) • After learning, neurons with similar weights tend to cluster on the map

Self Organizing Map • Input: uniformly randomly distributed points • Output: Map of 202 neurons • Training • Starting with a large learning rate and neighborhood size, both are gradually decreased to facilitate convergence

Feature Mapping • Retinotopic Map • Somatosensory Map • Tonotopic Map

Hybrid Learning Schemes supervised unsupervised

Counterpropagation • First layer uses standard competitive learning • Second (output) layer is trained using delta rule

Radial Basis Functions • First layer with normalized Gaussian activation functions