Understanding Response Patterns to Initial Smoking Experience: A Cross-sectional LCA Study

Identify patterns of first responses to cigarettes, examine relationships with current smoking behavior, and avoid recall bias. Analyze data, conduct Latent Class Analysis, fit models, and determine optimal number of classes. Utilize polychoric correlation, Bayes' rule, and model statistics for precision. Illustrate with Mplus procedure example and categorical data summaries.


Cross-sectional LCA Patterns of first response to cigarettes

First smoking experience • Have you ever tried a cigarette (including roll-ups), even a puff? • How old were you when you first tried a cigarette? • When you FIRST ever tried a cigarette can you remember how it made you feel? (tick as many as you want) • It made me cough • I felt ill • It tasted awful • I liked it • It made me feel dizzy

Aim • To categorise the subjects based on their pattern of responses • To assess the relationship between first-response and current smoking behaviour • To try not to think too much about the possibility of recall bias

Step 1 Look at your data!!!

Examine your data structure • LCA converts a large number of response patterns into a small number of ‘homogeneous’ groups • If the responses in your data are fairly mutually exclusive then there’s no point doing LCA • Don’t just dive in

How many items endorsed?

    numresp |      Freq.     Percent        Cum.
------------+-----------------------------------
          0 |         69        2.75        2.75
          1 |      1,597       63.70       66.45
          2 |        569       22.70       89.15
          3 |        202        8.06       97.21
          4 |         68        2.71       99.92
          5 |          2        0.08      100.00
------------+-----------------------------------
      Total |      2,507      100.00

Examine pattern frequency

     +---------------------------------------+
     | cough  ill  taste  liked  dizzy   num |
     |---------------------------------------|
  1. |     0    0      1      0      0   468 |
  2. |     0    0      0      1      0   452 |
  3. |     1    0      0      0      0   449 |
  4. |     1    0      1      0      0   279 |
  5. |     0    0      0      0      1   194 |
     |---------------------------------------|
  6. |     1    1      1      0      0    94 |
  7. |     1    0      0      1      0    87 |
  8. |     1    0      0      0      1    76 |
  9. |     0    0      0      0      0    69 |
 10. |     1    1      1      0      1    59 |
     |---------------------------------------|
 11. |     0    0      0      1      1    56 |
 12. |     1    0      1      0      1    47 |
 13. |     1    0      0      1      1    35 |
 14. |     0    1      0      0      0    34 |
 15. |     0    0      1      0      1    27 |
     |---------------------------------------|
 16. |     0    1      1      0      0    17 |
 17. |     0    0      1      1      0    13 |
 18. |     1    1      0      0      1     9 |
 19. |     1    1      0      0      0     8 |
 20. |     0    1      1      0      1     7 |
     |---------------------------------------|
 21. |     1    0      1      1      1     7 |
 22. |     1    0      1      1      0     6 |
 23. |     0    1      0      0      1     5 |
 24. |     1    1      1      1      1     2 |
 25. |     0    1      0      1      1     2 |
     |---------------------------------------|
 26. |     0    1      0      1      0     1 |
 27. |     1    1      1      1      0     1 |
 28. |     1    1      0      1      1     1 |
 29. |     0    0      1      1      1     1 |
 30. |     1    1      0      1      0     1 |
     +---------------------------------------+
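As a cross-check on the two tables above, the short Python sketch below rebuilds the "how many items endorsed" counts and the item prevalences from the pattern frequencies. The counts are copied from the pattern table; the names `pattern_counts`, `numresp` and `prevalence` are illustrative and not part of the original analysis, which was done in Stata.

```python
from collections import Counter

# Pattern frequencies copied from the table above.
# Key = responses to (cough, ill, taste, liked, dizzy); value = number of subjects.
pattern_counts = {
    "00100": 468, "00010": 452, "10000": 449, "10100": 279, "00001": 194,
    "11100": 94, "10010": 87, "10001": 76, "00000": 69, "11101": 59,
    "00011": 56, "10101": 47, "10011": 35, "01000": 34, "00101": 27,
    "01100": 17, "00110": 13, "11001": 9, "11000": 8, "01101": 7,
    "10111": 7, "10110": 6, "01001": 5, "11111": 2, "01011": 2,
    "01010": 1, "11110": 1, "11011": 1, "00111": 1, "11010": 1,
}
items = ["cough", "ill", "taste", "liked", "dizzy"]

n_total = sum(pattern_counts.values())

# Number of items endorsed: add up subjects by the count of 1s in each pattern.
numresp = Counter()
for pattern, n in pattern_counts.items():
    numresp[pattern.count("1")] += n

# Item prevalence: share of subjects whose pattern has a 1 in that position.
prevalence = {
    item: sum(n for p, n in pattern_counts.items() if p[i] == "1") / n_total
    for i, item in enumerate(items)
}

print(n_total)
print(sorted(numresp.items()))
print({item: round(p, 3) for item, p in prevalence.items()})
```

If most subjects had endorsed exactly one item and nothing else, there would be little co-occurrence for a latent class model to explain, which is the point of looking before fitting.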

Examine correlation structure Polychoric correlation matrix

Step 2 Now you can fit a latent class model

Latent Class models • Work with observations at the pattern level rather than the individual (person) level

     +---------------------------------------+
     | cough  ill  taste  liked  dizzy   num |
     |---------------------------------------|
  1. |     0    0      1      0      0   468 |
  2. |     0    0      0      1      0   452 |
  3. |     1    0      0      0      0   449 |
  4. |     1    0      1      0      0   279 |
  5. |     0    0      0      0      1   194 |
     |---------------------------------------|

Latent Class models • For a given number of latent classes, using application of Bayes’ rule plus an assumption of conditional independence one can calculate the probability that each pattern should fall into each class • Derive the likelihood of the obtained data under each model (i.e. assuming different numbers of classes) and use this plus other fit statistics to determine optimal model i.e. optimal number of classes

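Latent Class models • Bayes’ rule: P(class = i | pattern) = P(pattern | class = i)*P(class = i) / Σj [P(pattern | class = j)*P(class = j)] • Conditional independence: P(pattern = '01' | class = i) = P(pat(1) = '0' | class = i)*P(pat(2) = '1' | class = i)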
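The two ingredients on this slide can be put together in a few lines. The sketch below is a toy illustration only: the class sizes and within-class item probabilities are made-up numbers, and the function names are hypothetical. It multiplies the item probabilities within a class (conditional independence) and then applies Bayes' rule to get the probability that a pattern belongs to each class.

```python
from math import prod

def pattern_likelihood(pattern, item_probs):
    """P(pattern | class), assuming items are independent within the class.

    item_probs[j] is the within-class probability of endorsing item j.
    """
    return prod(p if r == "1" else 1.0 - p for r, p in zip(pattern, item_probs))

def posterior(pattern, class_priors, class_item_probs):
    """P(class | pattern) for every class, via Bayes' rule."""
    joint = [
        prior * pattern_likelihood(pattern, probs)
        for prior, probs in zip(class_priors, class_item_probs)
    ]
    total = sum(joint)  # P(pattern), summed over classes
    return [j / total for j in joint]

# Hypothetical 2-class, 2-item example (values invented for illustration).
priors = [0.6, 0.4]
item_probs = [
    [0.9, 0.2],  # class 1: P(item 1 = 1), P(item 2 = 1)
    [0.1, 0.7],  # class 2
]
post = posterior("01", priors, item_probs)
print(post)
```

In a real analysis the priors and item probabilities are the estimated model parameters, and the posteriors are what Mplus saves as class probabilities.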

How many classes can I have? ~ degrees of freedom • 32 possible patterns, i.e. 31 df available (the pattern proportions must sum to 1) • Each additional class requires • 5 df to estimate the prevalence of each of the 5 items within that class (i.e. 5 thresholds) • 1 df for an additional cut of the latent variable defining the class distribution • Hence a 5-class model uses up 5*5 + 4 = 29 degrees of freedom, leaving 2 df to test the model
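The bookkeeping can be written as a small helper. This is a sketch that assumes the usual convention that 2^k response patterns supply 2^k - 1 degrees of freedom; that convention agrees with the Mplus output shown later in this transcript (17 free parameters and 14 df for the 3-class model). The function name is illustrative.

```python
def lca_degrees_of_freedom(n_items, n_classes):
    """Return (free parameters, residual df) for an LCA with binary items.

    Assumes every item has its own threshold in every class and that the
    data supply 2**n_items - 1 independent pattern proportions.
    """
    n_patterns = 2 ** n_items
    n_params = n_classes * n_items + (n_classes - 1)
    return n_params, (n_patterns - 1) - n_params

for k in range(1, 7):
    print(k, lca_degrees_of_freedom(5, k))
```

A negative residual df means the model is not identified from the pattern frequencies alone, which is why a 6-class model cannot be fitted to five binary items.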

Standard thresholds • Mplus thinks of binary variables as being a dichotomised continuous latent variable • The point at which a continuous N(0,1) variable must be cut to create a binary variable is called a threshold • A binary variable with 50% cases corresponds to a threshold of zero • A binary variable with 2.5% cases corresponds to a threshold of 1.96
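The two examples on this slide can be checked with the standard library. The sketch below assumes the threshold is the cut point on a standard normal variable above which a case is counted as endorsing the item; `probit_threshold` is an illustrative name.

```python
from statistics import NormalDist

def probit_threshold(prevalence):
    """Cut point on N(0,1) that leaves `prevalence` of the area above it."""
    return NormalDist().inv_cdf(1.0 - prevalence)

print(round(probit_threshold(0.50), 3))
print(round(probit_threshold(0.025), 3))
```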

Standard thresholds Figure from Uebersax webpage

Data:
  File is "..\smoking_experience.dta.dat";
  listwise is on;
Variable:
  Names are sex cough ill taste liked dizzy numresp less_12 less_13;
  categorical are cough ill taste liked dizzy;
  usevariables are cough ill taste liked dizzy;
  Missing are all (-9999);
  classes = c(3);
Analysis:
  proc = 2 (starts);
  type = mixture;
  starts = 1000 500;
  stiterations = 20;
Output:
  tech10;

What you’re actually doing

model:
  %OVERALL%
  [c#1 c#2];      ! Defines the latent class variable
  %c#1%
  [cough$1];      ! Defines the within class thresholds,
  [ill$1];        ! i.e. the prevalence of the endorsement of each item
  [taste$1];
  [liked$1];
  [dizzy$1];
  ! + five more threshold parameters for %c#2% and %c#3%

SUMMARY OF CATEGORICAL DATA PROPORTIONS

COUGH
  Category 1    0.537
  Category 2    0.463
ILL
  Category 1    0.904
  Category 2    0.096
TASTE
  Category 1    0.590
  Category 2    0.410
LIKED
  Category 1    0.735
  Category 2    0.265
DIZZY
  Category 1    0.789
  Category 2    0.211

RANDOM STARTS RESULTS RANKED FROM THE BEST TO THE WORST LOGLIKELIHOOD VALUES

Final stage loglikelihood values at local maxima, seeds, and initial stage start numbers:

  -6343.937  685561  9973
  -6343.937  172907  9395
  -6343.937  497824  9464
  -6343.937  770684  7725
  -6343.937  584663  5193
  -6343.937  872295  2899
  -6343.937  116150  3570
  -6343.937  271339  4768
  -6343.937  472383  9650
  -6343.937  707126  3683
  Etc.

How many random starts? • Depends on • Sample size • Complexity of model • Number of manifest variables • Number of classes • Aim to consistently find the model with the highest loglikelihood (i.e. the lowest -2LL) within each run

Success

Loglikelihood values at local maxima, seeds, and initial stage start numbers:

  -10148.718  987174  1689
  -10148.718  777300  2522
  -10148.718  406118  3827
  -10148.718   51296  3485
  -10148.718  997836  1208
  -10148.718  119680  4434
  -10148.718  338892  1432
  -10148.718  765744  4617
  -10148.718  636396   168
  -10148.718  189568  3651
  -10148.718  469158  1145
  -10148.718   90078  4008
  -10148.718  373592  4396
  -10148.718   73484  4058
  -10148.718  154192  3972
  -10148.718  203018  3813
  -10148.718  785278  1603
  -10148.718  235356  2878
  -10148.718  681680  3557
  -10148.718   92764  2064

Not there yet

Loglikelihood values at local maxima, seeds, and initial stage start numbers:

  -10153.627   23688  4596
  -10153.678  150818  1050
  -10154.388  584226  4481
  -10155.122  735928   916
  -10155.373  309852  2802
  -10155.437  925994  1386
  -10155.482  370560  3292
  -10155.482  662718   460
  -10155.630  320864  2078
  -10155.833  873488  2965
  -10156.017  212934   568
  -10156.231   98352  3636
  -10156.339   12814  4104
  -10156.497  557806  4321
  -10156.644  134830   780
  -10156.741   80226  3041
  -10156.793  276392  2927
  -10156.819  304762  4712
  -10156.950  468300  4176
  -10157.011   83306  2432
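The difference between the two listings can be reduced to one number: how many final-stage starts reproduce the best loglikelihood. The sketch below is an illustrative helper, not an Mplus feature; the tolerance of 0.001 is an assumption matching the three decimals Mplus prints, and the second list holds the first ten values of the "not there yet" run.

```python
def best_ll_replications(logliks, tol=1e-3):
    """Return the best (highest) loglikelihood and how many starts reached it."""
    best = max(logliks)
    n_hits = sum(1 for ll in logliks if best - ll <= tol)
    return best, n_hits

# All twenty final-stage values agree: the best solution is well replicated.
replicated = [-10148.718] * 20

# Every start ends somewhere different: increase the number of random starts.
not_replicated = [
    -10153.627, -10153.678, -10154.388, -10155.122, -10155.373,
    -10155.437, -10155.482, -10155.482, -10155.630, -10155.833,
]

print(best_ll_replications(replicated))
print(best_ll_replications(not_replicated))
```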

Scary “warnings”

IN THE OPTIMIZATION, ONE OR MORE LOGIT THRESHOLDS APPROACHED AND WERE SET AT THE EXTREME VALUES. EXTREME VALUES ARE -15.000 AND 15.000.
THE FOLLOWING THRESHOLDS WERE SET AT THESE VALUES:
* THRESHOLD 1 OF CLASS INDICATOR TASTE FOR CLASS 3 AT ITERATION 11
* THRESHOLD 1 OF CLASS INDICATOR DIZZY FOR CLASS 3 AT ITERATION 12
* THRESHOLD 1 OF CLASS INDICATOR ILL FOR CLASS 3 AT ITERATION 16
* THRESHOLD 1 OF CLASS INDICATOR LIKED FOR CLASS 1 AT ITERATION 34
* THRESHOLD 1 OF CLASS INDICATOR TASTE FOR CLASS 1 AT ITERATION 93

WARNING: WHEN ESTIMATING A MODEL WITH MORE THAN TWO CLASSES, IT MAY BE NECESSARY TO INCREASE THE NUMBER OF RANDOM STARTS USING THE STARTS OPTION TO AVOID LOCAL MAXIMA.

THE MODEL ESTIMATION TERMINATED NORMALLY

TESTS OF MODEL FIT

Loglikelihood
  H0 Value                          -6343.937
  H0 Scaling Correction Factor          1.006
    for MLR

Information Criteria
  Number of Free Parameters                17
  Akaike (AIC)                      12721.873
  Bayesian (BIC)                    12820.930
  Sample-Size Adjusted BIC          12766.916
    (n* = (n + 2) / 24)
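The information criteria in this output follow directly from the loglikelihood, the number of free parameters and the sample size. The sketch below uses the standard definitions together with the n* adjustment printed in the output, and takes n = 2,507 from the frequency table earlier in the transcript; the function name is illustrative.

```python
from math import log

def information_criteria(loglik, n_params, n):
    """Return (AIC, BIC, sample-size adjusted BIC)."""
    deviance = -2.0 * loglik
    aic = deviance + 2 * n_params
    bic = deviance + n_params * log(n)
    abic = deviance + n_params * log((n + 2) / 24)  # n* = (n + 2) / 24
    return aic, bic, abic

aic, bic, abic = information_criteria(loglik=-6343.937, n_params=17, n=2507)
print(round(aic, 3), round(bic, 3), round(abic, 3))
```

Because the printed loglikelihood is rounded to three decimals, the results can differ from the Mplus figures in the last digit.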

Chi-Square Test of Model Fit for the Binary and Ordered Categorical (Ordinal) Outcomes

Pearson Chi-Square
  Value                  623.040
  Degrees of Freedom          14
  P-Value                 0.0000

Likelihood Ratio Chi-Square
  Value                  563.869
  Degrees of Freedom          14
  P-Value                 0.0000

FINAL CLASS COUNTS AND PROPORTIONS FOR THE LATENT CLASSES BASED ON THE ESTIMATED MODEL

Latent Classes
  1     600.41143    0.23949
  2    1517.83320    0.60544
  3     388.75538    0.15507

CLASSIFICATION OF INDIVIDUALS BASED ON THEIR MOST LIKELY LATENT CLASS MEMBERSHIP

Latent Classes
  1     630    0.25130
  2    1396    0.55684
  3     481    0.19186

Entropy (fuzziness)

CLASSIFICATION QUALITY
  Entropy    0.832

Average Latent Class Probabilities for Most Likely Latent Class Membership (Row) by Latent Class (Column)

         1       2       3
  1   0.952   0.048   0.000
  2   0.000   0.979   0.021
  3   0.000   0.252   0.748
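For orientation, the sketch below computes a relative entropy statistic from individual posterior class probabilities, using the commonly cited formula E = 1 - Σi Σk (-p_ik ln p_ik) / (n ln K). That this matches the Mplus calculation exactly is an assumption here, and the example posteriors are invented; values near 1 mean crisp classification, values near 0 mean the classes are indistinguishable.

```python
from math import log

def relative_entropy(posteriors):
    """Relative entropy for a list of per-person posterior probability rows."""
    n = len(posteriors)
    k = len(posteriors[0])
    # Sum of -p * ln(p) over people and classes; terms with p = 0 contribute 0.
    total = -sum(p * log(p) for row in posteriors for p in row if p > 0)
    return 1.0 - total / (n * log(k))

# Hypothetical posteriors for four people in a 3-class model.
example = [
    [0.95, 0.05, 0.00],
    [0.00, 0.98, 0.02],
    [0.00, 0.25, 0.75],
    [0.10, 0.80, 0.10],
]
print(round(relative_entropy(example), 3))
```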

Model results

                                                Two-Tailed
                 Estimate     S.E.   Est./S.E.    P-Value
Latent Class 1
 Thresholds
    COUGH$1         1.604    0.133      12.103      0.000
    ILL$1           7.371    4.945       1.490      0.136
    TASTE$1        15.000    0.000     999.000    999.000
    LIKED$1       -15.000    0.000     999.000    999.000
    DIZZY$1         1.890    0.139      13.604      0.000

Categorical Latent Variables

                                                Two-Tailed
                 Estimate     S.E.   Est./S.E.    P-Value
 Means
    C#1             0.435    0.124       3.500      0.000
    C#2             1.362    0.135      10.058      0.000
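The class means are multinomial logits relative to the last class, so they can be turned back into class proportions. The sketch below assumes that parameterisation (last class as reference with a logit of zero); the function name is illustrative.

```python
from math import exp

def class_proportions(means):
    """Convert class means (logits vs. the last class) into proportions."""
    logits = list(means) + [0.0]  # reference class has logit 0
    weights = [exp(v) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]

props = class_proportions([0.435, 1.362])
print([round(p, 4) for p in props])
```

Up to rounding of the printed estimates, the result agrees with the model-based class proportions reported for the 3-class model (0.23949, 0.60544, 0.15507).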

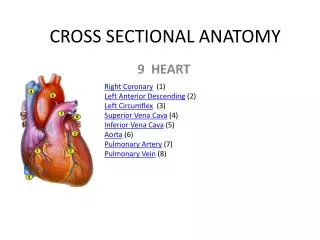

RESULTS IN PROBABILITY SCALE

                                                Two-Tailed
                 Estimate     S.E.   Est./S.E.    P-Value
Latent Class 1
 COUGH
    Category 1      0.833    0.018      45.072      0.000
    Category 2      0.167    0.018       9.059      0.000
 ILL
    Category 1      0.999    0.003     321.448      0.000
    Category 2      0.001    0.003       0.202      0.840
 TASTE
    Category 1      1.000    0.000       0.000      1.000
    Category 2      0.000    0.000       0.000      1.000
 LIKED
    Category 1      0.000    0.000       0.000      1.000
    Category 2      1.000    0.000       0.000      1.000
 DIZZY
    Category 1      0.869    0.016      54.848      0.000
    Category 2      0.131    0.016       8.284      0.000
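The probability-scale results are a transformation of the class 1 thresholds shown in the model results. The sketch below assumes the logit link implied by the "LOGIT THRESHOLDS" warning, with P(Category 1) = 1 / (1 + exp(-threshold)); the function name is illustrative.

```python
from math import exp

def category_probs(threshold):
    """Return (P(Category 1), P(Category 2)) for a binary item's logit threshold."""
    p1 = 1.0 / (1.0 + exp(-threshold))
    return p1, 1.0 - p1

# Class 1 thresholds from the model results.
class1_thresholds = {
    "COUGH": 1.604, "ILL": 7.371, "TASTE": 15.0, "LIKED": -15.0, "DIZZY": 1.890,
}
for item, tau in class1_thresholds.items():
    p1, p2 = category_probs(tau)
    print(item, round(p1, 3), round(p2, 3))
```

Thresholds fixed at +15 or -15 correspond to probabilities that are 0 or 1 to three decimals, which is why those rows carry no usable standard error.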

Conditional independence • The latent class variable accounts for the covariance structure in your dataset • Conditional on C, any pair of manifest variables should be uncorrelated • Harder to achieve for a cross-sectional LCA • With a longitudinal LCA there tends to be a more ordered pattern of correlations based on proximity in time

Tech10 – response patterns

MODEL FIT INFORMATION FOR THE LATENT CLASS INDICATOR MODEL PART

RESPONSE PATTERNS

  No.  Pattern    No.  Pattern    No.  Pattern    No.  Pattern
    1  10000        2  00100        3  00010        4  11100
    5  11101        6  00001        7  10101        8  10010
    9  10100       10  00101       11  10001       12  00000
   13  00011       14  01101       15  10011       16  00110
   17  11000       18  10111       19  11011       20  01100
   21  10110       22  01000       23  01001       24  11111
   25  01010       26  11001       27  01011       28  11010
   29  00111       30  11110

Tech10 – Bivariate model fit • 5 manifest variables → number of pairs = 5*4/2 = 10

  Overall Bivariate Pearson Chi-Square           215.353
  Overall Bivariate Log-Likelihood Chi-Square    214.695

Compare with the 5% critical value of χ² (10 df) = 18.307

Tech10 – Bivariate model fit

Not bad:-

                                          Estimated Probabilities    Standardized
  Variable       Variable                      H1         H0     Residual (z-score)
  COUGH          ILL
    Category 1     Category 1               0.511      0.506          0.457
    Category 1     Category 2               0.026      0.031         -1.321
    Category 2     Category 1               0.393      0.398         -0.467
    Category 2     Category 2               0.070      0.065          0.925

  Bivariate Pearson Chi-Square                  2.726
  Bivariate Log-Likelihood Chi-Square           2.798

Tech10 – Bivariate model fit

Terrible:-

                                          Estimated Probabilities    Standardized
  Variable       Variable                      H1         H0     Residual (z-score)
  COUGH          ILL
    Category 1     Category 1               0.566      0.534          3.149
    Category 1     Category 2               0.338      0.370         -3.255
    Category 2     Category 1               0.024      0.056         -6.850
    Category 2     Category 2               0.072      0.040          7.977

  Bivariate Pearson Chi-Square                116.657
  Bivariate Log-Likelihood Chi-Square         117.162
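To see where a bivariate Pearson chi-square comes from, the sketch below applies the textbook formula n * Σ (H1 - H0)² / H0 to the four cells of each table above, with n = 2,507. It is an approximation for illustration only: the inputs are the probabilities as printed to three decimals, so it will not reproduce the Mplus values (2.726 and 116.657) exactly, and whether Mplus applies any further adjustment is not stated in the source.

```python
def bivariate_pearson(h1, h0, n):
    """Pearson chi-square for one pair of items.

    h1: observed (unrestricted) cell proportions
    h0: model-implied cell proportions, in the same cell order
    """
    return n * sum((o - e) ** 2 / e for o, e in zip(h1, h0))

n = 2507

# Cells ordered (1,1), (1,2), (2,1), (2,2), copied from the two tables above.
not_bad = bivariate_pearson(
    [0.511, 0.026, 0.393, 0.070], [0.506, 0.031, 0.398, 0.065], n
)
terrible = bivariate_pearson(
    [0.566, 0.338, 0.024, 0.072], [0.534, 0.370, 0.056, 0.040], n
)
print(round(not_bad, 2), round(terrible, 2))
```

The order of magnitude is what matters: a pair the model reproduces well contributes a few units, while a badly fitting pair contributes over a hundred.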

Conditional Independence violated Need more classes

Obtain the ‘optimal’ model Assess the following for models with increasing classes • aBIC • Entropy • BLRT (Bootstrap LRT) • Conditional Independence (Tech10) • Ease of interpretation • Consistency with previous work / theory

5-class model • aBIC values are still decreasing • Tech10 bivariate chi-square is still quite high: residual correlations between ill and both liked and dizzy • BLRT rejects the 4-class model in favour of 5 classes • Not enough df to fit a 6-class model, so we cannot test whether 5 classes are sufficient • It seems unlikely that they are, as the BLRT values are decreasing only slowly

Cross-sectional LCA Patterns of first response to cigarettes: Attempt 2

What to do? • We need more degrees of freedom • There were only 5 questions on response to smoking • Add something else: • How old were you when you first tried a cigarette? • Split into pre-teen / teen • 6 binary variables means 2^6 = 64 possible patterns (63 d.f.) to play with

6-class model results

CLASS COUNTS AND PROPORTIONS FOR THE LATENT CLASSES BASED ON THE ESTIMATED MODEL

Latent classes
  1     53.23894     2.1%
  2    541.96140    21.7%
  3    396.04196    15.9%
  4    454.89294    18.2%
  5    750.87470    30.1%
  6    295.99007    11.9%

CLASSIFICATION OF INDIVIDUALS BASED ON THEIR MOST LIKELY LATENT CLASS MEMBERSHIP

Latent classes
  1     34     1.4%
  2    540    21.7%
  3    403    16.2%
  4    447    17.9%
  5    840    33.7%
  6    229     9.2%

Examine entropy in more detail • Model-level entropy = 0.876 • Class level entropy:

         1       2       3       4       5       6
  1   0.953   0.000   0.000   0.000   0.026   0.020
  2   0.000   0.997   0.000   0.000   0.002   0.001
  3   0.000   0.000   0.958   0.000   0.017   0.025
  4   0.000   0.000   0.000   0.949   0.041   0.011
  5   0.025   0.005   0.000   0.036   0.851   0.083
  6   0.000   0.000   0.043   0.003   0.036   0.918

Pattern level entropy • Save out the model-based probabilities • Open in another stats package • Collapse over response patterns

Save out the model-based probabilities

savedata:
  file is "6-class-results.dat";
  save cprobabilities;

Varnames shown at end of output

SAVEDATA INFORMATION

  Order and format of variables

    COUGH      F10.3
    ILL        F10.3
    TASTE      F10.3
    LIKED      F10.3
    DIZZY      F10.3
    LESS_13    F10.3
    ALN        F10.3
    QLET       F10.3
    SEX        F10.3
    CPROB1     F10.3
    CPROB2     F10.3
    CPROB3     F10.3
    CPROB4     F10.3
    CPROB5     F10.3
    CPROB6     F10.3
    C          F10.3

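Open / process in Stata

Remove excess spaces from data file, then:

* read the space-delimited file saved by Mplus (columns arrive as v1, v2, ...)
insheet using 6-class-results.dat, delim(" ")

* rename v1-v16 using the variable order listed at the end of the Mplus output
local i = 1
local varnames "COUGH ILL TASTE LIKED DIZZY LESS_13 ALN QLET SEX CPROB1 CPROB2 CPROB3 CPROB4 CPROB5 CPROB6 C"
foreach x of local varnames {
    rename v`i' `x'
    local i=`i'+1
}

* one row per response pattern: mean class probabilities plus the pattern count
gen num = 1
collapse (mean) CPROB* C (count) num, by(COUGH ILL TASTE LIKED DIZZY LESS_13)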
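For readers without Stata, the same collapse step can be sketched in Python. This is not the author's procedure: it is an equivalent under the assumption that the saved file has the 16 columns in the order listed in the SAVEDATA information, so the six indicators come first and CPROB1 to CPROB6 sit at positions 10 to 15. The function name and the sample rows are hypothetical; splitting on whitespace also removes the need to strip excess spaces first.

```python
from collections import defaultdict

def collapse_by_pattern(rows, n_items=6, cprob_start=9, n_classes=6):
    """Mean class probabilities and row count for each response pattern."""
    sums = defaultdict(lambda: [0.0] * n_classes)
    counts = defaultdict(int)
    for row in rows:
        fields = row.split()  # split() swallows repeated spaces
        if not fields:
            continue
        pattern = "".join(str(int(float(v))) for v in fields[:n_items])
        cprobs = [float(v) for v in fields[cprob_start:cprob_start + n_classes]]
        for k, p in enumerate(cprobs):
            sums[pattern][k] += p
        counts[pattern] += 1
    return {
        pat: ([s / counts[pat] for s in sums[pat]], counts[pat]) for pat in counts
    }

# Hypothetical rows in the saved-file layout (values invented for illustration).
sample_rows = [
    "1.000 0.000 0.000 0.000 0.000 1.000 101.000 1.000 1.000 0.900 0.100 0.000 0.000 0.000 0.000 1.000",
    "1.000  0.000  0.000  0.000  0.000  1.000  102.000  1.000  2.000  0.700  0.300  0.000  0.000  0.000  0.000  1.000",
    "0.000 0.000 1.000 0.000 0.000 0.000 103.000 1.000 1.000 0.000 0.050 0.950 0.000 0.000 0.000 3.000",
]
result = collapse_by_pattern(sample_rows)
for pattern, (mean_cprobs, num) in sorted(result.items()):
    print(pattern, num, [round(p, 3) for p in mean_cprobs])
```

In practice every person with the same response pattern has the same class probabilities, so the mean simply recovers that shared value while `num` gives the pattern frequency.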