Online convex optimization Gradient descent without a gradient

Online convex optimization Gradient descent without a gradient. Abie Flaxman CMU Adam Tauman Kalai TTI Brendan McMahan CMU. Goal: find x f(x) ¸ max z 2 S f(z) – . High-dimensional. } . Standard convex optimization. Convex feasible set S ½ < d Concave function f : S ! <.

Online convex optimization Gradient descent without a gradient

E N D

Presentation Transcript

Online convex optimizationGradient descent without a gradient Abie Flaxman CMU Adam Tauman Kalai TTI Brendan McMahan CMU

Goal: find x f(x) ¸ maxz2Sf(z) – High-dimensional } Standard convex optimization Convex feasible set S ½<d Concave function f : S !<

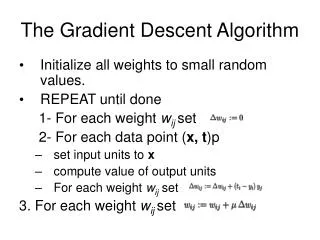

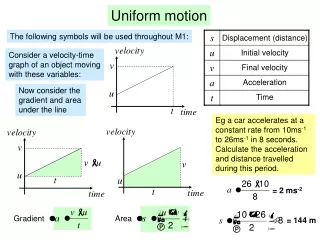

Steepest ascent • Move in the direction of steepest ascent • Compute f’(x) (rf(x) in higher dimensions) • Works for convex optimization • (and many other problems) x1 x2 x3 x4

Typical application • Toyota produces certain numbers of cars per month • Vector x 2<d (#Corollas, #Camrys, …) • Profit of Toyota is concave function of production vector • Maximize total (eq. average) profit PROBLEMS

Problem definition and results • Sequence of unknown (concave) functions • On tth month, choose production xt2 S ½<d • Receive profit ft(xt) • Maximize avg. profit • No assumption on distribution of ft 8 z, z’ 2 S || z – z’ || · D ||rf(z)|| · G

First try Zinkevich ’03: If we could only compute gradients… f4(x4) f3(x3) f2(x2) f4 PROFIT f1(x1) f3 f2 f1 x4 x3 x2 x1 #CAMRYS

Idea: 1-point gradient estimate With probability ½, estimate = f(x + )/ With probability ½, estimate = –f(x – )/ PROFIT E[ estimate ] ¼ f’(x) x x- x+ #CAMRYS

In expectation, gradient ascent on For online optimization, use Zinkevich’s analysis of online gradient ascent [Z03] Analysis PROFIT x- x+ #CAMRYS

d-dimensional online algorithm • Choose u 2<d, ||u||=1 • Choose xt+1 = xt + u ft(xt+u)/ • Repeat x3 x4 x1 x2 S

Hidden complication… Dealing with steps outside set is difficult! S

Hidden complication… Dealing with steps outside set is difficult! S’ …reshape into Isotropic position

Related work & conclusions • Related work • Online convex gradient descent [Zinkevich03] • One point gradient estimates [Spall97,Granichin89] • Same exact problem [Kleinberg04] (different solution) • Conclusions • Can estimate gradient of a function from single evaluation (using randomness) • Adaptive adversary ft(xt)=ft(x1,x2,…,xt) • Useful for situations with a sequence of different functions, no gradient info, one evaluation per function, and others