Methods For Nonlinear Least-Square Problems


This overview discusses methods for addressing nonlinear least squares problems, widely applicable in graphics, machine learning, and robotics. The document covers techniques like gradient descent, Newton's method, Gauss-Newton, and Levenberg-Marquardt. Emphasis is placed on the challenges of finding global versus local minima, assumptions for smoothness in cost functions, and stopping criteria for optimization processes. Practical implementations and the role of regularization terms to enhance solution uniqueness are also highlighted, providing a foundation for various applications like inverse kinematics and data fitting.


Methods For Nonlinear Least-Square Problems Jinxiang Chai

Applications • Inverse kinematics • Physically-based animation • Data-driven motion synthesis • Many other problems in graphics, vision, machine learning, robotics, etc.

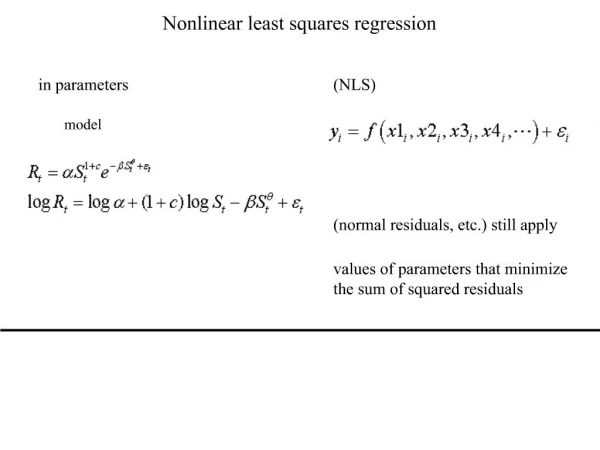

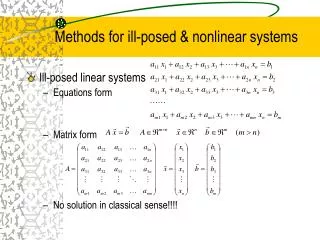

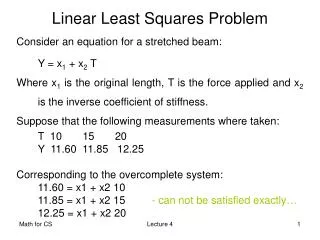

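Problem Definition • Most optimization problems can be formulated as a nonlinear least squares problem: find $x^* = \arg\min_x F(x)$ with $F(x) = \frac{1}{2}\sum_{i=1}^{m} f_i(x)^2$, where $f_i : \mathbb{R}^n \to \mathbb{R}$, $i = 1, \dots, m$, are given functions, and $m \ge n$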

Inverse Kinematics • Find the joint angles θ that minimize the distance between the character position and the user-specified position • Figure: a two-link arm with its base at (0,0), link lengths l1 and l2, joint angles θ1 and θ2, and target position C=(c1,c2)
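The two-link arm can be written as a least squares problem with two residuals. The following is a minimal sketch, not the presenter's code: it assumes a planar arm, unit link lengths by default, and that θ2 is measured relative to the first link. The function names are hypothetical.

```python
import math

def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """End-effector position of a planar two-link arm rooted at (0, 0).

    Assumption: theta2 is measured relative to the first link.
    """
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y

def ik_residuals(theta, target, l1=1.0, l2=1.0):
    """Residuals f_1, f_2: end-effector position minus the target C = (c1, c2)."""
    x, y = forward_kinematics(theta[0], theta[1], l1, l2)
    return [x - target[0], y - target[1]]

def ik_cost(theta, target, l1=1.0, l2=1.0):
    """Cost F(theta) = 1/2 * sum of squared residuals."""
    f = ik_residuals(theta, target, l1, l2)
    return 0.5 * sum(fi * fi for fi in f)
```

Here m = n = 2: two residuals (the x and y position errors) and two unknowns (the joint angles). The cost is zero exactly when the end effector reaches the target.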

Global Minimum vs. Local Minimum • Finding the global minimum of a nonlinear function is very hard • Finding a local minimum is much easier

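Assumptions • The cost function F is differentiable and so smooth that the following Taylor expansion is valid: $F(x+h) = F(x) + h^\top g + \frac{1}{2} h^\top H h + O(\|h\|^3)$, where $g = \nabla F(x)$ is the gradient and $H = \nabla^2 F(x)$ is the Hessian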

Gradient Descent • Objective function: $F(x)$ • Which direction is optimal? The direction of steepest descent, the negative gradient $-\nabla F(x)$

Gradient Descent A first-order optimization algorithm. To find a local minimum of a function using gradient descent, one takes steps proportional to the negative of the gradient of the function at the current point.

Gradient Descent • Initialize k=0, choose x0 • While k<kmax: $x_{k+1} = x_k - \alpha \nabla F(x_k)$, where $\alpha > 0$ is the step size; $k = k + 1$
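The loop above can be sketched in a few lines. This is an illustrative version only: it assumes a fixed step size (the slides may have used a line search), and the test function and parameter names are made up for the example.

```python
def gradient_descent(grad, x0, step=0.1, k_max=1000):
    """Take k_max steps proportional to the negative gradient."""
    x = list(x0)
    for k in range(k_max):
        g = grad(x)
        # Move against the gradient by a fixed fraction of its length.
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x

def grad_F(x):
    """Gradient of the example cost F(x) = 1/2 * ((x0 - 3)^2 + (x1 + 1)^2)."""
    return [x[0] - 3.0, x[1] + 1.0]

x_min = gradient_descent(grad_F, [0.0, 0.0])
```

For this convex example the iterates approach the minimizer (3, -1). With a step size that is too large the iteration can diverge, which is why practical implementations usually adapt the step.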

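Newton’s Method • Quadratic approximation: $F(x+h) \approx F(x) + F'(x)\,h + \frac{1}{2} F''(x)\,h^2$ • What’s the minimum solution of the quadratic approximation? Setting its derivative with respect to $h$ to zero gives $h = -F'(x)/F''(x)$, which is a minimum when $F''(x) > 0$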

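Newton’s Method • High dimensional case: $F(x+h) \approx F(x) + h^\top g + \frac{1}{2} h^\top H h$, with gradient $g = \nabla F(x)$ and Hessian $H = \nabla^2 F(x)$ • What’s the optimal direction? $h = -H^{-1} g$, assuming $H$ is positive definite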

Newton’s Method • Initialize k=0, choose x0 • While k<kmax: $x_{k+1} = x_k - H(x_k)^{-1} \nabla F(x_k)$, where $H$ is the Hessian of $F$; $k = k + 1$
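A minimal sketch of the Newton iteration for a function of two variables follows. It solves the 2x2 system with Cramer's rule rather than forming the inverse Hessian; the helper names and the quadratic test function are assumptions made for illustration.

```python
def solve_2x2(A, b):
    """Solve the 2x2 linear system A h = b by Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    if det == 0.0:
        raise ValueError("singular matrix")
    h0 = (b[0] * A[1][1] - A[0][1] * b[1]) / det
    h1 = (A[0][0] * b[1] - A[1][0] * b[0]) / det
    return [h0, h1]

def newton(grad, hess, x0, k_max=20):
    """Newton iteration: solve H h = -g, then step to x + h."""
    x = list(x0)
    for k in range(k_max):
        g = grad(x)
        H = hess(x)
        h = solve_2x2(H, [-g[0], -g[1]])
        x = [x[0] + h[0], x[1] + h[1]]
    return x

# Example cost: F(x) = x0^2 + x0*x1 + x1^2 - 3*x0, minimized at (2, -1).
def grad_F(x):
    return [2.0 * x[0] + x[1] - 3.0, x[0] + 2.0 * x[1]]

def hess_F(x):
    return [[2.0, 1.0], [1.0, 2.0]]

x_min = newton(grad_F, hess_F, [0.0, 0.0], k_max=5)
```

Because the example cost is exactly quadratic, the quadratic approximation is exact and the first step already lands on the minimizer; the remaining iterations leave it unchanged.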

Newton’s Method • Finding the inverse of the Hessian matrix is often expensive • Approximation methods are often used: the conjugate gradient method and quasi-Newton methods

Comparison • Newton’s method vs. Gradient descent
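The original comparison figure did not survive in this transcript. As a stand-in, the sketch below counts iterations for both methods on one small, poorly scaled quadratic. The test function, step size, and tolerance are assumptions chosen for illustration and are not taken from the slides.

```python
import math

def grad_F(x):
    """Gradient of F(x) = 1/2 * (x0^2 + 10 * x1^2); its Hessian is diag(1, 10)."""
    return [x[0], 10.0 * x[1]]

def gd_step(x, alpha=0.1):
    """One gradient descent step with a fixed step size."""
    g = grad_F(x)
    return [x[0] - alpha * g[0], x[1] - alpha * g[1]]

def newton_step(x):
    """One Newton step; the Hessian is diagonal, so H^-1 g is a division."""
    g = grad_F(x)
    return [x[0] - g[0] / 1.0, x[1] - g[1] / 10.0]

def count_iterations(step_fn, x0, tol=1e-8, k_max=10000):
    """Iterate until the gradient norm drops below tol; return the step count."""
    x = list(x0)
    k = 0
    while k < k_max and math.hypot(*grad_F(x)) >= tol:
        x = step_fn(x)
        k += 1
    return k

gd_iters = count_iterations(gd_step, [1.0, 1.0])
newton_iters = count_iterations(newton_step, [1.0, 1.0])
```

On this quadratic Newton's method needs a single step, while gradient descent needs many more because its fixed step is limited by the steepest direction. The trade-off is that each Newton step requires the Hessian and a linear solve.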

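Gauss-Newton Method • Often used to solve nonlinear least squares problems. Define $f(x) = [f_1(x), \dots, f_m(x)]^\top$. We have $F(x) = \frac{1}{2}\sum_{i=1}^{m} f_i(x)^2 = \frac{1}{2} f(x)^\top f(x)$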

Gauss-Newton Method • In general, we want to minimize a sum of squared function values, $F(x) = \frac{1}{2}\|f(x)\|^2$ • Unlike Newton’s method, second derivatives are not required. • Linearize the residual vector around the current point: $f(x+h) \approx f(x) + J(x)\,h$, where $J$ is the $m \times n$ Jacobian with entries $J_{ij} = \partial f_i / \partial x_j$ • Substituting gives a quadratic function of the step $h$: $F(x+h) \approx \frac{1}{2}\|f(x) + J(x)\,h\|^2$ • Its minimizer satisfies the normal equations $J^\top J\,h = -J^\top f$

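Gauss-Newton Method • Initialize k=0, choose x0 • While k<kmax: solve $J(x_k)^\top J(x_k)\,h = -J(x_k)^\top f(x_k)$ for $h$, where $J$ is the Jacobian of the residual vector $f$; $x_{k+1} = x_k + h$; $k = k + 1$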
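As an illustration of the loop, the sketch below fits the two-parameter model y = a * exp(b * t) to synthetic, noise-free data by repeatedly solving the normal equations (J^T J) h = -J^T f, where J is the Jacobian of the residuals f. The model, data, and starting point are assumptions chosen for the example; they do not come from the slides.

```python
import math

# Synthetic, noise-free data generated from a = 2, b = -1 (assumed example).
T = [0.0, 0.5, 1.0, 1.5, 2.0]
Y = [2.0 * math.exp(-1.0 * t) for t in T]

def residuals(p):
    """f_i(p) = model(t_i) - y_i for the model a * exp(b * t)."""
    a, b = p
    return [a * math.exp(b * t) - y for t, y in zip(T, Y)]

def jacobian(p):
    """Rows [df_i/da, df_i/db]; only first derivatives are needed."""
    a, b = p
    return [[math.exp(b * t), a * t * math.exp(b * t)] for t in T]

def gauss_newton(p0, k_max=20):
    p = list(p0)
    for k in range(k_max):
        f = residuals(p)
        J = jacobian(p)
        # Entries of the 2x2 matrix J^T J and the vector J^T f.
        a00 = sum(r[0] * r[0] for r in J)
        a01 = sum(r[0] * r[1] for r in J)
        a11 = sum(r[1] * r[1] for r in J)
        g0 = sum(r[0] * fi for r, fi in zip(J, f))
        g1 = sum(r[1] * fi for r, fi in zip(J, f))
        # Solve (J^T J) h = -J^T f by Cramer's rule.
        det = a00 * a11 - a01 * a01
        h0 = (-g0 * a11 + a01 * g1) / det
        h1 = (-a00 * g1 + a01 * g0) / det
        p = [p[0] + h0, p[1] + h1]
    return p

a_fit, b_fit = gauss_newton([1.5, -0.8])
```

Starting near the true parameters, the iterates recover a = 2 and b = -1. Note that the division by `det` fails when J^T J is singular, which is the weakness that Levenberg-Marquardt addresses.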

Gauss-Newton Method • In general, we want to minimize a sum of squared function values • Any problem? The quadratic function $\frac{1}{2}\|f + Jh\|^2$ might not have a unique solution: when $J^\top J$ is singular, many steps $h$ attain the same minimum • Remedy: add a regularization term!

Levenberg-Marquardt Method • In general, we want to minimize a sum of squared function values • Any problem? The Gauss-Newton quadratic function might not have a unique minimizer • Add a regularization term: minimize $\frac{1}{2}\|f(x) + J(x)\,h\|^2 + \frac{\lambda}{2}\|h\|^2$ with $\lambda > 0$, whose unique minimizer satisfies $(J^\top J + \lambda I)\,h = -J^\top f$

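Levenberg-Marquardt Method • Initialize k=0, choose x0 • While k<kmax: solve $(J^\top J + \lambda I)\,h = -J^\top f$ at $x_k$, where $J$ is the Jacobian of the residual vector $f$ and $\lambda > 0$ is the damping parameter; $x_{k+1} = x_k + h$; $k = k + 1$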
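The sketch below applies a damped step of this kind to inverse kinematics for a planar two-link arm. It is a simplified illustration, not the levmar implementation: the arm model (unit links, second angle measured relative to the first link), the rule of dividing or multiplying the damping parameter by 10, and all names are assumptions.

```python
import math

def residuals(theta, target, l1=1.0, l2=1.0):
    """End-effector position minus target for a planar two-link arm."""
    t1, t12 = theta[0], theta[0] + theta[1]
    return [l1 * math.cos(t1) + l2 * math.cos(t12) - target[0],
            l1 * math.sin(t1) + l2 * math.sin(t12) - target[1]]

def jacobian(theta, l1=1.0, l2=1.0):
    """Partial derivatives of the two residuals w.r.t. the two joint angles."""
    t1, t12 = theta[0], theta[0] + theta[1]
    return [[-l1 * math.sin(t1) - l2 * math.sin(t12), -l2 * math.sin(t12)],
            [l1 * math.cos(t1) + l2 * math.cos(t12), l2 * math.cos(t12)]]

def cost(theta, target):
    return 0.5 * sum(fi * fi for fi in residuals(theta, target))

def levenberg_marquardt(theta0, target, lam=1e-3, k_max=100, tol=1e-18):
    theta = list(theta0)
    for k in range(k_max):
        if cost(theta, target) < tol:
            break
        f = residuals(theta, target)
        J = jacobian(theta)
        # Damped normal equations: (J^T J + lam * I) h = -J^T f.
        a00 = J[0][0] ** 2 + J[1][0] ** 2 + lam
        a01 = J[0][0] * J[0][1] + J[1][0] * J[1][1]
        a11 = J[0][1] ** 2 + J[1][1] ** 2 + lam
        g0 = J[0][0] * f[0] + J[1][0] * f[1]
        g1 = J[0][1] * f[0] + J[1][1] * f[1]
        det = a00 * a11 - a01 * a01  # positive in exact arithmetic when lam > 0
        h0 = (-g0 * a11 + a01 * g1) / det
        h1 = (-a00 * g1 + a01 * g0) / det
        trial = [theta[0] + h0, theta[1] + h1]
        if cost(trial, target) < cost(theta, target):
            theta = trial   # accept the step and trust the model more
            lam *= 0.1
        else:
            lam *= 10.0     # reject the step and damp more strongly
    return theta

theta_fit = levenberg_marquardt([0.2, 1.2], (1.0, 1.0))
```

With a small damping parameter the step is close to a Gauss-Newton step; with a large one it becomes a short step along the negative gradient. The added term keeps the linear system solvable even when the arm is fully stretched and J^T J is singular.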

Stopping Criteria • Criterion 1: the number of iterations reaches the limit specified by the user: $k \ge k_{max}$ • Criterion 2: the current function value is smaller than a user-specified threshold: $F(x_k) < \sigma_{user}$ • Criterion 3: the change in function value is smaller than a user-specified threshold: $|F(x_k) - F(x_{k-1})| < \varepsilon_{user}$
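The three criteria can be combined into one check that an optimizer calls once per iteration. This is a sketch with hypothetical names and default thresholds; suitable values depend on the scale of the cost function.

```python
def should_stop(k, F_curr, F_prev, k_max=100, sigma_user=1e-10, eps_user=1e-12):
    """Return the name of the first satisfied criterion, or None to continue.

    F_prev is the cost at the previous iterate, or None on the first iteration.
    """
    if k >= k_max:
        return "max_iterations"   # Criterion 1: iteration budget used up
    if F_curr < sigma_user:
        return "small_cost"       # Criterion 2: cost is already small enough
    if F_prev is not None and abs(F_curr - F_prev) < eps_user:
        return "small_change"     # Criterion 3: cost has stopped improving
    return None
```

Returning the reason rather than a plain boolean makes it easy to tell a converged run from one that simply ran out of iterations.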

Levmar Library • Implementation of the Levenberg-Marquardt algorithm • http://www.ics.forth.gr/~lourakis/levmar/

Constrained Nonlinear Optimization • Finding the minimum value while satisfying some constraints