Image Compression With Haar Discrete Wavelet Transform

Image Compression With Haar Discrete Wavelet Transform. Cory Cox ME 535 Computational Techniques in Mechanical Engineering.

Image Compression With Haar Discrete Wavelet Transform

E N D

Presentation Transcript

Image Compression With Haar Discrete Wavelet Transform Cory Cox ME 535 Computational Techniques in Mechanical Engineering

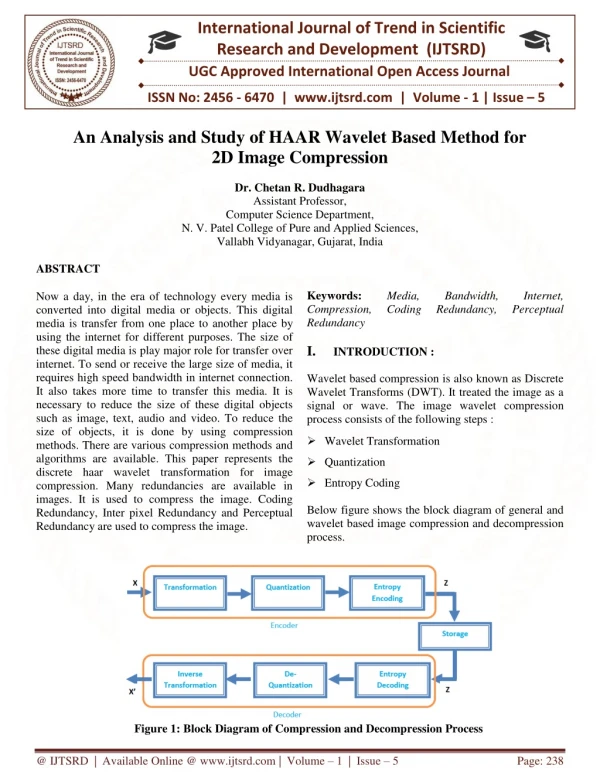

An example of the 2D discrete wavelet transform that is used in JPEG2000. Source: http://en.wikipedia.org/wiki/File:Jpeg2000_2-level_wavelet_transform-lichtenstein.png

Importance Of Image Compression “Faster sites create happy users [...] Like us, our users place a lot of value in speed – that’s why we’ve decided to take site speed into account in our search rankings.” Google Spokesperson, 2010

Haar Discrete Wavelet Transform Method • Let’s assume we’re working with a grayscale image • Integer pixel values from 0 (black) ------- > 255 (white)

Haar Discrete Wavelet Transform Method Let’s look at the first row of the image matrix [88 88 89 90 92 94 96 97] How do we save this information accurately while using less storage space?

Haar Discrete Wavelet Transform Method [88 88 89 90 92 94 96 97] Split the row into pairs [88 88] [89 90] [92 94] [96 97] Take the averages [88 89.5 93 96.5] “Approximation Coefficients”

Approximation Coefficients [88 88 89 90 92 94 96 97] [88 89.5 93 96.5] “Approximation Coefficients” are a good estimate of the original pixel values, but it’s impossible to reconstruct the original data completely from the approximation coefficients Let’s choose to save four more values.

Detail Coefficients Let’s save four more values such that we can completely reconstruct the original data [88 88 89 90 92 94 96 97] [88 89.5 93 96.5] [0 -0.5 -1 -0.5] Approximation Coefficients Detail Coefficients “Detail Coefficients” are a measure of the distance from an approximation coefficient to the original points that are being approximated by an average value Example: [89 90] --- > Approximation Coefficient = 89.5 --- > Detail Coefficient = -0.5

Haar Discrete Wavelet Transform Method [88 88 89 90 92 94 96 97] [88 89.5 93 96.5 0 -0.5 -1 -0.5] Why would we want to save the approximation and detail coefficients rather than the original 8 values? Both vectors contain the same number of entries, so how does this save space?

Haar Discrete Wavelet Transform Method Sparse matrices save space! [88 88 89 90 92 94 96 97] [88 89.5 93 96.5 0 -0.5 -1 -0.5] This isn’t sparse enough so let’s do another row-wise iteration of the Haar discrete wavelet transform on the first four entries (the approximation coefficients)

Haar Discrete Wavelet Transform Method [88 88 89 90 92 94 96 97] [88 89.5 93 96.5 0 -0.5 -1 -0.5] [88.75 94.75 -0.75 -1.75 0 -0.5 -1 -0.5] [91.75 -3 -0.75 -1.75 0 -0.5 -1 -0.5] 1st Iteration 2nd Iteration 3rd Iteration

Haar Discrete Wavelet Transform Method [88 88 89 90 92 94 96 97] [91.75 -3 -0.75 -1.75 0 -0.5 -1 -0.5] All of the energy is concentrated in the leftmost entry The other entries are zero or near zero Now let’s do this for an entire matrix! 3rd Iteration

Original matrix After one iteration of row sums and differences

Original matrix Grayscale: 255 (white) -----> 0 (black) Lower Energy: -close to zero -black Higher Energy: -Far from zero -white After one iteration of row sums and differences

Original matrix After three iterations of row and column sums and differences

Original matrix Grayscale: 255 (white) -----> 0 (black) Lower Energy: -close to zero -black Higher Energy: -Far from zero -white After one iteration of row sums and differences

Haar Discrete Wavelet Transform Method How can we make this even more sparse? What if we define a limiting factor below which all pixel values are approximated as zero?

Haar Discrete Wavelet Transform Method Assume a limiting factor of 0.25 There are now 37 zero entries as opposed to the previous matrix which had only 16 zero entries

Compression Ratio • The Good: • Decrease the file size • The Bad (And the Ugly): • The downside is that when we reconstruct that image we’ll have lost some details and created some error in the pixel values

Procedure • Start with grayscale image of size 256 x 256 • Scan a row of the image at a time, finding the sums/differences between neighboring entries in the image matrix • Split the image matrix into a left side and a right side, storing the sums or approximate coefficients in one half and the differences or detail coefficients in the other half. • Scan the image matrix by columns, finding the sums/differences between neighboring entries • Split the matrix into a top half and bottom half, storing the sums in one half and the differences in the other half. • Repeat steps 2-7 for the smaller matrix where the sums of the column scan and the row scan overlap. In our case we’ll repeat 4 times to obtain an image matrix where all of the row and column sums are concentrated in the upper left hand corner in a 16 x 16 sub-matrix.

Procedure Step 1: Start with grayscale image of size 256 x 256

Procedure Step 2: Scan a row of the image at a time, finding the sums/differences between neighboring entries in the image matrix [88 88 89 90 92 94 96 97] [88 89.5 93 96.5 0 -0.5 -1 -0.5] Except for our image each row is 256 entries!

Procedure Step 3: Split the image matrix into a left side and a right side, storing the sums or approximate coefficients in one half and the differences or detail coefficients in the other half.

Procedure Step 4: Scan the image matrix by columns, finding the sums/differences between neighboring entries This is the same as scanning by rows. Our image is 256 x 256 so we also have 256 columns to scan.

Procedure Step 5: Split the matrix into a top half and bottom half, storing the sums in one half and the differences in the other half.

Procedure Step 6: Repeat steps 2-7 for the smaller matrix where the sums of the column scan and the row scan overlap. In our case we’ll repeat 4 times to obtain an image matrix where all of the row and column sums are concentrated in the upper left hand corner in a 16 x 16 sub-matrix. Original Image 1st Iteration 2nd Iteration 4th Iteration

Saving Compressed Image with Masks The compressed image is quantized and pixel values are turned into binary 4 bits per pixel ---- > 16 shades of gray 8 bits per pixel ---- > 255 shades of gray 16 bits per pixel --- > 65, 535 shades of gray

Saving Compressed Image with Masks To optimize the image compression process we can experiment with using different numbers of bits per pixel for different areas of the image Use most bits per pixel here… Use fewer bits per pixel here… Use fewer bits per pixel here… Use fewest bits per pixel here…

Saving Compressed Image with Masks Through testing various masks it seems that the best quality and compression results from the following pixel coding: 8 bits/pixel : 16 x 16 6 bits/pixel : 32 x 32 4 bits/pixel : 64 x 64 2 bits/pixel : 128 x 128 0 bits/pixel : 256 x 256

Results : Mean Square Error Compares the accuracy of a compressed and reconstructed image to the original image in terms of individual pixel values.

Results: Peak Signal to Noise Ratio Whereas the mean square error is indicative of the cumulative error, the peak signal to noise ratio describes a sort of maximum error The signal is the original image and the noise is the error that occurs as a result of the compression

Conclusion The results from this image compression are on the same order of magnitude that some other studies show The reconstructed image shows some signs of pixilation and error