Revolutionizing Particle Physics through eScience Computing: A Showcase of Innovation


Explore the cutting-edge intersection of eScience and Particle Physics, showcasing how advanced computing techniques are transforming research in this field. Discover the impact, challenges, and solutions of processing massive datasets in particle physics using global Grid resources and innovative technologies developed at Manchester. From tracking particle momentum to managing vast amounts of LHC data, witness how eScience is pushing the boundaries of scientific exploration.

Presentation Transcript

eScience and Particle Physics • Roger Barlow • eScience showcase, May 1st 2007

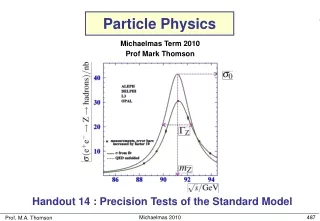

Particle physics yesterday • Particle physics has pushed the computing envelope ever since the early days, though in the early days the envelope was smaller

Particle physics today • Today we have millions of extremely complicated events to interpret

Particle physics tomorrow • Tomorrow the LHC will give us trillions of much more complicated events to interpret

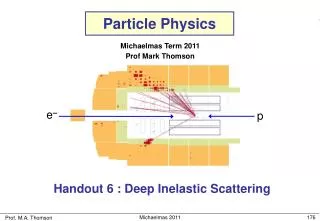

What is our computing? • Basic tasks are fairly simple: find tracks from a set of points, find particle momenta from track curvature, etc. • Data is pretty well constrained and homogeneous. • But we need to do this on a big scale, for hundreds of millions of events.
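The "momentum from track curvature" step can be sketched in a few lines. This is a minimal illustration, not the experiments' reconstruction code: it assumes a uniform magnetic field, a track that is a perfect circle in the transverse plane, and three already-associated hits. The function names and the example numbers are hypothetical; the relation p_T [GeV/c] ≈ 0.2998 · |q| · B [T] · R [m] is the standard one.

```python
import math

def circle_radius(p1, p2, p3):
    """Radius (same units as the inputs) of the circle through three 2D points."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    a = math.dist(p1, p2)
    b = math.dist(p2, p3)
    c = math.dist(p3, p1)
    # Twice the triangle area, from the 2D cross product.
    twice_area = abs((x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1))
    if twice_area == 0:
        raise ValueError("points are collinear: infinite radius, momentum undefined")
    # Circumradius R = abc / (4 * area).
    return a * b * c / (2 * twice_area)

def transverse_momentum(radius_m, field_tesla, charge=1):
    """Transverse momentum in GeV/c for a track of radius radius_m in field_tesla."""
    return 0.2998 * abs(charge) * field_tesla * radius_m

# Hypothetical hits (in metres) lying on a circle of radius 2 m, in a 1.5 T field.
hits = [(2.0, 0.0), (0.0, 2.0), (-2.0, 0.0)]
radius = circle_radius(*hits)
print(f"R = {radius:.3f} m, pT = {transverse_momentum(radius, 1.5):.3f} GeV/c")
```

Real track finding must also decide which points belong to which track and fit many noisy hits, which is where the scale of the problem comes from.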

Big is beautiful • If all the data from the LHC in a year were written to CDs, and the CDs put in a pile, that pile would be 20 km high. • Commodity computing saved us in the 1990s: PCs got cheap. But that’s not enough.
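As a rough cross-check of the CD pile, the arithmetic can be run backwards to see what yearly data volume a 20 km stack implies. The 20 km figure is from the slide; the disc thickness (1.2 mm) and capacity (700 MB, taken as 700 × 10^6 bytes) are assumptions about a standard CD, and the function name is illustrative.

```python
CD_THICKNESS_M = 1.2e-3      # assumed thickness of one disc, no cases
CD_CAPACITY_BYTES = 700e6    # assumed capacity of one disc

def implied_data_petabytes(pile_height_m):
    """Data volume (in PB, 1 PB = 1e15 bytes) held by a CD pile of the given height."""
    n_discs = pile_height_m / CD_THICKNESS_M
    return n_discs * CD_CAPACITY_BYTES / 1e15

print(f"{implied_data_petabytes(20_000):.1f} PB per year")
```

Under these assumptions the pile corresponds to roughly 17 million discs, or on the order of ten petabytes a year.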

The Grid • Data analysis can only be achieved as a world-wide exercise using all available computing resources. • This needs a Grid: the UK GridPP project and EGEE across Europe.

What is a Grid?
• Answer 1 (for journalists): The Grid is a virtual supercomputer.
• Answer 2 (for politicians): Computing coming out of a wall socket, just like electric power.
• Answer 3 (for the rest of us): The Web is computers talking to computers, saying ‘Give me a certain document’. The Grid is computers talking to computers. They can say anything.

Issues
• Many computers are available in computer centres.
• Many people want to use them.
• How do the people know the computers are there, and what facilities they provide?
• How do the computer centres know which users are allowed to do what, and that users are who they say they are?
• Traditional solution: every user needs an account and password at every centre.
• This does not scale to large numbers of computers and users.

Solution – Grid certificates
• User details are digitally signed, using the RSA algorithm, by a trusted Certificate Authority
• Proves that whoever presents the certificate is who they say they are (modern analogue of the royal seal)
• User needs only one Grid certificate: access everywhere
• Led (in academia) by UK/European particle physicists
[Diagram: certificate with subject /C=UK /O=eScience /OU=Manchester /L=HEP /CN=roger john barlow, labelled UK CA]
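The certificate subject shown on the slide is a slash-separated distinguished name. A minimal sketch of splitting such a name into its fields follows; `parse_dn` is a hypothetical helper written for illustration, not part of any Grid middleware, and it does not verify the certificate's signature. It is deliberately naive: a field value that itself contains "/" would be split incorrectly.

```python
def parse_dn(dn: str) -> list[tuple[str, str]]:
    """Split a slash-separated distinguished name into ordered (key, value) pairs."""
    fields = []
    for part in dn.split("/"):
        part = part.strip()
        if not part:
            continue  # skip the empty piece before the leading slash
        key, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed DN component: {part!r}")
        fields.append((key.strip(), value.strip()))
    return fields

subject = "/C=UK /O=eScience /OU=Manchester /L=HEP /CN=roger john barlow"
for key, value in parse_dn(subject):
    print(f"{key:>2} = {value}")
```

The fields run from the most general (country, organisation) down to the individual's common name, which is what lets one certificate identify a user at every centre.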

Solution - VOMS
• Users join ‘Virtual Organisations’ (VOs)
• Centres negotiate with VOs for usage rights
• UK VOMS system run from Manchester (Physics for GridPP, Computing for NGS)
• 500 users, 15 VOs, and growing
[Diagram labels: User, VO, VOMS system, Centre]

Solution: pool accounts
• Developed and implemented by Manchester physics
• Neither user nor centre wants individual accounts
• A single account (‘griduser’) would lead to jobs deleting each other’s files
• Create generic accounts (ATLAS001, ATLAS002…)
• User is assigned a generic account and can run jobs
• Account is linked to certificate name, so there is an audit trail for antisocial behaviour
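The pool-account idea can be sketched as a small mapping from certificate names to generic accounts. This is an illustration of the concept only, not the Manchester implementation: the class name, method names, and in-memory storage are all assumptions, and a real system would persist the leases and recycle accounts that are no longer in use.

```python
from datetime import datetime, timezone

class PoolAccountMapper:
    """Hands out generic accounts (e.g. ATLAS001) keyed by certificate name."""

    def __init__(self, prefix, size):
        self._free = [f"{prefix}{i:03d}" for i in range(1, size + 1)]
        self._leases = {}     # certificate name -> pool account
        self.audit_log = []   # (UTC timestamp, certificate name, account)

    def account_for(self, cert_name):
        """Return the user's existing pool account, or lease the next free one."""
        if cert_name in self._leases:
            return self._leases[cert_name]
        if not self._free:
            raise RuntimeError("no free pool accounts")
        account = self._free.pop(0)
        self._leases[cert_name] = account
        self.audit_log.append((datetime.now(timezone.utc), cert_name, account))
        return account

    def owner_of(self, account):
        """Audit trail lookup: which certificate name holds this account?"""
        for cert_name, leased in self._leases.items():
            if leased == account:
                return cert_name
        return None

mapper = PoolAccountMapper("ATLAS", size=3)
dn = "/C=UK/O=eScience/OU=Manchester/L=HEP/CN=roger john barlow"
print(mapper.account_for(dn))      # first free account in the pool
print(mapper.owner_of("ATLAS001"))
```

Each user's jobs run under a separate account, so they cannot delete each other's files, while the centre never has to create a named account per person.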

Solution - GridSite
• GridSite developed at Manchester to manage websites using grid credentials
• Now used to ‘gridify’ Web Services used on the grid
• GridPP website is maintained at Manchester using GridSite

Facility – Tier2
• 1000 dual 2.8 GHz Xeon nodes
• ½ Petabyte of storage
• 10 Gbit/s network
• Bought as faculty investment in eScience
• Reynolds House machine room
• Management through particle physics

Results • Working and delivering CPU cycles • High uptime and reliability

Who’s using it?
• Manchester LHC experiments
• Non-Manchester LHC experiments
• Manchester non-LHC experiments
• Non-Manchester non-LHC experiments
• Non-particle-physics users

Biomed Drug design for combating bird flu & other diseases

ATLAS trigger tests
• Large-scale software tests cannot be done at CERN, as only ~10 computers are available
• Tests run at Manchester (400 CPUs) instead
ATLAS monitoring and calibration
• Detectors need frequent calibration to convert raw signals into useful co-ordinates correctly
• Computers at CERN are dedicated to actual DAQ
• Solution: ship raw data to Manchester, process it, and ship calibration data back
• Successful large-scale tests show this is possible
[Diagram labels: ATLAS Detector, Trigger, 2000 CPUs, CAL DATA, DAQ, Manchester, Data storage, Monitor]

BABAR
• Data selection: copy files from SLAC to Manchester (~5 TB)
• Select ~200 different streams using different criteria
• Ship files of separate streams back to SLAC
• SLAC computers are overloaded with other processing tasks
• Anticipate direct financial return if successful (common fund rebate to STFC)
• Experiment running at the Stanford Linear Accelerator, studying the difference between matter and antimatter
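The stream-selection step above can be pictured as running every event past a table of named selection criteria and writing it to each stream whose criterion it passes. The sketch below is a toy version under stated assumptions: the event fields (`n_tracks`, `n_leptons`), the two stream names, and the function `split_into_streams` are invented for illustration and are not BABAR's actual selections or software.

```python
from collections import defaultdict

def split_into_streams(events, criteria):
    """Route each event into every stream whose criterion accepts it."""
    streams = defaultdict(list)
    for event in events:
        for name, accept in criteria.items():
            if accept(event):
                streams[name].append(event)
    return dict(streams)

# Hypothetical criteria; the real selection uses ~200 of these.
criteria = {
    "high_multiplicity": lambda e: e["n_tracks"] >= 5,
    "has_lepton": lambda e: e["n_leptons"] > 0,
}

# Hypothetical event summaries standing in for the files copied from SLAC.
events = [
    {"id": 1, "n_tracks": 6, "n_leptons": 0},
    {"id": 2, "n_tracks": 2, "n_leptons": 1},
    {"id": 3, "n_tracks": 7, "n_leptons": 2},
]

streams = split_into_streams(events, criteria)
for name, selected in streams.items():
    print(name, [e["id"] for e in selected])
```

An event may land in more than one stream, which is why the separate stream files are shipped back rather than a single filtered copy.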

Financial benefits (future) • Grants applied for • Expect approval, though not at the full amount requested

Summary
• Particle Physics uses eScience
• eScience benefits from Particle Physics
• Manchester is leading in ideas and computer power thanks to bright people and strong support
• Benefits in international recognition and research income
• Long may it continue!