Goal-Directed Feature and Memory Learning: Reinforcement Learning Models


This talk examines goal-directed feature learning and memory in reinforcement learning models. Cornelius Weber, with collaborators Sohrab Saeb and Jochen Triesch, argues for using unsupervised learning to extract the features that are relevant for action selection. Related topics include reward-modulated Hebbian learning, input selection, and reinforcement learning in the basal ganglia. Gradient descent on the value estimation error, together with reward-modulated (δ-modulated) activity, shapes both layers of the model, and values and action choices converge as the agent repeatedly runs to the goal. The talk presents the network definition and training rule, error minimization, and the development of memory weights into a forward model. Two-layer SARSA RL, winner-take-all mechanisms, and non-negative coding are highlighted as key elements of the learning process. It also discusses the link between unsupervised and reinforcement learning and notes that demonstrations with more realistic data are still needed.



Presentation Transcript

Goal-Directed Feature and Memory Learning
Cornelius Weber
Frankfurt Institute for Advanced Studies (FIAS)
Sheffield, 3rd November 2009
Collaborators: Sohrab Saeb and Jochen Triesch

Unsupervised learning in cortex builds the state space; reinforcement learning in basal ganglia drives the actor (Doya, 1999).

Background:
- gradient descent methods generalize RL to several layers: Sutton & Barto, RL book (1998); Tesauro (1992; 1995)
- reward-modulated Hebb: Triesch, Neur Comp 19, 885-909 (2007); Roelfsema & van Ooyen, Neur Comp 17, 2176-214 (2005); Franz & Triesch, ICDL (2007)
- reward-modulated activity leads to input selection: Nakahara, Neur Comp 14, 819-44 (2002)
- reward-modulated STDP: Izhikevich, Cereb Cortex 17, 2443-52 (2007); Florian, Neur Comp 19/6, 1468-502 (2007); Farries & Fairhall, J Neurophysiol 98, 3648-65 (2007); ...
- RL models learn a partitioning of the input space: e.g. McCallum, PhD Thesis, Rochester, NY, USA (1996)

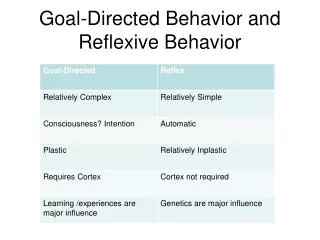

Reinforcement learning: which action to take? Go up? Go right? Go down? Go left?

Reinforcement learning: the input s is mapped through the weights to an action a.

Reinforcement learning: q(s,a) is the value of a state-action pair (coded in the weights from input s to action a).
Minimizing the value estimation error:
$\Delta q(s,a) \propto 0.9\, q(s',a') - q(s,a)$
$\Delta q(s,a) \propto 1 - q(s,a)$ (when the goal is reached)
Repeated running to the goal: in state s, the agent performs the best action a (with some randomness), yielding s' and a'. The moving target value is fixed at the goal, so values and action choices converge.
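A minimal tabular sketch of the rule above, assuming a hypothetical 1-D corridor with two actions (left, right), a learning rate `eta`, and epsilon-greedy action choice; none of these details come from the talk. Ordinary steps move q(s,a) toward 0.9 q(s',a'), and the step into the goal moves it toward 1.

```python
import random


def sarsa_corridor(n_states=6, episodes=2000, eta=0.2, gamma=0.9,
                   epsilon=0.1, seed=0):
    """Tabular SARSA on a hypothetical 1-D corridor whose last state is the goal.

    Actions: 0 = left, 1 = right. Updates follow the value-error rule:
      ordinary step: q(s,a) += eta * (gamma * q(s',a') - q(s,a))
      goal reached:  q(s,a) += eta * (1 - q(s,a))
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1

    def choose(s):
        # Best action, with some randomness (epsilon-greedy; ties broken at random).
        if rng.random() < epsilon or q[s][0] == q[s][1]:
            return rng.randrange(2)
        return 0 if q[s][0] > q[s][1] else 1

    for _ in range(episodes):
        s = rng.randrange(goal)          # start anywhere except the goal
        a = choose(s)
        while True:
            s_next = max(0, s - 1) if a == 0 else s + 1
            if s_next == goal:
                q[s][a] += eta * (1.0 - q[s][a])   # target fixed at the goal
                break
            a_next = choose(s_next)
            q[s][a] += eta * (gamma * q[s_next][a_next] - q[s][a])
            s, a = s_next, a_next
    return q
```

After `q = sarsa_corridor()`, q(s, right) should lie roughly at 0.9 raised to the number of remaining steps, somewhat lower because SARSA also accounts for the occasional exploratory move.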

Reinforcement learning with an actor on the input (state space): with simple input the actor can decide ("go right!"); with complex input it cannot ("go right? go left? can't handle this!").

Complex input scenario: bars are controlled by the actions 'up', 'down', 'left' and 'right'; reward is given if the horizontal bar is at a specific position. (Figure: sensory input, action, reward.)
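A hypothetical sketch of such a task, to make the scenario concrete. The assignment of 'up'/'down' to the horizontal bar and 'left'/'right' to a distracting vertical bar, the 12×12 size, and the class name `BarsTask` are all assumptions, not the talk's exact setup. The point it illustrates is that only part of the input matters for reward.

```python
import numpy as np


class BarsTask:
    """Hypothetical bars scenario (all details here are assumptions).

    A size x size image shows one horizontal and one vertical bar.
    'up'/'down' move the horizontal bar, 'left'/'right' move the vertical bar;
    reward 1 is given when the horizontal bar is at target_row.
    """
    ACTIONS = ("up", "down", "left", "right")

    def __init__(self, size=12, target_row=0, seed=0):
        self.size, self.target_row = size, target_row
        self.rng = np.random.default_rng(seed)
        self.reset()

    def reset(self):
        self.row = int(self.rng.integers(self.size))
        self.col = int(self.rng.integers(self.size))
        return self.image()

    def image(self):
        img = np.zeros((self.size, self.size))
        img[self.row, :] = 1.0   # horizontal bar (relevant for reward)
        img[:, self.col] = 1.0   # vertical bar (irrelevant distractor)
        return img.ravel()       # flattened sensory input I

    def step(self, action):
        if action == "up":
            self.row = max(0, self.row - 1)
        elif action == "down":
            self.row = min(self.size - 1, self.row + 1)
        elif action == "left":
            self.col = max(0, self.col - 1)
        elif action == "right":
            self.col = min(self.size - 1, self.col + 1)
        reward = 1.0 if self.row == self.target_row else 0.0
        return self.image(), reward, reward > 0
```

An agent that reads the raw 144-pixel image sees a different input for every bar combination, which is why a feature layer that isolates the horizontal bar's position helps.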

Another layer (or layers) is needed to pre-process complex data:
- a: action; Q: weight matrix for action selection (encodes q)
- s: state (position of the relevant bar); W: weight matrix of the feature detectors
- I: input
Network definition:
$s = \mathrm{softmax}(W I)$
$P(a = 1) = \mathrm{softmax}(Q s)$
$q = a^{\top} Q s$
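A minimal numpy sketch of the network definition above. The vector shapes and the function name `forward` are assumptions for illustration; the action is sampled from the softmax over Q s and represented as a one-hot vector, so that q = aᵀ Q s picks out the value of the chosen action.

```python
import numpy as np


def softmax(x):
    z = np.exp(x - np.max(x))   # shift by the max for numerical stability
    return z / z.sum()


def forward(W, Q, I, rng):
    """Forward pass of the two-layer network.

    Assumed shapes: I (n_in,), W (n_state, n_in), Q (n_action, n_state).
    Returns the state s, a one-hot action a, and its value q = a^T Q s.
    """
    s = softmax(W @ I)                  # feature detection: s = softmax(W I)
    p = softmax(Q @ s)                  # action selection: P(a = 1) = softmax(Q s)
    k = rng.choice(len(p), p=p)         # sample one action index
    a = np.zeros(len(p))
    a[k] = 1.0                          # one-hot action vector
    q = a @ Q @ s                       # value of the chosen state-action pair
    return s, a, q
```

For example, with a 12×12 input: `rng = np.random.default_rng(0)`, `W = rng.random((4, 144))`, `Q = rng.random((4, 4))`, `I = rng.random(144)`, then `s, a, q = forward(W, Q, I, rng)`.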

Network training (minimize the error with respect to the current target):
$E = \big(0.9\, q(s',a') - q(s,a)\big)^2 = \delta^2$
$\Delta Q \propto -\partial E / \partial Q \propto \delta\, a\, s^{\top}$ (reinforcement learning)
$\Delta W \propto -\partial E / \partial W \propto \delta\, (Q^{\top} a \odot s)\, I^{\top} + \epsilon$ (δ-modulated unsupervised learning)
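A sketch of the two updates as gradient descent on δ², with the target held fixed. Here the ε term is computed explicitly from the exact softmax derivative; treating it as the slide's ε is our reading, not confirmed by the source. The function names are hypothetical. A finite-difference check against E confirms the gradients.

```python
import numpy as np


def softmax(x):
    z = np.exp(x - np.max(x))
    return z / z.sum()


def value(W, Q, I, a):
    """q(s, a) = a^T Q s with s = softmax(W I), for a one-hot action a."""
    return a @ Q @ softmax(W @ I)


def descent_directions(W, Q, I, a, target):
    """Return delta and -dE/dQ, -dE/dW (each divided by 2) for E = delta^2.

    The target (0.9 q(s', a'), or 1 at the goal) is held fixed, so only
    q(s, a) is differentiated.
    """
    s = softmax(W @ I)
    delta = target - a @ Q @ s
    dQ = delta * np.outer(a, s)               # delta * a * s^T
    g = Q.T @ a                               # action-specific feedback Q^T a
    # Exact softmax gradient: first term delta * (Q^T a * s) I^T; the second,
    # -delta * q * s I^T, is our (assumed) reading of the slide's epsilon.
    dW = delta * np.outer(g * s - (g @ s) * s, I)
    return delta, dQ, dW
```

Scaling `dQ` and `dW` by a learning rate and adding them to Q and W gives one gradient-descent step; the factor 2 is absorbed into that rate.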

Details: network training minimizes the error with respect to the target $V^{\pi}$.
Identities used:
Note: non-negativity constraint on the weights.

SARSA with a winner-take-all (WTA) input layer (the v on this slide should read q).
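A sketch of how the WTA approximation turns the W update into a local rule, assuming a one-hot state on the most active feature detector and keeping only the first (δ Q s I) term of the gradient. Clipping W at zero is one simple way to impose the non-negativity constraint and is our assumption; the function names are hypothetical.

```python
import numpy as np


def wta_state(W, I):
    """Winner-take-all feature layer: one-hot vector on the most active detector."""
    s = np.zeros(W.shape[0])
    s[np.argmax(W @ I)] = 1.0
    return s


def wta_sarsa_update(W, Q, I, s, a, target, eta=0.1):
    """One SARSA step with a WTA input layer; modifies W and Q in place.

    With one-hot s and a, q = a^T Q s reduces to Q[k, i], and only the winning
    feature unit i and the chosen action k are updated:
      Q[k, i] += eta * delta
      W[i, :] += eta * delta * Q[k, i] * I    (local rule with delta-feedback)
    """
    i, k = int(np.argmax(s)), int(np.argmax(a))
    delta = target - Q[k, i]
    feedback = Q[k, i]                # feedback weight taken before Q changes
    Q[k, i] += eta * delta
    W[i] += eta * delta * feedback * I
    np.maximum(W, 0.0, out=W)         # assumed: clip to keep feature weights non-negative
    return delta
```

Each weight change depends only on the winning unit's input, its feedback weight from the chosen action, and the global signal δ, which is what makes the rule local.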

Learning the 'short bars' data (figure panels: data, feature weights, RL action weights, action, reward).

Short bars in a 12×12 input: average number of steps to goal: 11.

Learning the 'long bars' data (figure panels: data input, feature weights, RL action weights, reward; 2 actions not shown).

Comparison of three settings (figure panels): WTA with non-negative weights; SoftMax with no weight constraints; SoftMax with non-negative weights.

If features sometimes fail to be detected (here the grey bars are invisible to the network), it would be good to have a memory or a forward model.

Network with memory (figure labels: action a, action weights Q, previous action a(t-1), state s, previous state s(t-1), feature weights W, input I). Network training by gradient descent as before; the softmax function is used, with no weight constraint.

Discussion:
- two-layer SARSA RL performs gradient descent on the value estimation error
- approximation with winner-take-all leads to a local rule with δ-feedback
- learns only action-relevant features
- non-negative coding aids feature extraction
- memory weights develop into a forward model
- link between unsupervised and reinforcement learning
- demonstration with more realistic data still needed

Thank you!
Collaborators: Sohrab Saeb and Jochen Triesch
Sponsors: Frankfurt Institute for Advanced Studies (FIAS); Bernstein Focus Neurotechnology, BMBF grant 01GQ0840; EU project 231722 "IM-CLeVeR", call FP7-ICT-2007-3