Midterm Schedule and Review Details for CS221 – Important Announcements

Attention CS221 students! The midterm is scheduled for November 24th from 7-9 PM, and there will be a review class next Tuesday. Additional study materials will be provided after class. Please note the homework return and regrade process details as well. We're exploring reflex agents through Pac-Man, and some schedule changes are coming up. Also, if you'd like to hear your song before class, feel free to reach out! Keep up the great work; there's still plenty of class left for learning!


Presentation Transcript

Announcements
• Midterm: 24th, 7-9 pm, NVIDIA
• Midterm review in class next Tuesday
• Extra study material for midterm (after class)
• Homework back
• Regrade process
• Looking into reflex agent on Pac-Man
• Some changes to the schedule
• Want to hear your song before class?

Pac-Man Grades (CS221 Grade Book: 16%. A lot of class left.)
How we see it, by score band:
• > 23 / 20: Yay
• > 20 / 20: Good
• >= 17 / 20: Good
• >= 15 / 20: Good
• >= 12 / 20: Ok?
• >= 9 / 20: Talk
• >= 2 / 20: Talk
Overlay labels shown over the bands on successive slides: Good job!, Alright, Rethink

Common Error: Formalize a problem
Real World Problem → Model the problem → Formal Problem → Apply an Algorithm → Evaluate Solution

Modeling Discrete Search
• what makes a state
• possible actions from state s
• Succ: states that could result from taking action a from state s
• reward for taking action a from state s
• starting state
• whether to stop
• the value of reaching a given stopping point

Modeling Markov Decision
• what makes a state
• possible actions from state s
• probability distribution of states that could result from taking action a from state s
• reward for taking action a from state s
• starting state
• whether to stop
• the value of reaching a given stopping point
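The components listed above can be written down as a small data structure. This is only a sketch: the field names (`actions`, `succ_prob`, `reward`, `start_state`, `is_end`, `end_value`) and the toy "walk along a line" problem are assumptions made for illustration, not the course's notation.

```python
from typing import Callable, Dict, List, NamedTuple

State = int
Action = str


class MDP(NamedTuple):
    """One field per modeling component (names are hypothetical)."""
    actions: Callable[[State], List[Action]]                 # possible actions from s
    succ_prob: Callable[[State, Action], Dict[State, float]]  # distribution over next states
    reward: Callable[[State, Action], float]                 # reward for taking a from s
    start_state: State                                       # starting state
    is_end: Callable[[State], bool]                          # whether to stop
    end_value: Callable[[State], float]                      # value of a stopping point


def make_line_mdp(goal: int = 3) -> MDP:
    """Toy problem (made up): walk from 0 to `goal`; "move" slips 20% of the time."""
    def actions(s: State) -> List[Action]:
        return ["move", "stay"]

    def succ_prob(s: State, a: Action) -> Dict[State, float]:
        if a == "stay":
            return {s: 1.0}
        return {s + 1: 0.8, s: 0.2}

    def reward(s: State, a: Action) -> float:
        return -1.0  # every step costs one unit

    return MDP(actions, succ_prob, reward, 0,
               lambda s: s == goal, lambda s: 10.0)


def q_value(mdp: MDP, values: Dict[State, float], s: State, a: Action) -> float:
    """Reward for (s, a) plus the expected value of the resulting state."""
    return mdp.reward(s, a) + sum(
        p * values[s2] for s2, p in mdp.succ_prob(s, a).items())


mdp = make_line_mdp()
values = {0: 0.0, 1: 0.0, 2: 0.0, 3: mdp.end_value(3)}
q_move_from_2 = q_value(mdp, values, 2, "move")
```

The only difference from the discrete search model is `succ_prob`: it returns a distribution over next states instead of a single successor.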

Modeling Bayes Net
Definition: Bayes Net = DAG + CPDs
DAG: directed acyclic graph (BN's structure)
• Nodes: random variables (typically discrete, but methods also exist to handle continuous variables)
• Arcs: indicate probabilistic dependencies between nodes. They go from cause to effect.
CPDs: conditional probability distributions (BN's parameters)
• Conditional probabilities at each node, usually stored as a table (conditional probability table, or CPT)
• Root nodes are a special case: they have no parents, so the CPD is just a prior.
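To make the structure/parameter split concrete, here is a minimal sketch of a two-node network with one arc from cause to effect. The variables (`Rain`, `WetGrass`) and every number in the tables are made up for illustration.

```python
# Structure (DAG): Rain -> WetGrass. Parameters: one CPD per node.
# All probabilities below are illustrative assumptions.

# Root node: no parents, so its CPD is just a prior.
PRIOR_RAIN = {True: 0.2, False: 0.8}

# Child node: a CPT with one row per value of the parent.
CPT_WET_GIVEN_RAIN = {
    True: {True: 0.9, False: 0.1},   # P(WetGrass | Rain = True)
    False: {True: 0.1, False: 0.9},  # P(WetGrass | Rain = False)
}


def joint(rain: bool, wet: bool) -> float:
    """P(Rain, WetGrass) = P(Rain) * P(WetGrass | Rain)."""
    return PRIOR_RAIN[rain] * CPT_WET_GIVEN_RAIN[rain][wet]


def posterior_rain(wet: bool) -> dict:
    """P(Rain | WetGrass = wet), by normalizing the joint over Rain."""
    unnormalized = {rain: joint(rain, wet) for rain in (True, False)}
    total = sum(unnormalized.values())
    return {rain: p / total for rain, p in unnormalized.items()}


rain_given_wet = posterior_rain(True)
```

The joint distribution is the product of each node's CPD entry given its parents, which is why the DAG and the CPTs together fully specify the model.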

Modeling Hidden Markov Model
Diagram: state variables X1, X2, X3, X4, X5 with evidence variables E1, E2, E3, E4, E5
Formally:
(1) State variables and their domains
(2) Evidence variables and their domains
(3) Probability of states at time 0
(4) Transition probability
(5) Emission probability

Previously on CS221 In Class Research

Hidden Markov Model
Diagram: state variables X1, X2, X3, X4, X5 with evidence variables E1, E2, E3, E4, E5
Formally:
(1) State variables and their domains
(2) Evidence variables and their domains
(3) Probability of states at time 0
(4) Transition probability
(5) Emission probability

Filtering
Diagram nodes: X1, X2, E1
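A minimal sketch of one exact filtering step, built from the HMM components listed earlier (probability of states at time 0, transition probability, emission probability). The two-state weather model and all of its numbers are assumptions chosen only to keep the example small.

```python
# Illustrative HMM: hidden state is the weather, evidence is whether
# an umbrella is seen. Every number here is a made-up example value.
PRIOR = {"rain": 0.5, "sun": 0.5}                      # states at time 0
TRANSITION = {                                         # P(X_next | X)
    "rain": {"rain": 0.7, "sun": 0.3},
    "sun": {"rain": 0.3, "sun": 0.7},
}
EMISSION = {                                           # P(E | X)
    "rain": {"umbrella": 0.9, "none": 0.1},
    "sun": {"umbrella": 0.2, "none": 0.8},
}


def elapse(belief, transition):
    """Push the belief through the transition model (passage of time)."""
    return {
        nxt: sum(belief[cur] * transition[cur][nxt] for cur in belief)
        for nxt in belief
    }


def observe(belief, emission, evidence):
    """Weight each state by the evidence likelihood, then renormalize."""
    unnormalized = {s: belief[s] * emission[s][evidence] for s in belief}
    total = sum(unnormalized.values())
    return {s: p / total for s, p in unnormalized.items()}


def filter_step(belief, transition, emission, evidence):
    """One filtering update: elapse time, then condition on evidence."""
    return observe(elapse(belief, transition), emission, evidence)


belief_after_one_step = filter_step(PRIOR, TRANSITION, EMISSION, "umbrella")
```

This version stores a probability for every state, which is exactly what becomes infeasible when the state space is very large or continuous.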

Track a Car!
Diagram: positions Pos1, Pos2 with distance observations Dist1, Dist2

Track a Robot!
Diagram: position Pos1 with distance observation Dist1
Plot: probability density against the value of d
• μ = true distance from x to your car
• σ = Const.SONAR_STD
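The density plot can be sketched in code, assuming the curve is a normal (Gaussian) density with the μ and σ given on the slide. The value assigned to `SONAR_STD` below is a placeholder, since the actual value of Const.SONAR_STD is not stated here, and the function names are hypothetical.

```python
import math

SONAR_STD = 15.0  # placeholder standing in for Const.SONAR_STD (assumed value)


def normal_pdf(d: float, mu: float, sigma: float) -> float:
    """Density at d of a normal distribution with mean mu and std sigma."""
    z = (d - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def emission_density(observed_dist, x, your_car, sigma=SONAR_STD):
    """Density of reading `observed_dist` if the tracked object is at x.

    mu is the true distance from x to your car, as on the slide.
    """
    mu = math.hypot(x[0] - your_car[0], x[1] - your_car[1])
    return normal_pdf(observed_dist, mu, sigma)


# A reading near the true distance (5.0) is more likely than one far from it.
near = emission_density(5.0, (3.0, 4.0), (0.0, 0.0))
far = emission_density(40.0, (3.0, 4.0), (0.0, 0.0))
```

This density is what an observe step would use as the weight for a hypothesized position x.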

Track a Robot!
Diagram: positions Pos1, Pos2

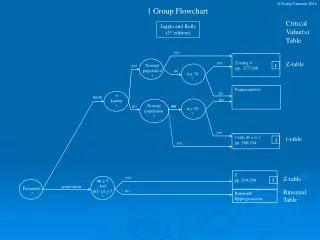

Particle Filters
• A particle is a hypothetical instantiation of a variable.
• Store a large number of particles.
• Elapse time by moving each particle given transition probabilities.
• When we get new evidence, we weight each particle and create a new generation.
• The density of particles for any given value is an approximation of the probability that our variable equals that value.

Particle Filtering
Figure: 3 × 3 grid of probabilities
0.0 0.1 0.0
0.0 0.0 0.2
0.0 0.2 0.5
Sometimes |X| is too big to use exact inference
• |X| may be too big to even store B(X)
• E.g. X is continuous
• E.g. X is a real world map
Solution: approximate inference
• Track samples of X, not all values
• Samples are called particles
• Time per step is linear in the number of samples
• But: number needed may be large
• In memory: list of particles, not states
This is how robot localization works in practice
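Since memory holds a list of particles rather than a table over states, the belief is recovered by counting. A minimal sketch, using the ten resampled particles listed on the Resample slide as input; the function name is hypothetical.

```python
from collections import Counter


def belief_from_particles(particles):
    """Approximate B(X): the fraction of particles sitting at each value."""
    counts = Counter(particles)
    n = len(particles)
    return {x: c / n for x, c in counts.items()}


# The "New Particles" from the Resample slide, as (column, row) tuples.
particles = [(2, 1), (2, 1), (2, 1), (3, 2), (2, 2),
             (2, 1), (1, 1), (3, 1), (2, 1), (1, 1)]
belief = belief_from_particles(particles)
```

Values with no particles simply do not appear in the result, which corresponds to an estimated probability of zero.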

Elapse Time
Each particle is moved by sampling its next position from the transition model
• Reflect the transition probs
• Here, most samples move clockwise, but some move in another direction or stay in place
This captures the passage of time
• If we have enough samples, close to the exact values before and after (consistent)
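A sketch of the elapse step under an assumed transition model: positions sit on a ring of 8 cells, and each particle moves clockwise with probability 0.8, stays with 0.1, or moves counter-clockwise with 0.1. The ring, the probabilities, and the function names are illustrative assumptions, not the model from the slide's figure.

```python
import random

RING_SIZE = 8                 # assumed number of positions on the ring
MOVES = [+1, 0, -1]           # clockwise, stay, counter-clockwise
MOVE_PROBS = [0.8, 0.1, 0.1]  # assumed transition probabilities


def sample_next(pos: int, rng: random.Random) -> int:
    """Sample one successor position from the transition model."""
    step = rng.choices(MOVES, weights=MOVE_PROBS, k=1)[0]
    return (pos + step) % RING_SIZE


def elapse_time(particles, rng: random.Random):
    """Move every particle independently; the particle count is unchanged."""
    return [sample_next(p, rng) for p in particles]


rng = random.Random(0)        # seeded so runs are repeatable
old_particles = [0] * 10000
new_particles = elapse_time(old_particles, rng)
```

No probabilities are stored or multiplied here: the transition model shows up only in how often each move is sampled.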

Observe Step
Slightly trickier:
• We downweight our samples based on the evidence
• Note that, as before, the probabilities don't sum to one, since most have been downweighted (in fact they sum to an approximation of P(e))
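A sketch of the observe step. The likelihood table reuses the weights shown on the Resample slide; treating those weights as a per-position evidence likelihood, and giving unlisted positions a likelihood of 0.0, are assumptions made for this example.

```python
# Likelihood of the current evidence at each position. The numbers are the
# weights from the Resample slide; reading them as P(e | x) is an assumption.
EVIDENCE_LIKELIHOOD = {
    (3, 3): 0.1, (2, 1): 0.9, (3, 1): 0.4,
    (3, 2): 0.3, (2, 2): 0.4, (1, 1): 0.4,
}

# The "Old Particles" from the Resample slide.
OLD_PARTICLES = [(3, 3), (2, 1), (2, 1), (3, 1), (3, 2),
                 (2, 2), (1, 1), (3, 1), (2, 1), (3, 2)]


def weight_particles(particles, likelihood):
    """Attach to each particle the likelihood of the evidence at its position."""
    return [(p, likelihood.get(p, 0.0)) for p in particles]


def estimate_evidence_prob(weighted):
    """Average weight: each particle carries mass 1/N, so this approximates P(e)."""
    return sum(w for _, w in weighted) / len(weighted)


weighted = weight_particles(OLD_PARTICLES, EVIDENCE_LIKELIHOOD)
p_evidence = estimate_evidence_prob(weighted)
```

The weights are deliberately left unnormalized at this point; the resampling step takes care of that.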

Resample
Rather than tracking weighted samples, we resample: N times, we choose from our weighted sample distribution (i.e. draw with replacement).
This is analogous to renormalizing the distribution.
Now the update is complete for this time step; continue with the next one.
Old Particles:
• (3,3) w=0.1
• (2,1) w=0.9
• (2,1) w=0.9
• (3,1) w=0.4
• (3,2) w=0.3
• (2,2) w=0.4
• (1,1) w=0.4
• (3,1) w=0.4
• (2,1) w=0.9
• (3,2) w=0.3
New Particles:
• (2,1) w=1
• (2,1) w=1
• (2,1) w=1
• (3,2) w=1
• (2,2) w=1
• (2,1) w=1
• (1,1) w=1
• (3,1) w=1
• (2,1) w=1
• (1,1) w=1
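Resampling is a weighted draw with replacement, which can be sketched with the standard library's `random.choices`. The input is the old particle list from this slide; the function name and the seed are arbitrary choices for the example.

```python
import random

# (position, weight) pairs copied from the "Old Particles" list.
OLD_WEIGHTED = [
    ((3, 3), 0.1), ((2, 1), 0.9), ((2, 1), 0.9), ((3, 1), 0.4), ((3, 2), 0.3),
    ((2, 2), 0.4), ((1, 1), 0.4), ((3, 1), 0.4), ((2, 1), 0.9), ((3, 2), 0.3),
]


def resample(weighted, n: int, rng: random.Random):
    """Draw n particles with replacement, in proportion to their weights.

    The returned particles are unweighted (every one counts as w=1).
    """
    positions = [p for p, _ in weighted]
    weights = [w for _, w in weighted]
    return rng.choices(positions, weights=weights, k=n)


rng = random.Random(1)  # seeded so runs are repeatable
new_particles = resample(OLD_WEIGHTED, len(OLD_WEIGHTED), rng)

# With many draws, the share of (2,1) approaches 2.7 / 5.0 = 0.54,
# its total weight divided by the sum of all weights.
many = resample(OLD_WEIGHTED, 20000, rng)
share_2_1 = many.count((2, 1)) / len(many)
```

Because `random.choices` normalizes the weights internally, no explicit renormalization is needed, which matches the remark that resampling is analogous to renormalizing the distribution.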

Track a Robot!
Diagram: positions Pos1, Pos2 with wall observations Walls1, Walls2
• Sometimes sensors are wrong
• Sometimes motors don't work

Transition Prob
Figure label: Start

Emission Prob
Laser sensor: sense walls