SAMPLING DISTRIBUTIONS AND HYPOTHESIS TESTING

Outline • Sampling Distributions revisited • Hypothesis Testing • Using the Normal Distribution to test Hypotheses • Type I and Type II Errors • One vs. Two Tailed Tests Chapter 4

Statistics is Arguing • Typically, we are arguing either 1) that some value (or mean) is different from some other value (or mean), or 2) that there is a relation between the values of one variable and the values of another. • Thus, we typically first produce some null hypothesis (i.e., no difference or relation) and then attempt to show how improbable something is given the null hypothesis.

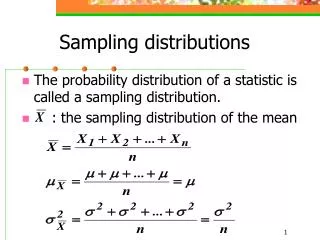

Sampling Distributions • Just as we can plot distributions of observations, we can also plot distributions of statistics (e.g., means). • These distributions of sample statistics are called sampling distributions. • For example, if we consider the 24 students in a tutorial who estimated my weight as a population, their guesses have a mean ($\mu$) of 168.75 and a standard deviation ($\sigma$) of 12.43 ($\sigma^2 = 154.51$).

Sampling Distributions • If we repeatedly sampled groups of 6 people, found the mean ($\bar{x}$) of their estimates, and then plotted the $\bar{x}$'s, the distribution might look like:

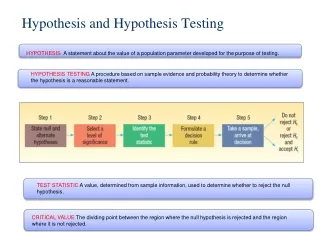
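The repeated-sampling idea above can be sketched in a few lines of Python. This is only an illustration: the 24 individual guesses are not listed in the slides, so the `population` below is a hypothetical one simulated with roughly the reported mean and standard deviation, and the function name `sample_means` is made up for this sketch.

```python
import random
import statistics

# Assumption: the real 24 guesses are not given, so we simulate a
# hypothetical population using the reported mean and standard deviation.
random.seed(0)
population = [random.gauss(168.75, 12.43) for _ in range(24)]

def sample_means(pop, sample_size=6, n_samples=10_000):
    """Repeatedly draw groups of `sample_size` people and return each group's mean."""
    return [statistics.mean(random.sample(pop, sample_size))
            for _ in range(n_samples)]

means = sample_means(population)
pop_mean = statistics.mean(population)
pop_sd = statistics.pstdev(population)
means_sd = statistics.pstdev(means)

print(f"mean of individual guesses: {pop_mean:.2f}")
print(f"mean of the sample means:   {statistics.mean(means):.2f}")
print(f"SD of individual guesses:   {pop_sd:.2f}")
print(f"SD of the sample means:     {means_sd:.2f}")
```

The sample means cluster around the population mean, but with much less spread than the individual guesses. That narrower distribution of means is the sampling distribution of the mean for groups of 6.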

Hypothesis Testing • What I have previously called “arguing” is more appropriately called hypothesis testing. • Hypothesis testing normally consists of the following steps: 1) some research hypothesis (or alternative hypothesis) is proposed - H1. 2) the null hypothesis is also proposed - H0.

Hypothesis Testing 3) the relevant sampling distribution is obtained under the assumption that H0 is correct. 4) I obtain a sample representative of H1 and calculate the relevant statistic (or observation). 5) Given the sampling distribution, I calculate the probability of observing, by chance, the statistic (or observation) noted in step 4. 6) On the basis of this probability, I make a decision.

The Beginnings of an Example • One of the students in the tutorial guessed my weight to be 200 lbs. I think that said student was fooling around. That is, I think that guess represents something different than do the rest of the guesses. • H0 - the guess is not really different. • H1 - the guess is different.

The Beginnings of an Example 1) obtain the sampling distribution under H0. 2) calculate the probability of a guess of 200 or higher, given this distribution. 3) use that probability to decide whether this difference is just chance, or something more.

A Touch of Philosophy • Some students new to this idea of hypothesis testing find this whole business of creating a null hypothesis and then shooting it down a tad on the weird side. Why do it that way? • This dates back to a philosopher named Karl Popper, who claimed that it is very difficult to prove something to be true, but not so difficult to prove it to be untrue.

A Touch of Philosophy • So, it is easier to prove H0 to be wrong than to prove H1 to be right. • In fact, we never really prove H1 to be right. That is just something we imply (similarly H0).

Using the Normal Distribution to test Hypotheses • The “Marty’s Weight” example begun earlier is an example of a situation where we want to compare one observation to a distribution of observations. • This represents the simplest hypothesis-testing situation because the sampling distribution is simply the distribution of the individual observations.

Using the Normal Distribution to test Hypotheses • Thus, in this case we can use the stuff we learned about z-scores to test hypotheses that some individual observation is either abnormally high or abnormally low. • That is, we use our mean and standard deviation to calculate a z-score for the critical value, then go to the tables to find the probability of observing a value as high or higher than (or as low or lower than) the one we wish to test.

Finishing the Example • $\mu = 168.75$, critical value $= 200$, $\sigma = 12.43$ • $z = \frac{200 - \mu}{\sigma} = \frac{200 - 168.75}{12.43} \approx 2.51$

Finishing the Example • From the z-table, the area of the portion of the curve above a z of 2.51 (i.e., the smaller portion) is approximately .0060. • Thus, the probability of observing a score as high or higher than 200 is .0060.
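As a check on the table lookup, the same z-score and upper-tail area can be computed directly. This is a minimal sketch using only the Python standard library; the helper name `upper_tail` is made up here, and the code uses the unrounded z-score, whereas the z-table lookup rounds z to 2.51 first.

```python
import math

mu = 168.75      # mean of the 24 guesses
sigma = 12.43    # standard deviation of the 24 guesses
observed = 200   # the guess we want to test

def upper_tail(z):
    """Area under the standard normal curve at or above z."""
    return 0.5 * math.erfc(z / math.sqrt(2))

z = (observed - mu) / sigma
p = upper_tail(z)

print(f"z = {z:.2f}")
print(f"P(Z >= z) = {p:.4f}")
```

To four decimal places the result agrees with the tabled value of about .0060.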

Making Decisions given Probabilities • It is important to realize that all our test really tells us is the probability of some event given some null hypothesis. • It does not tell us whether that probability is sufficiently small to reject H0; that decision is left to the experimenter. • In our example, the probability is so low that the decision is relatively easy. If H0 were true, there would be only a .60% chance of observing a guess of 200 or higher. Thus, we can reject H0 without much worry.

Making Decisions given Probabilities • But what if the probability was 10% or 5%? What probability is small enough to reject H0? • It turns out there are two answers to that: • the real answer. • the “conventional” answer.

The “Real” Answer • First some terminology. . . . • The probability level we pick as our cut-off for rejecting H0 is referred to as our rejection level or our significance level. • The set of outcomes whose probability falls below our rejection or significance level is called our rejection region.

The “Real” Answer • OK, so the problem is choosing an appropriate rejection level. • In doing so, we should consider the four possible situations that could occur when we’re hypothesis testing.

Type I and Type II Errors • A Type I error is rejecting the null hypothesis when it is really true. • Example: saying that the person who guessed I weigh 200 lbs was just screwing around when, in fact, it was an honest guess just like the others. • We can specify exactly what the probability of making that error is; in our example it is .60%.

Type I and Type II Errors • Usually we specify some “acceptable” level of error before running the study, then call something significant if its probability is below this level. • This acceptable level of error is typically denoted as $\alpha$. • Before setting some level of $\alpha$, it is important to realize that levels of $\alpha$ are also linked to Type II errors.
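One way to see what a chosen $\alpha$ means is to simulate many honest guesses (so H0 is true by construction) and count how often they land in the rejection region anyway. The sketch below assumes $\alpha = .05$, a one-tailed test on the high side, and normally distributed guesses with the mean and standard deviation from the example; all variable names are illustrative.

```python
import random
from statistics import NormalDist

random.seed(1)

alpha = 0.05                                # assumed significance level
h0 = NormalDist(mu=168.75, sigma=12.43)     # distribution of honest guesses
cutoff = h0.inv_cdf(1 - alpha)              # guesses above this are "significant"

# Every simulated guess is honest, so every rejection is a Type I error.
n_trials = 100_000
false_alarms = sum(random.gauss(h0.mean, h0.stdev) > cutoff
                   for _ in range(n_trials))
type_i_rate = false_alarms / n_trials

print(f"cutoff = {cutoff:.1f} lbs")
print(f"simulated Type I error rate = {type_i_rate:.3f}")
```

The simulated rate comes out close to the chosen $\alpha$: the significance level is the long-run proportion of true null hypotheses we would wrongly reject.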

Type I and Type II Errors • A Type II error is failing to reject a null hypothesis that is really false. • Example: judging OJ as not guilty when he is actually guilty. • The probability of making a Type II error is denoted as $\beta$.

Type I and Type II Errors • Unfortunately, it is impossible to precisely calculate $\beta$ because we do not know the shape of the sampling distribution under H1. • It is possible to “approximately” measure $\beta$, and we will talk a bit about that in Chapter 8. • For now, it is critical to know that there is a trade-off between $\alpha$ and $\beta$: as one goes down, the other goes up. • Thus, it is important to consider the situation prior to setting a significance level.
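The trade-off can be illustrated numerically if we are willing to invent a distribution under H1. The sketch below does exactly that: it assumes, purely for illustration, that joke guesses are normally distributed around 200 lbs with the same standard deviation as honest guesses. That H1 distribution is hypothetical, not something the example provides, and `beta_for` is a made-up helper name.

```python
from statistics import NormalDist

h0 = NormalDist(mu=168.75, sigma=12.43)   # honest guesses (from the example)
h1 = NormalDist(mu=200.0, sigma=12.43)    # hypothetical joke guesses (assumed)

def beta_for(alpha):
    """Type II error rate for a one-tailed (upper) test at the given alpha."""
    cutoff = h0.inv_cdf(1 - alpha)   # reject H0 above this value
    return h1.cdf(cutoff)            # chance a joke guess falls below the cutoff

for alpha in (0.10, 0.05, 0.01, 0.001):
    print(f"alpha = {alpha:<6} beta = {beta_for(alpha):.3f}")
```

As $\alpha$ is made stricter, the cutoff moves further into the upper tail, and more of the assumed H1 distribution falls below it, so $\beta$ rises.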

The Conventional Answer • While issues of Type I versus Type II error are critical in certain situations, psychology experiments are not typically among them (although they sometimes are). • As a result, psychology has adopted the standard of accepting $\alpha = .05$ as a conventional level of significance. • It is important to note, however, that there is nothing magical about this value (although you wouldn’t know it by looking at published articles).

One vs. Two Tailed Tests • Often, we want to determine if some critical difference (or relation) exists and we are not so concerned about the direction of the effect. • That situation is termed two-tailed, meaning we are interested in extreme scores at either tail of the distribution. • Note that when performing a two-tailed test we consider something significant only if it falls in the bottom 2.5% or the top 2.5% of the distribution (to keep $\alpha$ at 5%).

One vs. Two Tailed Tests • If we are interested in only a high or only a low extreme, then we are doing a one-tailed or directional test, and we look only to see if the difference is in the specific critical region encompassing all 5% in the appropriate tail. • Two-tailed tests are more common, usually because either outcome would be interesting, even if only one was expected.
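The difference between the two kinds of test shows up in the critical z-values and in the resulting probabilities. The following sketch assumes $\alpha = .05$ and reuses the weight example; the variable names are illustrative.

```python
from statistics import NormalDist

std_normal = NormalDist()   # standard normal: mean 0, SD 1
alpha = 0.05

# One-tailed: all 5% in one tail. Two-tailed: 2.5% in each tail.
one_tailed_cutoff = std_normal.inv_cdf(1 - alpha)
two_tailed_cutoff = std_normal.inv_cdf(1 - alpha / 2)

# The guess of 200 lbs from the example.
z = (200 - 168.75) / 12.43
p_one = 1 - std_normal.cdf(z)   # as high or higher
p_two = 2 * p_one               # as extreme in either direction

print(f"one-tailed critical z:  {one_tailed_cutoff:.3f}")
print(f"two-tailed critical z: +/-{two_tailed_cutoff:.3f}")
print(f"one-tailed p = {p_one:.4f}, two-tailed p = {p_two:.4f}")
```

The two-tailed test demands a more extreme z before calling a result significant, which is the price of watching both tails. In this example the guess of 200 is significant at the .05 level either way.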

Other Sampling Distributions • The basics of hypothesis testing described in this chapter do not change. • All that changes across chapters is the specific sampling distribution (and its associated table of values). • The critical issue will be to realize which sampling distribution is the one to use in which situation.