Multiple Regression Interpretation

Presentation Transcript

Correlation, Causation

Think about a light switch and the light on the same electrical circuit. If you and I collected data on someone flipping the switch and the light going on and off, we could say there is correlation from a statistical point of view. In fact, you and I know we can say something even stronger: in this case there is causation. In the world of business (and other areas) we want to find relationships between variables. We hope to find correlation, and if we also have a compelling theory, maybe we can claim causation.

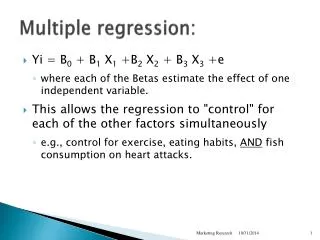

Example

Say we are interested in crop yield on a farm. What variables are correlated with crop yield? You and I know the amount of water has been shown to have an impact on yield, as have fertilizer and soil type, among other things. In a multiple regression setting, if $y$ = yield, $x_1$ = amount of water, and $x_2$ = amount of fertilizer, then the multiple regression model would be of the form $y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \varepsilon$, where $\varepsilon$ is the error term, and our estimated regression equation would be of the form $\hat{y} = b_0 + b_1 x_1 + b_2 x_2$.
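The sketch below fits an estimated equation like the one above using NumPy's least squares solver. It is a minimal illustration, and the water, fertilizer, and yield numbers are made up. They are not the data behind the slides' printout, and in class the printout does this step for us.

```python
import numpy as np

# Hypothetical data (made up for illustration, not from the slides):
# x1 = water applied, x2 = fertilizer applied, y = crop yield.
x1 = np.array([10.0, 12.0, 15.0, 18.0, 20.0, 22.0, 25.0, 28.0, 30.0, 32.0])
x2 = np.array([5.0, 3.0, 6.0, 4.0, 7.0, 5.0, 8.0, 6.0, 9.0, 7.0])
y = np.array([40.1, 40.8, 51.2, 51.0, 63.5, 59.9, 73.2, 71.8, 84.0, 80.5])

# Design matrix: a column of ones for b0, then one column per x variable.
X = np.column_stack([np.ones_like(x1), x1, x2])

# Least squares estimates b = (b0, b1, b2) minimise the sum of squared residuals.
b, *_ = np.linalg.lstsq(X, y, rcond=None)
y_hat = X @ b  # fitted values y hat
print("b0, b1, b2 =", np.round(b, 3))
```

The column of ones is what produces the intercept $b_0$. Without it, the fitted equation would be forced through the origin.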

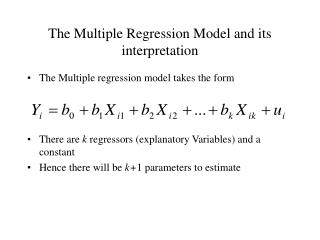

F Test

In a multiple regression, the case of more than one x variable, we conduct a statistical test about the overall model. The basic question is: do all the x variables, as a package, have a relationship with the y variable? The null hypothesis is that there is no relationship. We write this in shorthand as $H_0: \beta_1 = \beta_2 = \cdots = \beta_p = 0$, where $p$ is the number of x variables. If this null hypothesis is true, every slope is zero and the equation reduces to $y = \beta_0 + \varepsilon$, so the x’s have no influence on y. The alternative hypothesis is that at least one of the betas is not zero. Rejecting the null means the x’s as a group are related to y. The test is performed with what is called the F test. From the sample data we calculate a number called the F statistic and use this value to perform the test. In our class the F statistic will be calculated for us because it is a tedious calculation.
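The printout does this calculation for us. For the curious, here is a minimal Python sketch of what goes into it: $F = \frac{SSR/p}{SSE/(n-p-1)}$. The sketch assumes `y_hat` holds least squares fitted values from a model that includes an intercept. The function name `f_statistic` is just an illustrative choice.

```python
def f_statistic(y, y_hat, p):
    """Overall F statistic for H0: beta_1 = ... = beta_p = 0.

    Assumes y_hat are least squares fitted values from a model with an
    intercept and p x variables.
    """
    n = len(y)
    y_bar = sum(y) / n
    ssr = sum((yh - y_bar) ** 2 for yh in y_hat)            # variation explained by the x's
    sse = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))   # variation left unexplained
    msr = ssr / p              # numerator, df = p
    mse = sse / (n - p - 1)    # denominator, df = n - p - 1
    return msr / mse

# Tiny made-up example with one x (x = 1, 2, 3, 4); the least squares
# line for these y values is y hat = 1.9x, giving the fitted values below.
print(f_statistic([2, 4, 5, 8], [1.9, 3.8, 5.7, 7.6], p=1))
```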

F

Under the null hypothesis, the F statistic we calculate from a sample follows an F distribution, similar to the one shown. The F test here is a one-tailed test. The farther to the right the sample statistic falls, the more inclined we are to reject the null, because extreme values are unlikely to occur when the null is true. In practice we pick a level of significance, alpha, and use a critical F to separate failing to reject the null from rejecting it.

[Figure: F distribution with the right tail beyond the critical F shaded; the shaded area equals alpha.]

Critical F

To pick the critical F, we have two kinds of degrees of freedom to worry about: the numerator and the denominator degrees of freedom. They are called this because the F statistic is a fraction. Numerator degrees of freedom = number of x’s, in general called p. Denominator degrees of freedom = n - p - 1, where n is the sample size. As an example, if n = 10 and p = 2, we would say the degrees of freedom are 2 and 7, listing the numerator value first. From an F table (maybe page 672 of a stats book) you would see the critical F is 4.74 when alpha is .05. Many books also have tables for alpha = .025 and .01.
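Table lookups like this can be checked in code. When the numerator degrees of freedom equal 2 (two x’s, as in the example), the F tail area has an exact closed form: $P(F > x) = (1 + 2x/d_2)^{-d_2/2}$, where $d_2$ is the denominator df. Solving that for $x$ gives the critical F. The minimal Python sketch below does this. It only covers the p = 2 case, and the function name is hypothetical. For other numerator df, SciPy's `scipy.stats.f.ppf(1 - alpha, dfn, dfd)` handles the general case.

```python
def critical_f_two_numerator_df(alpha, df_den):
    """Critical F when the numerator df is 2 (only valid for that case).

    Solves (1 + 2x / df_den) ** (-df_den / 2) = alpha for x.
    """
    return (df_den / 2) * (alpha ** (-2 / df_den) - 1)

n, p = 10, 2
df_den = n - p - 1  # 7; numerator df is p = 2
for alpha in (0.05, 0.025, 0.01):
    print(alpha, round(critical_f_two_numerator_df(alpha, df_den), 2))
```

The alpha = .05 result rounds to 4.74, the same value the table gives.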

[Figure: F distribution with alpha = .05 shaded to the right of the critical F of 4.74.]

In our example the critical F is 4.74. If the F statistic from the sample is greater than 4.74, we reject the null and conclude that the x’s as a package have a relationship with the variable y. In the example on the previous slide, the F stat is 32.8784, so the null hypothesis would be rejected in that case.

[Figure: the same F distribution with F = 32.8784 marked far to the right of 4.74 and its tail area colored in.]

P-value

The computer printout has a number on it that means we do not even have to look at the F table if we do not want to, but the idea is based on the table. Here you see 32.8784 is in the rejection region. I have colored in the tail area for this number. Since 4.74 has a tail area equal to alpha = .05 here, and 32.8784 lies farther to the right, we know the tail area for 32.8784 must be less than .05. This tail area is the p-value for the test statistic calculated from the sample, and on the computer printout it is labeled Significance F. In the example the value is .0003.
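Significance F can be reproduced in code. Because the example has numerator df = 2 and denominator df = 7, the same closed-form tail area applies: $P(F > x) = (1 + 2x/d_2)^{-d_2/2}$. This is a minimal Python sketch valid only when the numerator df is 2, and the function name is hypothetical. In general, `scipy.stats.f.sf(f_stat, dfn, dfd)` gives the right-tail area.

```python
def significance_f_two_numerator_df(f_stat, df_den):
    """Right-tail area beyond f_stat when the numerator df is 2."""
    return (1 + 2 * f_stat / df_den) ** (-df_den / 2)

alpha = 0.05
p_value = significance_f_two_numerator_df(32.8784, 7)
print(round(p_value, 4))  # 0.0003
print("reject H0:", p_value < alpha)
```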

SOOOOOOO, reject the null if the F stat > the critical F in the table, or if the Significance F < alpha. If you can NOT reject the null, then at this stage of the game we have no evidence of a relationship between the x’s and y, and our work here would be done. So from here on I assume we have rejected the null.

T tests

After the F test we would do a t test on each of the slopes, similar to what we did in the simple linear regression case. This checks whether each variable has its own relationship with y, over and above the other x’s in the model. As before, we reject the null of a zero slope when the p-value on the slope is less than alpha.
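The t statistics on the printout can also be reproduced. Each one is a coefficient divided by its standard error, with standard errors from $s^2 (X^\top X)^{-1}$ and $s^2 = SSE/(n-p-1)$. The minimal NumPy sketch below reuses made-up crop data, not the slides' data. The critical t of 2.365 is the two-tailed alpha = .05 table value for 7 degrees of freedom. The function name is hypothetical.

```python
import numpy as np

def coefficient_t_stats(X, y):
    """Return (b, standard errors, t statistics) for each coefficient.

    X must already include a leading column of ones for the intercept.
    Each t statistic tests H0: beta_j = 0 with n - p - 1 degrees of freedom.
    """
    n, k = X.shape                          # k = p + 1 coefficients
    b, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ b
    mse = resid @ resid / (n - k)           # SSE / (n - p - 1)
    cov_b = mse * np.linalg.inv(X.T @ X)    # estimated covariance of b
    se = np.sqrt(np.diag(cov_b))
    return b, se, b / se

# Hypothetical crop data (made up for illustration): water, fertilizer, yield.
x1 = np.array([10.0, 12.0, 15.0, 18.0, 20.0, 22.0, 25.0, 28.0, 30.0, 32.0])
x2 = np.array([5.0, 3.0, 6.0, 4.0, 7.0, 5.0, 8.0, 6.0, 9.0, 7.0])
y = np.array([40.1, 40.8, 51.2, 51.0, 63.5, 59.9, 73.2, 71.8, 84.0, 80.5])
X = np.column_stack([np.ones_like(x1), x1, x2])

b, se, t = coefficient_t_stats(X, y)
t_crit = 2.365  # two-tailed alpha = .05, df = 10 - 2 - 1 = 7, from a t table
for name, tj in zip(["b1 (water)", "b2 (fertilizer)"], t[1:]):
    print(f"{name}: t = {tj:.2f}, reject H0: {abs(tj) > t_crit}")
```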

Multicollinearity

Can you say multicollinearity? Sure you can. Let’s all say it together on the count of 3. 1, 2, 3, multicollinearity! Very good class, now listen up! Volumes have been written about multicollinearity, and we want a basic feel for the problem here. You and I want x variables that help explain y, so that we can predict and explain movement in y. For example, if we can predict and explain crop yield, maybe we can make yield higher so that we can feed the world! So we want x’s that are correlated with y. This is a good thing. But sometimes the x’s are correlated with each other. This is called multicollinearity. The problem is that we sometimes cannot see the separate influence an x has on y, because the other x’s have picked up that influence through their correlation with it.

From a practical point of view, multicollinearity could have the following effect on your research. You reject the null hypothesis of no relationship between all the x variables and y with the F test, but you cannot reject the null in some or all of the separate t tests for the separate slopes. Don’t freak out (yet!). Let’s think about crop yield. Some farmers have water systems. The more it rains in a summer, the less water the farmers directly apply. (Okay, maybe I am ignorant here and farmers can use all the water they can apply. It’s an example.) If you included both inches of rain and water applied, there would be a correlation between the two. This may make it difficult to see the separate impact of either the rain or the water from the system. If the x’s (the independent variables) have correlations more extreme than .7 or -.7, then multicollinearity could be a problem.

r square

r square on the regression printout is a measure designed to indicate the strength of the impact of the x’s on y. The number lies between 0 and 1, with values closer to 1 indicating a stronger relationship. r square is actually the proportion of the variation in y that is accounted for by the x variables (multiply by 100 to read it as a percentage). This is also an important idea, because although we may have a significant relationship, we may not be explaining much. In the yield example, the more variation we can explain, the more we can control yield and thus, perhaps, feed the world. Or, in a business setting, the more variation we can explain, the more profit we might make.
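Here is a minimal Python sketch of the r square calculation: $R^2 = 1 - SSE/SST$. It assumes least squares fitted values from a model with an intercept. The second function shows how the overall F statistic can be written in terms of r square: $F = \frac{R^2/p}{(1-R^2)/(n-p-1)}$. Both function names are hypothetical, and the small data set is made up.

```python
def r_squared(y, y_hat):
    """Proportion of the variation in y accounted for by the fitted model."""
    y_bar = sum(y) / len(y)
    sst = sum((yi - y_bar) ** 2 for yi in y)               # total variation
    sse = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))  # unexplained variation
    return 1 - sse / sst

def f_from_r_squared(r2, n, p):
    """Overall F statistic expressed through r square."""
    return (r2 / p) / ((1 - r2) / (n - p - 1))

# Made-up one-x example: y values and fitted values from y hat = 1.9x.
r2 = r_squared([2, 4, 5, 8], [1.9, 3.8, 5.7, 7.6])
print(round(r2, 4))
print(round(f_from_r_squared(r2, n=4, p=1), 2))
```

This relationship explains why a significant F can go along with a modest r square when the sample is large.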