Multiple Regression



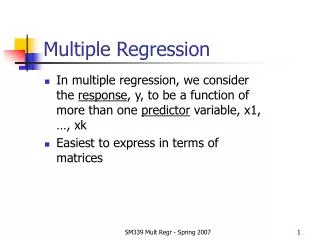

Multiple Regression • In multiple regression, we consider the response, y, to be a function of more than one predictor variable, x1, …, xk • Easiest to express in terms of matrices SM339 Mult Regr - Spring 2007

Multiple Regression • Let Y be a column vector whose rows are the observations of the response • Let X be a matrix • The first column of X contains all 1’s • The other columns contain the observations of the predictor variables

Multiple Regression • Y = X*B + E • B is a column vector of coefficients • E is a column vector of the normal errors • Matlab handles matrices very well, but you MUST watch the sizes and the order when you multiply

Multiple Regression • A lot of MR is similar to simple regression • B=X\Y gives the coefficients • YH=X*B gives the fitted values • SSE = (Y-YH)'*(Y-YH) • SSR = (Yavg-YH)'*(Yavg-YH) • SST = (Y-Yavg)'*(Y-Yavg)
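The formulas on this slide can be tried out directly. What follows is a minimal sketch on made-up data (the numbers and the names x1, x2 are assumptions for illustration, not course data). It also checks that SST = SSR + SSE, which holds when X contains the column of 1's.

```matlab
% Made-up data: 8 observations, 2 predictors (illustration only)
x1 = [1 2 3 4 5 6 7 8]';
x2 = [2 1 4 3 6 5 8 7]';
Y  = [3.1 3.9 7.2 7.8 11.1 11.9 15.2 15.8]';

X    = [ones(size(Y)) x1 x2];   % first column of 1's gives the intercept
B    = X\Y;                     % least-squares coefficients
YH   = X*B;                     % fitted values
Yavg = mean(Y);                 % scalar; Matlab expands it against the vectors

SSE = (Y-YH)'*(Y-YH);           % error sum of squares
SSR = (Yavg-YH)'*(Yavg-YH);     % regression sum of squares
SST = (Y-Yavg)'*(Y-Yavg);       % total sum of squares
fprintf('SSE=%.4f  SSR=%.4f  SST=%.4f\n', SSE, SSR, SST);
```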

Multiple Regression • We can set up the ANOVA table • df for Regr = number of predictor variables • (So df=1 for simple regr) • F test is as before • R^2 is as before, with the same interpretation
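As a rough sketch of the ANOVA quantities, again on made-up data: the p-value is computed from the incomplete beta function so that no toolbox is needed. That choice is an assumption of this example, not something the slides specify.

```matlab
% Made-up data (illustration only)
x1 = [1 2 3 4 5 6 7 8]';
x2 = [2 1 4 3 6 5 8 7]';
Y  = [3.1 3.9 7.2 7.8 11.1 11.9 15.2 15.8]';
X  = [ones(size(Y)) x1 x2];

[n, p] = size(X);               % p counts the column of 1's
YH  = X*(X\Y);
SSE = sum((Y-YH).^2);
SST = sum((Y-mean(Y)).^2);
SSR = SST - SSE;

dfR = p - 1;                    % df for regression = number of predictor variables
dfE = n - p;                    % df for error
MSR = SSR/dfR;
MSE = SSE/dfE;
F   = MSR/MSE;                  % overall F statistic
R2  = SSR/SST;                  % fraction of variation explained
% Upper-tail probability of the F distribution via the incomplete beta function
pv  = betainc(dfE/(dfE + dfR*F), dfE/2, dfR/2);
fprintf('F=%.2f  pv=%.4g  R^2=%.4f\n', F, pv, R2);
```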

Matrix Formulation • Instead of X\Y, we can solve the equations for B • B = (X'*X)^(-1)*X'*Y • We saw things like X'*X before as sums of squares • Because of the shape of X, X'*X is square, so it makes sense to use its inverse • (Actually, X'*X is always square)
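A quick check, on made-up data, that the backslash solution and the explicit formula agree. This assumes the columns of X are linearly independent, so that X'*X has an inverse.

```matlab
% Made-up data (illustration only)
x1 = [1 2 3 4 5 6 7 8]';
x2 = [2 1 4 3 6 5 8 7]';
Y  = [3.1 3.9 7.2 7.8 11.1 11.9 15.2 15.8]';
X  = [ones(size(Y)) x1 x2];

B1 = X\Y;                       % least squares via backslash
B2 = inv(X'*X)*X'*Y;            % explicit matrix formula from the slide
disp([B1 B2]);                  % the two columns should match
disp(size(X'*X));               % X'*X is square: (number of columns of X) on each side
```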

Matrix Formulation • When we consider the coefficients, we have not only variances (SDs), but also the relationships between coeffs • The SD of a coefficient is est(SD) times the square root of the corresponding diagonal element of (X'*X)^(-1) • We can use this to get conf int for coefficients (and other information)
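A minimal sketch of coefficient SDs and 95% confidence intervals on made-up data. Here est(SD) is taken to be sqrt(MSE), and the t critical value is found numerically with fzero and betainc to avoid toolbox functions; both choices are assumptions of this example.

```matlab
% Made-up data (illustration only)
x1 = [1 2 3 4 5 6 7 8]';
x2 = [2 1 4 3 6 5 8 7]';
Y  = [3.1 3.9 7.2 7.8 11.1 11.9 15.2 15.8]';
X  = [ones(size(Y)) x1 x2];

[n, p] = size(X);
B      = X\Y;
df     = n - p;                          % error df
MSE    = sum((Y - X*B).^2)/df;
invxtx = inv(X'*X);
covB   = MSE*invxtx;                     % variances on the diagonal, covariances off it
sdB    = sqrt(diag(covB));               % = sqrt(MSE)*sqrt(diag(invxtx))

% Two-sided p-value of a t ratio with df degrees of freedom
tpv   = @(t) betainc(df/(df + t^2), df/2, 0.5);
tcrit = fzero(@(t) tpv(t) - 0.05, [0.5 50]);   % 95% critical value
ci    = [B - tcrit*sdB, B + tcrit*sdB];  % one row per coefficient
disp([B sdB ci]);
```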

Matrix Formulation • Exercises • 1. Compute coeffs, ANOVA and SD(coeff) for Fig13.11, p 608 where time = f(vol, wt, shift). Find PV for testing B1=0. Find 95% confidence interval for B1. • 2. Repeat for Fig 13.17, p. 613 where y=run time • 3. Repeat for DS13.2.1, p 614 where y= sales volume

Comparing Models • Suppose we have two models in mind • #1 uses a set of predictors • #2 includes #1, but has extra variables • SSE for #2 is never greater than SSE for #1 • We can always consider a model for #2 which has zeros for the new coefficients

Comparing Models • As always, we have to ask “Is the decrease in SSE unusually large?” • Suppose that model1 has p variables and model2 has p+k variables • SSE1 (scaled by the error variance) is Chi^2 with df=N-p, where p counts every column of X, including the column of 1’s • SSE2 is Chi^2 with df=N-(p+k) • Then, if the k extra coefficients are really zero, SSE1-SSE2 is Chi^2 with df=k

Comparing Models • Partial F = ((SSE1-SSE2)/k) / MSE2 • Note that the numerator is a Chi^2 divided by its df • The denominator is the MS for the model with more variables • Note that the subtraction is “larger – smaller”
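The partial F computation can be sketched as follows on made-up data. The helper names sse and pv are hypothetical, and the p-value again uses betainc as a stand-in for a toolbox F distribution function.

```matlab
% Made-up data: model 2 adds one extra variable (x2) to model 1
x1 = (1:10)';
x2 = [3 1 4 1 5 9 2 6 5 3]';
y  = [2.9 5.2 6.8 9.1 11.3 12.6 15.2 16.7 19.1 21.0]';
X1 = [ones(10,1) x1];
X2 = [X1 x2];

sse  = @(X) sum((y - X*(X\y)).^2);     % SSE of the least-squares fit on X
SSE1 = sse(X1);
SSE2 = sse(X2);                        % never larger than SSE1
k    = size(X2,2) - size(X1,2);        % number of added variables
dfE2 = length(y) - size(X2,2);         % error df of the bigger model
MSE2 = SSE2/dfE2;

partialF = ((SSE1 - SSE2)/k)/MSE2;     % "larger - smaller" in the numerator
pv = betainc(dfE2/(dfE2 + k*partialF), dfE2/2, k/2);
fprintf('partial F=%.4f  pv=%.4f\n', partialF, pv);
```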

Comparing Models • Consider Fig 13.11 on p. 608 • Y=unloading time • Model1: X=volume • F=203, PV very small • Model2: X=volume and wt • F=96 and PV still very small

Comparing Models • SSE1=215.6991 • SSE2=215.1867 • So Model2 is better (smaller SSE), but only trivially • MSE2=12.6580 • Partial F = 0.0405 • So the decrease in SSE is not significant at all, even though Model2 is significant • (In part because Model2 includes Model1)

Comparing Models • Recall that the SD of the coefficients can be found • The SD of a coefficient is est(SD) times the square root of the corresponding diagonal element of (X'*X)^(-1) • In Model2 of the example, the 3rd diagonal element = 0.0030 • SD of coeff3 = 0.1961 • Coeff3/SD = 0.2012 = # SD the coefficient is away from zero • (0.2012)^2 = 0.0405 = Partial F
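The arithmetic in this example can be re-checked from the numbers quoted on the slides. Only the rounded values shown there are used, so small rounding differences are expected.

```matlab
% Values as quoted on the slides (rounded)
SSE1 = 215.6991;  SSE2 = 215.1867;  MSE2 = 12.6580;
k = 1;                                   % one added variable (wt)

partialF = ((SSE1 - SSE2)/k)/MSE2;       % about 0.0405
tratio   = 0.2012;                       % Coeff3/SD from the slide
fprintf('partial F = %.4f, (coeff/SD)^2 = %.4f\n', partialF, tratio^2);

% SD of coeff3 rebuilt from the rounded diagonal element 0.0030;
% this comes out close to, not exactly, the quoted 0.1961
sd3 = sqrt(MSE2)*sqrt(0.0030);
fprintf('SD of coeff3 from rounded inputs = %.4f\n', sd3);
```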

Comparing Models • Suppose we have a number of variables to choose from • What set of variables should we use? • Several approaches • Stepwise regression • Step up or step down • Either start from scratch and add variables or start with all variables and delete variables

Comparing Models • Step up method • Fit regression using each variable on its own • If the best model (smallest SSE or largest F) is significant, then continue • Using the variable identified at step1, add all other variables one at a time • Of all these models, consider the one with smallest SSE (or largest F) • Compute partial F to see if this model is better than the single variable

Comparing Models • We can continue until the best variable to add does not have a significant partial F • To be complete, after we have added a variable, we should check to be sure that all the variables in the model are still needed

Comparing Models • One by one, drop each other variable from the model • Compute partial F • If partial F is small, then we can drop this variable • After all variables have been dropped that can be, we can resume adding variables
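The step up procedure described on the last few slides might be sketched like this. The data are invented, the 0.05 cutoff is an assumed significance level, and for brevity the sketch leaves out the "check whether an earlier variable can now be dropped" pass.

```matlab
% Invented data: three candidate predictors in the columns of Z
n = 12;
t = (1:n)';
Z = [t, mod(t,3), cos(t)];
y = 2 + 1.5*Z(:,1) - 3*Z(:,2) + 0.1*sin(7*t);   % the response does not use cos(t)

sse = @(X) sum((y - X*(X\y)).^2);
fpv = @(F,d1,d2) betainc(d2/(d2 + d1*F), d2/2, d1/2);   % upper-tail F probability

chosen = [];                       % indices of variables in the model so far
Xcur   = ones(n,1);                % start with the intercept only
while numel(chosen) < size(Z,2)
    out    = setdiff(1:size(Z,2), chosen);   % candidates not yet in the model
    sseNew = zeros(size(out));
    for j = 1:numel(out)
        sseNew(j) = sse([Xcur Z(:,out(j))]);
    end
    [best, jbest] = min(sseNew);             % best single addition
    dfE = n - size(Xcur,2) - 1;              % error df after the addition
    partialF = (sse(Xcur) - best)/(best/dfE);
    if fpv(partialF, 1, dfE) > 0.05          % not significant: stop adding
        break;
    end
    chosen(end+1) = out(jbest);
    Xcur = [Xcur Z(:,out(jbest))];
end
disp(chosen);
```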

Comparing Models • Recall that when adding single variables, we can find partial F by squaring coeff/SD(coeff) • So, for single variables, we don’t need to compute a large number of models because the partial F’s can be computed in one step from the larger model
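To see this shortcut at work, the sketch below (made-up data) computes the partial F for the last variable two ways: from the two SSEs, and by squaring coeff/SD(coeff) in the larger model.

```matlab
% Made-up data (illustration only)
x1 = (1:10)';
x2 = [3 1 4 1 5 9 2 6 5 3]';
y  = [2.9 5.2 6.8 9.1 11.3 12.6 15.2 16.7 19.1 21.0]';
X1 = [ones(10,1) x1];
X2 = [X1 x2];

% Route 1: compare the two models
sse  = @(X) sum((y - X*(X\y)).^2);
dfE2 = length(y) - size(X2,2);
MSE2 = sse(X2)/dfE2;
partialF = (sse(X1) - sse(X2))/MSE2;

% Route 2: use only the larger model
B      = X2\y;
sdB    = sqrt(MSE2*diag(inv(X2'*X2)));
tratio = B(3)/sdB(3);                  % coeff/SD for the added variable

fprintf('partial F = %.6f, (coeff/SD)^2 = %.6f\n', partialF, tratio^2);
```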

Other Models • Because it allows for multiple “predictors”, MLR is very flexible • We can fit polynomials by including not only X, but other columns for powers of X
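A small sketch of fitting a polynomial by adding a column of squares, using invented data (the names T and yield are placeholders and are not the values from the textbook figure). The partial F then asks whether the quadratic term is worth keeping.

```matlab
% Invented data with a built-in curve (illustration only)
T     = (1:10)';
yield = 1 + 2*T - 0.3*T.^2 + 0.05*cos(3*T);

X1 = [ones(10,1) T];          % linear model
X2 = [X1 T.^2];               % quadratic model: one extra column for T^2

sse  = @(X) sum((yield - X*(X\yield)).^2);
dfE2 = length(yield) - size(X2,2);
partialF = (sse(X1) - sse(X2))/(sse(X2)/dfE2);
B2 = X2\yield;                % intercept, linear and quadratic coefficients
fprintf('partial F for the T^2 term = %.1f\n', partialF);
```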

Other Models • Consider Fig13.7, p 605 • Yield is a fn of Temp • Model1: Temp only • F=162, highly significant • Model2: Temp and Temp^2 • F=326 • Partial F = 29.46 • Conclude that the quadratic model is significantly better than the linear model

Other Models • Would a cubic model work better? • Partial F = 4.5e-4 • So the cubic model is NOT preferred

Other Models • Taylor’s Theorem • “Continuous functions are approximately polynomials” • In Calc, we started with the function and used the fact that the coefficients are related to the derivatives • Here, we do not know the function, but can find (estimate) the coefficients

Other Models • Consider Fig13.15, p. 611 • If we fit y as a linear fn of water and fertilizer, then F<1 • Plot y vs each variable • VERY linear fn of water • Somewhat quadratic fn of fertilizer • Consider a quadratic fn of both (and their product)

Other Models • F is about 17 and pv is near 0 • All partial F’s are large, so should keep all terms in model • Look at coeff’s • Quadratics are neg, so the surface has a local max

Other Models • Solve for max response • Water=6.3753, Fert=11.1667 • Which is within the range of values, but in the lower left corner • (1) We can find a confidence interval on where the max occurs • (2) Because of the cross product term, the optimal fertilizer varies with water
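Solving for the maximum amounts to setting both partial derivatives of the fitted quadratic to zero, which is a 2-by-2 linear system. The sketch below uses hypothetical coefficients (not the fitted values from the textbook data) and an assumed coefficient ordering, and also shows point (2): the best fertilizer level for a given amount of water.

```matlab
% Hypothetical coefficients for
%   y = b0 + b1*w + b2*f + b3*w^2 + b4*f^2 + b5*w*f
b = [10; 4; 6; -0.5; -0.25; 0.1];

g = [b(2); b(3)];                    % linear coefficients
H = [2*b(4)  b(6);                   % matrix of second derivatives
     b(6)    2*b(5)];

opt   = -(H\g);                      % [water; fert] where the gradient is zero
isMax = all(eig(H) < 0);             % both eigenvalues negative => local max

% Optimal fertilizer for a given water level (depends on w through b5)
fertGivenWater = @(w) -(b(3) + b(6)*w)/(2*b(5));
fprintf('water=%.4f  fert=%.4f  max=%d\n', opt(1), opt(2), isMax);
```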

Other Models • Exercises • 1. Consider Fig13.48, p 721. (Fix the line that starts 24.2. The 3rd col should be 10.6.) Is there any evidence of a quadratic relation? • 2. Consider Fig13.49, p. 721. Fit the response model. Comment. Plot y vs yh. What is the est SD of the residuals?

Indicator Variables • For simple regression, if we used an indicator variable, we were doing a 2 sample t test • We can use indicator variables and multiple regression to do ANOVA

Indicator Variables • Return to Fig11.4 on blood flow • Do indicators by: for i=1:max(ndx), y(:,i)=(ndx==i); end • VERY IMPORTANT • If you are going to use the intercept, then you must leave out one column of the indicators (usually the last col)

Indicator Variables • F is the same for regression as for ANOVA • The intercept is the avg of the group that was left out of indicators • The other coefficients are the differences between their avg and the intercept
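A minimal sketch of the indicator approach on invented data with three groups. The indicator matrix is called ind here (an assumed name) so that it does not clash with the response y.

```matlab
% Invented data: group labels and responses (illustration only)
ndx = [1 1 1 2 2 2 3 3 3]';
y   = [4.1 3.9 4.3 5.2 5.0 5.4 6.1 6.3 5.9]';

% One indicator column per group
ind = zeros(length(ndx), max(ndx));
for i = 1:max(ndx)
    ind(:,i) = (ndx==i);
end

% Keep the intercept, so leave out the last indicator column
X = [ones(size(y)) ind(:,1:end-1)];
B = X\y;

% B(1) is the average of the omitted group (group 3);
% B(2), B(3) are the other group averages minus that average
disp(B');    % approximately 6.1  -2.0  -0.9
```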

Indicator Variables • Exercises • Compare the sumstats approach and the regression approach for Fig11.4, Fig11.5 on p. 488, 489

Other ANOVA • Why bother with a second way to solve a problem we already can solve? • The regression approach works easily for other problems • But note that we cannot use regression approach on summary stats

Other ANOVA • Two-way ANOVA • Want to compare Treatments, but the data has another component that we want to control for • Called “Blocks” from the origin in agricultural testing

Other ANOVA • So we have 2 category variables, one for Treatment and one for Blocks • Set up indicators for both and use all these for X • Omit one column from each set

Other ANOVA • We would like to separate the Treatment effect from the Block effect • Use partial F • ANOVA table often includes the change in SS separately for Treatment and Blocks
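The two-way setup can be sketched on an invented, balanced layout with 3 machines, 4 solder methods and 2 replicates. The response values and the helper names ind and ssr are assumptions of this example; the course function multregr is not used.

```matlab
% Invented balanced layout: every machine/solder pair appears twice
[m, s, r] = ndgrid(1:3, 1:4, 1:2);
m = m(:);  s = s(:);  r = r(:);
y = 10 + 0.5*m + 2*s + 0.3*sin(5*m + 3*s + 7*r);   % made-up response

ind = @(g) double(bsxfun(@eq, g, 1:max(g)));       % one indicator column per level
i1 = ind(m);  i1 = i1(:,1:end-1);                  % machines, one column omitted
i2 = ind(s);  i2 = i2(:,1:end-1);                  % solder, one column omitted

one = ones(size(y));
ssr = @(x) sum(([one x]*([one x]\y) - mean(y)).^2); % regression SS for predictors x

SSRboth = ssr([i1 i2]);
SSRmach = ssr(i1);
SSRsold = ssr(i2);
SSE = sum((y - mean(y)).^2) - SSRboth;             % SSE from the model with both
dfE = length(y) - 1 - size(i1,2) - size(i2,2);
MSE = SSE/dfE;

% Partial F for each factor = change in SS per df, over MSE of the full model
Fsold = ((SSRboth - SSRmach)/size(i2,2))/MSE;
Fmach = ((SSRboth - SSRsold)/size(i1,2))/MSE;
fprintf('F(solder)=%.2f  F(machine)=%.2f\n', Fsold, Fmach);
```

Because the layout is balanced, the change in SS from adding one factor equals that factor's SSR on its own, which matches the pattern in the slide output that follows.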

Other ANOVA • Consider Fig 14.4 on p 640 • 3 machines and 4 solder methods • Problem doesn’t tell us which is Treatment and which is Blocks, so we’ll let machines be Treatments

Other ANOVA • >> x=[i1 i2]; • >> [b,f,pv,aov,invxtx]=multregr(x,y);aov • aov = • 60.6610 5.0000 12.1322 13.9598 • 26.0725 30.0000 0.8691 0.2425 • This is for both sets of indicators

Other ANOVA • For just machine • >> x=[i1]; • >> [b,f,pv,aov,invxtx]=multregr(x,y);aov • aov = • 1.8145 2.0000 0.9073 0.3526 • 84.9189 33.0000 2.5733 0.5805 • Change in SS is 60.6610 - 1.8145 = 58.8465 when we use Solder as well

Other ANOVA • For just solder • >> x=[i2]; • >> [b,f,pv,aov,invxtx]=multregr(x,y);aov • aov = • 58.8465 3.0000 19.6155 22.5085 • 27.8870 32.0000 0.8715 0.1986 • SSR is the same as the previous difference • If we list them separately, then we use SSE for model with both vars so that it will properly add up to SSTotal

Interaction • The effect of Solder may not be the same for each Machine • This is called “interaction” where a combination may not be the sum of the parts • We can measure interaction by using a product of the indicator variables • Need all possible products (2*3 in this case)

Interaction • Including interaction • >> [b,f,pv,aov,invxtx]=multregr(x,y);aov • aov = • 64.6193 11.0000 5.8745 6.3754 • 22.1142 24.0000 0.9214 0.3144 • We can subtract to get the SS for Interaction • 64.6193 - 60.6610 = 3.9583 • See ANOVA table on p 654
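One way to build the interaction columns is to multiply every machine indicator by every solder indicator. The sketch below does this on an invented balanced layout (made-up response, hypothetical helper names) and gets the SS for interaction by subtraction, as on the slide.

```matlab
% Invented balanced layout: 3 machines, 4 solder methods, 2 replicates
[m, s, r] = ndgrid(1:3, 1:4, 1:2);
m = m(:);  s = s(:);  r = r(:);
y = 10 + 0.5*m + 2*s + 0.4*(m==2).*(s==3) + 0.3*sin(5*m + 3*s + 7*r);

ind = @(g) double(bsxfun(@eq, g, 1:max(g)));
i1 = ind(m);  i1 = i1(:,1:end-1);        % 2 machine columns
i2 = ind(s);  i2 = i2(:,1:end-1);        % 3 solder columns

% All products of a machine column with a solder column (2*3 = 6 columns)
i12 = [];
for a = 1:size(i1,2)
    for b = 1:size(i2,2)
        i12 = [i12, i1(:,a).*i2(:,b)];
    end
end

one = ones(size(y));
ssr = @(x) sum(([one x]*([one x]\y) - mean(y)).^2);
SSRmain = ssr([i1 i2]);                  % main effects only
SSRfull = ssr([i1 i2 i12]);              % main effects plus interaction
SSint   = SSRfull - SSRmain;             % SS for interaction
fprintf('SS interaction = %.4f on %d df\n', SSint, size(i12,2));
```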

Interaction • We can do interaction between categorical variables and quantitative variables • Allows for different slopes for different categories • Can also add an indicator, which allows for different intercepts for different categories • With this approach, we are assuming a single SD for the e’s in all the models • May or may not be a good idea

ANACOVA • We can do regression with a combination of categorical and quantitative variables • The quantitative variable is sometimes called a co-variate • Suppose we want to see if test scores vary among different groups • But the diff groups may come from diff backgrounds which would affect their scores • Use some measure of background (quantitative) in the regression

ANACOVA • Then the partial F for the category variable, after starting with the quantitative variable, will measure the diff among groups after correcting for background

ANACOVA • Suppose we want to know if mercury levels in fish vary among 4 locations • We catch some fish in each location and measure Hg • But the amount of Hg could depend on size (which indicates age), so we also measure that • Then we regress on both Size and the indicators for Location • If partial F for Location is large then we say that Location matters, after correcting for Size
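The mercury example might be set up as follows. All numbers are invented for illustration (4 locations, 4 fish each), and the names loc, sz and hg are assumptions; the partial F compares the Size-only model with the model that also has the Location indicators.

```matlab
% Invented data: location label, fish size, and Hg level
loc = [1 1 1 1 2 2 2 2 3 3 3 3 4 4 4 4]';
sz  = [20 25 30 35 22 27 31 36 19 24 29 33 21 26 32 34]';
hg  = [0.31 0.38 0.47 0.52 0.42 0.50 0.55 0.64 ...
       0.30 0.36 0.45 0.50 0.52 0.58 0.69 0.71]';

% Indicators for Location, omitting the last column
ind = double(bsxfun(@eq, loc, 1:max(loc)));
ind = ind(:,1:end-1);

X1 = [ones(size(hg)) sz];          % Size only (the covariate)
X2 = [X1 ind];                     % Size plus Location

sse  = @(X) sum((hg - X*(X\hg)).^2);
k    = size(ind,2);                % 3 added columns
dfE2 = length(hg) - size(X2,2);
partialF = ((sse(X1) - sse(X2))/k)/(sse(X2)/dfE2);
pv = betainc(dfE2/(dfE2 + k*partialF), dfE2/2, k/2);   % upper-tail F probability
fprintf('partial F for Location = %.2f, pv = %.4g\n', partialF, pv);
```

A large partial F (small pv) would suggest that Location matters after correcting for Size.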