Using Inaccurate Models in Reinforcement Learning

Pieter Abbeel, Morgan Quigley and Andrew Y. Ng, Stanford University


Overview
• Reinforcement learning in high-dimensional continuous state spaces.
• Model-based RL: it is difficult to build an accurate model.
• Model-free RL: often requires a large number of real-life trials.
• We present a hybrid algorithm that requires only:
  • an approximate model, and
  • a small number of real-life trials.
• The resulting policy is (locally) near-optimal.
• Experiments on a flight simulator and a real RC car.

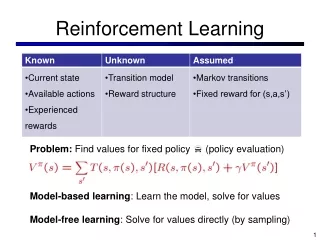

Reinforcement learning formalism
• Markov decision process (MDP): $M = (S, A, T, H, s_0, R)$.
  • $S = \mathbb{R}^n$ (continuous state space).
  • Time-varying, deterministic dynamics: $T = \{ f_t : S \times A \to S,\ t = 0, \ldots, H \}$.
• Goal: find a policy $\pi : S \to A$ that maximizes $U(\pi) = \mathrm{E}\left[ \sum_{t=0}^{H} R(s_t) \,\middle|\, \pi \right]$.
• Focus: the task of trajectory following.
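To make the formalism concrete, here is a minimal Python sketch, assuming states and actions are plain lists of floats and the policy is stationary. `rollout` and `utility` are hypothetical helper names, not from the slides. Because the dynamics are deterministic and $s_0$ is fixed, the expectation in $U(\pi)$ reduces to a sum along a single trajectory. The slide indexes $T$ by $t = 0, \ldots, H$; the sketch takes only the maps $f_0, \ldots, f_{H-1}$ needed to produce $s_1, \ldots, s_H$.

```python
from typing import Callable, List, Sequence

State = List[float]
Action = List[float]
Dynamics = Callable[[State, Action], State]  # one map f_t : S x A -> S
Policy = Callable[[State], Action]           # pi : S -> A
Reward = Callable[[State], float]            # R : S -> real numbers


def rollout(fs: Sequence[Dynamics], pi: Policy, s0: State) -> List[State]:
    """Return [s_0, ..., s_H] with s_{t+1} = f_t(s_t, pi(s_t)).

    fs holds f_0, ..., f_{H-1}, one map per transition.
    """
    states = [list(s0)]
    for f_t in fs:
        s_t = states[-1]
        states.append(f_t(s_t, pi(s_t)))
    return states


def utility(fs: Sequence[Dynamics], pi: Policy, s0: State, reward: Reward) -> float:
    """U(pi) = sum_{t=0}^{H} R(s_t) along the single deterministic trajectory."""
    return sum(reward(s) for s in rollout(fs, pi, s0))


# Usage (hypothetical 1-D system): s_{t+1} = s_t + a_t, a_t = -0.5 s_t, H = 3.
def step(s: State, a: Action) -> State:
    return [s[0] + a[0]]


def damp(s: State) -> Action:
    return [-0.5 * s[0]]


def stay_at_zero(s: State) -> float:
    return -s[0] ** 2  # quadratic penalty for deviating from the target state 0


print(utility([step, step, step], damp, [1.0], stay_at_zero))  # -1.328125
```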

Motivating Example
• A student driver learning to make a 90-degree right turn:
  • needs only a few trials;
  • has no accurate model.
• The student driver has access to:
  • a real-life trial;
  • a crude model.
• Result: a good policy gradient estimate.

Algorithm Idea
• Input to the algorithm: an approximate model.
• Start by computing the optimal policy according to the model.
[Figure: real-life trajectory and target trajectory]
• The policy is optimal according to the model, so no further improvement is possible based on the model alone.

Algorithm Idea (2)
• Update the model such that it becomes exact for the current policy.


Algorithm Idea (2)
• The updated model perfectly predicts the state sequence obtained under the current policy.
• We can use the updated model to find an improved policy.
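One simple way to make a model exact for the current policy, assumed here for illustration, is to add a time-indexed bias to each transition: $f'_t(s, a) = f_t(s, a) + (s_{t+1} - f_t(s_t, a_t))$, where $(s_t, a_t, s_{t+1})$ are recorded during the real trial. At each recorded state-action pair the corrected model returns the recorded next state, so rolling out the same deterministic policy from $s_0$ reproduces the real trajectory. Because the bias does not depend on $(s, a)$, the corrected model keeps the derivatives of the original model. `correct_model` and the toy dynamics below are hypothetical.

```python
from typing import Callable, List, Sequence

State = List[float]
Action = List[float]
Dynamics = Callable[[State, Action], State]


def correct_model(model_fs: Sequence[Dynamics],
                  real_states: Sequence[State],
                  real_actions: Sequence[Action]) -> List[Dynamics]:
    """Return f'_t(s, a) = f_t(s, a) + (s_{t+1} - f_t(s_t, a_t)) for each t.

    Assumption: an additive, time-indexed bias is the correction used here.
    """
    corrected = []
    for t, f_t in enumerate(model_fs):
        predicted = f_t(real_states[t], real_actions[t])
        bias = [r - p for r, p in zip(real_states[t + 1], predicted)]

        # Default arguments freeze f_t and bias for this particular time step.
        def f_new(s: State, a: Action, f_t=f_t, bias=bias) -> State:
            return [x + b for x, b in zip(f_t(s, a), bias)]

        corrected.append(f_new)
    return corrected


def true_f(s: State, a: Action) -> State:
    return [s[0] + 0.75 * a[0]]   # hypothetical "real" system, unknown to the learner


def model_f(s: State, a: Action) -> State:
    return [s[0] + a[0]]          # crude model: overestimates the effect of a


def pi(s: State) -> Action:
    return [-0.5 * s[0]]


# Step 2: one real trial with H = 2.
states, actions = [[1.0]], []
for _ in range(2):
    actions.append(pi(states[-1]))
    states.append(true_f(states[-1], actions[-1]))

# Step 3: correct the model, then simulate the same policy in it.
fixed = correct_model([model_f, model_f], states, actions)
sim = [[1.0]]
for f in fixed:
    sim.append(f(sim[-1], pi(sim[-1])))
print(sim == states)  # True
```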

Algorithm
1. Find the (locally) optimal policy $\pi_\theta$ for the model.
2. Execute the current policy $\pi_\theta$ and record the state trajectory.
3. Update the model such that the new model is exact for the current policy $\pi_\theta$.
4. Use the new model to estimate the policy gradient $\nabla_\theta U(\theta)$ and update the policy: $\theta := \theta + \alpha \nabla_\theta U(\theta)$.
5. Go back to Step 2.
Notes:
• The step-size parameter $\alpha$ is determined by a line search.
• Instead of the policy gradient, any algorithm that provides a local policy improvement direction can be used. In our experiments we used differential dynamic programming.
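The five steps can be sketched on a hypothetical one-dimensional trajectory-following problem. Everything concrete here is an assumption for illustration, not the authors' setup: the true and approximate dynamics, the open-loop policy parameterization $\theta = (a_0, \ldots, a_{H-1})$, the additive time-indexed bias used in Step 3, finite-difference gradients in place of an analytic policy gradient or DDP, and a backtracking line search that spends one real trial per candidate step size.

```python
from typing import Callable, List, Tuple

# Hypothetical toy problem: scalar state, open-loop policy theta = (a_0..a_{H-1}),
# target trajectory r_1..r_H.
H = 5
TARGET = [0.2 * (t + 1) for t in range(H)]

Step = Callable[[int, float, float], float]  # (t, s_t, a_t) -> s_{t+1}


def true_step(t: int, s: float, a: float) -> float:
    return s + 0.75 * a + 0.1   # the "real" system; unknown to the learner


def crude_step(t: int, s: float, a: float) -> float:
    return s + a                # approximate model: wrong gain, no drift


def rollout(step: Step, theta: List[float], s0: float = 0.0) -> List[float]:
    states = [s0]
    for t in range(H):
        states.append(step(t, states[-1], theta[t]))
    return states


def score(states: List[float]) -> float:
    # Quadratic trajectory-following reward: -sum_{t=1}^{H} (s_t - r_t)^2.
    return -sum((s - r) ** 2 for s, r in zip(states[1:], TARGET))


def utility(step: Step, theta: List[float]) -> float:
    return score(rollout(step, theta))


def model_gradient(step: Step, theta: List[float], eps: float = 1e-5) -> List[float]:
    # Central finite differences of the utility predicted by the given model.
    grad = []
    for k in range(len(theta)):
        up = theta[:k] + [theta[k] + eps] + theta[k + 1:]
        down = theta[:k] + [theta[k] - eps] + theta[k + 1:]
        grad.append((utility(step, up) - utility(step, down)) / (2 * eps))
    return grad


def corrected_model(step: Step, real: List[float], theta: List[float]) -> Step:
    # Time-indexed bias so the model reproduces the recorded real trajectory.
    bias = [real[t + 1] - step(t, real[t], theta[t]) for t in range(H)]
    return lambda t, s, a: step(t, s, a) + bias[t]


def run(iterations: int = 10) -> Tuple[List[float], int]:
    # Step 1: optimal policy for the crude model (closed form for this toy model).
    theta = [TARGET[0]] + [TARGET[t] - TARGET[t - 1] for t in range(1, H)]
    trials = 0
    for _ in range(iterations):                           # Step 5: repeat
        real = rollout(true_step, theta)                   # Step 2: real trial
        trials += 1
        model = corrected_model(crude_step, real, theta)   # Step 3
        grad = model_gradient(model, theta)                # Step 4: direction
        u_now = score(real)
        alpha = 1.0
        while alpha > 1e-4:       # backtracking line search using real trials
            candidate = [th + alpha * g for th, g in zip(theta, grad)]
            trials += 1
            if utility(true_step, candidate) > u_now:
                theta = candidate
                break
            alpha /= 2
    return theta, trials


theta, trials = run()
print(utility(true_step, theta), trials)
```

In this toy, the corrected model's gradient is a positive multiple of the true gradient because the model has the right sign of $\partial f / \partial a$ and is evaluated along the real trajectory; with a less favorable model the direction would only be approximately right.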

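Performance Guarantees: Intuition
• Exact policy gradient vs. model-based policy gradient: the model-based estimate differs from the exact one in two ways.
  • Its derivatives are evaluated along the wrong trajectory (the one the model predicts, not the one actually followed).
  • It uses the derivatives of the approximate transition function instead of the true one.
• Our algorithm eliminates one of these two sources of error: after the model update, the model's predicted trajectory coincides with the real one.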
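For deterministic dynamics, a policy $\pi_\theta$ with parameters $\theta$, a fixed $s_0$, and $a_t = \pi_\theta(s_t)$, the chain rule makes both error sources visible. The following is a sketch in the slides' notation; $\theta$ and the hats marking model-predicted quantities ($\hat f_t$, $\hat s_t$, $\hat a_t$, $\hat U$) are assumed notation.

```latex
\begin{align*}
\frac{dU}{d\theta} &= \sum_{t=0}^{H} \frac{\partial R}{\partial s}(s_t)\,\frac{ds_t}{d\theta},
  \qquad \frac{ds_0}{d\theta} = 0, \\
\frac{ds_{t+1}}{d\theta} &= \frac{\partial f_t}{\partial s}(s_t, a_t)\,\frac{ds_t}{d\theta}
  + \frac{\partial f_t}{\partial a}(s_t, a_t)
    \left( \frac{\partial \pi_\theta}{\partial s}(s_t)\,\frac{ds_t}{d\theta}
         + \frac{\partial \pi_\theta}{\partial \theta}(s_t) \right), \\
\frac{d\hat{U}}{d\theta} &= \sum_{t=0}^{H} \frac{\partial R}{\partial s}(\hat{s}_t)\,\frac{d\hat{s}_t}{d\theta},
  \qquad \frac{d\hat{s}_0}{d\theta} = 0, \\
\frac{d\hat{s}_{t+1}}{d\theta} &= \frac{\partial \hat{f}_t}{\partial s}(\hat{s}_t, \hat{a}_t)\,\frac{d\hat{s}_t}{d\theta}
  + \frac{\partial \hat{f}_t}{\partial a}(\hat{s}_t, \hat{a}_t)
    \left( \frac{\partial \pi_\theta}{\partial s}(\hat{s}_t)\,\frac{d\hat{s}_t}{d\theta}
         + \frac{\partial \pi_\theta}{\partial \theta}(\hat{s}_t) \right).
\end{align*}
```

Evaluating at $\hat s_t$ rather than $s_t$ is the "wrong trajectory" error; using $\partial \hat f_t$ in place of $\partial f_t$ is the "approximate transition function" error. Once the model is exact for the current policy, $\hat s_t = s_t$ and $\hat a_t = a_t$, so only the second error remains.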

Performance Guarantees
• Let the local policy improvement algorithm be policy gradient.
Notes:
• These assumptions are insufficient to give the same performance guarantees for model-based RL.
• The constant K depends only on the dimensionality of the state, the action, and the policy parameters ($\theta$), the horizon H, and upper bounds on the first and second derivatives of the transition model, the policy, and the reward function.

Experiments
• We use differential dynamic programming (DDP) to find control policies in the model.
• Two systems:
  • Flight simulator
  • RC car
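Full DDP repeatedly linearizes the dynamics and quadratizes the cost around the current trajectory. In the special case where the dynamics are already linear and the cost quadratic, its backward pass reduces to the finite-horizon LQR Riccati recursion. The sketch below shows only that special case for a hypothetical scalar system $s_{t+1} = A s_t + B a_t$; the function name and the weights `q` (state cost) and `r` (control cost, not the reward function $R$) are illustrative assumptions, not the authors' implementation.

```python
from typing import List


def lqr_gains(A: float, B: float, q: float, r: float, H: int) -> List[float]:
    """Finite-horizon scalar LQR gains K_0, ..., K_{H-1}, with a_t = -K_t * s_t.

    Minimizes sum_{t=0}^{H-1} (q s_t^2 + r a_t^2) + q s_H^2
    subject to s_{t+1} = A s_t + B a_t.
    """
    P = q                  # cost-to-go at t = H: V_H(s) = P s^2
    gains = []
    for _ in range(H):     # backward pass: t = H-1, ..., 0
        K = (B * P * A) / (r + B * P * B)    # minimizer of r a^2 + P (A s + B a)^2
        P = q + A * P * A - A * P * B * K    # Riccati update: V_t(s) = P s^2
        gains.append(K)
    gains.reverse()        # gains[t] is the gain to apply at time t
    return gains


print(lqr_gains(1.0, 1.0, 1.0, 1.0, 2))  # [0.6, 0.5]
```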

Flight Simulator Setup
• The flight simulator model has 43 parameters (mass, inertia, drag coefficients, lift coefficients, etc.).
• We generated “approximate models” by randomly perturbing these parameters.
• All 4 standard fixed-wing control actions: throttle, ailerons, elevators, and rudder.
• Our reward function quadratically penalizes deviation from the desired trajectory.
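The two ingredients of this setup can be sketched as follows: perturbing each model parameter to obtain an "approximate model", and a reward that quadratically penalizes deviation from the desired trajectory. The slides do not say how the perturbation or the weighting was done, so the multiplicative uniform noise (`rel_noise`), the parameter names and values, and the per-coordinate weights are all hypothetical.

```python
import random
from typing import Dict, Sequence


def perturb_parameters(params: Dict[str, float], rel_noise: float,
                       rng: random.Random) -> Dict[str, float]:
    """Scale each parameter by (1 + u), u ~ Uniform(-rel_noise, rel_noise).

    Assumption: the slides only say the parameters were randomly perturbed.
    """
    return {name: value * (1.0 + rng.uniform(-rel_noise, rel_noise))
            for name, value in params.items()}


def tracking_reward(state: Sequence[float], desired: Sequence[float],
                    weights: Sequence[float]) -> float:
    """R(s_t) = -sum_i w_i (s_i - s*_i)^2: quadratic penalty on deviation."""
    return -sum(w * (s - d) ** 2 for s, d, w in zip(state, desired, weights))


true_params = {"mass": 5.0, "drag_coeff": 0.3}   # hypothetical values
approx = perturb_parameters(true_params, 0.2, random.Random(0))
print(tracking_reward([1.0, 2.0], [1.5, 2.0], [2.0, 1.0]))  # -0.5
```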

Flight Simulator Results
• 76% utility improvement over the model-based approach.
[Figure: desired trajectory, model-based controller, and our algorithm]

RC Car Setup
• Control actions: throttle and steering.
• Low-speed dynamics model with state variables:
  • position, velocity, heading, heading rate.
• Model estimated from 30 minutes of data.
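The slides do not say what form the RC car model takes or how it was fit. As one illustration only, assume a linear model for a single state variable, the heading rate: $\omega_{t+1} = a\,\omega_t + b\,\delta_t$, where $\delta_t$ is the steering command. Its coefficients can then be estimated from logged data by ordinary least squares. The model form, the function name, and the synthetic data below are all hypothetical.

```python
import random
from typing import Sequence, Tuple


def fit_heading_rate_model(omega: Sequence[float],
                           steer: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of omega[t+1] ~ a * omega[t] + b * steer[t]."""
    x = omega[:-1]
    u = steer[:len(x)]
    y = omega[1:]
    # Normal equations for the 2-parameter linear regression.
    sxx = sum(v * v for v in x)
    suu = sum(v * v for v in u)
    sxu = sum(p * q for p, q in zip(x, u))
    sxy = sum(p * q for p, q in zip(x, y))
    suy = sum(p * q for p, q in zip(u, y))
    det = sxx * suu - sxu * sxu
    if abs(det) < 1e-12:
        raise ValueError("data not rich enough to identify both coefficients")
    a = (suu * sxy - sxu * suy) / det
    b = (sxx * suy - sxu * sxy) / det
    return a, b


# Synthetic, noise-free log generated with a = 0.9, b = 0.5 (hypothetical).
rng = random.Random(1)
steer = [rng.uniform(-1.0, 1.0) for _ in range(200)]
omega = [0.0]
for d in steer[:-1]:
    omega.append(0.9 * omega[-1] + 0.5 * d)

a, b = fit_heading_rate_model(omega, steer)
print(round(a, 6), round(b, 6))
```

With real, noisy logs the fitted coefficients would only approximate the true response, which is exactly the kind of crude model the algorithm is designed to tolerate.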

Related Work
• Iterative learning control:
  • Uchiyama (1978), Longman et al. (1992), Moore (1993), Horowitz (1993), Bien et al. (1991), Owens et al. (1995), Chen et al. (1997), …
• Successful robot control with a limited number of trials:
  • Atkeson and Schaal (1997), Morimoto and Doya (2001).
• Robust control theory:
  • Zhou et al. (1995), Dullerud and Paganini (2000), …
  • Bagnell et al. (2001), Morimoto and Atkeson (2002), …

Conclusion
• We presented an algorithm that uses a crude model and a small number of real-life trials to find a policy that works well in real life.
• Our theoretical results show that, assuming a deterministic setting and a reasonable model, our algorithm returns a policy that is (locally) near-optimal.
• Our experiments show that our algorithm can significantly improve on purely model-based RL using only a small number of real-life trials, even when the true system is not deterministic.

Motivating Example
• A student driver learning to make a 90-degree right turn:
  • needs only a few trials;
  • has no accurate model.
• Key aspects:
  • Real-life trial: shows whether the turn is wide or short.
  • Crude model: turning the steering wheel more to the right results in a sharper turn; turning it more to the left results in a wider turn.
• Result: a good policy gradient estimate.
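The claim that a real trial plus a crude model yields a good gradient estimate can be checked numerically on a hypothetical turn model. Suppose the true turn radius responds to the steering angle $\delta$ as $10 / (1 + 2\delta)$, while the crude model only knows that more right steering gives a sharper turn, with a constant slope of $-4$. For the utility $-(\text{radius} - \text{target})^2$, the real trial supplies the error (wide or short) and the crude model supplies the slope; the resulting estimate has the same sign as the true gradient even though the model's slope is wrong. All functions and numbers here are illustrative assumptions.

```python
def true_radius(steer: float) -> float:
    return 10.0 / (1.0 + 2.0 * steer)   # hypothetical "real car", unknown to the driver


def model_radius_slope(steer: float) -> float:
    return -4.0                          # crude model: more right steer -> sharper turn


def gradient_estimate(steer: float, target: float) -> float:
    measured = true_radius(steer)        # real-life trial: was the turn wide or short?
    # d/d(steer) of -(radius - target)^2, using the measured radius and the model slope.
    return -2.0 * (measured - target) * model_radius_slope(steer)


def true_gradient(steer: float, target: float) -> float:
    slope = -20.0 / (1.0 + 2.0 * steer) ** 2   # exact derivative of true_radius
    return -2.0 * (true_radius(steer) - target) * slope


# Turn too wide (radius 5 vs. target 4): both say "steer more to the right".
print(gradient_estimate(0.5, 4.0), true_gradient(0.5, 4.0))  # 8.0 10.0
```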