GPU-Accelerated HMM for Speech Recognition


Presentation Transcript

HUCAA 2014: GPU-Accelerated HMM for Speech Recognition. Leiming Yu, Yash Ukidave and David Kaeli, ECE, Northeastern University

Outline • Background & Motivation • HMM • GPGPU • Results • Future Work

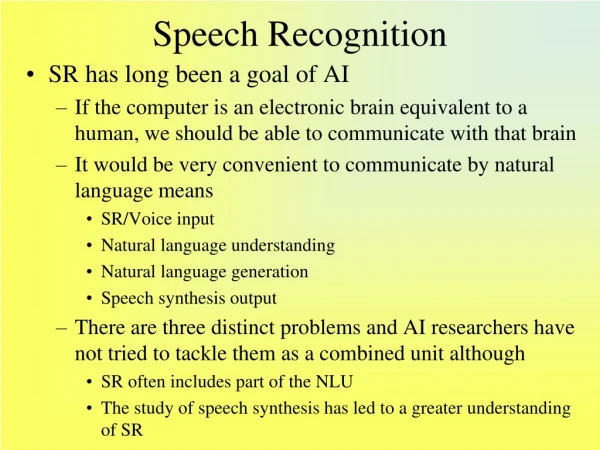

Background • Translate speech to text • Speaker dependent / speaker independent • Applications * Natural language processing * Home automation * In-car voice control * Speaker verification * Automated banking * Personal intelligent assistants (Apple Siri, Samsung S Voice) * etc. [http://www.kecl.ntt.co.jp]

DTW (Dynamic Time Warping): A template-based approach to measuring the similarity between two temporal sequences that may vary in time or speed. [opticalengineering.spiedigitallibrary.org]

DTW (Dynamic Time Warping) Pros: 1) handles timing variation 2) recognizes speech at reasonable cost. Cons: 1) choosing the templates 2) end-point detection (VAD, acoustic noise) 3) words with weak fricatives, whose energy is close to the acoustic background. Recurrence, with DTW[0, 0] := 0 and DTW[i, 0] := DTW[0, j] := ∞ for i, j ≥ 1:
For i := 1 to n
    For j := 1 to m
        cost := D(s[i], t[j])
        DTW[i, j] := cost + minimum(DTW[i-1, j], DTW[i, j-1], DTW[i-1, j-1])
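The DTW recurrence can be made runnable as follows. This is a minimal sketch, assuming 1-D feature sequences and an absolute-difference local distance for D; real recognizers compare multi-dimensional acoustic feature vectors. The names `dtw_distance`, `template` and `slow_utterance` are illustrative only.

```python
import math


def dtw_distance(s, t, dist=lambda p, q: abs(p - q)):
    """Dynamic time warping cost between sequences s and t.

    dist plays the role of D(s[i], t[j]); absolute difference is an
    assumed local distance suitable only for 1-D features.
    """
    n, m = len(s), len(t)
    # table[i][j]: cost of the best alignment of s[:i] with t[:j].
    # Row 0 and column 0 are boundary cells: 0 at the origin, infinity elsewhere.
    table = [[math.inf] * (m + 1) for _ in range(n + 1)]
    table[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = dist(s[i - 1], t[j - 1])
            table[i][j] = cost + min(table[i - 1][j],      # advance in s only
                                     table[i][j - 1],      # advance in t only
                                     table[i - 1][j - 1])  # advance in both
    return table[n][m]


template = [1, 2, 3, 3, 2]
slow_utterance = [1, 1, 2, 3, 3, 3, 2]  # same shape, spoken more slowly
print(dtw_distance(template, slow_utterance))  # prints 0.0
```

The slower utterance warps onto the template at zero cost, which is the "handles timing variation" property; the O(n·m) table is also why template choice and count drive the cost.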

Neural Networks Algorithms that mimic the brain. Simplified interpretation: * takes a set of input features * passes them through a set of hidden layers * produces posterior probabilities as the output

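Neural Networks Multi-class example with output units for pedestrian, car, bike and parking meter: for a pedestrian input, only the pedestrian output unit should be active. * a_i^(j): "activation" of unit i in layer j * Θ^(j): matrix of weights controlling the function mapping from layer j to layer j + 1 [Machine Learning, Coursera]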
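A forward pass in the cited course's notation, where a^(j) is the activation vector of layer j and Θ^(j) maps layer j to layer j + 1, can be sketched as below. This is an illustrative sketch, not the authors' network: the sigmoid hidden layers follow the course, while the softmax output (so the outputs read as posteriors) and all weights are assumptions.

```python
import math


def sigmoid(z):
    """Logistic activation g(z) = 1 / (1 + e^-z)."""
    return 1.0 / (1.0 + math.exp(-z))


def forward_propagate(x, thetas):
    """Compute a^(j+1) = g(Theta^(j) [1; a^(j)]) layer by layer.

    thetas[j][k] is row k of Theta^(j): a bias weight followed by one
    weight per unit of layer j. Hidden layers use the sigmoid g; the
    output layer uses softmax (an assumption) so the outputs sum to 1.
    """
    a = list(x)
    for layer, theta in enumerate(thetas):
        # row[0] multiplies the bias unit; the rest multiply a^(j)
        z = [row[0] + sum(w * v for w, v in zip(row[1:], a)) for row in theta]
        if layer < len(thetas) - 1:
            a = [sigmoid(v) for v in z]
        else:
            top = max(z)  # subtract the max before exp for numerical stability
            e = [math.exp(v - top) for v in z]
            total = sum(e)
            a = [v / total for v in e]
    return a


labels = ["pedestrian", "car", "bike", "parking meter"]
# Hypothetical weights: 2 input features -> 2 hidden units -> 4 classes.
theta1 = [[0.1, 0.8, -0.4],
          [-0.2, 0.3, 0.9]]
theta2 = [[0.5, 2.0, -1.0],
          [0.0, -1.0, 1.5],
          [0.1, 0.2, 0.2],
          [-0.3, 0.1, -0.1]]
posteriors = forward_propagate([0.6, 0.2], [theta1, theta2])
print(labels[posteriors.index(max(posteriors))], posteriors)
```

Each layer is a matrix-vector product followed by an elementwise activation, which is the dense, regular work that maps well onto GPUs.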

Neural Networks Equation Example

Neural Networks Example Hint: * effective at recognizing short-time units such as individual phones and isolated words * not ideal for continuous recognition tasks, largely due to their poor ability to model temporal dependencies

Hidden Markov Model In a Hidden Markov Model, * the states are hidden * the outputs, which depend on the states, are visible. Notation: * x: states * y: possible observations * a: state transition probabilities * b: output probabilities [wikipedia]
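With the a (transition) and b (output) probabilities, plus an initial state distribution pi (assumed here; it is not shown on this slide), the joint probability of one hidden state path x and an observation sequence y is a simple product. A minimal sketch for a discrete HMM, using a hypothetical 2-state, 3-symbol model:

```python
def path_probability(states, observations, pi, a, b):
    """Joint probability P(x, y) of one hidden state path x and observations y.

    pi[i]   : initial probability of state i (assumed, not on the slide)
    a[i][j] : transition probability from state i to state j
    b[i][k] : probability that state i emits observation symbol k
    """
    p = pi[states[0]] * b[states[0]][observations[0]]
    for t in range(1, len(states)):
        # Markov property: the next state depends only on the current one,
        # and each observation depends only on the state that emits it.
        p *= a[states[t - 1]][states[t]] * b[states[t]][observations[t]]
    return p


# Hypothetical 2-state model over 3 observation symbols.
pi = [0.6, 0.4]
a = [[0.7, 0.3], [0.4, 0.6]]
b = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
print(path_probability([0, 0, 1], [0, 1, 2], pi, a, b))
```

Because the states are hidden, a recognizer cannot evaluate just one path; it needs the sum over all paths, which is what the forward algorithm computes efficiently.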

Hidden Markov Model The temporal transition of the hidden states fits the nature of phoneme transitions well. Hint: * handles the temporal variability of speech well * Gaussian mixture models (GMMs), controlled by the hidden variables, determine how well an HMM can represent the acoustic input * can be hybridized with NNs to leverage the strengths of each modeling technique
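In a GMM-HMM, each hidden state j scores an acoustic feature vector x with a weighted sum of Gaussian densities, b_j(x) = Σ_m c_m N(x; μ_m, Σ_m). A minimal sketch, assuming diagonal covariances (a common simplification, not necessarily the authors' choice) and log-domain arithmetic; the mixture parameters below are hypothetical:

```python
import math


def log_gaussian_diag(x, mean, var):
    """log N(x; mean, diag(var)) for a D-dimensional feature vector x."""
    log_det = sum(math.log(v) for v in var)
    mahalanobis = sum((xi - mi) ** 2 / vi for xi, mi, vi in zip(x, mean, var))
    return -0.5 * (len(x) * math.log(2 * math.pi) + log_det + mahalanobis)


def gmm_log_likelihood(x, weights, means, variances):
    """log b_j(x) = log sum_m c_m N(x; mu_m, Sigma_m) for one HMM state j.

    Uses log-sum-exp so that tiny component densities do not underflow.
    Mixture weights must be positive and sum to 1.
    """
    terms = [math.log(c) + log_gaussian_diag(x, mu, var)
             for c, mu, var in zip(weights, means, variances)]
    top = max(terms)
    return top + math.log(sum(math.exp(t - top) for t in terms))


# Hypothetical 2-component mixture over 2-D features for a single state.
frame = [0.3, -1.2]
print(gmm_log_likelihood(frame,
                         weights=[0.4, 0.6],
                         means=[[0.0, -1.0], [1.0, 0.5]],
                         variances=[[1.0, 0.5], [0.8, 2.0]]))
```

Every (frame, state, component) triple is independent, which is one reason GMM scoring is a popular target for GPU offload.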

Motivation • Parallel architectures: from multi-core CPUs to many-core GPUs (graphics + general purpose) • Massive parallelism in speech recognition systems: neural networks, HMMs, etc. are both computation- and memory-intensive • GPGPU evolution * Dynamic Parallelism * Concurrent Kernel Execution * Hyper-Q * Device Partitioning * Virtual Memory Addressing * GPU-GPU Data Transfer, etc. • Previous works • Our goal is to use new, modern GPU features to accelerate speech recognition


Hidden Markov Model Markov chains and processes are named after Andrey Andreyevich Markov (1856-1922), a Russian mathematician whose doctoral advisor was Pafnuty Chebyshev. In 1966, Leonard Baum described the underlying mathematical theory. In 1989, Lawrence Rabiner wrote a paper giving the most comprehensive description of it.

Hidden Markov Model HMM assumptions * causal transition probabilities between states: the next state depends only on the current state * the observation depends on the current state, not on its predecessor

Hidden Markov Model • Forward • Backward • Expectation-Maximization
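The forward step computes P(O | λ) by summing over all hidden paths with dynamic programming. A minimal, unscaled sketch for a discrete HMM λ = (pi, a, b), using a hypothetical 2-state model; this is the textbook algorithm, not the authors' GPU kernel:

```python
def hmm_forward(observations, pi, a, b):
    """Forward algorithm for a discrete HMM lambda = (pi, a, b).

    alpha[t][j] = P(o_1..o_t, q_t = j | lambda)   (t is 0-based here)
    Returns alpha and P(O | lambda) = sum_j alpha[T-1][j].
    No scaling is applied; long utterances would underflow, so practical
    decoders rescale alpha at every step or work with log probabilities.
    """
    n = len(pi)
    # Initialization: alpha_1(j) = pi_j * b_j(o_1)
    alpha = [[pi[j] * b[j][observations[0]] for j in range(n)]]
    # Induction: alpha_{t+1}(j) = [sum_i alpha_t(i) * a_ij] * b_j(o_{t+1})
    for o in observations[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * a[i][j] for i in range(n)) * b[j][o]
                      for j in range(n)])
    # Termination
    return alpha, sum(alpha[-1])


pi = [0.6, 0.4]
a = [[0.7, 0.3], [0.4, 0.6]]
b = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
alpha, likelihood = hmm_forward([0, 1, 2], pi, a, b)
print(likelihood)
```

The inner sum over i for every j is a vector-matrix product per time step: sequential across time, but parallel across states, which is where a GPU implementation can find its parallelism.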


HMM Backward [Figure: trellis of states i and j across time steps t - 1, t, t + 1, t + 2]
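The backward variable runs the same trellis in reverse: β_t(i) is the probability of the remaining observations given state i at time t. A minimal, unscaled sketch for a discrete HMM with the same hypothetical 2-state model; its termination step must reproduce the forward algorithm's P(O | λ), which makes a handy correctness check:

```python
def hmm_backward(observations, pi, a, b):
    """Backward algorithm for a discrete HMM lambda = (pi, a, b).

    beta[t][i] = P(o_{t+1}..o_T | q_t = i, lambda), with beta[T-1][i] = 1
    (t is 0-based here). Also returns
    P(O | lambda) = sum_i pi_i * b_i(o_1) * beta_1(i).
    """
    n, T = len(pi), len(observations)
    beta = [[1.0] * n for _ in range(T)]
    for t in range(T - 2, -1, -1):
        o_next = observations[t + 1]
        # beta_t(i) = sum_j a_ij * b_j(o_{t+1}) * beta_{t+1}(j)
        beta[t] = [sum(a[i][j] * b[j][o_next] * beta[t + 1][j] for j in range(n))
                   for i in range(n)]
    prob = sum(pi[i] * b[i][observations[0]] * beta[0][i] for i in range(n))
    return beta, prob


pi = [0.6, 0.4]
a = [[0.7, 0.3], [0.4, 0.6]]
b = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
beta, prob = hmm_backward([0, 1, 2], pi, a, b)
print(prob)
```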

HMM-EM Variable definitions: * initial probability * transition probability, observation probability * forward variable, backward variable. Other variables during estimation: * epsilon: the estimated state transition probability matrix * gamma: the estimated probability of being in a particular state at time t * the multivariate normal probability density function, used to update the observation probabilities from the Gaussian mixture models
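Given the forward and backward variables, one EM (Baum-Welch) iteration computes gamma and epsilon and re-estimates the transitions. A minimal sketch, assuming a discrete emission table instead of the GMMs on the slide, so only the transition matrix is updated; the GMM/observation update is omitted and the model is hypothetical:

```python
def forward_backward(obs, pi, a, b):
    """Unscaled forward and backward variables plus P(O | lambda)."""
    n, T = len(pi), len(obs)
    alpha = [[pi[i] * b[i][obs[0]] for i in range(n)]]
    for t in range(1, T):
        alpha.append([sum(alpha[t - 1][i] * a[i][j] for i in range(n)) * b[j][obs[t]]
                      for j in range(n)])
    beta = [[1.0] * n for _ in range(T)]
    for t in range(T - 2, -1, -1):
        beta[t] = [sum(a[i][j] * b[j][obs[t + 1]] * beta[t + 1][j] for j in range(n))
                   for i in range(n)]
    return alpha, beta, sum(alpha[T - 1])


def reestimate_transitions(obs, pi, a, b):
    """One Baum-Welch (EM) re-estimation of the transition matrix.

    epsilon[t][i][j] = P(q_t = i, q_{t+1} = j | O, lambda)
    gamma[t][i]      = P(q_t = i | O, lambda)
    new a[i][j]      = sum_t epsilon[t][i][j] / sum_t gamma[t][i], over t < T - 1
    Assumes every state has nonzero occupancy (otherwise the ratio is 0/0).
    """
    n, T = len(pi), len(obs)
    alpha, beta, prob = forward_backward(obs, pi, a, b)
    gamma = [[alpha[t][i] * beta[t][i] / prob for i in range(n)] for t in range(T)]
    epsilon = [[[alpha[t][i] * a[i][j] * b[j][obs[t + 1]] * beta[t + 1][j] / prob
                 for j in range(n)] for i in range(n)] for t in range(T - 1)]
    new_a = [[sum(epsilon[t][i][j] for t in range(T - 1)) /
              sum(gamma[t][i] for t in range(T - 1))
              for j in range(n)] for i in range(n)]
    return new_a, gamma, epsilon


pi = [0.6, 0.4]
a = [[0.7, 0.3], [0.4, 0.6]]
b = [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]
new_a, gamma, epsilon = reestimate_transitions([0, 1, 2, 2, 0], pi, a, b)
print(new_a)
```

Two identities make useful sanity checks: each gamma[t] sums to 1, and summing epsilon[t][i] over j gives gamma[t][i], so every re-estimated row of a sums to 1.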


GPGPU Programming Model

GPGPU GPU Hierarchical Memory System • Visibility • Performance Penalty [http://www.biomedcentral.com]

GPGPU • Visibility • Performance Penalty [www.math-cs.gordon.edu]

GPGPU GPU-powered Ecosystem 1) Programming models * CUDA * OpenCL * OpenACC, etc. 2) High-performance libraries * cuBLAS * Thrust * MAGMA (CUDA/OpenCL/Intel Xeon Phi) * Armadillo (C++ linear algebra library), drop-in libraries, etc. 3) Tuning/profiling tools * NVIDIA: nvprof / nvvp * AMD: CodeXL 4) Consortium standards * Heterogeneous System Architecture (HSA) Foundation


Results Platform Specs

Results Mitigating Data Transfer Latency: Pinned Memory Size • current process limit: ulimit -l (in KB) • hard limit: ulimit -H -l • increase the soft limit: ulimit -S -l 16384
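`ulimit -l` is a shell view of the per-process locked-memory limit (RLIMIT_MEMLOCK), which a program can also query and adjust through getrlimit/setrlimit. A minimal sketch using Python's standard `resource` module (Unix only); the helper names are hypothetical, and this mirrors only the shell commands above, not any CUDA allocation call:

```python
import resource


def memlock_limits_kib():
    """Return the (soft, hard) locked-memory limits in KiB.

    Matches the units of `ulimit -S -l` / `ulimit -H -l`;
    None stands for "unlimited" (RLIM_INFINITY).
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_MEMLOCK)

    def to_kib(value):
        return None if value == resource.RLIM_INFINITY else value // 1024

    return to_kib(soft), to_kib(hard)


def set_soft_memlock_kib(kib):
    """Counterpart of `ulimit -S -l <kib>`; the soft limit may not exceed the hard limit."""
    _, hard = resource.getrlimit(resource.RLIMIT_MEMLOCK)
    new_soft = kib * 1024
    if hard != resource.RLIM_INFINITY and new_soft > hard:
        raise ValueError("requested soft limit exceeds the hard limit")
    resource.setrlimit(resource.RLIMIT_MEMLOCK, (new_soft, hard))


print("soft/hard limit (KiB):", memlock_limits_kib())
```

Calling `set_soft_memlock_kib(16384)` corresponds to the slide's `ulimit -S -l 16384`; like the shell builtin, it affects only the current process and the children it starts. Raising the hard limit itself requires privileges.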

Results A Practice for Efficiently Utilizing the Memory System

Results Hyper-Q Feature

Results Running Multiple Word Recognition Tasks
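Isolated-word recognition scores one utterance against every word model and keeps the best; the per-word tasks are independent, which is what lets them run concurrently. The sketch below is a CPU analog only, assuming discrete-observation word HMMs with hypothetical parameters: it submits one scaled forward pass per word to a thread pool. In CPython the GIL keeps pure-Python arithmetic from actually running in parallel, so this shows the task structure, not a speedup; the slides' GPU version relies on Hyper-Q instead.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def log_likelihood(obs, model):
    """Scaled forward algorithm: log P(O | model) for a discrete HMM (pi, a, b).

    Each step renormalizes alpha to sum to 1 and accumulates the log of the
    scale factor, so long utterances do not underflow.
    """
    pi, a, b = model
    n = len(pi)
    alpha = [pi[j] * b[j][obs[0]] for j in range(n)]
    log_p = 0.0
    for t in range(len(obs)):
        if t > 0:
            alpha = [sum(alpha[i] * a[i][j] for i in range(n)) * b[j][obs[t]]
                     for j in range(n)]
        scale = sum(alpha)
        log_p += math.log(scale)
        alpha = [v / scale for v in alpha]
    return log_p


def recognize(obs, word_models, workers=4):
    """Score one utterance against every word model concurrently and pick the best."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {word: pool.submit(log_likelihood, obs, model)
                   for word, model in word_models.items()}
        scores = {word: f.result() for word, f in futures.items()}
    return max(scores, key=scores.get), scores


# Hypothetical word models over a 3-symbol observation alphabet.
word_models = {
    "yes": ([0.6, 0.4], [[0.7, 0.3], [0.4, 0.6]], [[0.5, 0.4, 0.1], [0.1, 0.3, 0.6]]),
    "no": ([0.5, 0.5], [[0.5, 0.5], [0.5, 0.5]], [[0.8, 0.1, 0.1], [0.8, 0.1, 0.1]]),
}
best, scores = recognize([0, 1, 2], word_models)
print(best)  # prints yes
```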


Future Work • Integrate with parallel feature extraction • Power efficiency: implementation and analysis • Embedded system development (Jetson TK1, etc.) • Improve generality (language models, LMs) • Improve robustness (front-end noise cancellation) • Go with the trend!