Binomial Probability Distribution

Binomial Probability Distribution. Here we study a special discrete PD (PD will stand for Probability Distribution) known as the Binomial PD. Binomial Distribution.

Binomial Probability Distribution

E N D

Presentation Transcript

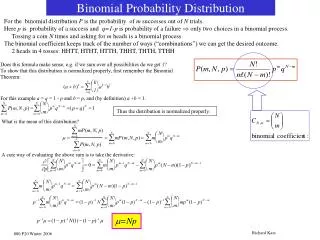

Binomial Probability Distribution Here we study a special discrete PD (PD will stand for Probability Distribution) known as the Binomial PD

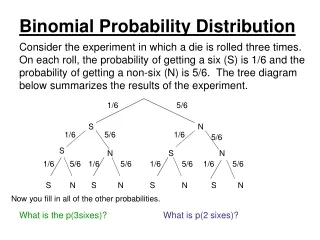

Binomial Distribution When you flip a coin you can get a head or a tail. You could observe heads or tails on several flips of a coin. Say you flip the coin n times (n is a general number of times and when we have a specific problem we usually have a specific value for n). On each flip we might call heads the “event of interest” and after n flips we might be interested in how many of the n flips gave the event of interest. The possibilities for the number of events of interest take on the discrete values 0, 1, 2 all the way through n. Thus the binomial distribution is really just the distribution of a variable with discrete values from 0 to n. But, certain conditions must hold. I show those soon. Now, on a coin flip heads has probability .5, but the event of interest on any one trial in the binomial process does not have to be .5

Properties of a Binomial process. 1) The sample consists of a fixed numbers of observations, n. 2) Each observation is classified into one of two mutually exclusive and collectively exhaustive categories. 3) The probability of an observation, denoted by π, being classified as the event of interest is constant (does not change) from observation to observation. (1 - π) is the probability of an observation being classified as NOT being the event of interest does not change from observation to observation. 4) The observations are independent. Recall before we saw that the probability of the intersection of independent events is equal to the product of the probabilities of each event. Let’s move to an example to put this information into context.

In the book there is an example about a company that takes orders from customers over the internet. Any invoice that is questionable is tagged (and presumably examined more closely before sending to a shipping department). Say in recent history the likelihood an order is tagged is 0.10. So, an order being tagged is the event of interest and the probability is .1 that this will happen to an order. If a sample of 4 orders is taken then the binomial variable the number of tagged orders can take on the values 0, 1, 2, 3, and 4. Using counting rule 1 there are 16 possible ways the 4 orders can come in. Let’s see these on the next screen.

Let’s call s the event of interest (a success) and f not an event of interest (a failure). 4 orders number of events of interest ssss 4 sssf 3 ssfs 3 ssff 2 sfss 3 sfsf 2 sffs 2 sfff 1 fsss 3 fssf 2 fsfs 2 fsff 1 ffss 2 ffsf 1 fffs 1 ffff 0 On the next slide I have a tree diagram to help you think about all the possible outcomes. On the fair left I have the event of interest s and not event of interest f in the first round. The second round shows a new s and f for each from the previous round. Then you just follow each branch to get the 16 different orders shown here.

The random variable again is x = the number tagged orders. So we can have 0, 1, 2, 3, or 4. You can see that 1 of the 16 possible outcomes has exactly 4 tagged orders. Does this mean the probability of exactly 4 tagged orders is 1/16 or .0625? Maybe not. Here is why. We have to figure in the probability that a given order will be tagged. All 4 orders being tagged is really the intersection of the first order tagged and the second order tagged and the third order tagged and the fourth order tagged. The probability of the intersection of independent events is just the multiplication of the probability of each. P(4) = .1(.1)(.1)(.1) = .0001

Now, the probability that only three are tagged is tricky. You can see from the list 4 of the 16 had only 3 tagged. Each one of the 4 has probability .1(.1)(.1)(.9) = .0009. So with 4 possible ways of getting this result we have P(3) = .0036 6 of the 16 outcomes have only two being tagged. Each has probability .1(.1)(.9)(.9) = .0081. So with this occurring six times P(2) = .0486. 4 of the 16 outcomes have only 1 being tagged. Each has probability .1(.9)(.9)(.9) = .0729. So with this occurring 4 times P(1) = .2916. 1 of the 16 outcomes has none tagged. P(0) = ,9(.9)(.9)(.9) = .6561

Remember we have n = 4 orders here and X = the number of orders tagged. X could be 0, 1, 2, 3, or 4. In general, the probability, written P(X), of a given X is found by the formula n! πX(1-π)(n-X) remember something raised to 0 power = 1 X!(n-X)! Now in our example n = 4, π = .1 and 1-π = .9 When X = 0, the probability P(0) = 4! .10(.9)4-0 = .6561 0!4! Note when we found P(0) we had 0! and this equals 1. Plus we had something raised to the 0 power. This always equals 1.

As you can tell these calculations are quite tedious. The good news is our book has a table that can give us the probabilities we so desire. Table E.6 in the back of the book has some binomial tables. Note down the left side of the table you see examples of n from 2 through 10. Also on the left you see X as the number of items of interest. For our example we had n = 4, or 4 orders sampled and X represents the number tagged. So we see the probability that 0 of the 4 being tagged is in the 0 row (of the n = 4 section). Since in one trial our event of interest = .1 we have to look in that column. (The table says p across the top but I think it should say π.). On the next screen I show you the binomial probability distribution with n = 4 and π = .1. I also add the cumulative distribution. (Note in table E.6 if π >.5 you look at the bottom of the table and up the right side.)

X P(X) cum prob 0 .6561 .6561 1 .2916 .9477 2 .0486 .9963 3 .0036 .9999 4 .0001 1.000 Now, let’s ask a few more questions. What is the probability 1 or fewer oders would be tagged? The cum prob tells us the answer is .9477. What is the probability that more than 2 will be tagged? More than 2 is the complement of 2 or fewer, so P(more than 2) = 1 – P(X≤2) = 1 - .9963 = .0037. The cum prob column is telling us the prob of X in a given row or any X less than in the row. P(X≤2), for example, is the probability of 2 or fewer tagged orders and equals .9963

Microsoft Excel and the Binomial PD On the next slide there is a spreadsheet in Excel. I use a different generic example for you to see how this is similar to the table E.6. Note cell c1 has the value of n = 3 and cell c2 has the value of π =p = .3. Cells A4:A7 have the values of x. Cells B4:B7 have Excel formulas typed in. If we put the mouse in cell B4 and typed “=BINOMDIST(A4, $C$1, $CD$2, FALSE).” The A4 will mean 0. The $C$1 will mean 3. The $C$2 will mean .3 and the FALSE means we want the f(x). When you type this in hit the enter key. To get the rest of the f(x) values put the mouse back into cell B5 and click once. Then move the mouse to the bottom right corner of the cell, click and drag down to the last cell. In the BINOMDIST function A4 changes to A5 and so on as you drag down. Excel wants to change cell values when you drag functions. The $ signs in the $D$1 mean when you drag you will not leave that cell. If you want a cum prob put TRUE, not FALSE.

The expected value for the binomial PD is E(x) = nπ (a simplification for the binomial case from what we saw previously), and the variance is Var(x) = σ2 = nπ(1-π) (also a simplification). The standard deviation is just the square root of the variance. Consider a binomial experiment with n = 10 and π = p = 0.1. You can double click inside the spreadsheet on the next screen and copy the Excel file if you want. a. f(0) is found in the f(x) column as .34867 b. f(2) = .1937 c. P(x≤2) is found in the Cum Prob column as .9298 d. P(x≥1) = 1 – P(x≤0) = 1 - .3487 = .6513

Note the E notation here. 9E-09 means we have the number 9 but have to move the decimal 9 places to the left because we have E-09. The number is .000000009. An E+ would require a movement of the decimal to the right.

Note on the previous slide I have an Excel spreadsheet. At the top I typed the label and numbers Number of Trials (n) 10 Probability of Success (p) 0.1 in separate cells. The numbers are used in the formulas. You should do this as well when you do a problem because it “dresses up” the output and makes it easier to remember what the heck is going on. Also note that in my notes when you see a table you can double click on it and see the Excel spreadsheet.

Example flipping two coins If you flip two coins (or one coin twice) the possible outcomes are HH, HT, TH, TT. So, n = 2. Let’s say the event of interest is heads H. We could have X = 0, 1, or 2. Also say π = .5 From table E.6 we see X P(X) 0 .25 1 .5 2 .25 What is the probability of at least 1 head on the two flips? This would be P(1) + P(2) = .5 + .25 = .75

Problem 12 page 165 Note the success rate is 87.8% or .878. This does not show up in the table E.6. You could use Excel here, but let’s just use the closest value in the table .90. You find this in the bottom of the table. So then we have to go up the right side. n = 3 and so we get X P(X) P(X≤Xi) 0 .0010 .0010 1 .0270 .0280 2 .2430 .2710 3 .7290 1.0000 a. P(3) = .7290 b. P(0) = .001 c. P(at least 2) = P(2 or 3) = 1 – (1 or 0) = 1 - .0280 = .972 d. mean = nπ = 3(.878) = 2.634, so on average 2.634 of every 3 orders are correct. Standard dev = sqrt[nπ(1 – π)] = sqrt[3(.878)(.122)] = .57