Evaluation

Learn why evaluation is crucial for project success, funding, and improvement. Understand types and importance of evaluation, what to evaluate, who should conduct it, and cost considerations. Explore data types, methods, and analysis tools.

Presentation Transcript

Evaluation Krista S. Schumacher Schumacher Consulting.org 918-284-7276 krista@schumacherconsulting.org www.schumacherconsulting.org Prepared for the Oklahoma State Regents for Higher Education 2009 Summer Grant Writing Institute July 21, 2009

Why Evaluate? • How will you know your project is progressing adequately to achieve objectives? • How will funders know your project was successful? • Increasing emphasis placed on evaluation, e.g., • U.S. Department of Education • National Science Foundation • Substance Abuse and Mental Health Services Administration (SAMHSA)

Why Evaluate? • Improve the program – • “Balancing the call to prove with the need to improve.” (W.K. Kellogg Foundation) • Determine program effectiveness – • Evaluation supports “accountability and quality control” (Kellogg Foundation) • Significant influence on program’s future • Generate new knowledge – • Not just research knowledge • Determines not just that a program works, but analyzes how and why it works • With whom is the program most successful? • Under what circumstances?

Why Evaluate? WHAT WILL BE DONE WITH THE RESULTS? “Evaluation results will be reviewed (quarterly, semi-annually, annually) by the project advisory board and staff. Results will be used to make program (research method) adjustments as needed.”

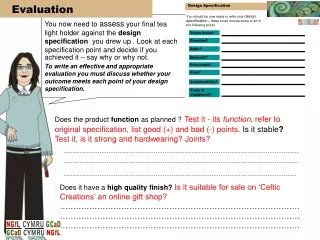

What to Evaluate? • Objectives • Use baseline data from need section • Activities • Program/research fidelity • How well program implementation or actual research matched established protocol • Attitudes • Consider sorting data by demographics, e.g., • location, gender, age, race/ethnicity, income level, first-generation status
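Sorting an outcome by demographics can be sketched in a few lines. This is a minimal illustration only: the participant records, the field names (`gender`, `first_generation`, `retained`) and the helper `rate_by_group` are all hypothetical and not part of the presentation.

```python
from collections import defaultdict

# Hypothetical participant records; fields and values are illustrative only.
participants = [
    {"gender": "F", "first_generation": True, "retained": True},
    {"gender": "F", "first_generation": False, "retained": True},
    {"gender": "M", "first_generation": True, "retained": False},
    {"gender": "M", "first_generation": True, "retained": True},
    {"gender": "F", "first_generation": True, "retained": False},
]


def rate_by_group(records, group_field, outcome_field):
    """Return {group value: share of records where outcome_field is True}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        key = record[group_field]
        totals[key] += 1
        if record[outcome_field]:
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}


# Same outcome, sorted two different ways.
retention_by_first_gen = rate_by_group(participants, "first_generation", "retained")
retention_by_gender = rate_by_group(participants, "gender", "retained")
```

With real data, very small groups should be reported with caution, since a rate based on one or two people says little.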

Types of Evaluation • Process evaluation: • What are we doing? • How closely did implementation match the plan (program fidelity)? • What types of deviation from the plan occurred? • What led to the deviations? • What effect did the deviations have on the project and evaluation? • What types of services were provided, to whom, in what context, and at what cost? • services (modality, type, intensity, duration) • recipients (individual demographics and characteristics) • context (institution, community, classroom) • cost (did the project stay within budget?)

Types of Evaluation • Outcome evaluation: • What effect are we having on participants? • What program/contextual factors were associated with outcomes? • What individual factors were associated with outcomes? • How durable were the effects? • What correlations can be drawn between outcomes and program? • How do you know that the program was the cause of the effect? • How long did outcomes last?

Who will Evaluate? • External evaluators increasingly required or strongly recommended • Partners for effective and efficient programs • Collaborators in recommending and facilitating program adjustments • Methodological orientations • Mixed-methods? Quantitative only? Qualitative only? • Philosophical orientations • Is the evaluator’s purpose to boost a personal research publication record or to help the organization/effort? • Experience and qualifications • Years conducting evaluations for types of organizations and types of projects (e.g., education, health, technical research) • Master’s degree required, PhD preferred • (or working toward PhD) • Social science background: Sociology, Psychology, Political Science

How much will it cost? • External evaluations cost money…period. • Some evaluators charge for pre-award proposal development phase • Kellogg Foundation recommends 5% to 10% of total budget • Check funder limits on evaluation • Ensure cost is reasonable but sufficient to conduct in-depth evaluation and detailed reports
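The 5% to 10% guideline translates into a simple range check. The sketch below is illustrative only: the function name, the $250,000 budget and the $20,000 quote are made-up assumptions, and any funder limit still has to be checked separately.

```python
def evaluation_budget_range(total_budget, low_pct=5, high_pct=10):
    """Return the (low, high) evaluation cost implied by a percentage guideline."""
    return total_budget * low_pct / 100, total_budget * high_pct / 100


# Hypothetical total project budget of $250,000.
low, high = evaluation_budget_range(250_000)

# Hypothetical evaluator quote, checked against the guideline range.
quote = 20_000
quote_in_range = low <= quote <= high
```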

Two Types of Data • Quantitative • Numbers based on objectives and research design • What data do you need? e.g., • Number of participants • Grade point averages • Retention rates • Survey data • Research outcomes • Qualitative • Interviews • Focus groups • Observation

Methods/Instruments • How are you going to get your data? • Establish baseline data • Pre- and post-assessments (knowledge, skills) • Pre- and post-surveys (attitudinal) • Enrollment rosters • Meeting minutes • Database reports • Institutional Research Office (I.R.)
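A pre- and post-assessment comparison comes down to a per-participant gain. The scores below are invented for illustration and assume each position in the two lists refers to the same participant.

```python
from statistics import mean

# Hypothetical knowledge-test scores for the same five participants.
pre_scores = [52, 60, 47, 71, 65]
post_scores = [61, 66, 58, 70, 74]

# Gain for each participant: post-assessment minus pre-assessment.
gains = [post - pre for pre, post in zip(pre_scores, post_scores)]

mean_gain = mean(gains)
share_improved = sum(gain > 0 for gain in gains) / len(gains)
```

Matching pre and post records by participant, rather than comparing group averages alone, also shows how many individuals improved.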

Data Analysis: So you have your data, now what? • Quantitative data • Data analysis programs: • SPSS (Statistical Package for the Social Sciences), Stata, etc. • Descriptive and statistical data: • # and % of respondents who strongly agree that flying pigs are fabulous compared to those who strongly disagree with this statement. • Likert scale • On a scale of 1 to 5, with 1 being “strongly disagree” and 5 being “strongly agree,” rate your level of agreement with the following statement… • t-ratio, F test, chi-square… • “Quantitative data will be assessed using Stata statistical analysis software to report on descriptive and statistical outcomes for key objectives (e.g., increase in GPA, retention, enrollment, etc.).”
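As a rough sketch of the descriptive step, the code below tallies the number and percentage of responses at each point of a 1 to 5 Likert item and computes a paired t-ratio for before/after values. The responses and GPA figures are invented, the middle labels ("disagree", "neutral", "agree") are assumed since the slide names only the endpoints, and a package such as SPSS or Stata would still be needed for p-values and fuller tests.

```python
from collections import Counter
from math import sqrt
from statistics import mean, stdev

# Endpoints come from the slide; the three middle labels are assumptions.
LABELS = {
    1: "strongly disagree",
    2: "disagree",
    3: "neutral",
    4: "agree",
    5: "strongly agree",
}

# Hypothetical responses to a single survey statement.
responses = [5, 4, 5, 1, 3, 5, 2, 1, 4, 5]


def likert_summary(values):
    """Return {label: (count, percent)} for every point on the scale."""
    counts = Counter(values)
    n = len(values)
    return {LABELS[score]: (counts[score], 100 * counts[score] / n) for score in LABELS}


def paired_t(before, after):
    """t-ratio for paired samples: mean difference over its standard error."""
    diffs = [a - b for b, a in zip(before, after)]
    return mean(diffs) / (stdev(diffs) / sqrt(len(diffs)))


summary = likert_summary(responses)

# Hypothetical GPAs for the same four students before and after the program.
t_stat = paired_t([2.1, 2.8, 3.0, 2.5], [2.6, 3.0, 3.4, 2.7])
```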

Data Analysis • Qualitative Data • Data analysis programs • NVivo (formerly NUD*IST), ATLAS.ti, etc. • “Qualitative data will be analyzed for overarching themes by reviewing notes and transcripts using a process of open coding. The codes will be condensed into a series of contextualized categories to reveal similarities across the data.” • Contextualization – how things fit together • More than pithy anecdotes • “May explain – and provide evidence of – those hard-to-measure outcomes that cannot be defined quantitatively.” – W.K. Kellogg Foundation • Provides a degree of insight into how and why a program is successful that quantitative data simply cannot provide
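Condensing open codes into categories can be pictured as a simple mapping and tally. This is a toy sketch under stated assumptions: the excerpts, codes and categories are invented, and in practice the coding itself is an interpretive task done by the evaluator, often in a tool such as NVivo or ATLAS.ti.

```python
from collections import Counter

# Hypothetical (source, open code) pairs produced while reviewing transcripts.
coded_excerpts = [
    ("interview_1", "advisor support"),
    ("interview_1", "work schedule conflict"),
    ("interview_2", "tutoring helped"),
    ("interview_2", "advisor support"),
    ("focus_group_1", "cost of books"),
    ("focus_group_1", "work schedule conflict"),
]

# Hypothetical condensation of open codes into broader categories.
code_to_category = {
    "advisor support": "institutional support",
    "tutoring helped": "institutional support",
    "work schedule conflict": "external pressures",
    "cost of books": "external pressures",
}

# How often each category appears across all excerpts.
category_counts = Counter(code_to_category[code] for _, code in coded_excerpts)

# Which sources raised each category, to show similarities across the data.
sources_per_category = {}
for source, code in coded_excerpts:
    sources_per_category.setdefault(code_to_category[code], set()).add(source)
```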

Two Types of Timeframes • Formative • Ongoing throughout life of grant • Measures activities and objectives • Summative • At conclusion of grant program or research • NEED BOTH!

Timelines • When will evaluation occur? • Monthly? • Quarterly? • Semi-annually? • Annually? • At the end of each training session? • At the end of each cycle? • How does evaluation timeline fit with formative and summative plans?

Origin of the Evaluation: Need and Objectives Need: For 2005-06, the fall-to-fall retention rate of first-time degree-seeking students was 55% for the College’s full-time students, compared to a national average retention rate of 65% for full-time students at comparable institutions (IPEDS, 2006). Objective: The fall-to-fall retention rate of full-time undergraduate students will increase by 3 percentage points each year, from a baseline of 55% to 61%, by Sept. 30, 2010.
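The objective above implies a yearly target that the evaluation can check against. The sketch below reads the annual increase as 3 percentage points over two years, which is the reading consistent with moving from 55% to 61%. The function names and the sample "actual" rate are hypothetical.

```python
def yearly_targets(baseline_pct, annual_gain_points, years):
    """Return the target rate (in percent) at the end of each project year."""
    return [baseline_pct + annual_gain_points * year for year in range(1, years + 1)]


def objective_met(actual_pct, target_pct):
    """True when the measured rate reaches or exceeds the target."""
    return actual_pct >= target_pct


# Baseline 55%, rising 3 percentage points per year for two years.
targets = yearly_targets(55, 3, 2)

# Percentage-point gap between the baseline and the 65% national average.
gap_to_national_average = 65 - 55

# Hypothetical measured rate at the end of year one.
year_one_met = objective_met(57.5, targets[0])
```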

BEWARE THE LAYERED OBJECTIVE! • By the end of year five, five (5) full-time developmental education instructors will conduct 10 workshops on student retention strategies for 200 adjunct instructors.

Origin of the Evaluation: Research Hypothesis • Good: • Analogs to chemokine receptors can inhibit HIV infection. • Not so good: • Analogs to chemokine receptors can be biologically useful. • A wide range of molecules can inhibit HIV infection. *Waxman, Frank. Ph.D. (2005, July 13). How to Write a Successful Grant Application. Oklahoma State Regents for Higher Education.

Logic Models • From: University of Wisconsin-Extension, Program Development and Evaluation • http://www.uwex.edu/ces/pdande/evaluation/evallogicmodel.html • A Logic Model is… • A depiction of a program showing what the program will do and what it is to accomplish. • A series of “if-then” relationships that, if implemented as intended, lead to the desired outcomes • The core of program planning and evaluation
Example: Situation: Hungry → Inputs: Get food → Outputs: Eat food → Outcomes: Feel better
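The “if-then” structure of a logic model can be written out as a small data structure. This is only an illustrative sketch using the hungry/food example from the slide. The class name `LogicModel` and its method are assumptions, not part of the University of Wisconsin-Extension material.

```python
from dataclasses import dataclass


@dataclass
class LogicModel:
    """Four-stage logic model: situation, inputs, outputs, outcomes."""

    situation: str
    inputs: str
    outputs: str
    outcomes: str

    def if_then_chain(self):
        """Return the if-then statement linking each stage to the next."""
        steps = [self.situation, self.inputs, self.outputs, self.outcomes]
        return [f"If {a}, then {b}" for a, b in zip(steps, steps[1:])]


model = LogicModel("hungry", "get food", "eat food", "feel better")
chain = model.if_then_chain()
```

Reading the chain aloud is a quick way to spot a stage that does not plausibly lead to the next one.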

Evaluation Resources • W.K. Kellogg Foundation – “Evaluation Toolkit” • http://www.wkkf.org/default.aspx?tabid=75&CID=281&NID=61&LanguageID=0 • Newsletter resource – The PEN (Program Evaluation News) • http://www.the-aps.org/education/promote/content/newslttr3.2.pdf • NSF-sponsored program • www.evaluatorsinstitute.com • American Evaluation Association • www.eval.org • Western Michigan University, The Evaluation Center • http://ec.wmich.edu/evaldir/index.html (directory of evaluators) • OSRHE list of evaluators and other resources • http://www.okhighered.org/grant%2Dopps/writing.shtml • “Evaluation for the Unevaluated” course • http://pathwayscourses.samhsa.gov/eval101/eval101_toc.htm

Evaluation Resources • The Research Methods Knowledge Base • http://www.socialresearchmethods.net/ • The What Works Clearinghouse • http://www.w-w-c.org/ • The Promising Practices Network • http://www.promisingpractices.net/ • The International Campbell Collaboration • http://www.campbellcollaboration.org/ • Social Programs That Work • http://www.excelgov.org/ • Planning an Effective Program Evaluation short course • http://www.the-aps.org/education/promote/pen.htm

Schumacher Consulting.org In DIRE need of grant assistance? We provide All Things Grants!™ Grant Development | Instruction | Research | Evaluation Established in 2007, Schumacher Consulting.org is an Oklahoman-owned firm that works in partnership with organizations to navigate transformation and achieve change by securing funding and increasing program success.