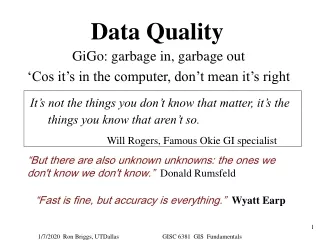

Data Quality

Learning Objectives • Identify data quality issues at each step of a data management system • List key criteria used to assess data quality • Identify key steps for ensuring data quality at all levels of the data management system

Does your Organization have Problems with... • Incomplete or missing data? • Getting data collected, collated or analyzed quickly enough? • Getting data from partners in a timely manner? • Getting honest information?

Data Quality: Effect on Program Poor quality data can cause: • Erroneous program management decisions, and the use of additional program resources to take corrective actions • Missed opportunities for identifying program strengths or weaknesses • Reduced stakeholder confidence and support

Data Management System (DMS) Chain: 6 key processes • Source • Collection • Collation • Analysis • Reporting • Use

Dimensions of Data Quality • Validity – the ability to measure what is intended (accuracy) • Reliability – data collected regularly using the same methodology • Integrity – data free of “untruth” from willful or unconscious error due to manipulation or through the use of technology (for example, data entry) • Precision – the ability to minimize random error, or the ability to reproduce measurements consistently • Timeliness – the ability to have regularly collected, up-to-date data available when it is needed • Completeness – all intended data are collected (no missing data)

Real World and Information System • Real world: project activities are implemented in the field. These activities are designed to produce results that are quantifiable • Information system: represents these activities by collecting the results that were produced and mapping them to a recording system • Data quality: how well the information system represents the real world • The six dimensions of data quality: 1. Validity 2. Reliability 3. Integrity 4. Precision 5. Timeliness 6. Completeness

Case Study 1: Number of Visit Forms Submitted by CHWs, by month

1. Validity • Do the data clearly, directly, and adequately represent the result that was intended to be measured? • Have we actually measured what we intended? • Do the data adequately represent performance & are thus valid? • Example: Are malaria cases really malaria cases as defined?

A Selection of 3 Aspects of Validity • Face Validity • Is there a solid, logical relation between the activity or program and what is being measured? • Does the indicator directly measure what was intended to be measured? • Measurement Validity • Are there measurement errors resulting from poorly designed instruments or poor management of the DMS? • Transcription Validity • Are transcription / data entry and collation procedures sound? • Are the data entered/tallied correctly?

Data Validity challenges • Poorly designed data collection instruments • Poorly trained or partisan enumerators • Use of questions that elicit incomplete or untruthful information • Respondents feel pressured to answer correctly • Data are incomplete

Data Validity challenges • Data altered in transcription • Data Manipulation Errors: • Formulae not being applied correctly • Inconsistent formula application • Inaccuracies in formulae • Sampling / representation errors • Was the sampling frame wrong? • Unrepresentative Samples: too small for statistical extrapolation and/or contain sample units based on supposition rather than statistical representation

Maximizing Validity in your DMS • Use well-designed Indicator Reference Sheets to clearly and specifically define what is being measured • Use well-designed data collection and collation tools • Understand the population you are interested in (i.e., the sampling frame) • Be thoughtful and practical about methodologies (more sophisticated is not always better) • Spend a lot of money on training, supervision, and all of the processes and procedures relevant to data collection

2. Reliability • Consistency and stability of the measurement and data collection process • Would we get the same results or findings if the procedure were repeated over and over? • data collection process • sampling methodology • data collection procedure • Three aspects: • Consistency • Internal Quality Control • Transparency

Reliability: Consistency • Consistent procedures for data collection, maintenance, analysis, processing, and reporting • Definitions and indicators are stable across time • Data collection and sampling processes used are the same across time periods and personnel • Data analysis and reporting approach are the same from year to year, location to location, data source to data source

Reliability: Internal Quality Controls • Controls are procedures that: • exist to ensure that data are free of significant error & that bias has not been introduced • are in place for periodic review of data collection, maintenance, and processing • provide for periodic sampling and quality assessment of data
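The "periodic sampling and quality assessment" control above can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `draw_review_sample`, the register of 200 numbered forms, and the sample size of 10 are all hypothetical and do not come from the presentation.

```python
import random

def draw_review_sample(record_ids, sample_size, seed=None):
    """Return a simple random sample of record IDs to pull for manual review.

    A fixed seed makes the draw reproducible, so the sample itself can be
    documented alongside the review findings.
    """
    rng = random.Random(seed)
    ids = list(record_ids)
    k = min(sample_size, len(ids))  # never ask for more records than exist
    return sorted(rng.sample(ids, k))

# Hypothetical register of 200 visit forms, numbered 1..200.
sample = draw_review_sample(range(1, 201), sample_size=10, seed=2024)
print(sample)
```

Recording the seed and the date of each draw is one way to keep the review itself transparent and repeatable.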

Reliability: Transparency • Transparency is increased when: • Data collection, cleaning, analysis, reporting, & quality assessment procedures are documented in writing • Data problems at each level are reported to the next level • Data quality problems are clearly described in reports

Maximize Reliability in your DMS • Develop Indicator Protocol Reference Sheets with clear instructions/definitions for all data collection efforts at all levels of the process • Document data collection, cleaning, analysis, reporting, and quality assurance procedures • Make sure everyone is aware of the procedures and that they follow your procedures from reporting period to reporting period • Use the same tool for collecting, collating, and reporting – thereby reducing transcription between DMS stages

Maximize Reliability in your DMS • Minimize (or eliminate) the use of different tools within and between sites for collecting/collating and reporting the same data • Ensure that people report when data is wrong or missing • Maintain official copies of all protocols and instruments • Consider using multiple (3) independent raters when data is based on human observation

Maximize Reliability in your DMS • Provide guidance to data collectors and transcribers on what to do if they see a data error and who to report it to • Develop an error log to track data errors and document data quality problems • Routinely randomly check data for transcription errors. If necessary, double key information • Collect data electronically. Include automatic skips/checks in data collection/transcription software • Track errors to their original source and correct mistakes • Always double check that final numbers are accurate
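As a rough sketch of the "double key" and "error log" ideas above, the two independent keyings of the same forms can be compared field by field, with each mismatch written to a log. The form IDs, field names, and the `compare_double_entry` helper are hypothetical and used for illustration only.

```python
def compare_double_entry(first_pass, second_pass):
    """Compare two independent keyings of the same paper forms.

    Each argument maps a form ID to a dict of field name -> keyed value.
    Returns an error log: one row per form or field that disagrees.
    """
    error_log = []
    for form_id in sorted(set(first_pass) | set(second_pass)):
        a = first_pass.get(form_id)
        b = second_pass.get(form_id)
        if a is None or b is None:
            # The form was keyed in only one of the two passes.
            error_log.append({"form_id": form_id, "field": None,
                              "issue": "form missing from one pass"})
            continue
        for field in sorted(set(a) | set(b)):
            if a.get(field) != b.get(field):
                error_log.append({"form_id": form_id, "field": field,
                                  "issue": "values differ",
                                  "first": a.get(field),
                                  "second": b.get(field)})
    return error_log

# Hypothetical example data: two people key the same forms independently.
first = {"F001": {"visits": 12, "month": "Jan"},
         "F002": {"visits": 7, "month": "Jan"}}
second = {"F001": {"visits": 21, "month": "Jan"},
          "F002": {"visits": 7, "month": "Jan"},
          "F003": {"visits": 4, "month": "Jan"}}

error_log = compare_double_entry(first, second)
for row in error_log:
    print(row)
```

Each logged row still has to be traced back to the paper form to decide which value is correct; the comparison only shows where the two passes disagree.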

Case Study 3 • The data capturer at Organization Z…

3. Integrity • Data integrity refers to data which is free of “untruth” from willful or unconscious error • Integrity can be compromised during data collection, cleaning, handling and storage due to lack of proper controls by either human or technical means, willfully or unconsciously • For example: • loss of data when technology fails • a fragile data management system • data are manipulated for either personal or political reasons

Maximize Integrity • Maintain objectivity and independence in data collection, management, and assessment procedures • Arrange for more than one data handler/reporter • Have a second set of eyes to verify data reported at each stage of the DMS • Copy all data handlers in final reports • Treat data as “official organizational information” and store data with security • Remove incentives that could lead to data manipulation • Hire personnel to maintain the technical aspects of the DMS

4. Precision • Precision is the ability to minimize random error, or the ability to reproduce measurements consistently • Precision is measurement of error “around the edges” of the measure • Margin of error • Are the units of measurement refined enough for your application / needs?
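One common way to put a number on the "margin of error" is the normal-approximation formula for a proportion estimated from a simple random sample, z * sqrt(p * (1 - p) / n). The sketch below assumes that setting, which the slides do not specify; the function name and the example figures are illustrative only.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate margin of error for a proportion from a simple random sample.

    p: observed proportion (0..1); n: sample size;
    z: critical value (1.96 corresponds to roughly 95% confidence).
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    if not 0 <= p <= 1:
        raise ValueError("proportion must be between 0 and 1")
    return z * math.sqrt(p * (1 - p) / n)

# Hypothetical survey: 50% of respondents report a clinic visit.
moe_100 = margin_of_error(0.5, 100)  # about 0.098, i.e. +/- 9.8 points
moe_400 = margin_of_error(0.5, 400)  # about 0.049, i.e. +/- 4.9 points
print(round(moe_100, 3), round(moe_400, 3))
```

Under this approximation, quadrupling the sample size only halves the margin of error, which is worth weighing when deciding how refined a measurement the program actually needs.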

Maximize Precision • Know exactly what kind of information you require • Ensure that your data collection staff have the capacity to maintain adequate precision • Refine data collection instruments • Report any issues around precision (ex. develop an error log)

Case Study 5 Organization Green: Organic Pest Control Applications

5. Timeliness • Two Aspects of Timeliness: • Frequency • Data are available on a frequent enough basis to inform program management decisions • Currency • The data are reported as soon as possible after collection • Main threats to Timeliness concern collection and reporting frequencies

Maximize Timeliness • Make a realistic schedule that meets program management needs and enforce it! • Include dates for data collection, collation, analysis and reporting at each level of the data management process • Have a calendar for when data is due at each level in the data management process • Data Collection/Collation: Clearly identify the date of data collection/collation on all forms and reports • Reporting: Clarify dates of data collection when presenting results
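A due-date calendar like the one described above can be checked mechanically. The following is a minimal sketch; the level names follow the DMS chain, but every date and the `days_late` helper are made up for illustration.

```python
from datetime import date

def days_late(due, received):
    """Return how many days past the due date something arrived (0 if on time)."""
    return max(0, (received - due).days)

# Hypothetical calendar: when each level's output is due for one reporting period.
due_dates = {
    "collection": date(2024, 1, 31),
    "collation": date(2024, 2, 7),
    "analysis": date(2024, 2, 14),
    "reporting": date(2024, 2, 21),
}

# Hypothetical dates on which each output was actually received.
received = {
    "collection": date(2024, 1, 30),
    "collation": date(2024, 2, 10),
    "analysis": date(2024, 2, 14),
    "reporting": date(2024, 2, 25),
}

late = {level: days_late(due_dates[level], received[level]) for level in due_dates}
for level, n in late.items():
    print(f"{level}: {'on time' if n == 0 else f'{n} day(s) late'}")
```

Tracking lateness per level, rather than only for the final report, shows where in the chain delays are introduced.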

Case Study 6 Operation Appropriate Treatment: Confirmed Cases and Treatment Maps

6. Completeness • All of the data that you intend to collect are collected • Your DMS represents the entirety of service delivery points, individuals, or other measurement units, and not just a fraction of the full population
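Completeness can be expressed as the share of expected reporting units that actually reported. The sketch below assumes a known list of expected service delivery points; the site names and the `completeness` function are hypothetical.

```python
def completeness(expected_units, reporting_units):
    """Return (rate, missing): the share of expected units that reported,
    and a sorted list of the expected units that did not report.

    Units that reported but were not expected are ignored in the rate.
    """
    expected = set(expected_units)
    if not expected:
        raise ValueError("at least one expected unit is required")
    received = expected & set(reporting_units)
    missing = sorted(expected - received)
    return len(received) / len(expected), missing

# Hypothetical reporting period: four sites are expected to report.
expected_sites = ["Site A", "Site B", "Site C", "Site D"]
reported_sites = ["Site A", "Site C", "Site D", "Site X"]

rate, missing = completeness(expected_sites, reported_sites)
print(f"Completeness: {rate:.0%}; missing: {missing}")
```

An unexpected reporter such as "Site X" here is worth following up separately, since it may point to an outdated list of expected units.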

Remember that Data Quality – • Refers to the worth/accuracy of the information collected • Focuses on ensuring that the data management process is of a high standard • Meets reasonable standards for validity, reliability, integrity, precision, timeliness, and completeness

Key Factors for Data Quality Success • Functioning information systems • Clear definition of indicators consistently used at all levels • Description of roles and responsibilities at all levels • Specific reporting timelines • Standard/compatible data-collection and reporting forms/tools with clear instructions • Documented data review procedures to be performed at all levels • Steps for addressing data quality challenges (missing data, double-counting, lost to follow up, …) • Storage policy and filing practices that allow retrieval of documents for auditing purposes (leaving an audit trail)

MEASURE Evaluation is a MEASURE program project funded by the U.S. Agency for International Development (USAID) through Cooperative Agreement GHA-A-00-08-00003-00 and is implemented by the Carolina Population Center at the University of North Carolina at Chapel Hill, in partnership with Futures Group International, John Snow, Inc., ICF Macro, Management Sciences for Health, and Tulane University. Visit us online at http://www.cpc.unc.edu/measure