Content analysis

Content analysis Quantitative evaluation of texts

Questions about content • When we talk about “cop shows” or “news” or “sports” we think about certain kinds of content. Usually, we perceive certain regularities in the content and notice when a single ‘text’ or ‘artifact’ deviates from those expectations. • Sometimes we think certain regularities exist, while others dispute our beliefs.

The need for careful analysis • Our own hunches and expectations can be in error, and much of our understanding of the effects, values, and role of telecommunications depends on the nature of the content of television, radio, film, videogames, etc. It is therefore often necessary to analyze that content more carefully.

Text analyses • Many ways of evaluating content/texts are available. We call the entire group of methods “text analysis.” • The most heavily quantitative form of text analysis is “content analysis.”

Definition Content analysis: A research technique for making inferences by systematically and objectively measuring specified characteristics of a text.

Content analysis in telecommunications research • Content analysis is probably the most common form of research found in scholarly study of telecommunications • It demands the least money and resources • The downside is that many consider it “an easy publication” and produce very low-quality work

Goals of text analysis • To explain the nature of communication. • Describe the content, structure, and functions of the messages contained in texts. • What does the text mean? How does it achieve that meaning? • To describe how communication is related to other variables. • Input variables – Outcome variables • For example: How does a corporate takeover affect television news coverage? • To evaluate texts by using a set of standards or criteria. • Must establish a set of standards against which the communication can be compared. • Example: Is the text too hard to read for 12-year-olds?

Types of texts • Most any fixed symbolic whole—a story, a textbook, a church, a transcribed conversation, a website, and on and on can be considered a ‘text’. Sometimes a whole series of stories (Star Trek, season 2) may be considered a ‘text’.

Acquiring texts • Listen to conversations in naturalistic settings • Conversations produced in a lab • Visit rooms of teenage girls • Literary or historical sources (novels or films) • Record shows off the air • Visit or mirror websites

Procedures • Select the text(s) to be analyzed • Determine the recording units • Develop content categories • Train observers to code units into categories • Carry out the coding while monitoring for quality • Analyze the data

Sampling in content analysis • Population: totality of texts we want to say something about • This is often more difficult than it seems • All issues of the Herald Leader over a period of a year? • All coverage of terrorism in the elite press? • We can analyze a census or we can sample • The same sorts of sampling techniques used for surveys can be applied here • Random v. non-random sampling • Many non-random samples chosen for theoretical as well as convenience reasons

Sampling • Commonly multiple stages in sampling documents • Selecting communication sources • Newsweek • Prime Time television • Sampling documents • Pick an issue, particular shows • Sampling within documents • Front page v. all pages, etc.
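The staged sampling described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the sampling frame, the document labels, and the function name `multistage_sample` are hypothetical, and the second stage uses simple random sampling, which is just one of the options mentioned.

```python
import random

# Hypothetical sampling frame: each source maps to its list of documents.
frame = {
    "Newsweek": [f"issue-{week:02d}" for week in range(1, 53)],
    "Prime-time TV": [f"episode-{n:03d}" for n in range(1, 201)],
}

def multistage_sample(frame, docs_per_source, seed=0):
    """Stage 1 keeps every listed source; stage 2 draws a simple random
    sample of documents within each source, without replacement."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    return {
        source: sorted(rng.sample(documents, docs_per_source))
        for source, documents in frame.items()
    }

sample = multistage_sample(frame, docs_per_source=12)
```

A third stage (for example, front page only versus all pages) would be applied inside each selected document in the same way.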

Units of observation • Chosen first according to theory, then by convenience • Articles • Broadcasts • Books • Pictures • Movies • Letters • Conversations

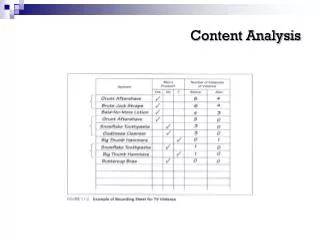

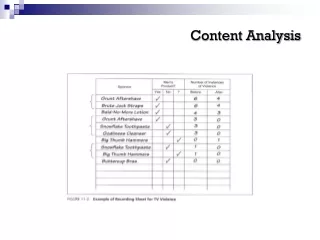

Recording units • Recording units are the actual ‘pieces’ of the observational units that are scored according to your category scheme • For example, if I were observing a single episode of NCIS, I might score every 5 minutes of the show for the presence or absence of humor. The 5-minute segment would be my recording unit.
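As a rough sketch of the 5-minute example, the code below cuts one broadcast into fixed-length recording units and prepares an empty coding sheet. The 43-minute running time, the function name `segment_bounds`, and the `humor_present` field are assumptions made for illustration only.

```python
def segment_bounds(total_seconds, segment_seconds=300):
    """Split a broadcast into consecutive recording units.

    Returns (start, end) pairs in seconds; the last unit may be shorter.
    """
    return [
        (start, min(start + segment_seconds, total_seconds))
        for start in range(0, total_seconds, segment_seconds)
    ]

# Hypothetical 43-minute episode cut into 5-minute recording units.
units = segment_bounds(43 * 60)

# One presence/absence score per recording unit, to be filled in by a coder.
coding_sheet = [
    {"start": start, "end": end, "humor_present": None}
    for start, end in units
]
```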

Recording units • Single word or symbol • May be too small—large number of data points generated • Theme • Single assertion about some subject • May have overlapping themes • Character • Person or animal categorized rather than words or themes • Sentence or paragraph • May have ambiguous or conflicted evidence of one or more categories of content • Item • Whole book, film, radio program • Difficulty coding into single categories • Physical size measure • Column inches • Number of seconds

Coding categories • The category scheme is the set of dimensions you use to evaluate your recording units and the available options you have for scoring one recording unit on each dimension • For example: • Recording unit: Scene • Dimension: Emotionality of a scene • Scoring options: High/Medium/Low
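One possible way to write such a scheme down is as a small data structure that refuses scores outside the listed options. The names `Dimension`, `EMOTIONALITY`, and `score_unit` are hypothetical; only the dimension and its High/Medium/Low options come from the example above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Dimension:
    """One dimension of the category scheme and its allowed scoring options."""
    name: str
    options: tuple

EMOTIONALITY = Dimension("emotionality", ("High", "Medium", "Low"))

def score_unit(unit_id, dimension, score):
    """Record one score for one recording unit, rejecting unlisted options."""
    if score not in dimension.options:
        raise ValueError(
            f"{score!r} is not an option for {dimension.name}: {dimension.options}"
        )
    return {"unit": unit_id, "dimension": dimension.name, "score": score}

record = score_unit("scene-1", EMOTIONALITY, "High")
```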

Coding categories • The coding categories must be carefully developed so that the data actually generated answer your theoretical questions about the text • I can’t tell you how many times I’ve had to reject manuscripts because the coding scheme was not adequate to answer the theoretical questions posed in the literature review

Coding scheme • Conceptualizing the coding categories • The code book provides the rules for assigning a coding unit to one or another category • It is an actual set of rules for assigning the proper codes (scoring) to each coding unit

Coding rules • What are the rules for determining which category a given recording unit should be placed in? • How do we know whether a given paragraph is pro-Lunsford, neutral, or anti-Lunsford? • This is a crucial part of the coding scheme. A naïve coder who simply applies the rules should get the outcome the theorist/researcher intended.

Good coding categories • Categories should be: • Exhaustive • Mutually exclusive • Derived from a single classification principle • Independent • Adequate to answer the questions asked of the data
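The first two criteria can be checked mechanically on pilot data. The sketch below assumes coders are allowed to tick every category they think applies to a unit; a unit with no tick suggests the scheme is not exhaustive, and a unit with several ticks suggests the categories are not mutually exclusive. The function name, the three stance categories, and the pilot data are hypothetical.

```python
def check_assignments(assignments, categories):
    """Flag recording units that break the exhaustive or mutually exclusive rule.

    assignments maps a unit id to the set of categories a coder judged applicable.
    """
    allowed = set(categories)
    report = {"uncovered": [], "overlapping": [], "unknown_code": []}
    for unit_id, chosen in assignments.items():
        if not chosen:
            report["uncovered"].append(unit_id)     # no category fit: not exhaustive
        elif len(chosen) > 1:
            report["overlapping"].append(unit_id)   # several fit: not mutually exclusive
        if not set(chosen) <= allowed:
            report["unknown_code"].append(unit_id)  # code missing from the code book
    return report

# Hypothetical pilot data for a three-way stance dimension.
CATEGORIES = ("pro", "neutral", "anti")
pilot = {
    "para-1": {"pro"},
    "para-2": set(),
    "para-3": {"pro", "anti"},
    "para-4": {"neutral"},
}
report = check_assignments(pilot, CATEGORIES)
```

Units that land in any of the three lists are candidates for discussion in practice sessions or for a revision of the code book.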

Practice coding • In order to see that coders use the instrument as the researcher intended, the researcher holds practice sessions • Related content, usually not from the actual sample, is coded and the results discussed

Coding reliability • To ensure that the coding scheme is reliable we have to test it • Coders score identical content • The more often different coders produce the same scores for the identical content, the more reliable the coding scheme is • Results are compared using statistical tests for reliability • Cronbach’s alpha; Krippendorff’s alpha • A rule of thumb is that the coding scheme is reliable if alpha is at least .70
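A minimal sketch of Krippendorff’s alpha follows, assuming nominal categories and units that were each scored by at least two coders. It builds the coincidence matrix and computes alpha as 1 minus observed over expected disagreement. The function name and the sample codes are hypothetical; for ordinal or interval codes a different distance function would be needed.

```python
from collections import Counter
from itertools import permutations

def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal codes.

    units is a list of lists; each inner list holds the codes that the
    coders assigned to one recording unit.
    """
    coincidences = Counter()
    for codes in units:
        m = len(codes)
        if m < 2:
            continue  # a unit scored by a single coder cannot be paired
        for a, b in permutations(codes, 2):
            coincidences[(a, b)] += 1 / (m - 1)
    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    # Off-diagonal coincidences are the observed disagreements.
    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    # Disagreements expected by chance, given how often each code was used.
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        raise ValueError("alpha is undefined: no variation in the codes")
    return 1 - observed / expected

# Hypothetical scores from two coders on five paragraphs.
coded = [
    ["pro", "pro"],
    ["anti", "anti"],
    ["neutral", "pro"],
    ["anti", "anti"],
    ["neutral", "neutral"],
]
alpha = krippendorff_alpha_nominal(coded)
reliable = alpha >= 0.70  # the rule of thumb quoted above
```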

Reliability v. Validity v. Precision • The highest levels of reliability are usually found with very simple, extreme codes (true v. false; happy v. sad) but these simple codes often don’t provide the precision we want (clearly true, seemingly true, ambiguous, seemingly false, clearly false) and therefore reduce the value of the results—validity may suffer. • The researcher has to consider the tradeoff

Data analyses • Descriptive statistics are often used • Percentages • Mean • Standard deviation • May compare across texts • To test hypotheses, etc. • Compare findings to some prediction • Relative percentages among categories, between sources on same categories • Correlations among categories, with predictor variables, with outcome variables • E.g., goriness of violence with measures of audience enjoyment
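The descriptive steps listed above can be sketched with the Python standard library. All of the data below are made up for illustration, and the helper names `category_percentages` and `pearson_r` are hypothetical; the correlation is a plain Pearson coefficient, which assumes both measures are numeric.

```python
from collections import Counter
from statistics import mean, stdev

def category_percentages(codes):
    """Share of recording units that fall into each category, in percent."""
    counts = Counter(codes)
    total = len(codes)
    return {category: 100 * count / total for category, count in counts.items()}

def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    mx, my = mean(xs), mean(ys)
    covariation = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    spread_x = sum((x - mx) ** 2 for x in xs)
    spread_y = sum((y - my) ** 2 for y in ys)
    return covariation / (spread_x * spread_y) ** 0.5

# Hypothetical coded data: emotionality per scene, then per-show ratings.
codes = ["High", "Low", "Low", "Medium", "Low", "High", "Low", "Medium"]
goriness = [1, 2, 2, 3, 4, 4, 5, 5]
enjoyment = [2, 1, 3, 3, 4, 5, 4, 5]

percentages = category_percentages(codes)
average_goriness = mean(goriness)
goriness_sd = stdev(goriness)  # sample standard deviation
r = pearson_r(goriness, enjoyment)
```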