Understanding Bayesian Networks in Artificial Intelligence

Explore Bayesian networks, a type of graphical model that models conditional independence relationships between random variables. Learn about random variables, joint probability distributions, and the representation of graphical models for efficient computation in probabilistic models.


Presentation Transcript

Bayesian Networks Tamara Berg CS 590-133 Artificial Intelligence Many slides throughout the course adapted from Svetlana Lazebnik, Dan Klein, Stuart Russell, Andrew Moore, Percy Liang, Luke Zettlemoyer, Rob Pless, Killian Weinberger, Deva Ramanan

Announcements • HW3 will be released tonight • Written questions only (no programming) • Due Tuesday, March 18, 11:59pm

Review: Probability • Random variables, events • Axioms of probability • Atomic events • Joint and marginal probability distributions • Conditional probability distributions • Product rule • Independence and conditional independence • Inference

Bayesian decision making • Suppose the agent has to make decisions about the value of an unobserved query variable X based on the values of an observed evidence variable E • Inference problem: given some evidence E = e, what is P(X | e)? • Learning problem: estimate the parameters of the probabilistic model P(X | E) given training samples {(x1, e1), …, (xn, en)}
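The learning and inference problems above can be illustrated with a minimal sketch. It assumes discrete X and E and estimates P(X | E) by relative frequency; the function name `estimate_conditional` and the weather samples are hypothetical, invented for illustration.

```python
from collections import Counter, defaultdict

def estimate_conditional(samples):
    """Estimate P(X | E) from (x, e) training pairs by relative frequency."""
    pair_counts = Counter(samples)                     # how often each (x, e) occurs
    evidence_counts = Counter(e for _, e in samples)   # how often each e occurs
    table = defaultdict(dict)
    for (x, e), n in pair_counts.items():
        table[e][x] = n / evidence_counts[e]
    return dict(table)

# Hypothetical training samples (x, e): x is the weather, e is the observed sky.
samples = [("rain", "cloudy"), ("rain", "cloudy"), ("dry", "cloudy"),
           ("dry", "clear"), ("dry", "clear"), ("rain", "clear")]

model = estimate_conditional(samples)   # learning: fit P(X | E)
posterior = model["cloudy"]             # inference: P(X | E = cloudy)
```

Counting is only one possible estimator; it has no smoothing, so unseen (x, e) pairs are simply absent from the table.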

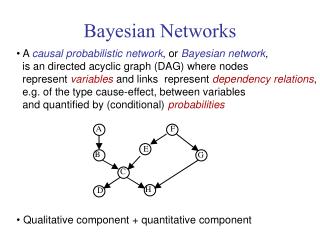

Bayesian networks (BNs) • A type of graphical model • A BN states conditional independence relationships between random variables • Compact specification of full joint distributions

Random Variables • Random variables $X = (X_1, \dots, X_n)$ • Let $x = (x_1, \dots, x_n)$ be a realization of $X$ • A random variable is some aspect of the world about which we (may) have uncertainty. • Random variables can be binary (e.g. {true, false}, {spam, ham}), take on a discrete set of values (e.g. {Spring, Summer, Fall, Winter}), or be continuous (e.g. [0, 1]).

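Joint Probability Distribution • Random variables $X = (X_1, \dots, X_n)$; let $x = (x_1, \dots, x_n)$ be a realization of $X$ • Joint probability distribution: $P(X_1 = x_1, \dots, X_n = x_n)$, also written $P(x_1, \dots, x_n)$ • Gives a real value for all possible assignments.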

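Queries • Joint probability distribution: $P(X_1 = x_1, \dots, X_n = x_n)$, also written $P(x_1, \dots, x_n)$ • Given a joint distribution, we can reason about unobserved variables given observations (evidence): $P(X_q \mid x_{e_1}, \dots, x_{e_k})$, where $X_q$ is the stuff you care about and $x_{e_1}, \dots, x_{e_k}$ is the stuff you already know.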

Representation • One way to represent the joint probability distribution $P(x_1, \dots, x_n)$ for discrete $X_i$ is as an n-dimensional table, each cell containing the probability for a setting of X. • This would have $r^n$ entries if each $X_i$ ranges over $r$ values. • Graphical models!

Representation • Graphical models represent joint probability distributions more economically, using a set of “local” relationships among variables.

Graphical Models Graphical models offer several useful properties: 1. They provide a simple way to visualize the structure of a probabilistic model and can be used to design and motivate new models. 2. Insights into the properties of the model, including conditional independence properties, can be obtained by inspection of the graph. 3. Complex computations, required to perform inference and learning in sophisticated models, can be expressed in terms of graphical manipulations. from Chris Bishop

Main kinds of models • Undirected (also called Markov Random Fields) - links express constraints between variables. • Directed (also called Bayesian Networks) - have a notion of causality -- one can regard an arc from A to B as indicating that A "causes" B.

Syntax • Directed Acyclic Graph (DAG) • Nodes: random variables • Can be assigned (observed) or unassigned (unobserved) • Arcs: interactions • An arrow from one variable to another indicates direct influence • Encode conditional independence • Weather is independent of the other variables • Toothache and Catch are conditionally independent given Cavity • Must form a directed, acyclic graph (Figure: example network with nodes Weather, Cavity, Toothache, Catch)

Example: N independent coin flips • Complete independence: no interactions (Figure: nodes X1, X2, …, Xn with no arcs between them)

Example: Naïve Bayes document model • Random variables: • X: document class • W1, …, Wn: words in the document (Figure: class node X with an arc to each word node W1, W2, …, Wn)
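In this model the joint factorizes as P(X, W1, …, Wn) = P(X) ∏ P(Wi | X). The sketch below shows that factorization and the resulting class posterior. All probabilities, class names, and words are hypothetical values chosen for illustration, and only a few vocabulary entries are listed.

```python
import math

# Hypothetical parameters, invented for illustration only.
prior = {"spam": 0.4, "ham": 0.6}                       # P(X)
word_given_class = {                                    # P(W = word | X), partial vocabulary
    "spam": {"free": 0.30, "meeting": 0.05, "money": 0.25},
    "ham":  {"free": 0.05, "meeting": 0.30, "money": 0.05},
}

def log_joint(doc_class, words):
    """log P(X = doc_class, W1..Wn = words) under the naive Bayes factorization."""
    return math.log(prior[doc_class]) + sum(
        math.log(word_given_class[doc_class][w]) for w in words)

def posterior(words):
    """P(X | words), obtained by normalizing the joint over the classes."""
    joint = {c: math.exp(log_joint(c, words)) for c in prior}
    total = sum(joint.values())
    return {c: p / total for c, p in joint.items()}

post = posterior(["free", "money"])
```

Working in log space is a common design choice here because the product of many small word probabilities underflows for long documents.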

Example: Burglar Alarm • I have a burglar alarm that is sometimes set off by minor earthquakes. My two neighbors, John and Mary, promise to call me at work if they hear the alarm • Example inference task: suppose Mary calls and John doesn’t call. What is the probability of a burglary? • What are the random variables? • Burglary, Earthquake, Alarm, JohnCalls, MaryCalls • What are the direct influence relationships? • A burglar can set the alarm off • An earthquake can set the alarm off • The alarm can cause Mary to call • The alarm can cause John to call

Example: Burglar Alarm What does this mean? What are the model parameters?

Bayes Nets • Directed graph G = (X, E), with nodes X and edges E • Each node is associated with a random variable

Joint Distribution • By the chain rule (using the usual arithmetic ordering): $P(x_1, \dots, x_n) = \prod_{i=1}^{n} P(x_i \mid x_1, \dots, x_{i-1})$

Directed Graphical Models • Directed graph G = (X, E), with nodes X and edges E • Each node is associated with a random variable • Definition of joint probability in a graphical model: $P(x_1, \dots, x_n) = \prod_{i=1}^{n} P(x_i \mid x_{\pi_i})$, where $x_{\pi_i}$ are the parents of $x_i$
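The definition can be sketched as a product of one conditional probability table (CPT) lookup per node. The example reuses the Cavity, Toothache, Catch variables from the syntax slide; the CPT numbers and the dictionary layout are assumptions made for illustration.

```python
from itertools import product

# Parents of each Boolean node (structure: Cavity -> Toothache, Cavity -> Catch).
parents = {"Cavity": (), "Toothache": ("Cavity",), "Catch": ("Cavity",)}

# Hypothetical CPTs: cpt[node][parent values] = P(node = True | parents).
cpt = {
    "Cavity": {(): 0.2},
    "Toothache": {(True,): 0.6, (False,): 0.1},
    "Catch": {(True,): 0.9, (False,): 0.2},
}

def joint_probability(assignment):
    """Product over nodes of P(node value | values of its parents)."""
    p = 1.0
    for var, pa in parents.items():
        p_true = cpt[var][tuple(assignment[q] for q in pa)]
        p *= p_true if assignment[var] else 1.0 - p_true
    return p

# Summing the product over every assignment should give 1.
total = sum(joint_probability(dict(zip(parents, values)))
            for values in product([True, False], repeat=len(parents)))
all_true = joint_probability({"Cavity": True, "Toothache": True, "Catch": True})
```

The check on `total` shows that local conditionals multiplied together define a valid joint distribution without any extra normalization.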

Example Joint Probability:

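Conditional Independence • Independence: $P(x, y) = P(x)\,P(y)$ • Conditional independence: $P(x, y \mid z) = P(x \mid z)\,P(y \mid z)$ • Or, equivalently, $P(x \mid y, z) = P(x \mid z)$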

Conditional Independence • By the chain rule (using the usual arithmetic ordering): $P(x_1, \dots, x_n) = \prod_{i=1}^{n} P(x_i \mid x_1, \dots, x_{i-1})$ • In the joint distribution from the example graph, missing variables in the local conditional probability functions correspond to missing edges in the underlying graph. • Removing an edge into node i eliminates an argument from that node's conditional probability factor.

Semantics • A BN represents a full joint distribution in a compact way. • We only need to specify a conditional probability distribution for each node given its parents: P(X | Parents(X)) (Figure: parent nodes Z1, Z2, …, Zn with arcs into X, annotated P(X | Z1, …, Zn))

Example 0 0 0 0 1 1 1 1 0 0 0 0 1 0 1 1 1 1 0 1 0 0 1 1

Example: Alarm Network (Figure: Burglary → Alarm ← Earthquake; Alarm → JohnCalls; Alarm → MaryCalls)

Size of a Bayes’ Net • How big is a joint distribution over N Boolean variables? $2^N$ • How big is an N-node net if nodes have up to k parents? $O(N \cdot 2^{k+1})$ • Both give you the power to calculate $P(X_1, \dots, X_N)$ • BNs: Huge space savings! • Also easier to elicit local CPTs • Also turns out to be faster to answer queries (coming)
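A quick sketch of the two size formulas for Boolean variables. The function names and the choice N = 30, k = 5 are illustrative assumptions.

```python
def full_joint_size(n):
    """Entries in a full joint table over n Boolean variables."""
    return 2 ** n

def bayes_net_size(n, k):
    """Upper bound on CPT entries: each of n nodes has at most 2**k parent
    configurations, with 2 values stored per configuration."""
    return n * 2 ** (k + 1)

joint_entries = full_joint_size(30)
net_entries = bayes_net_size(30, 5)
```

With these numbers the full table needs over a billion entries while the network needs fewer than two thousand.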

The joint probability distribution • For example, P(j, m, a, ¬b, ¬e) • = P(¬b) P(¬e) P(a | ¬b, ¬e) P(j | a) P(m | a)
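The factorization above can be evaluated numerically once CPTs are fixed. The transcript does not include the CPT values, so the sketch below assumes the numbers from the Russell and Norvig textbook version of this network. It computes the joint entry from the slide and then answers a query by summing the joint over the hidden variables, which is plain enumeration rather than any faster algorithm.

```python
from itertools import product

# Assumed CPT values (Russell & Norvig textbook version; not given in this transcript).
P_B = 0.001                                           # P(b)
P_E = 0.002                                           # P(e)
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}    # P(a | B, E)
P_J = {True: 0.90, False: 0.05}                       # P(j | A)
P_M = {True: 0.70, False: 0.01}                       # P(m | A)

def bern(p_true, value):
    """Probability of a Boolean value given P(value = True)."""
    return p_true if value else 1.0 - p_true

def joint(b, e, a, j, m):
    """P(b) P(e) P(a | b, e) P(j | a) P(m | a)."""
    return (bern(P_B, b) * bern(P_E, e) * bern(P_A[(b, e)], a)
            * bern(P_J[a], j) * bern(P_M[a], m))

# The entry from the slide: P(j, m, a, not b, not e).
p_entry = joint(b=False, e=False, a=True, j=True, m=True)

def posterior_burglary(j, m):
    """P(B = true | j, m), summing the joint over the hidden E and A."""
    weight = {b: sum(joint(b, e, a, j, m)
                     for e, a in product([True, False], repeat=2))
              for b in (True, False)}
    return weight[True] / (weight[True] + weight[False])

# The inference task from the burglar alarm slide: Mary calls, John does not.
p_query = posterior_burglary(j=False, m=True)
```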

Independence in a BN • Important question about a BN: • Are two nodes independent given certain evidence? • If yes, can prove using algebra (tedious in general) • If no, can prove with a counterexample • Example (Figure: chain X → Y → Z): • Question: are X and Z necessarily independent? • Answer: no. Example: low pressure causes rain, which causes traffic. • X can influence Z, Z can influence X (via Y) • Addendum: they could be independent: how?

Independence • Key properties: a) Each node is conditionally independent of its non-descendants given its parents b) A node is conditionally independent of all other nodes in the graph given its Markov blanket (its parents, children, and children’s other parents)
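Property (b) can be made concrete with a small sketch that reads the Markov blanket off the graph. The network is stored as a hypothetical dictionary mapping each node to its list of parents; the alarm network structure is taken from the earlier example.

```python
def markov_blanket(node, parents):
    """Parents, children, and children's other parents of `node`."""
    children = [c for c, pa in parents.items() if node in pa]
    blanket = set(parents[node]) | set(children)
    for child in children:
        blanket |= set(parents[child])   # co-parents of each child
    blanket.discard(node)                # the node is not in its own blanket
    return blanket

alarm_net = {
    "Burglary": [], "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "JohnCalls": ["Alarm"], "MaryCalls": ["Alarm"],
}

mb_burglary = markov_blanket("Burglary", alarm_net)
mb_john = markov_blanket("JohnCalls", alarm_net)
```

Note that Earthquake lands in the blanket of Burglary only because the two share the child Alarm.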

Moral Graphs • Undirected form of a directed acyclic graph, obtained by connecting (“marrying”) the parents of each node and dropping the arc directions
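A minimal sketch of the usual moralization construction: link every pair of parents that share a child, then drop arc directions. The parent-dictionary representation and the use of `frozenset` for undirected edges are choices made here for illustration.

```python
from itertools import combinations

def moralize(parents):
    """Return the undirected edge set of the moral graph of a DAG."""
    edges = set()
    for child, pa in parents.items():
        for p in pa:
            edges.add(frozenset((p, child)))   # original arc, direction dropped
        for p, q in combinations(pa, 2):
            edges.add(frozenset((p, q)))       # "marry" parents of the same child
    return edges

alarm_net = {
    "Burglary": [], "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "JohnCalls": ["Alarm"], "MaryCalls": ["Alarm"],
}

moral_edges = moralize(alarm_net)
```

For the alarm network the only added edge is the one between Burglary and Earthquake, the two parents of Alarm.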

Causal Chains • This configuration is a “causal chain”: X → Y → Z (X: Project due, Y: No office hours, Z: Students panic) • Is Z independent of X given Y? Yes! • Evidence along the chain “blocks” the influence

Common Cause • Another basic configuration: two effects of the same cause, X ← Y → Z (Y: Homework due, X: Full attendance, Z: Students sleepy) • Are X and Z independent? • Are X and Z independent given Y? Yes! • Observing the cause blocks influence between effects.

Common Effect • Last configuration: two causes of one effect (v-structure), X → Y ← Z (X: Raining, Z: Ballgame, Y: Traffic) • Are X and Z independent? • Yes: the ballgame and the rain cause traffic, but they are not correlated • Still need to prove they must be (try it!) • Are X and Z independent given Y? • No: seeing traffic puts the rain and the ballgame in competition as explanation • This is backwards from the other cases • Observing an effect activates influence between possible causes.
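The "competition as explanation" effect can be checked numerically. The sketch below builds the rain, ballgame, traffic network with hypothetical probabilities (all numbers are invented for illustration) and computes the probability of rain under different evidence by enumeration.

```python
from itertools import product

# Hypothetical parameters, invented for illustration only.
P_RAIN, P_GAME = 0.2, 0.1
P_TRAFFIC = {(True, True): 0.95, (True, False): 0.80,
             (False, True): 0.70, (False, False): 0.10}   # P(traffic | rain, game)

def joint(rain, game, traffic):
    """P(rain) P(game) P(traffic | rain, game): two independent causes, one effect."""
    pr = P_RAIN if rain else 1 - P_RAIN
    pg = P_GAME if game else 1 - P_GAME
    pt = P_TRAFFIC[(rain, game)] if traffic else 1 - P_TRAFFIC[(rain, game)]
    return pr * pg * pt

def prob_rain(**evidence):
    """P(rain = True | evidence); evidence keys may be 'game' and 'traffic'."""
    num = den = 0.0
    for rain, game, traffic in product([True, False], repeat=3):
        world = {"rain": rain, "game": game, "traffic": traffic}
        if any(world[k] != v for k, v in evidence.items()):
            continue                     # world contradicts the evidence
        p = joint(rain, game, traffic)
        den += p
        if rain:
            num += p
    return num / den

no_evidence = prob_rain()
given_game = prob_rain(game=True)                     # same as no_evidence
given_traffic = prob_rain(traffic=True)               # traffic raises belief in rain
given_both = prob_rain(traffic=True, game=True)       # the ballgame explains traffic away
```

Without evidence about traffic, learning about the ballgame leaves the belief in rain unchanged; once traffic is observed, the ballgame lowers it.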

The General Case • Any complex example can be analyzed using these three canonical cases (causal chain, common cause, common effect) • General question: in a given BN, are two variables independent (given evidence)? • Solution: analyze the graph

Reachability (D-Separation) • Question: Are X and Y conditionally independent given evidence variables {Z}? • Yes, if X and Y are “separated” by Z • Look for active paths from X to Y • No active paths = independence! • A path is active if each triple is active: • Causal chain A → B → C where B is unobserved (either direction) • Common cause A ← B → C where B is unobserved • Common effect (aka v-structure) A → B ← C where B or one of its descendants is observed • All it takes to block a path is a single inactive segment (Figure: active and inactive triples)
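The triple rules can be turned into a direct, if inefficient, check: enumerate simple undirected paths from X to Y and test every consecutive triple. This is a sketch of the definition on small graphs, not a scalable algorithm; the function names and the parent-dictionary representation are assumptions, and X and Y are assumed to be unobserved.

```python
def descendants(node, children):
    """All nodes reachable from `node` by following arcs forward."""
    seen, stack = set(), [node]
    while stack:
        for c in children[stack.pop()]:
            if c not in seen:
                seen.add(c)
                stack.append(c)
    return seen

def triple_active(a, b, c, parents, children, observed):
    if a in parents[b] and c in parents[b]:        # common effect a -> b <- c
        return b in observed or bool(descendants(b, children) & observed)
    return b not in observed                        # causal chain or common cause

def d_separated(x, y, observed, parents):
    """True if no active path connects x and y given the observed set."""
    observed = set(observed)
    children = {n: [c for c, pa in parents.items() if n in pa] for n in parents}
    neighbours = {n: set(parents[n]) | set(children[n]) for n in parents}

    def active_path_from(path):
        last = path[-1]
        if last == y:
            return True
        for nxt in neighbours[last]:
            if nxt in path:
                continue                            # simple paths only
            if len(path) >= 2 and not triple_active(
                    path[-2], last, nxt, parents, children, observed):
                continue                            # one inactive triple blocks the path
            if active_path_from(path + [nxt]):
                return True
        return False

    return not active_path_from([x])

alarm_net = {
    "Burglary": [], "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "JohnCalls": ["Alarm"], "MaryCalls": ["Alarm"],
}
```

For example, `d_separated("JohnCalls", "MaryCalls", {"Alarm"}, alarm_net)` asks whether observing the alarm blocks the common-cause path between the two callers.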

Bayes Ball • Shade all observed nodes. Place balls at the starting node, let them bounce around according to some rules, and ask if any of the balls reach any of the goal nodes. • We need to know what happens when a ball arrives at a node on its way to the goal node.

Example (Figure: network over nodes R, B, T, T′; answer shown: Yes)

Example (Figure: network over nodes L, R, B, D, T, T′; answers shown: Yes, Yes, Yes)

Constructing Bayesian networks • Choose an ordering of variables X1, …, Xn • For i = 1 to n: • add Xi to the network • select parents from X1, …, Xi-1 such that P(Xi | Parents(Xi)) = P(Xi | X1, …, Xi-1)
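The procedure can be sketched for small Boolean problems where the full joint is available as a table. The code below tries parent sets of increasing size and keeps the first one that satisfies the equality in the last step. The helper names, the tolerance, and the chain-shaped test distribution are all assumptions made for illustration; in practice the independence judgments usually come from domain knowledge rather than a known joint.

```python
from itertools import combinations, product

def marginal(joint, names, fixed):
    """Total probability of all worlds consistent with `fixed` (name -> value)."""
    idx = {n: i for i, n in enumerate(names)}
    return sum(p for world, p in joint.items()
               if all(world[idx[n]] == v for n, v in fixed.items()))

def conditional(joint, names, var, given):
    """P(var = True | given), or None if the context has zero probability."""
    den = marginal(joint, names, given)
    return marginal(joint, names, {**given, var: True}) / den if den > 0 else None

def same_conditional(joint, names, var, preds, cand, tol):
    """Check P(var | preds) == P(var | cand) for every possible context."""
    for values in product([True, False], repeat=len(preds)):
        full = dict(zip(preds, values))
        p_full = conditional(joint, names, var, full)
        if p_full is None:
            continue
        sub = {n: full[n] for n in cand}
        if abs(p_full - conditional(joint, names, var, sub)) > tol:
            return False
    return True

def build_network(order, joint, names, tol=1e-9):
    """For each variable, pick a smallest sufficient parent set among its predecessors."""
    parents = {}
    for i, var in enumerate(order):
        preds = list(order[:i])
        parents[var] = next(
            list(cand)
            for size in range(len(preds) + 1)
            for cand in combinations(preds, size)
            if same_conditional(joint, names, var, preds, cand, tol))
    return parents

# Hypothetical joint generated by a chain X -> Y -> Z.
names = ("X", "Y", "Z")
chain = {}
for x, y, z in product([True, False], repeat=3):
    px = 0.3 if x else 0.7
    py = (0.8 if x else 0.1) if y else (0.2 if x else 0.9)
    pz = (0.9 if y else 0.2) if z else (0.1 if y else 0.8)
    chain[(x, y, z)] = px * py * pz

causal_order = build_network(["X", "Y", "Z"], chain, names)
awkward_order = build_network(["X", "Z", "Y"], chain, names)
```

Comparing the two results shows how an ordering that ignores the causal direction can force extra arcs, which is the point of the M, J, A, B, E example.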

Example • Suppose we choose the ordering M, J, A, B, E • P(J | M) = P(J)? No • P(A | J, M) = P(A)? No • P(A | J, M) = P(A | J)? No • P(A | J, M) = P(A | M)? No • P(B | A, J, M) = P(B)? • P(B | A, J, M) = P(B | A)?