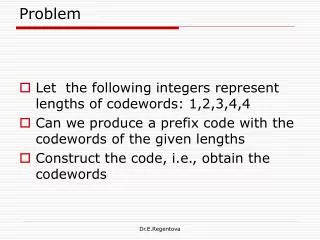

Problem

Problem. A subsequence is a sequence derived from another sequence by deleting some elements without changing the order of the remaining elements. Using code or pseudocode, write an algorithm that determines the longest common subsequence of two strings. Introduction to Algorithms.


Presentation Transcript


Fibonacci numbers • Iterative solution
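As a minimal sketch of the iterative solution, assuming the usual convention F(0) = 0 and F(1) = 1 (the slide does not state one), the function below keeps only the two most recent values. The name `fib_iterative` is hypothetical, chosen for illustration.

```c
#include <stdint.h>

/* Iteratively compute the n-th Fibonacci number, assuming F(0) = 0 and
   F(1) = 1. Only the two most recent values are kept, so the loop needs
   O(n) time and O(1) space. The result fits in 64 bits for n <= 93. */
uint64_t fib_iterative(unsigned n)
{
    uint64_t prev = 0, curr = 1;   /* F(0) and F(1) */

    if (n == 0)
        return prev;

    for (unsigned i = 2; i <= n; i++) {
        uint64_t next = prev + curr;   /* F(i) = F(i-2) + F(i-1) */
        prev = curr;
        curr = next;
    }
    return curr;
}
```

Calling `fib_iterative(10)` returns 55 under this convention.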

Fibonacci numbers (an aside) • Closed form solution exists • Binet’s formula • To calculate any Fibonacci number F_n directly: F_n = (φ^n − ψ^n) / √5, where φ = (1 + √5) / 2 and ψ = (1 − √5) / 2

Pascal’s Triangle • Iterative solution • Begin with 1 on row 0 • Each new row is offset to the left by the width of one number • Construct the elements of rows as follows: • For each new value k, sum the value above and left with the value above and right to find k • If there is no number, substitute zero in its place
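The construction rule above can be sketched as follows. This is one possible arrangement, not necessarily the one the slide had in mind: it builds row n in place in a single array, and the function name `pascal_row` and the caller-supplied buffer are assumptions made for illustration.

```c
/* Fill row[0..n] with row n of Pascal's triangle (row 0 is just 1).
   The caller must supply room for n + 1 values.
   Each pass turns row r-1 into row r in place. Updating from right to
   left means row[k-1] and row[k] still hold the previous row's values
   when they are summed. A missing neighbour counts as zero, so both
   ends of every row stay 1. */
void pascal_row(unsigned n, unsigned long long *row)
{
    row[0] = 1;
    for (unsigned r = 1; r <= n; r++) {
        row[r] = 1;   /* above-left is 1, above-right is missing (0) */
        for (unsigned k = r - 1; k >= 1; k--)
            row[k] = row[k - 1] + row[k];   /* above-left + above-right */
    }
}
```

For n = 4 the buffer ends up holding 1 4 6 4 1.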

Pascal’s Triangle (an aside) • Closed form solution exists • To calculate the value at position k in row n (counting both from 0): C(n, k) = n! / (k! (n − k)!) • Use symmetry, C(n, k) = C(n, n − k), to derive right-hand side of row (or calculate)
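A hedged sketch of the closed form, assuming the entries are the binomial coefficients C(n, k): rather than computing three factorials, which overflow quickly, it multiplies and divides step by step, and it uses the symmetry mentioned above to shorten the loop. The name `binomial` is hypothetical.

```c
/* Entry k of row n of Pascal's triangle, i.e. the binomial coefficient
   C(n, k), with rows and positions counted from 0. */
unsigned long long binomial(unsigned n, unsigned k)
{
    if (k > n)
        return 0;              /* outside the triangle */
    if (k > n - k)
        k = n - k;             /* symmetry: C(n, k) == C(n, n - k) */

    unsigned long long result = 1;
    for (unsigned i = 1; i <= k; i++) {
        /* After this step result == C(n - k + i, i), so the division
           is always exact. */
        result = result * (n - k + i) / i;
    }
    return result;
}
```

For example, `binomial(4, 2)` gives 6, the middle entry of row 4. Intermediate products can still overflow for large n, so this is only suitable for modest inputs.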

Pascal’s Triangle (another aside) • Summing the entries along each shallow diagonal of the triangle gives the Fibonacci numbers: 1 1 2 3 5 8 13 21 34

Calculating prime numbers • Iterative solution • Let P be the set of prime numbers; at start, P = ∅ • For each value k = 2…n • For each value j = 2…k – 1 • If k/j is not an integer for all j, P = P ∪{ k } • Output P • Naïve, slow solution
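The naive procedure above translates almost line for line into C. In this sketch the set P is represented by a caller-supplied array, and the name `naive_primes` is an assumption made for illustration. The inner loop stops at the first divisor found, which does not change the result.

```c
#include <stddef.h>

/* Naive prime generation: k is prime when no j in 2..k-1 divides it.
   Writes the primes up to n into out (which must be large enough) and
   returns how many were found. Roughly O(n^2) time in the worst case. */
size_t naive_primes(unsigned n, unsigned *out)
{
    size_t count = 0;

    for (unsigned k = 2; k <= n; k++) {
        int is_prime = 1;
        for (unsigned j = 2; j < k; j++) {
            if (k % j == 0) {      /* k / j is an integer, so k is composite */
                is_prime = 0;
                break;
            }
        }
        if (is_prime)
            out[count++] = k;      /* P = P ∪ { k } */
    }
    return count;
}
```

With n = 30 this finds ten primes, from 2 up to 29.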

Dynamic programming • May refer to two things • A mathematical optimisation method • An approach to computer programming and algorithm design • A method for solving complex problems using recursion • Problems may be solved by solving smaller parts • Keep addressing smaller parts until each subproblem becomes tractable • Problem must possess both of the following traits • Optimal substructure • Overlapping subproblems

Optimal substructure • A problem has optimal substructure if an optimal solution can be constructed efficiently from optimal solutions of its subproblems • May be solved using a greedy algorithm • Iff it can be proved by induction that this is optimal for all steps • Dynamic programming may be used if subproblems exist • Otherwise a brute-force search is required

Optimal substructure • Optimal substructure • Finding the shortest path between two cities by car • e.g. if the shortest route from Seattle to Los Angeles passes through Portland and Sacramento, the shortest route from Portland to Los Angeles passes through Sacramento • Not optimal substructure • Buying the cheapest ticket from Sydney to New York • Even if a ticket has stops in Dubai and London we cannot conclude that the cheapest ticket will stop in London as the price at which an airline sells multi-flight tickets is not the sum of the prices for which it would sell individual flights on the same trip

Overlapping subproblems • A problem has overlapping subproblems if either of the following is true • The problem can be broken down into subproblems which are reused several times • A recursive algorithm solves the same subproblem over and over instead of generating new subproblems • Examples • Fibonacci numbers • Pascal’s Triangle • …

Examples of dynamic programming • Fibonacci numbers • Pascal’s Triangle • Prime number generation • Dijkstra’s algorithm/A* algorithm • Towers of Hanoi game • Matrix chain multiplication • Beat tracking in music information retrieval software • Neural networks • Word-wrapping in word processors (especially LaTeX) • Transposition and refutation tables in computer chess games

Recursion • Recursion is a powerful tool for solving dynamic programming problems • Not always an optimal solution on its own • Naïve • Wasteful • e.g. to calculate F100 recursively, the same smaller Fibonacci numbers are recalculated many times over • Two standard approaches to improving performance • “Top-down” • “Bottom-up”
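To make the waste concrete, here is a small sketch of the naive recursive Fibonacci function with a call counter attached. The counter `fib_calls` and the name `fib_naive` are hypothetical, added only to make the repeated work visible, and F(0) = 0, F(1) = 1 is assumed.

```c
/* Hypothetical counter, used only to show how much work is repeated. */
static unsigned long fib_calls = 0;

/* Naive recursion: every call below n = 2 spawns two further calls, and
   nothing is remembered between them. */
unsigned long fib_naive(unsigned n)
{
    fib_calls++;
    if (n < 2)
        return n;              /* F(0) = 0, F(1) = 1 */
    return fib_naive(n - 1) + fib_naive(n - 2);
}
```

Computing `fib_naive(20)` returns 6765 but takes 21891 calls, even though only 21 distinct subproblems exist. The call count grows roughly as fast as the Fibonacci numbers themselves, which is why F100 is out of reach for this version.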

“Top-down” approach • In software design • Developing a program by starting with a large concept and adding increasing layers of specialisation • In dynamic programming • A solution which derives directly from the recursive solution • Performance is improved by memoisation • Solutions to subproblems are stored in a data structure • If a subproblem has already been solved, we read its result from the table; otherwise we calculate the result and add it to the table
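A minimal sketch of the top-down approach, applied to Fibonacci numbers. The table `fib_memo`, the limit `FIB_MAX` and the function name `fib_top_down` are assumptions for illustration. The value 0 doubles as the "not yet solved" marker, which works here because F(n) > 0 for every n ≥ 1.

```c
#include <stdint.h>

#define FIB_MAX 93   /* largest n whose Fibonacci number fits in 64 bits */

/* Memo table, zero-initialised: 0 means "not yet computed". */
static uint64_t fib_memo[FIB_MAX + 1];

/* Same recursion as the naive version, but each subproblem is solved
   once. Returns 0 for n > FIB_MAX, which this sketch does not support. */
uint64_t fib_top_down(unsigned n)
{
    if (n < 2)
        return n;                      /* F(0) = 0, F(1) = 1 */
    if (n > FIB_MAX)
        return 0;                      /* out of range for this sketch */
    if (fib_memo[n] != 0)
        return fib_memo[n];            /* already solved: read the table */

    fib_memo[n] = fib_top_down(n - 1) + fib_top_down(n - 2);
    return fib_memo[n];                /* solved now: stored for next time */
}
```

Because every F(k) is calculated at most once, the running time drops from exponential to O(n), at the cost of O(n) memory for the table.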

“Bottom-up” approach • In software design • Developing a program by starting with many small objects and functions and building on them to create more functionality • In dynamic programming • Solving the subproblems first and aggregating them • Performance is improved using stored data • Memoisation may be used

Memoisation • Optimisation technique • Reduces need to repeat function calls, which may be expensive • “Memoised” function stores results of previous calls with a specific set of inputs • Function must be ‘referentially transparent’ • i.e. replacing a call to the function with the value it returns must not change the behaviour of the program (same inputs always give the same result, with no side effects)

Memoisation • Not the same as using a lookup table • Lookup tables are precalculated before use • Memoised tables are filled in transparently as results are required • Memoisation optimises for time over space • Time/space trade-off is common in algorithm design • ‘Computational complexity’ • Complexity in time (how much time is required) • Complexity in space (how much memory is required) • Reducing one often means increasing the other

Computational complexity • Roughly, the quantity of resources required to solve a problem computationally • Resources are whatever is appropriate to the situation • Time • Memory • Logic gates on a circuit board • Number of processors in a supercomputer • e.g. “Big-O” notation describes how a resource requirement, most often time, grows with the size of the input

Computational complexity • Usually cannot reduce all complexity values simultaneously • Software design often requires a trade-off • Primarily between time (runtime) and space (memory requirements) • Memoisation optimises for time over space • Calculations are performed more quickly… • … but memory is required to store previously calculated results

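Longest common subsequence • Assume two strings, S and T • Solution? • Generate all possible subsequences of both sequences; let Q be the set of subsequences common to both • Return longest subsequence in Q • The length of the LCS can also be found by direct recursion on the last characters:

    /* C has no built-in max for ints, so define one. */
    static int max(int a, int b) { return a > b ? a : b; }

    /* Length of the LCS of S[0..m-1] and T[0..n-1]. */
    int lcs(char *S, char *T, int m, int n)
    {
        if (m == 0 || n == 0)
            return 0;
        if (S[m-1] == T[n-1])
            return 1 + lcs(S, T, m-1, n-1);
        else
            return max(lcs(S, T, m, n-1), lcs(S, T, m-1, n));
    }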

Longest common subsequence • Time: O(2^n) in the worst case, i.e. exponential in the length of the input • Why? • Is this an efficient algorithm for this problem? • (The code under discussion is the recursive lcs function shown above)
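One way to approach the "Why?" is to count the calls. The sketch below is the same recursion with a counter attached; `lcs_counted`, `lcs_calls` and `max_int` are hypothetical names introduced for this illustration. The worst case arises when the strings share no characters, because every call then branches twice.

```c
/* Hypothetical counter, used only to measure the work done. */
static unsigned long lcs_calls = 0;

static int max_int(int a, int b) { return a > b ? a : b; }

/* Same recursion as the slide's lcs(), with a call counter added. */
int lcs_counted(const char *S, const char *T, int m, int n)
{
    lcs_calls++;
    if (m == 0 || n == 0)
        return 0;
    if (S[m - 1] == T[n - 1])
        return 1 + lcs_counted(S, T, m - 1, n - 1);
    /* No match: two recursive calls, neither of which remembers
       anything the other has already worked out. */
    return max_int(lcs_counted(S, T, m, n - 1),
                   lcs_counted(S, T, m - 1, n));
}
```

For "ABCD" and "WXYZ" (no characters in common) the result is 0 after 139 calls, although there are only 4 × 4 = 16 distinct non-trivial subproblems. Each extra character roughly multiplies the work again, which is where the exponential bound comes from.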

Longest common subsequence • Can we use dynamic programming to improve performance? • Does the problem meet the requirements? • Does it have optimal substructure? • Does it have overlapping subproblems?

Optimal substructure of LCS • Assume • Input sequences S[0..m-1] and T[0..n-1] with lengths m and n • L(S[0..m-1], T[0..n-1]) is the length of the LCS of S and T • L(S[0..m-1], T[0..n-1]) is defined recursively as follows • If either sequence is empty • L = 0 • If last characters of both sequences match • L(S[0..m-1], T[0..n-1]) = 1 + L(S[0..m-2], T[0..n-2]) • If last characters of both sequences do not match • L(S[0..m-1], T[0..n-1]) = MAX( L(S[0..m-2], T[0..n-1]), L(S[0..m-1], T[0..n-2]) )
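The same recurrence can be written more compactly by indexing on prefix lengths. The shorthand L(i, j), meaning the LCS length of the first i characters of S and the first j characters of T, is an assumption introduced here and does not appear on the slide. The empty-prefix case is the base case used by the recursive code.

```latex
L(i, j) =
\begin{cases}
0 & \text{if } i = 0 \text{ or } j = 0, \\
1 + L(i-1,\, j-1) & \text{if } S[i-1] = T[j-1], \\
\max\bigl(L(i-1,\, j),\; L(i,\, j-1)\bigr) & \text{otherwise.}
\end{cases}
```

The answer to the whole problem is then L(m, n).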

Optimal substructure of LCS • Examples • Last characters match • Consider the input strings “AGGTAB” and “GXTXAYB” • Length of LCS can be written as • L(“AGGTAB”, “GXTXAYB”) = 1 + L(“AGGTA”, “GXTXAY”) • Last characters do not match • Consider the input strings “ABCDGH” and “AEDFHR” • Length of LCS can be written as • L(“ABCDGH”, “AEDFHR”) = MAX ( L(“ABCDG”, “AEDFHR”), L(“ABCDGH”, “AEDFH”) )

Overlapping subproblems in LCS • Consider the original code • Many of the same problems being solved repeatedly • Subproblems overlap • Performance can be improved by memoisation • Partial recursion tree for lcs("AXYT", "AYZX"), in which lcs("AXY", "AYZ") is solved twice:

                             lcs("AXYT", "AYZX")
                            /                   \
              lcs("AXY", "AYZX")             lcs("AXYT", "AYZ")
               /            \                  /            \
    lcs("AX", "AYZX")  lcs("AXY", "AYZ")  lcs("AXY", "AYZ")  lcs("AXYT", "AY")
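A hedged sketch of how memoisation might be applied to the recursive code. The fixed-size table, the limit `LCS_MAX` and the names `lcs_rec` and `lcs_memoised` are assumptions made for illustration, not part of the original slides. A table entry of -1 means "not yet solved".

```c
#include <string.h>

#define LCS_MAX 64   /* hypothetical limit on string length for this sketch */

/* lcs_memo[m][n] caches the LCS length of S[0..m-1] and T[0..n-1]. */
static int lcs_memo[LCS_MAX + 1][LCS_MAX + 1];

static int lcs_rec(const char *S, const char *T, int m, int n)
{
    if (m == 0 || n == 0)
        return 0;
    if (lcs_memo[m][n] != -1)
        return lcs_memo[m][n];         /* solved before: read the table */

    int result;
    if (S[m - 1] == T[n - 1]) {
        result = 1 + lcs_rec(S, T, m - 1, n - 1);
    } else {
        int a = lcs_rec(S, T, m, n - 1);
        int b = lcs_rec(S, T, m - 1, n);
        result = a > b ? a : b;
    }
    lcs_memo[m][n] = result;           /* store for later calls */
    return result;
}

/* Returns the LCS length, or -1 if either string exceeds LCS_MAX. */
int lcs_memoised(const char *S, const char *T)
{
    size_t m = strlen(S), n = strlen(T);
    if (m > LCS_MAX || n > LCS_MAX)
        return -1;
    /* Setting every byte to 0xFF makes each int -1 on two's-complement
       machines, marking all entries as unsolved. */
    memset(lcs_memo, -1, sizeof lcs_memo);
    return lcs_rec(S, T, (int)m, (int)n);
}
```

With the table in place, the repeated lcs("AXY", "AYZ") subproblem in the tree is calculated once and read back the second time. `lcs_memoised("AXYT", "AYZX")` gives 2.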

Example of LCS of two strings • New runtime O(n ⋅ m) • Much less than previously: O(2^n) • But increased space requirement, O(n ⋅ m), to store working data
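Since the original problem asks for the subsequence itself rather than only its length, the following bottom-up sketch fills an (m + 1) × (n + 1) table and then walks back through it to recover one LCS. It is one possible arrangement under stated assumptions: the name `lcs_string`, the flat array layout and the tie-breaking rule in the walk-back are all choices made for this illustration.

```c
#include <stdlib.h>
#include <string.h>

/* Returns a newly allocated string holding one LCS of S and T, or NULL
   if memory cannot be allocated. The caller must free the result. */
char *lcs_string(const char *S, const char *T)
{
    size_t m = strlen(S), n = strlen(T);
    size_t cols = n + 1;

    /* L[i * cols + j] is the LCS length of S[0..i-1] and T[0..j-1].
       calloc zeroes row 0 and column 0, the empty-prefix base cases. */
    size_t *L = calloc((m + 1) * cols, sizeof *L);
    if (L == NULL)
        return NULL;

    for (size_t i = 1; i <= m; i++) {
        for (size_t j = 1; j <= n; j++) {
            if (S[i - 1] == T[j - 1]) {
                L[i * cols + j] = L[(i - 1) * cols + (j - 1)] + 1;
            } else {
                size_t up = L[(i - 1) * cols + j];
                size_t left = L[i * cols + (j - 1)];
                L[i * cols + j] = up > left ? up : left;
            }
        }
    }

    size_t len = L[m * cols + n];
    char *out = malloc(len + 1);
    if (out != NULL) {
        out[len] = '\0';
        /* Walk back from the bottom-right corner, filling out[] from
           the end: a match contributes a character, otherwise move
           towards the neighbour holding the larger value. */
        size_t i = m, j = n, k = len;
        while (i > 0 && j > 0) {
            if (S[i - 1] == T[j - 1]) {
                out[--k] = S[i - 1];
                i--;
                j--;
            } else if (L[(i - 1) * cols + j] >= L[i * cols + (j - 1)]) {
                i--;
            } else {
                j--;
            }
        }
    }
    free(L);
    return out;
}
```

For "AGGTAB" and "GXTXAYB" this returns "GTAB". When several subsequences share the maximum length, which one is returned depends on the tie-breaking rule. Both time and space are O(n ⋅ m).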
