RENSSELAER

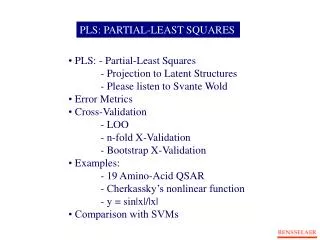



PLS: PARTIAL-LEAST SQUARES
• PLS:
  - Partial-Least Squares
  - Projection to Latent Structures
  - Please listen to Svante Wold
• Error Metrics
• Cross-Validation
  - LOO (leave-one-out)
  - n-fold X-Validation
  - Bootstrap X-Validation
• Examples:
  - 19 Amino-Acid QSAR
  - Cherkassky’s nonlinear function
  - y = sin|x|/|x|
• Comparison with SVMs

IMPORTANT EQUATIONS FOR PLS
• t’s are scores or latent variables
• p’s are loadings
• $w_1$ is an eigenvector of $X^T Y Y^T X$
• $t_1$ is an eigenvector of $X X^T Y Y^T$
• w’s and t’s of deflations:
  - w’s are orthonormal
  - t’s are orthogonal
  - p’s are not orthogonal
  - p’s are orthogonal to earlier w’s
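The two eigenvector statements can be checked numerically for the single-response case. The sketch below is only an illustration on synthetic random data (the data, the seed, and all variable names are assumptions, not taken from the slides). It uses the fact that with one response y the matrix $X^T y y^T X$ has rank one, so its dominant eigenvector is $X^T y$ scaled to unit length.

```python
import numpy as np

rng = np.random.default_rng(0)      # synthetic data, purely illustrative
X = rng.normal(size=(20, 5))        # N = 20 objects, K = 5 variables
y = rng.normal(size=(20, 1))        # one response variable

# Candidate first weight vector: X^T y scaled to unit length.
w1 = (X.T @ y).ravel()
w1 /= np.linalg.norm(w1)
t1 = X @ w1                         # first score vector

M_w = X.T @ y @ y.T @ X             # K x K matrix  X^T y y^T X
M_t = X @ X.T @ y @ y.T             # N x N matrix  X X^T y y^T

# Eigenvalue estimates via Rayleigh-type quotients.
lam_w = w1 @ M_w @ w1               # w1 has unit norm
lam_t = (t1 @ M_t @ t1) / (t1 @ t1)

w1_is_eigvec = np.allclose(M_w @ w1, lam_w * w1)
t1_is_eigvec = np.allclose(M_t @ t1, lam_t * t1)
print(w1_is_eigvec, t1_is_eigvec)
```

Both matrices share the same nonzero eigenvalue, which here equals the squared norm of $X^T y$.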

NIPALS ALGORITHM FOR PLS (with just one response variable y)
Do for h latent variables:
• Start for a PLS component
• Calculate the score t
• Calculate c’
• Calculate the loading p
• Store t in T, store p in P, store w in W
• Deflate the data matrix and the response variable
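The slide's own formulas did not survive in this transcript, so the following is a minimal sketch of the NIPALS steps using the commonly published PLS1 formulas, which may differ in normalization from what was shown. The function name `nipals_pls1`, the synthetic data, and the omission of mean-centering are all assumptions made for illustration.

```python
import numpy as np

def nipals_pls1(X, y, h):
    """NIPALS PLS for one response (textbook PLS1 form, assumed here).

    Returns scores T, loadings P, weights W, inner coefficients c,
    and regression coefficients b such that y_hat = X @ b.
    """
    X = np.array(X, dtype=float)          # copies: caller's data is not deflated
    y = np.array(y, dtype=float).ravel()
    n, k = X.shape
    T = np.zeros((n, h))
    P = np.zeros((k, h))
    W = np.zeros((k, h))
    c = np.zeros(h)
    for a in range(h):                    # do for h latent variables
        w = X.T @ y                       # start: weight vector, unit length
        w /= np.linalg.norm(w)
        t = X @ w                         # score
        tt = t @ t
        c[a] = (y @ t) / tt               # inner regression coefficient
        p = X.T @ t / tt                  # loading
        T[:, a], P[:, a], W[:, a] = t, p, w
        X = X - np.outer(t, p)            # deflate the data matrix
        y = y - c[a] * t                  # deflate the response
    b = W @ np.linalg.solve(P.T @ W, c)   # coefficients in the original X space
    return T, P, W, c, b

# Illustrative synthetic data (not from the slides).
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 4))
y = X @ np.array([1.0, -2.0, 0.5, 0.0]) + 0.1 * rng.normal(size=40)
T, P, W, c, b = nipals_pls1(X, y, h=4)
```

With this normalization the w's come out orthonormal, the t's orthogonal, and each p orthogonal to the earlier w's, matching the properties listed on the previous slide. In practice X and y are usually mean-centered first; that step is left out to keep the sketch short.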

The geometric representation of PLSR. The X-matrix can be represented as N points in the K-dimensional space where each column of X (x_k) defines one coordinate axis. The PLSR model defines an A-dimensional hyperplane, which in turn is defined by one line (one direction) per component. The direction coefficients of these lines are p_ak. The coordinates of each object i, when its data (row i in X) are projected down onto this plane, are t_ia. These positions are related to the values of Y.

QSAR DATA SET EXAMPLE: 19 Amino Acids
From Svante Wold, Michael Sjöström, Lennart Eriksson, "PLS-regression: a basic tool of chemometrics," Chemometrics and Intelligent Laboratory Systems, Vol. 58, pp. 109-130 (2001)

INXIGHT VISUALIZATION PLOT

1 latent variable, no aromatic AAs

KERNEL PLS HIGHLIGHTS
• Invented by Rosipal and Trejo (Journal of Machine Learning Research, December 2001)
• They first altered the linear PLS by dealing with eigenvectors of $XX^T$
• They also made the NIPALS PLS formulation resemble PCA more
• Now the nonlinear correlation matrix $K(XX^T)$ rather than $XX^T$ is used
• The nonlinear correlation matrix contains nonlinear similarities of datapoints rather than the linear inner products in $XX^T$
• An example is the Gaussian kernel similarity measure

Kernel PLS
• The trick is a different normalization
• Now t’s rather than w’s are normalized
• $t_1$ is an eigenvector of $K(XX^T) Y Y^T$
• w’s and t’s of deflations of $XX^T$

Linear PLS
• $w_1$ is an eigenvector of $X^T Y Y^T X$
• $t_1$ is an eigenvector of $X X^T Y Y^T$
• w’s and t’s of deflations:
  - w’s are orthonormal
  - t’s are orthogonal
  - p’s are not orthogonal
  - p’s are orthogonal to earlier w’s
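The Gaussian kernel formula itself is missing from this transcript. A minimal sketch follows, assuming the common form $k(x_i, x_j) = \exp(-\lVert x_i - x_j \rVert^2 / (2\sigma^2))$; the $2\sigma^2$ scaling is an assumption and other conventions exist. The function name `gaussian_kernel` and the synthetic data are hypothetical, and kernel centering is omitted. The sketch also checks the Kernel PLS statement that, for one response y, the unit-norm first score is an eigenvector of $K y y^T$.

```python
import numpy as np

def gaussian_kernel(A, B, sigma):
    """Gaussian similarities between rows of A and rows of B.

    Assumed form: exp(-||a - b||^2 / (2 * sigma^2)).
    """
    sq = (A ** 2).sum(axis=1)[:, None] + (B ** 2).sum(axis=1)[None, :] - 2.0 * A @ B.T
    sq = np.maximum(sq, 0.0)              # guard against tiny negative round-off
    return np.exp(-sq / (2.0 * sigma ** 2))

# Illustrative synthetic data (not from the slides).
rng = np.random.default_rng(2)
X = rng.normal(size=(15, 3))
y = rng.normal(size=(15, 1))
K = gaussian_kernel(X, X, sigma=1.3)      # replaces the linear X X^T

# With one response, K y y^T has rank one, so the first score is K y
# normalized to unit length (t's are normalized rather than w's).
t1 = (K @ y).ravel()
t1 /= np.linalg.norm(t1)

M = K @ y @ y.T
lam = t1 @ M @ t1                         # eigenvalue estimate (t1 has unit norm)
t1_is_eigvec = np.allclose(M @ t1, lam * t1)
print(t1_is_eigvec)
```

Every entry of K lies in (0, 1], with ones on the diagonal, so K plays the role of a similarity matrix between datapoints.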

1 latent variable, Gaussian Kernel PLS (sigma = 1.3), with aromatic AAs

CHERKASSKY’S NONLINEAR BENCHMARK DATA
• Generate 500 datapoints (400 training; 100 testing) for:
Cherkas.bat

Bootstrap Validation: Kernel PLS, 8 latent variables, Gaussian kernel with sigma = 1

True test set for Kernel PLS: 8 latent variables, Gaussian kernel with sigma = 1

y = sin|x|/|x|
• Generate 500 datapoints (100 training; 500 testing) for:
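A minimal sketch of generating such a dataset is shown below. The slide does not state the sampling range or distribution of x, so the uniform range [-10, 10], the seeds, and the helper name `make_sinc_data` are assumptions; the split sizes follow the numbers given in parentheses on the slide. Because sin|x|/|x| equals sin(x)/x, NumPy's normalized `np.sinc` can be used, which also handles x = 0.

```python
import numpy as np

def make_sinc_data(n, low=-10.0, high=10.0, seed=0):
    """Draw n points x uniformly on [low, high] and return (x, sin|x|/|x|).

    The range and the uniform sampling are assumptions, not from the slides.
    """
    rng = np.random.default_rng(seed)
    x = rng.uniform(low, high, size=n)
    # np.sinc(z) = sin(pi z) / (pi z), so np.sinc(x / pi) = sin(x) / x,
    # with the limit value 1 at x = 0.
    y = np.sinc(x / np.pi)
    return x, y

x_train, y_train = make_sinc_data(100, seed=0)   # training set
x_test, y_test = make_sinc_data(500, seed=1)     # test set
```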

Comparison of Kernel-PLS with PLS: 4 latent variables, sigma = 0.08 (panels: PLS, Kernel-PLS)