Performance Modeling of Pipelined Linear Algebra Architectures on FPGAs



Performance Modeling of Pipelined Linear Algebra Architectures on FPGAs
Sam Skalicky, Sonia Lopez, Marcin Lukowiak, James Letendre, and Matthew Ryan
High Performance Research Group

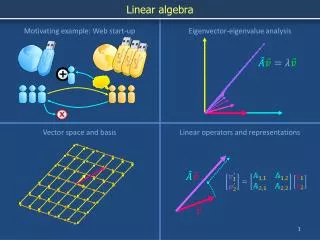

Outline
• Our motivation to design an FPGA model
• Assumptions made
• Architecture decomposed into equations
• Model equations
• Model verification
• Performance experiment
• Conclusion

Motivation
Compute-intensive problems requiring high performance
• Data assimilation applications
• Linear algebra computations: dot product, MV-multiply, MM-multiply, matrix inverse, and matrix decomposition
• Execution time on a general-purpose processor (GPP) makes use impractical

Motivation
Compute-intensive problems requiring high performance
Data assimilation application: medical diagnosis
• NTEPI: Non-invasive Transmural Electrophysiological Imaging
• Kalman filter: ECG and model
• 120 electrodes
• Solves the inverse propagation problem

Heterogeneous Systems
Taking full advantage of the compute capabilities of CPU, GPU, and FPGA
Design decisions:
• Which processor architecture to use?
• Which implementation should be used?
• How to handle different data sizes?

Simulation
Design space exploration necessitates using processor models
• CPU [1,2]
• GPU [3,4]
• FPGA [5,6]
• Higher-level analysis prior to implementation
• Model error rates up to 15%
• Computation-specific model

Research Goals
Modeling support for linear algebra (LA) computations on FPGA
• Linear algebra computations: dot product, MV-multiply, MM-multiply, matrix inverse, and matrix decomposition
• Pipelined FPGA architectures
• More parallelism = fewer cycles
• Model inputs: architecture (latencies), clock speed, memory bandwidth, data size, pipeline size

Assumptions
We assume an FPGA system containing:
• Memory controller
• Read/write elements that avoid bank conflicts and buffer up accesses (data stored is sequential)
• Start/finish control logic
• Data ordering or precomputing

Assumptions
We assume the pipeline structure is one of two types:
• Multiple pipeline: a single pipeline, replicated
• Scalable pipeline: having data dependencies or feedback
Pipeline elements:
• Stages
• Functional units: add, subtract, multiply, etc.

Matrix-Vector Multiply
Computation is characterized as:
• Pipeline: MAC (multiply-accumulate)
• Vector value stored
• Data sizes: A is m×n, x is n×1
• Used n times
• Performs m iterations to calculate a single result


Model: Compute Cycles
The core calculation in the model
• Combine the Uses () and Iterations ()
• With the number of Usable Pipelines ()

[Figure: 4×4 matrix-vector example on a single pipeline (Pipeline0). Inputs are the vector values x0 to x3 and the matrix elements A00 to A33; outputs are y0 to y3.]

[Figure: the same 4×4 matrix-vector example spread across several pipelines, producing outputs y0 to y3.]
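The equation itself did not survive in this transcript. As a rough sketch only, here is one plausible way Uses, Iterations, and the number of usable pipelines could combine. The function name `compute_cycles`, its parameters, and the rounding rule (whole uses are distributed across pipelines) are assumptions for illustration, not the authors' equation.

```python
import math


def compute_cycles(uses: int, iterations: int, usable_pipelines: int) -> int:
    """Assumed form: uses are split across pipelines, rounded up to whole
    rounds, and every round runs the full number of iterations."""
    if usable_pipelines < 1:
        raise ValueError("at least one usable pipeline is required")
    # Number of sequential rounds each pipeline must process (assumption).
    rounds = math.ceil(uses / usable_pipelines)
    return rounds * iterations
```

Under this assumption, the 4×4 example takes 16 compute cycles on one pipeline and 4 on four pipelines, which matches the deck's "more parallelism = fewer cycles".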

Model: Operating Frequency
To calculate the frequency at which the design will operate
• Memory bandwidth ratio: available to required
• Frequency the design operates at ()
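The slide's formula is missing from the transcript. A minimal sketch of the idea, assuming the design's clock is scaled down by the available-to-required bandwidth ratio whenever memory cannot keep the pipelines fed, might look like this. The name `operating_frequency`, its parameters, and the cap at the maximum clock are hypothetical.

```python
def operating_frequency(f_max_hz: float,
                        available_bw: float,
                        required_bw: float) -> float:
    """Assumed form: scale the maximum clock by the bandwidth ratio,
    never exceeding the maximum clock itself."""
    if required_bw <= 0:
        raise ValueError("required bandwidth must be positive")
    # Ratio of available to required memory bandwidth (same units for both).
    ratio = available_bw / required_bw
    return f_max_hz * min(1.0, ratio)
```

With half of the required bandwidth available, this sketch halves the operating frequency; with surplus bandwidth, the design runs at its maximum clock.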

Model: Total Execution Time
Combines all model equations into the final result
• Total cycles required for computation ()
  • Compute cycles
  • Latency (pipeline fill/empty)
• Total execution time
  • Control logic latency
  • Memory access latency
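The terms listed above could be combined roughly as follows. This is a sketch under stated assumptions: the pipeline fill/empty latency is taken in cycles, the control logic and memory access latencies in seconds, and all terms are simply added. The function names and units are hypothetical; the authors' exact equations are not in the transcript.

```python
def total_cycles(compute_cycles: int, pipeline_latency_cycles: int) -> int:
    """Compute cycles plus pipeline fill/empty latency (both in cycles)."""
    return compute_cycles + pipeline_latency_cycles


def total_execution_time(compute_cycles: int,
                         pipeline_latency_cycles: int,
                         frequency_hz: float,
                         control_latency_s: float = 0.0,
                         memory_latency_s: float = 0.0) -> float:
    """Assumed form: convert total cycles to seconds at the operating
    frequency, then add the fixed control and memory latencies."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    cycles = total_cycles(compute_cycles, pipeline_latency_cycles)
    return cycles / frequency_hz + control_latency_s + memory_latency_s
```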

Verification
Hardware platform specifications used:
• Data sizes: 5 to 8000
• FPGA devices: Virtex 6 and Virtex 7
• Memory bandwidth: 800 Mbps and 1.6 Gbps
• Single- and double-precision floating point

Verification
Computations validated the accuracy of the model
• Linear regression: coefficient of determination (R²) of 1
[Figure panels: Dot Product, MV Multiply, MM Multiply, Cholesky, M Inverse]

Verification: MM Multiply
Computation validated the accuracy of the model
• Linear regression: coefficient of determination (R²) of 1
[Figure panel: MM Multiply]
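For readers unfamiliar with the metric, the coefficient of determination of a linear regression can be computed as sketched below. The slides do not say which quantities were regressed; a natural assumption is measured execution time against model-predicted time. The helper names `linear_fit` and `r_squared` are illustrative.

```python
from typing import Sequence, Tuple


def linear_fit(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(xs: Sequence[float], ys: Sequence[float]) -> float:
    """R^2 = 1 - SS_res / SS_tot for the least-squares line through the data."""
    slope, intercept = linear_fit(xs, ys)
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot
```

An R² of 1 means the fitted line accounts for all of the variation in the data.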

Performance Results: Cholesky
Control flow dependent
• Execution times for N×N matrices
• Pipeline sizes to achieve maximum performance
[Figure annotation: size of pipeline, smaller vs. larger, trading fewer or more cycles against a slower or faster rate]

Performance Results: M Inverse
Hardware resource dependent
• Execution times for N×N matrices
• Pipeline sizes to achieve maximum performance

Performance Results: MM Multiply
Memory bandwidth dependent
• Execution times for N×N matrices
• Pipeline sizes to achieve maximum performance

Conclusion
• The model incorporates elements from the architecture through the Uses, Iterations, and latencies
• We showed that the size of the pipeline is a critical parameter for achieving maximum performance
• The model allows quick exploration of the design space without any implementation or simulation
• The best accelerator can be configured to meet the needs of the application

References
[1] A. Snavely, L. Carrington, N. Wolter, J. Labarta, R. Badia, and A. Purkayastha, “A framework for performance modeling and prediction,” in Supercomputing, ACM/IEEE 2002 Conference, Nov. 2002, p. 21.
[2] M. Laurenzano, M. Tikir, L. Carrington, and A. Snavely, “PEBIL: Efficient static binary instrumentation for Linux,” in Performance Analysis of Systems Software (ISPASS), 2010 IEEE International Symposium on, Mar. 2010, pp. 175–183.
[3] S. Hong and H. Kim, “An analytical model for a GPU architecture with memory-level and thread-level parallelism awareness,” in Proceedings of the 36th Annual International Symposium on Computer Architecture, ser. ISCA ’09. New York, NY, USA: ACM, 2009, pp. 152–163.
[4] J. Sim, A. Dasgupta, H. Kim, and R. Vuduc, “A performance analysis framework for identifying potential benefits in GPGPU applications,” in Proceedings of the 17th ACM SIGPLAN Symposium on Principles and Practice of Parallel Programming, ser. PPoPP ’12. New York, NY, USA: ACM, 2012, pp. 11–22.
[5] B. Holland, K. Nagarajan, and A. George, “RAT: RC Amenability Test for Rapid Performance Prediction,” in ACM Transactions on Reconfigurable Technology and Systems (TRETS), vol. 1, no. 4, 2009.
[6] B. Holland, A. George, H. Lam, and M. Smith, “An analytical model for multilevel performance prediction of Multi-FPGA systems,” in ACM Transactions on Reconfigurable Technology and Systems (TRETS), vol. 4, no. 3, 2011.

BACKUP SLIDES

Performance Experiment
To compute the time required given a matrix size
• Based on the number of pipelines
• Incorporating design factors:
  • Data precision (single or double)
  • Memory bandwidth
  • Available logic resources
  • Clock frequency
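An experiment of this kind can be pictured as a sweep over pipeline counts. The sketch below is illustrative only: it assumes an N×N workload whose compute cycles shrink with more pipelines, a clock that is throttled when the pipelines together demand more memory bandwidth than is available, and a fixed fill latency. All names, parameters, and formulas here are hypothetical stand-ins, not the authors' model.

```python
import math
from typing import Dict


def sweep_pipelines(n: int, max_pipelines: int, f_max_hz: float,
                    available_bw: float, bw_per_pipeline: float,
                    fill_latency_cycles: int) -> Dict[int, float]:
    """Estimated execution time (seconds) for each pipeline count."""
    times = {}
    for p in range(1, max_pipelines + 1):
        # Assumed cycle count: n uses split over p pipelines, n iterations each.
        cycles = math.ceil(n / p) * n + fill_latency_cycles
        # Assumed clock: throttled by the available-to-required bandwidth ratio.
        freq = f_max_hz * min(1.0, available_bw / (p * bw_per_pipeline))
        times[p] = cycles / freq
    return times


def best_pipeline_count(times: Dict[int, float]) -> int:
    """Pipeline count with the smallest estimated execution time."""
    return min(times, key=times.get)
```

In this sketch, adding pipelines helps only until memory bandwidth becomes the limit, after which the lower clock cancels the savings in cycles.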

[Figure: animation frames of the 4×4 matrix-vector example. Matrix elements A00 to A33 and vector values x0 to x3 are consumed step by step as outputs y0 to y3 are produced.]