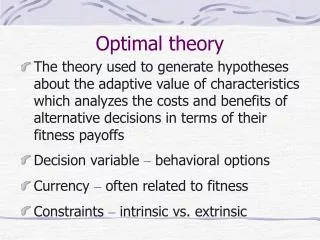

Optimal Experimental Design Theory



Motivation
• To better understand the existing theory and learn from tools that exist in other fields
• To further develop the framework
• Better handle other design criteria
• Improve the ability to search for the optimal design
• Would like to increase the “survival rate” of the start points; threads often die when they encounter singular matrices

References
• Texts
  • Atkinson, Donev, and Tobias. “Optimum Experimental Designs, with SAS.”
  • Pukelsheim. “Optimal Design of Experiments.”
• Papers and Guides
  • Albrecht et al. “Findings of the Joint Dark Energy Mission Figure of Merit Science Working Group.” 2008.
  • Coe. “Fisher Matrices and Confidence Ellipses: A Quick Start Guide and Software.” 2010.
• Related Software
  • Fisher.py, Python – simple manipulation of Fisher matrices and plotting of ellipses
  • DETFast (Albrecht et al. 2006), Java – compare expectations of cosmological constraints from different experiments with your choice of priors with a few clicks
  • Fisher4Cast (Bassett et al. 2009), Matlab – most sophisticated

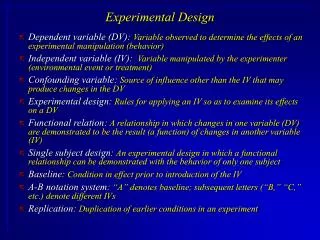

Confidence Ellipses
• The inverse of the Fisher matrix is the covariance matrix
• Typically, we’ve only been interested in the diagonal entries of the inverse, i.e. the variance of each parameter
• However, the Cramér-Rao lower bound (CRLB) states that F⁻¹ bounds the covariance in a matrix sense, not just the variances
• We may be interested in a more complete picture
• Can visualize it as an ellipse (or ellipsoid)
• The 1-standard-deviation contour of the multivariate Gaussian distribution

Confidence Ellipses
[Figure: confidence ellipse annotated with λ1 and λ2]
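As a rough illustration of the ellipse picture, the sketch below computes the semi-axes and orientation of the 1-standard-deviation contour from a 2×2 Fisher matrix. The function name `confidence_ellipse` and the example matrix are hypothetical, and the closed-form 2×2 inverse and eigenvalues are used only to keep the example dependency-free; it is not the code behind the slides.

```python
import math

def confidence_ellipse(F):
    """Semi-axes and orientation of the 1-sigma ellipse for a 2x2 Fisher matrix.

    F is a nested list [[a, b], [b, d]] (symmetric, positive definite).
    Returns (semi_major, semi_minor, angle), with angle in radians measured
    from the first parameter's axis to the major axis.
    """
    (a, b), (_, d) = F
    det = a * d - b * b
    if a <= 0 or det <= 0:
        raise ValueError("F must be symmetric positive definite")
    # Covariance bound C = F^-1 (closed form for a 2x2 matrix).
    cxx, cxy, cyy = d / det, -b / det, a / det
    # Eigenvalues of the symmetric 2x2 matrix C.
    mean = 0.5 * (cxx + cyy)
    radius = math.hypot(0.5 * (cxx - cyy), cxy)
    lam_major, lam_minor = mean + radius, max(mean - radius, 0.0)
    # Direction of the eigenvector belonging to lam_major.
    angle = 0.5 * math.atan2(2.0 * cxy, cxx - cyy)
    # Semi-axis lengths are the square roots of the eigenvalues of C.
    return math.sqrt(lam_major), math.sqrt(lam_minor), angle

# Hypothetical example: parameter 0 is measured more precisely than parameter 1.
major, minor, angle = confidence_ellipse([[4, 0], [0, 1]])
```

Note that in two dimensions this 1-standard-deviation contour encloses only about 39% of the probability, so plotted ellipses are often scaled up to reach a chosen confidence level.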

Fisher Matrix Operations
• Knowledge about the prior variance of a parameter can be incorporated by adding 1/σ² to the corresponding diagonal entry of F
• e.g. removing B0 from F implies we know its value exactly (either by assumption or from a B0 map)
• Instead, if we know B0 only to within some variance σ², we can use that
• Adding data sets: information from multiple experiments can be combined just by adding their F matrices
• For example, we can consider the T1 estimate for a combined protocol using many mapping methods
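The three operations on this slide can be sketched in a few lines. This is a minimal illustration on nested lists; the helper names `add_prior`, `fix_parameter`, and `combine` are assumptions made for the example, not functions from any of the packages listed in the references.

```python
def add_prior(F, index, sigma):
    """Return a copy of F with a Gaussian prior of standard deviation sigma
    on parameter `index`, i.e. 1/sigma^2 added to that diagonal entry."""
    G = [row[:] for row in F]
    G[index][index] += 1.0 / sigma ** 2
    return G

def fix_parameter(F, index):
    """Treat parameter `index` as known exactly by dropping its row and column."""
    return [
        [v for j, v in enumerate(row) if j != index]
        for i, row in enumerate(F)
        if i != index
    ]

def combine(F1, F2):
    """Combine two independent experiments by adding their Fisher matrices."""
    return [[x + y for x, y in zip(r1, r2)] for r1, r2 in zip(F1, F2)]

# Hypothetical 2-parameter Fisher matrix, ordered as (T1, B0).
F = [[2.0, 1.0], [1.0, 3.0]]
F_prior = add_prior(F, index=1, sigma=0.5)   # B0 known to within sigma = 0.5
F_fixed = fix_parameter(F, index=1)          # B0 known exactly
F_both = combine(F, F)                       # same experiment run twice
```

Fixing a parameter is the limit of an infinitely tight prior: as σ shrinks, the bound on the remaining parameters approaches the one obtained by dropping the row and column.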

Fisher Matrix Operations
• In fact, our formulation F = JᵀJ can be reduced to this
• JᵀJ is the sum of dyads jjᵀ, one for each collected image
• where j is the vector of ∂f_i/∂θ, the sensitivity of the image to changes in each tissue parameter
• So we are just adding the information matrices associated with each image we collect
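The equivalence between JᵀJ and the sum of per-image dyads can be checked numerically. The sketch below assumes unit noise variance for every image and uses a made-up 3-image, 2-parameter Jacobian; the function names are hypothetical.

```python
def outer(j):
    """Dyad j j^T for a sensitivity vector j."""
    return [[a * b for b in j] for a in j]

def fisher_from_dyads(J):
    """Accumulate F as the sum of dyads, one per row (image) of J."""
    p = len(J[0])
    F = [[0.0] * p for _ in range(p)]
    for j in J:
        D = outer(j)
        for r in range(p):
            for c in range(p):
                F[r][c] += D[r][c]
    return F

def jtj(J):
    """Direct computation of J^T J."""
    p = len(J[0])
    return [[sum(row[r] * row[c] for row in J) for c in range(p)] for r in range(p)]

# Hypothetical Jacobian: each row holds d f_i / d theta for one image.
J = [[1.0, 2.0], [3.0, 4.0], [0.5, -1.0]]
F_dyads = fisher_from_dyads(J)
F_direct = jtj(J)
```

If the images have different noise variances, each dyad would be weighted by 1/σ_i² before summing, which is the same as scaling row i of J by 1/σ_i.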

VOPs?
• An emerging challenge in optimizing experiments for a range of values is computation time
• Fine sampling of, say, the B0 axis can slow the search
• The problem grows dramatically if we increase the dimensionality, e.g. finding the protocol that best estimates a range of T1 and T2 and is robust to B0 and B1 effects
• Multiple tissues give many confidence ellipses, which we know can be bounded more succinctly by virtual observation points (VOPs)
• However, there is a key difference from SAR-optimized pulse design, where SAR = bᵀQb and we solve for b
• In optimal experimental design, we know b (determined by the signal equation at the tissue we’re optimizing for) but want to find Q
• But we don’t have free control over Q, i.e. F
• F is restricted to the Jacobians of the pulse sequence we’re applying
• We cannot use a fixed set of VOPs to summarize our Fs over a whole range of B0 values, because the Fs change as we evaluate different protocols

VOPs on Each Image?
• One workaround is to instead consider a set of potential images to collect
• For example, we grid the space of flip angle, phase cycle, TR, etc. and ask which choice of these is optimal to acquire and robust to B0
• We can then get the VOPs for each image, which gives a smaller set of matrices that bounds the information given by that image
• Then we find which sum of VOPs gives the smallest confidence ellipse or CRLB
• This may still be expensive given the size of the grid, and the VOPs themselves require an eigenvalue decomposition for every matrix
• i.e. every point in the grid in all dimensions

Optimal Design Theory
• General Equivalence Theorem
• A fundamental result that describes the conditions that must be met if a design is optimal with respect to some function of F
• Provides methods for the construction and checking of designs
• However, this applies when the “support points,” i.e. the collected images, are known and we are finding the NEX/noise variance at which to acquire each
• Continuous and exact designs
• For single-response models (1 output), at most p(p+1)/2 experiments are needed to represent an F of size p×p
• We don’t need more than that many images
• There can exist protocols with more images that are equally optimal, but for technician convenience it’s better to choose the one with fewer series
• For mcDESPOT, 7 parameters give at most 28 images; we collect 27
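The p(p+1)/2 bound is the number of independent entries in a symmetric p×p matrix, and the mcDESPOT figure quoted above follows directly. A tiny sketch, with a hypothetical helper name:

```python
def max_support_points(p):
    """Upper bound on the number of support points (images) needed to
    represent a symmetric p x p information matrix: p(p+1)/2."""
    return p * (p + 1) // 2

print(max_support_points(7))  # 28, matching the mcDESPOT count on the slide
```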

Improving Stability
• Previously, to maintain constant scan time, we literally enforced an equality constraint on the sum of the noise variances (“NEX”)
• One practical change, which is more compatible with a Newton solver, is to transform the variables
• Solve for z, which can take any real value and is no longer constrained; the constraint restricted the solvers available to us and probably made the problem harder
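The transcript does not preserve the actual change of variables, so the sketch below uses a softmax mapping purely as an assumed stand-in: it shows how an unconstrained vector z can be turned into positive allocations whose sum is fixed by construction. The function name `softmax_allocation` and the `total` budget are hypothetical.

```python
import math

def softmax_allocation(z, total=1.0):
    """Map unconstrained reals z to positive weights that sum to `total`.

    Assumed illustration only: w_i = total * exp(z_i) / sum_k exp(z_k).
    The equality constraint on the sum holds for every z, so an
    unconstrained solver can search over z directly.
    """
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [total * v / s for v in e]

# Any real-valued z gives a valid allocation of the fixed budget.
w = softmax_allocation([-3.0, 0.5, 10.0], total=4.0)
```

One design consequence of a mapping like this is that the optimizer never steps outside the feasible set, at the cost of a more nonlinear objective in z.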

Improving Stability
• F can be regularized as F + εI, where ε is a small constant
• Atkinson et al. note that in designs whose objective is based on a subset of the parameters, as in our case, a singular F can often appear
• With the regularization, the inversion will always succeed
• From before, the interpretation is that we have some tiny but constant prior information on all the variables
• Therefore, choosing the best design with this prior should effectively be the same as choosing it without
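The effect of the F + εI regularization can be seen on a deliberately singular 2×2 example. This is a minimal sketch; the helper names, the example matrix, and the value of ε are assumptions chosen for illustration.

```python
def inverse_2x2(F):
    """Closed-form inverse of a 2x2 matrix; raises if the matrix is singular."""
    (a, b), (c, d) = F
    det = a * d - b * c
    if det == 0:
        raise ZeroDivisionError("singular matrix")
    return [[d / det, -b / det], [-c / det, a / det]]

def regularize(F, eps=1e-6):
    """Return F + eps * I, i.e. a tiny constant prior on every parameter."""
    return [
        [v + (eps if i == j else 0.0) for j, v in enumerate(row)]
        for i, row in enumerate(F)
    ]

# Hypothetical singular Fisher matrix: the two parameters are
# perfectly degenerate, so the plain inverse does not exist.
F_singular = [[1.0, 1.0], [1.0, 1.0]]
C_bound = inverse_2x2(regularize(F_singular))  # succeeds after regularization
```

Because a Fisher matrix is positive semidefinite, every eigenvalue of F + εI is at least ε, which is why the regularized matrix is always invertible in exact arithmetic. The resulting bounds along degenerate directions are of order 1/ε, so they flag the degeneracy rather than hide it.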

Criteria of Optimality
• A-optimality – minimize tr(F⁻¹), the total variance
• D-optimality – maximize (log) det(F), equivalently minimize the area of the ellipse
• This is a very popular objective, though perhaps less common in MR papers
• E-optimality – minimize the largest eigenvalue of F⁻¹
• Expect a more circular ellipse, I think
• Linear optimality – this is what we’ve been doing with CoV
• General:
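For a concrete feel of how the A, D, and E criteria differ, the sketch below evaluates all three on a 2×2 Fisher matrix using closed-form expressions. The function name `design_criteria` and the example matrix are hypothetical; linear optimality and the general form are left out because the slide does not spell them out.

```python
import math

def design_criteria(F):
    """A-, D-, and E-criterion values for a symmetric positive definite 2x2 F.

    A: tr(F^-1)                      (to be minimized)
    D: log det(F)                    (to be maximized)
    E: largest eigenvalue of F^-1    (to be minimized)
    """
    (a, b), (_, d) = F
    det = a * d - b * b
    if a <= 0 or det <= 0:
        raise ValueError("F must be symmetric positive definite")
    trace = a + d
    # Smallest eigenvalue of F; its reciprocal is the largest eigenvalue of F^-1.
    lam_min = 0.5 * trace - math.hypot(0.5 * (a - d), b)
    return {
        "A": trace / det,       # tr(F^-1) = tr(F) / det(F) for a 2x2 matrix
        "D": math.log(det),
        "E": 1.0 / lam_min,
    }

# Hypothetical example matrix.
crit = design_criteria([[4.0, 0.0], [0.0, 1.0]])
```

For this example the values are A = 1.25, D = log 4, and E = 1: the E-criterion is set entirely by the worst-constrained direction, while A sums the variances of both parameters.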

Compound Design
• This is the term given to problems that seek to optimize over many Fs
• For example, finding the protocol that gives the best average precision in T1 over a given range
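One simple way to write such an objective is a weighted average of the CRLB variance of the parameter of interest across the Fisher matrices evaluated over the range. The sketch below is an assumed form for illustration, restricted to 2×2 matrices; the function names, the example matrices, and the uniform weighting are hypothetical.

```python
def crlb_variance(F, index):
    """CRLB variance of parameter `index`: the diagonal entry of F^-1 (2x2 case)."""
    (a, b), (_, d) = F
    det = a * d - b * b
    if det <= 0:
        raise ValueError("F must be positive definite")
    return (d / det, a / det)[index]

def compound_objective(fishers, index=0, weights=None):
    """Weighted average of the CRLB variance over several Fisher matrices,
    e.g. one F per T1 value in the range of interest."""
    if weights is None:
        weights = [1.0 / len(fishers)] * len(fishers)
    return sum(w * crlb_variance(F, index) for w, F in zip(weights, fishers))

# Hypothetical Fisher matrices for one protocol at two tissue values.
fishers = [[[4.0, 0.0], [0.0, 1.0]], [[2.0, 0.0], [0.0, 1.0]]]
avg_var = compound_objective(fishers, index=0)  # (0.25 + 0.5) / 2 = 0.375
```

A protocol search would evaluate this objective for each candidate protocol and keep the one with the smallest value; non-uniform weights can emphasize the tissue values that matter most.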