Regression Analysis


Regression Analysis CHEE209 Module 6 J. McLellan

Outline
• assessing systematic relationships
• types of models
• least squares estimation - assumptions
• fitting a straight line to data
• least squares parameter estimates
• graphical diagnostics
• quantitative diagnostics
• multiple linear regression
• least squares parameter estimates
• diagnostics
• precision of parameter estimates, predicted responses

The Scenario
We have been given a data set consisting of measurements of a number of variables PLUS
• background information about the “process”
• objectives for the investigation
• information about how the experimentation was conducted
  • e.g., shift, operating region, product line, ...

Assessing Systematic Relationships
Is there a systematic relationship? Two approaches:
• graphical
• quantitative
Other points -
• what is the nature of the relationship?
  • linear in the “independent variables”
  • nonlinear in the “independent variables”
• from engineering/scientific judgement - should there be a relationship?

Assessing Systematic Relationships
Graphical Methods
• scatterplots (x-y diagrams)
  • plot values of one variable against another
  • look for evidence of a trend
  • look for nature of trend - linear, quadratic, exponential, other nonlinearity?
• surface plots
  • plot one variable against values of two other variables
  • look for evidence of a trend - surface
  • look for nature of trend - linear, nonlinear?
• casement plots
  • a “matrix”, or table, of scatterplots

Graphical Methods for Analyzing Data
Visualizing relationships between variables
Techniques
• scatterplots
• scatterplot matrices
  • also referred to as “casement plots”

Scatterplots
… are also referred to as “x-y diagrams”
• plot values of one variable against another
• look for systematic trend in data
• nature of trend
  • linear?
  • exponential?
  • quadratic?
• degree of scatter - does spread increase/decrease over range?
  • indication that variance isn’t constant over range of data

Scatterplots - Example
• tooth discoloration data - discoloration vs. fluoride
[Figure: scatterplot of discoloration vs. fluoride. Trend - possibly nonlinear?]

Scatterplot - Example
• tooth discoloration data - discoloration vs. brushing
[Figure: scatterplot of discoloration vs. brushing. Significant trend? - doesn’t appear to be present]

Scatterplot - Example
• tooth discoloration data - discoloration vs. brushing
[Figure: scatterplot of discoloration vs. brushing. Variance appears to decrease as # of brushings increases]

Scatterplot matrices
… are a table of scatterplots for a set of variables
Look for -
• systematic trend between “independent” variable and dependent variables - to be described by estimated model
• systematic trend between supposedly independent variables - indicates that these quantities are correlated
  • correlation can negatively influence model estimation results
  • not independent information
• scatterplot matrices can be generated automatically with statistical software, manually using spreadsheets

Scatterplot Matrices - tooth data
[Figure: scatterplot matrix for the tooth discoloration data]

Assessing Systematic Relationships
Quantitative Methods
• correlation
  • formal def’n plus sample statistic (“Pearson’s r”)
• covariance
  • formal def’n plus sample statistic
These provide a quantitative measure of systematic LINEAR relationships

Covariance
Formal Definition
• given two random variables X and Y, the covariance is
$$\mathrm{Cov}(X, Y) = E\{(X - \mu_X)(Y - \mu_Y)\}$$
• E{ } - expected value, with $\mu_X = E\{X\}$ and $\mu_Y = E\{Y\}$
• sign of the covariance indicates the sign of the slope of the systematic linear relationship
  • positive value --> positive slope
  • negative value --> negative slope
• issue - covariance is SCALE DEPENDENT

Covariance
• motivation for covariance as a measure of systematic linear relationship
• look at pairs of departures about the mean of X, Y
[Figure: two scatterplots of Y vs. X with the mean of X, Y marked; one shows a strong linear relationship with negative slope, the other a strong linear relationship with positive slope]

Correlation
• is the “dimensionless” covariance
  • divide covariance by standard dev’ns of X, Y
• formal definition
$$\rho_{XY} = \frac{\mathrm{Cov}(X, Y)}{\sigma_X \sigma_Y}$$
• properties
  • dimensionless
  • range: $-1 \le \rho_{XY} \le 1$
Note - the correlation gives NO information about the actual numerical value of the slope.

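Estimating Covariance, Correlation
… from process data (with N pairs of observations)
Sample Covariance
$$s_{XY} = \frac{1}{N-1} \sum_{i=1}^{N} (x_i - \bar{x})(y_i - \bar{y})$$
Sample Correlation
$$r = \frac{s_{XY}}{s_X s_Y}$$
where $\bar{x}$, $\bar{y}$ are the sample means and $s_X$, $s_Y$ are the sample standard deviations.
These quantities are statistics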

Example - Solder Thickness
Objective - study the effect of temperature on solder thickness
Data - in pairs

| Solder Temperature (°C) | Solder Thickness (microns) |
|---|---|
| 245 | 171.6 |
| 215 | 201.1 |
| 218 | 213.2 |
| 265 | 153.3 |
| 251 | 178.9 |
| 213 | 226.6 |
| 234 | 190.3 |
| 257 | 171 |
| 244 | 197.5 |
| 225 | 209.8 |

Example - Solder Thickness
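The sample covariance and correlation for the ten solder observations can be sketched in a few lines of plain Python. This is only an illustration: the function names are hypothetical, the N - 1 divisor is assumed for the sample covariance, and the millimetre rescaling at the end is added here to show scale dependence.

```python
import math

# Solder data as listed in the example: temperature (deg C), thickness (microns)
temperature = [245, 215, 218, 265, 251, 213, 234, 257, 244, 225]
thickness = [171.6, 201.1, 213.2, 153.3, 178.9, 226.6, 190.3, 171.0, 197.5, 209.8]


def sample_covariance(x, y):
    """Sample covariance of paired data, using the N - 1 divisor (assumed)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    return sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)


def sample_correlation(x, y):
    """Pearson's r: sample covariance divided by both sample standard deviations."""
    s_x = math.sqrt(sample_covariance(x, x))
    s_y = math.sqrt(sample_covariance(y, y))
    return sample_covariance(x, y) / (s_x * s_y)


s_xy = sample_covariance(temperature, thickness)
r = sample_correlation(temperature, thickness)
print(f"sample covariance  = {s_xy:.1f}")
print(f"sample correlation = {r:.3f}")

# Scale dependence: expressing thickness in mm changes the covariance but not r.
thickness_mm = [t / 1000.0 for t in thickness]
s_xy_mm = sample_covariance(temperature, thickness_mm)
r_mm = sample_correlation(temperature, thickness_mm)
print(f"covariance with thickness in mm  = {s_xy_mm:.4f}")
print(f"correlation with thickness in mm = {r_mm:.3f}")
```

The negative sign of both statistics matches the downward trend of thickness with temperature, and only the correlation is unaffected by the change of units.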


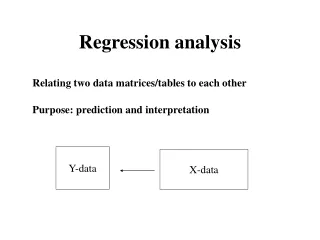

Empirical Modeling - Terminology
• response
  • “dependent” variable - responds to changes in other variables
  • the response is the characteristic of interest which we are trying to predict
• explanatory variable
  • “independent” variable, regressor variable, input, factor
  • these are the quantities that we believe have an influence on the response
• parameter
  • coefficients in the model that describe how the regressors influence the response

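Models
When we are estimating a model from data, we consider the following form:
$$Y = f(\mathbf{x}, \boldsymbol{\theta}) + \varepsilon$$
where $Y$ is the response, $\mathbf{x}$ the explanatory variables, $\boldsymbol{\theta}$ the parameters, and $\varepsilon$ the “random error”.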

The Random Error Term
• is included to reflect fact that measured data contain variability
  • successive measurements under the same conditions (values of the explanatory variables) are likely to be slightly different
• this is the stochastic component
• the functional form describes the deterministic component
• random error is not necessarily the result of mistakes in experimental procedures - reflects inherent variability
• “noise”

Types of Models
• linear/nonlinear in the parameters
• linear/nonlinear in the explanatory variables
• number of response variables
  • single response (standard regression)
  • multi-response (or “multivariate” models)
From the perspective of statistical model-building, the key point is whether the model is linear or nonlinear in the PARAMETERS.

Linear Regression Models
• linear in the parameters
• can be nonlinear in the regressors
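As a hedged illustration (these particular model forms are assumed examples, not taken from the slides), both of the following count as linear regression models. The parameters $\beta_0$, $\beta_1$, $\beta_2$ enter linearly in each, even though the second is quadratic in the regressor $x$:

$$Y = \beta_0 + \beta_1 x + \varepsilon$$

$$Y = \beta_0 + \beta_1 x + \beta_2 x^2 + \varepsilon$$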

Nonlinear Regression Models
• nonlinear in the parameters
• e.g., Arrhenius rate expression
$$k = k_0 \exp\left(-\frac{E}{RT}\right)$$
  • nonlinear in $E$
  • linear in $k_0$ (if $E$ is fixed)


Fitting a Straight Line to Data
Consider the solder data -
Goal - predict solder thickness as a function of temperature
The trend appears to be quite linear --> try fitting a straight line model to this data
• Y - thickness
• X - temperature

Estimating a Model
• what is our measure for prediction?
  • examine prediction error = measured - predicted value
  • square the prediction error -- closer link to “distance”, and prevents cancellation by positive, negative values
• Least Squares Estimation

Assumptions for Least Squares Estimation
Values of explanatory variables are known EXACTLY
• random error is strictly in the response variable
• practically - a random component will almost always be present in the explanatory variables as well
• we assume that this component has a substantially smaller effect on the response than the random component in the response

Assumptions for Least Squares Estimation
The form of the equation provides an adequate representation for the data
• can test adequacy of model as a diagnostic
Variance of random error is CONSTANT over range of data collected
• e.g., variance of random fluctuations in thickness measurements at high temperatures is the same as variance at low temperatures

Assumptions for Least Squares Estimation
The random fluctuations in each measurement are statistically independent from those of other measurements
• at same experimental conditions
• at other experimental conditions
• implies that random component has no “memory”
• no correlation between measurements
Random error term is normally distributed
• typical assumption
• not essential for least squares estimation
• important when determining confidence intervals, conducting hypothesis tests

Least Squares Estimation - graphically
least squares - minimize sum of squared prediction errors
[Figure: response (solder thickness) vs. T, showing the data points (o), the deterministic “true” relationship, and the prediction error (“residual”)]

More Notation and Terminology
Random error is “independent, identically distributed” (I.I.D.) -- can say that it is IID Normal
Capitals - Y - denotes random variable
- except in case of explanatory variable - capital used to denote formal def’n
Lower case - y, x - denotes measured values of variables
Model: $Y = \beta_0 + \beta_1 X + \varepsilon$
Measurement: $y_i = \beta_0 + \beta_1 x_i + \varepsilon_i$

More Notation and Terminology
Estimate - denoted by “hat”
• examples - estimates of response, parameter: $\hat{y}$, $\hat{\beta}_0$
Residual - difference between measured and predicted response
$$e_i = y_i - \hat{y}_i$$

Least Squares Estimation
Find the parameter values that minimize the sum of squares of the residuals over the data set:
$$\min_{\beta_0, \beta_1} \sum_{i=1}^{N} (y_i - \beta_0 - \beta_1 x_i)^2$$
Solution
• solve conditions for stationary point (“normal equations”)
  • derivatives with respect to parameters = 0
• obtain analytical expressions for the least squares parameter estimates
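For a straight-line model, the stationary-point conditions can be written out explicitly. The notation $S(\beta_0, \beta_1)$ for the sum of squared residuals is assumed here for illustration. Setting both partial derivatives to zero gives

$$\frac{\partial S}{\partial \beta_0} = -2 \sum_{i=1}^{N} (y_i - \beta_0 - \beta_1 x_i) = 0$$

$$\frac{\partial S}{\partial \beta_1} = -2 \sum_{i=1}^{N} x_i (y_i - \beta_0 - \beta_1 x_i) = 0$$

which rearrange to the two normal equations, linear in the unknown parameters:

$$N \beta_0 + \beta_1 \sum_{i=1}^{N} x_i = \sum_{i=1}^{N} y_i$$

$$\beta_0 \sum_{i=1}^{N} x_i + \beta_1 \sum_{i=1}^{N} x_i^2 = \sum_{i=1}^{N} x_i y_i$$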

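Least Squares Parameter Estimates
$$\hat{\beta}_1 = \frac{\sum_{i=1}^{N} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{N} (x_i - \bar{x})^2}, \qquad \hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}$$
Note that the parameter estimates are functions of BOTH the explanatory variable values and the measured response values --> functions of “noisy data”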
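A minimal sketch of the straight-line estimates, applied to the solder data listed earlier. It uses the standard closed form (slope = sum of cross-deviations over sum of squared x-deviations, intercept = mean of y minus slope times mean of x). The function name `fit_line` is hypothetical.

```python
# Solder data as listed in the example: temperature (deg C), thickness (microns)
temperature = [245, 215, 218, 265, 251, 213, 234, 257, 244, 225]
thickness = [171.6, 201.1, 213.2, 153.3, 178.9, 226.6, 190.3, 171.0, 197.5, 209.8]


def fit_line(x, y):
    """Return (intercept, slope) of the least squares straight line y = b0 + b1 * x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Sums of cross-deviations and squared deviations about the means
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


b0, b1 = fit_line(temperature, thickness)
print(f"intercept = {b0:.1f} microns")
print(f"slope     = {b1:.3f} microns per deg C")
```

Both estimates depend on the measured thicknesses, so repeating the experiment would give slightly different values. This is the sense in which the estimates are functions of noisy data.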

Diagnostics - Graphical
Basic Principle - extract as much trend as possible from the data
Residuals should have no remaining trend -
• with respect to the explanatory variables
• with respect to the data sequence number
• with respect to other possible explanatory variables (“secondary variables”)
• with respect to predicted values

Graphical Diagnostics
Residuals vs. Predicted Response Values
- even scatter over range of prediction
- no discernible pattern
- roughly half the residuals are positive, half negative
[Figure: residual e_i vs. predicted response - DESIRED RESIDUAL PROFILE]

Graphical Diagnostics
Residuals vs. Predicted Response Values
[Figure: residual e_i vs. predicted response - RESIDUAL PROFILE WITH OUTLIERS; outlier lies outside main body of residuals]

Graphical Diagnostics
Residuals vs. Predicted Response Values
[Figure: residual e_i vs. predicted response - NON-CONSTANT VARIANCE; variance of the residuals appears to increase with higher predictions]

Graphical Diagnostics
Residuals vs. Explanatory Variables
• ideal - no systematic trend present in plot
• inadequate model - evidence of trend present
[Figure: residual e_i vs. x; left over quadratic trend - need quadratic term in model]

Graphical Diagnostics
Residuals vs. Explanatory Variables Not in Model
• ideal - no systematic trend present in plot
• inadequate model - evidence of trend present
[Figure: residual e_i vs. w; systematic trend not accounted for in model - include a linear term in “w”]

Graphical Diagnostics
Residuals vs. Order of Data Collection
[Figure: residual e_i vs. t; failure to account for time trend in data]
[Figure: residual e_i vs. t; successive random noise components are correlated - consider more complex model - time series model for random component?]
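Before plotting, the residual checks described above can be sketched numerically. The example below is an assumption-laden illustration: it refits a straight line to the solder data listed earlier, then tabulates residuals against predicted values and counts their signs. All variable names are illustrative.

```python
# Solder data as listed in the example: temperature (deg C), thickness (microns)
temperature = [245, 215, 218, 265, 251, 213, 234, 257, 244, 225]
thickness = [171.6, 201.1, 213.2, 153.3, 178.9, 226.6, 190.3, 171.0, 197.5, 209.8]

n = len(temperature)
x_bar = sum(temperature) / n
y_bar = sum(thickness) / n

# Least squares straight-line fit
s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(temperature, thickness))
s_xx = sum((x - x_bar) ** 2 for x in temperature)
slope = s_xy / s_xx
intercept = y_bar - slope * x_bar

# Residual = measured response - predicted response
predicted = [intercept + slope * x for x in temperature]
residuals = [y - y_hat for y, y_hat in zip(thickness, predicted)]

# Tabulate residuals against predicted values (the data for a residual plot)
print("predicted  residual")
for y_hat, e in sorted(zip(predicted, residuals)):
    print(f"{y_hat:9.1f}  {e:8.2f}")

# Roughly half the residuals should be positive and half negative
n_positive = sum(1 for e in residuals if e > 0)
n_negative = sum(1 for e in residuals if e < 0)
print(f"positive: {n_positive}, negative: {n_negative}")

# With an intercept in the model, least squares forces the residuals to sum to zero
print(f"sum of residuals: {sum(residuals):.2e}")
```

Because least squares with an intercept forces the residuals to sum to zero and to be uncorrelated with the fitted regressor, the plots are mainly useful for spotting curvature, outliers, and changes in spread rather than a leftover straight-line trend.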

Quantitative Diagnostics - Ratio Tests
Is the variance of the residuals significant?
• relative to a benchmark
• indication of extent of unmodeled trend
Benchmark
• variance of inherent variation in process
• provided by variance of replicate runs if possible
  • replicate runs - repeated runs at the same conditions which provide indication of inherent variation
  • can conduct replicate runs at several sets of conditions and compare variances - are they constant over the experimental region?

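Quantitative Diagnostics - Ratio Tests
Residual Variance Ratio
$$\frac{s^2_{\text{residuals}}}{s^2_{\text{inherent}}} = \frac{MSE}{s^2_{\text{inherent}}}$$
Mean Squared Error of Residuals (Var. of Residuals):
$$MSE = \frac{\sum_{i=1}^{N} e_i^2}{N - 2}$$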

Quantitative Diagnostics - Ratio Tests
Is the ratio significant?
- compare to the F-distribution
Why?
• ratio is the ratio of sums of squared normal r.v.’s
• sums of squared independent normal r.v.’s have a chi-squared distribution
• ratios of independent chi-squared r.v.’s, each divided by its degrees of freedom, have an F-distribution
Degrees of freedom
• number of statistically independent pieces of information used to calculate quantities
• degrees of freedom of MSE is N-2, where N is number of data points
• degrees of freedom for inherent variance is M-1, where M is number of data points used to estimate inherent variance

Quantitative Diagnostics - Ratio Tests
Interpretation of Ratio
• if significant, then model fit is not adequate as the residual variation is large relative to the inherent variation
• “still some signal to be accounted for”
Example - Solder Thickness
• previous data - variance is 102.2 (24 degrees of freedom)
• residual variance (MSE)

Quantitative Diagnostics - Ratio Tests
The ratio is 1.32. Compare to the upper 5% point of the F-distribution, 2.36.
[Figure: F_{n1,n2} density with the observed ratio 1.32 and the fence at 2.36 marked; 5% of values occur outside this fence (area of tail is 0.05)]
The residual variance is NOT statistically significant, and no evidence of inadequacy is detected.
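The comparison against the F-distribution can be sketched with SciPy. The ratio 1.32 and the 24 degrees of freedom come from the slides. The 8 numerator degrees of freedom are an assumption here (N - 2 with the N = 10 solder observations), as is the 5% significance level read from the tail area.

```python
from scipy import stats

ratio = 1.32       # residual variance / inherent variance, as quoted on the slide
df_residual = 8    # assumed: N - 2 with N = 10 solder observations
df_inherent = 24   # degrees of freedom of the inherent-variance estimate
alpha = 0.05       # tail area beyond the fence

# Upper 5% point of the F-distribution with (8, 24) degrees of freedom
f_crit = stats.f.ppf(1 - alpha, df_residual, df_inherent)

# Probability of a ratio at least this large if the model is adequate
p_value = stats.f.sf(ratio, df_residual, df_inherent)

significant = ratio > f_crit
print(f"critical value = {f_crit:.2f}")
print(f"p-value        = {p_value:.2f}")
print("evidence of inadequacy" if significant else "no evidence of inadequacy detected")
```

Under these assumptions the critical value agrees with the fence of 2.36 shown on the slide, and the observed ratio falls well inside it.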

Quantitative Diagnostics - Ratio Tests
Mean Square Regression Ratio - is the variance described by the model significant relative to an indication of the inherent variation?
Variance described by model: