Regression Analysis

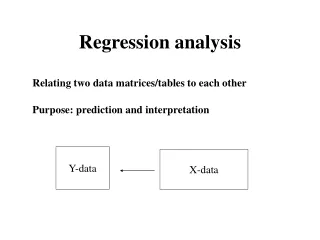

Presentation Transcript

Regression Analysis
• What is regression?
• Best-fit line
• Least squares

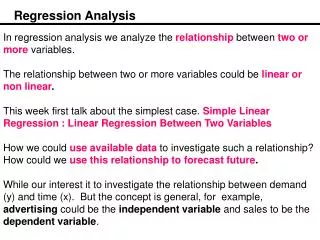

What is regression?
• The study of how one variable behaves across the compartments (groups) induced by another variable.
• Using a regression line or equation, we can predict scores on the dependent variable from scores on the independent variable. The independent and dependent variables are also known by other names, for example predictor and criterion, or explanatory and response variables.

Compartments
• Non-cross-classified (each variable taken separately): Age; Education
• Cross-classified (every combination of the two variables): Elder educated; Elder uneducated; Younger educated; Younger uneducated
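A minimal Python sketch of the difference between the two kinds of compartments, assuming a small set of hypothetical toy records (the scores and variable names are invented for illustration only). It computes the mean of a dependent variable Y within each compartment, first for age and education separately, then for every age-by-education combination.

```python
from itertools import product
from statistics import mean

# Hypothetical toy records: (age group, education, score on a dependent variable Y).
records = [
    ("elder", "educated", 70), ("elder", "uneducated", 50),
    ("younger", "educated", 80), ("younger", "uneducated", 60),
    ("elder", "educated", 66), ("younger", "uneducated", 58),
]
AGES = ("elder", "younger")
EDUCATION = ("educated", "uneducated")

# Non-cross-classified: each variable induces its own compartments on its own.
by_age = {g: mean(y for age, _, y in records if age == g) for g in AGES}
by_edu = {e: mean(y for _, edu, y in records if edu == e) for e in EDUCATION}

# Cross-classified: every (age, education) combination is a separate compartment.
cross = {
    (g, e): [y for age, edu, y in records if age == g and edu == e]
    for g, e in product(AGES, EDUCATION)
}
cross_means = {key: mean(ys) for key, ys in cross.items() if ys}

print(by_age)       # mean Y in each age compartment
print(by_edu)       # mean Y in each education compartment
print(cross_means)  # mean Y in each of the four cross-classified compartments
```

Two variables with two levels each give four cross-classified compartments, matching the four listed on the slide.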

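Equation for a straight line

$$\hat{Y} = a + bX \qquad \text{(simple regression)}$$

$$\hat{Y} = a + b_1X_1 + b_2X_2 + \cdots + b_nX_n \qquad \text{(multiple regression)}$$

where
• $\hat{Y}$ = predicted score
• $a$ = intercept (origin) of the regression line, i.e. the predicted score when $X = 0$
• $b$ = regression coefficient: the change in the dependent variable for each 1-unit increase in the $X$ variable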
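A short Python sketch of how the two prediction equations turn coefficients into a predicted score. The function names and the coefficient values are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def predict_simple(a: float, b: float, x: float) -> float:
    """Simple regression: predicted score Y-hat = a + b * X."""
    return a + b * x


def predict_multiple(a: float, bs: list[float], xs: list[float]) -> float:
    """Multiple regression: Y-hat = a + b1*X1 + b2*X2 + ... + bn*Xn."""
    if len(bs) != len(xs):
        raise ValueError("need exactly one coefficient per predictor")
    return a + sum(b * x for b, x in zip(bs, xs))


# Hypothetical coefficients, chosen only for illustration.
print(predict_simple(2.0, 0.5, 10.0))                  # 2 + 0.5*10 = 7.0
print(predict_multiple(1.0, [2.0, -0.5], [3.0, 4.0]))  # 1 + 2*3 - 0.5*4 = 5.0
```

Each coefficient multiplies its own predictor, so a negative $b$ lowers the prediction as that $X$ increases.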

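Estimation of the b coefficient

$$b = \frac{\sum XY - \dfrac{(\sum X)(\sum Y)}{N}}{\sum X^2 - \dfrac{(\sum X)^2}{N}}$$

or, equivalently, in deviation form, with $x = X - \bar{X}$ and $y = Y - \bar{Y}$:

$$b = \frac{\sum xy}{\sum x^2}$$

that is, the sum of the cross-products of the X and Y deviations divided by the sum of the squared X deviations.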
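A minimal Python sketch, assuming a small hypothetical data set, that computes the slope $b$ with both the raw-score formula and the deviation formula to show that they agree. The function names are illustrative, not from any library.

```python
def slope_raw_score(xs, ys):
    """b = [sum XY - (sum X)(sum Y)/N] / [sum X^2 - (sum X)^2/N]."""
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_x2 = sum(x * x for x in xs)
    return (sum_xy - sum_x * sum_y / n) / (sum_x2 - sum_x ** 2 / n)


def slope_deviation(xs, ys):
    """b = sum(xy) / sum(x^2), with x and y measured as deviations from their means."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sum_dev_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sum_dev_x2 = sum((x - mean_x) ** 2 for x in xs)
    return sum_dev_xy / sum_dev_x2


# Hypothetical toy data.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
print(slope_raw_score(xs, ys), slope_deviation(xs, ys))  # both give 0.6
```

The raw-score version is convenient for hand calculation from column totals; the deviation version makes the meaning (covariation of X and Y relative to the spread of X) easier to see.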

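Estimation of a

$$a = \bar{Y} - b\bar{X}$$

Predicting by graph: once $a$ and $b$ are known, the regression line can be drawn through the plotted points, and the predicted $Y$ for any value of $X$ read off the line.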
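A small Python sketch, assuming hypothetical toy data and an illustrative helper named `fit_line`, that estimates $b$ from deviation sums, then $a = \bar{Y} - b\bar{X}$, and uses both to predict $Y$ for a new $X$.

```python
def fit_line(xs, ys):
    """Return (a, b) for Y-hat = a + bX: b from deviation sums, then a = mean(Y) - b * mean(X)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    a = mean_y - b * mean_x
    return a, b


xs = [1, 2, 3, 4, 5]  # hypothetical toy data
ys = [2, 4, 5, 4, 5]
a, b = fit_line(xs, ys)
print(round(a, 4), round(b, 4))  # intercept 2.2, slope 0.6
print(round(a + b * 6, 4))       # predicted Y for X = 6: 2.2 + 0.6*6 = 5.8
```

Because $a = \bar{Y} - b\bar{X}$, the fitted line always passes through the point $(\bar{X}, \bar{Y})$.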

Least squares

The goal of the linear regression procedure is to fit a line through the points. Specifically, the procedure computes the line for which the sum of the squared (vertical) deviations of the observed points from the line is as small as possible. This procedure is called least squares. A fundamental principle of the least-squares method is that the variation in the dependent variable can be partitioned, or divided into parts, according to its sources of variation.
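A minimal Python sketch of the least-squares criterion, assuming hypothetical toy data: it fits the closed-form line, computes its sum of squared deviations (SSE), and then nudges the intercept and slope to show that each nudged line has a larger SSE. This is a numerical illustration for a few alternative lines, not a proof of optimality.

```python
def sse(xs, ys, a, b):
    """Sum of squared vertical deviations of the points from the line Y-hat = a + bX."""
    return sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))


def fit_line(xs, ys):
    """Closed-form least-squares line: returns (a, b)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return mean_y - b * mean_x, b


xs = [1, 2, 3, 4, 5]  # hypothetical toy data
ys = [2, 4, 5, 4, 5]
a, b = fit_line(xs, ys)
best = sse(xs, ys, a, b)

# Nudge the intercept and/or slope away from the least-squares values;
# each of these nudged lines has a larger sum of squared deviations.
nudges = [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.1), (0.0, -0.1), (0.1, -0.1)]
others = [sse(xs, ys, a + da, b + db) for da, db in nudges]
print(round(best, 4), [round(s, 4) for s in others])
```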

Least-squares estimation

$$\sum (Y - \bar{Y})^2 = \sum (\hat{Y} - \bar{Y})^2 + \sum (Y - \hat{Y})^2$$

a) Total SS: the sum of squared deviations of the observed values ($Y$) on the dependent variable from the dependent-variable mean.
b) Model SS: the sum of squared deviations of the predicted values ($\hat{Y}$) for the dependent variable from the dependent-variable mean.
c) Error SS: the sum of squared deviations of the observed values on the dependent variable from the predicted values ($\hat{Y}$), that is, the sum of the squared residuals.
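A minimal Python sketch of the partition, assuming hypothetical toy data: it fits the least-squares line, computes the three sums of squares, and lets you check that Total SS equals Model SS plus Error SS. The identity relies on the line being the least-squares fit with an intercept. The ratio Model SS / Total SS is the coefficient of determination, $R^2$.

```python
def sums_of_squares(xs, ys):
    """Return (Total SS, Model SS, Error SS) for the least-squares line of Y on X."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    a = mean_y - b * mean_x
    y_hat = [a + b * x for x in xs]
    total_ss = sum((y - mean_y) ** 2 for y in ys)              # (a) Total SS
    model_ss = sum((yh - mean_y) ** 2 for yh in y_hat)         # (b) Model SS
    error_ss = sum((y - yh) ** 2 for y, yh in zip(ys, y_hat))  # (c) Error SS
    return total_ss, model_ss, error_ss


xs = [1, 2, 3, 4, 5]  # hypothetical toy data
ys = [2, 4, 5, 4, 5]
total_ss, model_ss, error_ss = sums_of_squares(xs, ys)
print(round(total_ss, 4), round(model_ss, 4), round(error_ss, 4))  # 6.0 3.6 2.4
print(round(model_ss / total_ss, 4))  # share of variation explained (R^2): 0.6
```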