Scoring

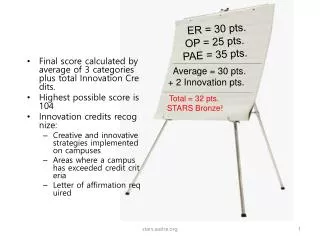



Presentation Transcript

Scoring Categories 1 – 6 (Process Categories)
• Examiners select a score (0–100) to summarize their observed strengths and opportunities for improvement (OFIs)
• Scoring Guidelines are provided for Approach, Deployment, Learning, and Integration
• Scores are assigned for each Item

[Figure: Category/Item question organization, with levels labeled Basic, Multiple, and Overall]

Overall Score for Categories 1 – 6 Items
• An applicant’s score for approach [A], deployment [D], learning [L], and integration [I] depends on their ability to demonstrate the characteristics associated with that score
• Although there are 4 factors to score for each Item, only one overall score is assigned
• The examination team selects the “best fit” score, which is likely to be between the highest and lowest score for the ADLI factors

“Best Fit” Example
Consider two applicants, both scoring 60% for A, D, and I, and 20% for L.

| Applicant | Market Growth | Technology | Competitors |
|---|---|---|---|
| A | 10%/year | Stable | Stable |
| B | 2X/year | Major change | Many new |

Item Score:
• Applicant A: 50% (Learning could lead to incremental results improvements)
• Applicant B: 30% (Learning needed to sustain the organization)
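The range check behind the “best fit” idea can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the best-fit score itself remains an examiner judgment. The code only confirms that a proposed Item score falls between the lowest and highest ADLI factor scores, which the slides describe as likely but not guaranteed.

```python
def best_fit_range(factor_scores):
    """Return (lowest, highest) of the ADLI factor scores for one Item."""
    values = list(factor_scores.values())
    return min(values), max(values)


def is_within_factor_range(item_score, factor_scores):
    """Check whether a proposed Item score lies between the lowest and
    highest factor scores. This is a sanity check, not a scoring rule."""
    low, high = best_fit_range(factor_scores)
    return low <= item_score <= high


# Factor scores from the example: 60% for A, D, and I, and 20% for L.
adli = {"A": 60, "D": 60, "L": 20, "I": 60}

print(best_fit_range(adli))              # (20, 60)
print(is_within_factor_range(50, adli))  # Applicant A's Item score: True
print(is_within_factor_range(30, adli))  # Applicant B's Item score: True
```

Both Item scores fall inside the same range. The difference between 50% and 30% comes from how much the weak Learning factor matters in each applicant's context, which a range check cannot capture.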

6. Independent review: LeTCI – Results Items
Objective: Be able to evaluate a Results Item for independent review

Using a Table of Expected Results to Identify Category 7 OFIs
Purpose: Identify results that the applicant hasn’t provided.
Why? Applicants like to show results that are favorable, but may not always show results that are important.

Process for using a Table of Expected Results
• As you read the application, pay attention to processes that the applicant cites as important, measures that are discussed, segments that are used, etc.
• As you find examples of these, enter them in columns 1–3 of the table of expected results.
• When you review Category 7, check it for the expected results and complete column 4.
• Based on the comments in column 4, draft one or more OFIs citing the missing expected results.
Note: These are results for which the applicant has set an expectation in the Organizational Profile and Categories 1–6. They are NOT simply results that the examiner would like to see.
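The table-driven process above could be tracked with a small data structure, sketched here in Python. The column meanings are assumptions: the slides only mention columns 1–3 and column 4, so this sketch treats them as process, measure, segment, and the examiner's Category 7 comment. The class, field names, and sample rows are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExpectedResult:
    process: str              # column 1 (assumed): process cited as important
    measure: str              # column 2 (assumed): measure discussed
    segment: str              # column 3 (assumed): segment used
    category7_note: str = ""  # column 4: comment after reviewing Category 7
    found: bool = False       # whether the result appears in Category 7


def missing_results(table):
    """Rows whose expected result was not found in Category 7."""
    return [row for row in table if not row.found]


def draft_ofi(table):
    """Draft a single OFI sentence that cites the missing expected results."""
    missing = missing_results(table)
    if not missing:
        return ""
    items = "; ".join(
        f"{row.measure} ({row.process}, {row.segment})" for row in missing
    )
    return f"Results are not provided for: {items}."


# Hypothetical rows, filled in while reading the application.
table = [
    ExpectedResult("Order fulfillment", "On-time delivery", "by region",
                   category7_note="Reported with segments", found=True),
    ExpectedResult("Complaint management", "Complaint resolution time",
                   "by customer group", category7_note="Not reported"),
]

print(draft_ofi(table))
```

Because every row originates from something the applicant wrote in the Organizational Profile or Categories 1–6, any OFI drafted this way stays tied to the applicant's own stated expectations.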

Evaluation Factors for Category 7 (Results)
• Le = Performance Levels: Numerical information that places an organization’s results on a meaningful measurement scale. Performance levels permit evaluation relative to past performance, projections, goals, and appropriate comparisons.
• T = Trends: Numerical information indicating the direction, rate, and breadth of performance improvements. A minimum of 3 data points is needed to begin to ascertain a trend. More data points are needed to define a statistically valid trend.
• C = Comparisons: Establishing the value of results by their relationship to similar or equivalent measures. Comparisons can be made to results of competitors, industry averages, or best-in-class organizations.
• I = Integration: Connection to important customer, product and service, market, process, and action plan performance measurements identified in the Organizational Profile and in Process Items.
• G = Gaps: Absence of results addressing specific areas of Category 7 Items, including the absence of results on key measures discussed in Categories 1–6.
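The three-data-point minimum for trends can be illustrated with a short Python sketch. The function name and labels are hypothetical, and the check is a simple point-to-point direction test, not a test of statistical validity, which the slide notes requires more data points.

```python
def trend_direction(values, higher_is_better=True):
    """Classify a series of results as improving, degrading, or mixed.

    Returns "insufficient data" when fewer than 3 points are given,
    mirroring the minimum stated for beginning to ascertain a trend.
    """
    if len(values) < 3:
        return "insufficient data"
    # Change between each consecutive pair of data points.
    diffs = [later - earlier for earlier, later in zip(values, values[1:])]
    if not higher_is_better:
        # For measures where lower is better, a decrease is an improvement.
        diffs = [-d for d in diffs]
    if all(d > 0 for d in diffs):
        return "improving"
    if all(d < 0 for d in diffs):
        return "degrading"
    return "mixed or flat"


print(trend_direction([88, 90, 93]))                         # improving
print(trend_direction([5.1, 4.2], higher_is_better=False))   # insufficient data
print(trend_direction([12, 9, 7], higher_is_better=False))   # improving
print(trend_direction([70, 75, 72]))                         # mixed or flat
```

The `higher_is_better` flag stands in for the “good” direction that a results chart normally indicates, since a falling line is favorable for measures such as defect rates or cycle time.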

Results Evaluation Factors
[Figure: sample results chart annotated with the labels Le, Denotes “Good” Trend direction, Trend, and Comparison]

Results strengths and OFIs
• Strengths are identified if the following are observed:
  • Performance levels [Le] are equivalent to or better than comparatives and/or benchmarks
  • Trends [T] show consistent improvement, and
  • Results are linked [Li] to key requirements
• Opportunities for improvement are identified if:
  • Performance levels [Le] are not as good as comparatives
  • Trends [T] show degrading performance
  • Comparisons are not shown
  • Results are not linked [Li] to key requirements
  • Results are not provided [G] for key processes and/or action items
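As a rough illustration, the conditions listed above can be written as a small Python rule set for a single reported result. This is a simplification under stated assumptions: the function name, parameters, and message wording are hypothetical, each condition is treated independently, and gaps [G] are left out because they concern results that were never reported. Examiners still weigh these observations together when writing comments.

```python
def evaluate_result(level, comparison, trend, linked, higher_is_better=True):
    """Sort observations about one result into strengths and OFIs.

    level: reported performance level
    comparison: comparative or benchmark value, or None if not shown
    trend: "improving", "degrading", or anything else (no comment made)
    linked: whether the result is linked to a key requirement
    """
    strengths, ofis = [], []

    if comparison is None:
        ofis.append("C: comparison not shown")
    else:
        if higher_is_better:
            as_good = level >= comparison
        else:
            as_good = level <= comparison
        if as_good:
            strengths.append("Le: level equivalent to or better than comparison")
        else:
            ofis.append("Le: level not as good as comparison")

    if trend == "improving":
        strengths.append("T: consistent improvement")
    elif trend == "degrading":
        ofis.append("T: degrading performance")

    if linked:
        strengths.append("Li: linked to key requirements")
    else:
        ofis.append("Li: not linked to key requirements")

    return strengths, ofis


# Hypothetical results: one favorable, one unfavorable.
print(evaluate_result(92, 90, "improving", True))
print(evaluate_result(80, None, "degrading", False))
```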

Scoring a Results Item
• Scoring a Results Item is similar to scoring a Process Item
• Scoring Guidelines are provided for Le, T, C, and I
• A single “best fit” score is selected for each Results Item

Results evaluation: key concepts
• Integration
  • Results align with key factors, e.g.,
    • Strategic challenges
    • Workforce requirements
    • Vision, mission, values
  • Results presented for
    • Key processes
    • Key products, services
    • Strategic accomplishments
• What examples can you think of?
  • Strong integration?
  • Not-so-strong integration?

Results evaluation: key concepts
• Strong integration: results presented for
  • Key areas addressing strategic challenges
  • Key competitive advantages
  • Key customer requirements
• Not-so-strong integration:
  • Results missing for the above
  • Results presented that the Examiner can’t match to Process Items or the Organizational Profile

“Key” results
• Refers to elements or factors most critical to achieving the intended outcome
• In terms of results, look for
  • Those responsive to the Criteria requirements
  • Those most important to the organization’s success
  • Those results that are essential elements for the organization to pursue or monitor in order to achieve its desired outcome

THANK YOU!! Your support and participation as Examiners help us all by helping WSQA fulfill its mission!