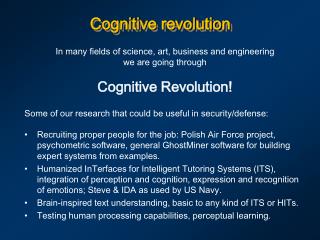

Cognitive revolution


Presentation Transcript

Cognitive revolution In many fields of science, art, business and engineering we are going through a Cognitive Revolution! Some of our research that could be useful in security/defense: • Recruiting the right people for the job: Polish Air Force project, psychometric software, general GhostMiner software for building expert systems from examples. • Humanized InTerfaces (HITs) for Intelligent Tutoring Systems (ITS), integration of perception and cognition, expression and recognition of emotions; Steve & IDA as used by the US Navy. • Brain-inspired text understanding, basic to any kind of ITS or HIT. • Testing human processing capabilities, perceptual learning.

Human-Computer Interaction Human factors: how to build a Human-Computer Interface that would be easy to use? How to test for information overload? EPIC (Executive Process-Interactive Control), a human multitask simulator by D.E. Kieras (University of Michigan, 1997). Although quite useful for explaining skill acquisition, it does not capture human executive processes correctly.

Cognitive architecture: SOAR Symbolic, rule-based architectures based on a theory of cognition. A. Newell, Unified Theories of Cognition (Harvard University Press, 1990). SOAR: architecture based on a universal theory of cognition, more than 25 years of development, community of over 100 researchers. Behavior = Architecture x Content • NL-Soar, a theory of human natural language (NL) comprehension and generation, explained many results from psycholinguistics. • SCA, a theory of symbolic concept learning. • NOVA, a theory of visual attention matching human data. • NTD-Soar, a computational theory of the perceptual, cognitive, and motor actions performed by the NASA Test Director (NTD). • Instructo-Soar, a computational theory of how people learn through interactive instruction … and many others.

Steve = Soar Training Expert for Virtual Environments. STEVE, an autonomous educational agent based on SOAR, helps students learn how to operate and maintain complex equipment. Virtual 3D environment – graphics design relatively easy. Hard: understanding the student’s mind; use of natural language, understanding questions; monitoring the performance of students in virtual space. Used in the Intelligent Forces project. A new type of intelligent tutor, but not easy to create … SOAR-EPIC combinations

Brain-inspired architectures G. Edelman (Neurosciences Institute) & collaborators created a series of Darwin automata, brain-based devices, “physical devices whose behavior is controlled by a simulated nervous system”. • The device must engage in a behavioral task. • The device’s behavior must be controlled by a simulated nervous system having a design that reflects the brain’s architecture and dynamics. • The device’s behavior is modified by a reward or value system that signals the salience of environmental cues to its nervous system. • The device must be situated in the real world. Darwin VII consists of: a mobile base equipped with a CCD camera and IR sensor for vision, microphones for hearing, conductivity sensors for taste, and effectors for movement of its base, of its head, and of a gripping manipulator having one degree of freedom; 53K mean firing + phase neurons, 1.7 M synapses, 28 brain areas.

Humanized InTerfaces (HIT) C2I = Center for Computational Intelligence, SCE NTU Flagship project, principal investigators: Wlodzislaw Duch & Michel Pasquier + 15 other staff members so far …

HIT: definition and goals HIT is a computer/phone interface that can interact in a natural way with the user and accept natural input in the form of: • speech and sound commands; text commands; • visual input, reading text (OCR), recognizing gestures, lip movement. HIT should have a robust understanding of user intentions for selected applications. HIT should respond and behave in a natural way. It may take the form of a simulated talking head that the user can relate to, an android head, or a robotic pet. Major goals of the HIT project: • develop a modular, extensible software/hardware platform for HITs; • create interactive word games, information retrieval and other applications on PCs; • extend HIT functionality, adding new interactivity & behavior; • move it to portable devices (PDAs/phones) & broadband services.

HIT: motivation • HIT software/hardware/services may find their way to a billion portable devices/phones in a few years’ time. The value of telephone ringtones alone in 2003 was S$5 bln. New telephone functions include: camera, speech recognition, on-line translation, interactive games and educational software. • Complexity of devices: only a small fraction of the functions of electronic devices, such as PDAs, phones, cameras, or new TVs, is used; new humanized interfaces that help users are needed. • Many applications in education, entertainment, services; talking heads connected to knowledge bases are already used in E-commerce. • Creating HITs is a great computer engineering challenge; like building a rocket, it requires integration of many technologies and research themes, and moves research to a higher level. 17 SCE staff members expressed their interest and formulated HIT subprojects. • A test-bed is urgently needed to experiment with such technologies.

HIT: state of the art HIT may draw from results of many large frontier programs, such as: Microsoft Research, offering free speech recognition/synthesis tools and publishing work on Attentional User Interface (AUI) project. DARPA’s Cognitive Information Processing Technology (call 6/2003). European Union’s Cognition Unit (started 10/2004) programs that have a goal to create artificial cognitive systems with human-like qualities. Intel has projects in natural interfaces, providing free libraries for speech, vision, machine learning methods and anticipatory computing. Talking heads already answer questions on Web pages for car, telecom, banks, pharmaceutical & other companies. Animated personal assistants work as memory enhancements and information sources, news, weather, show times, reviews, sports access... Services answering questions in natural languages are coming: AskJeeves and 82ask give answers (human) to any question! But ... HITs are not yet robust, are still very primitive in all respects, with limited interaction with the user, poor learning abilities, no anticipation ...

HIT related areas (diagram labels): HIT projects; learning; affective computing; text-to-speech (T-T-S) synthesis; brain models; behavioral models; speech recognition; cognitive architectures; talking heads; AI; robotics; cognitive science; graphics; lingu-bots; A-Minds; VR avatars; knowledge modeling; info-retrieval; working memory; episodic memory; semantic memory.

HIT: proposed approach (diagram labels: Web/text/databases interface; text to speech; NLP functions; natural input modules; graphical talking head; behavior control; cognitive functions; control of devices; affective functions; specialized agents). Proposed platform: core functions (limited speech, graphics, and natural language processing (NLP)) + extended functions (perceptual, cognitive, affective, specialized agents, behavioral). The challenge and opportunity is to build a modular platform for HIT on a PC, with a 3D graphical head, robust speech recognition, memory, reasoning + cognitive abilities, and move it to new phones/broadband services. Uniqueness: nothing like that exists; it requires a large-scale effort, integration and extension of many existing projects, collaboration with the telecom and software industry, and offers great student training.

HIT sub-areas Core functions (5 experts) include HIT platform design/integration, robust limited-vocabulary speech recognition, basic vision and learning; object and face tracking, synthesis of auditory and visual sensory signals, basic responses, control and talking head graphics. Extended functions (13 experts) include synchronization of lip motion, analyzing prosody in speech, control of facial expressions, emotion analysis from face video, cognitive and affective functions, natural language analysis and context identification, cognitive learning, reasoning and theories of mind (user model), learning rules for behavior, integration with robot control, visual understanding, and hardware architecture design for selected applications. Applications (5 experts) will initially include creation of specialized agents, implementing trivia/quiz/educational and entertainment games, word games, the 20 questions game, medical information presentation, office/household automation, multi-lingual message (SMS, chat) understanding, an intelligent tutor to promote learning using appropriate gestures & more.

HIT benchmarks First year: we should be able to demonstrate basic HIT platform with: • 3D graphic head with control over rich facial movements coupled with • visual object and face tracking coordinated with basic responses; • robust limited vocabulary speech recognition for playing word games; • speech synthesis and copycatting; • following simple spoken commands; • interface with WWW search agents finding and presenting information; • full specifications for adding and interacting with HIT functions. Second year: initial applications and extensions of the HIT platform, work on the natural language processing and cognitive modeling: • demonstrate HIT playing the 20 questions game, selecting most informative questions that help to define the subject uniquely; • playing educational word games implementing trivia and quizzes; • medical information presentation system answering simple questions; • use of HIT in the NTU virtual campus; • organize an internal competition for new applications of HIT; • preliminary designs for moving the HIT platform to portable devices.

HIT extensions & management Third year and later: Improve the existing platform and applications, move them to portable devices, add new functions needed for specific applications. For example, auditory and visual scene analysis, fusion of sensory signals, testing of perceptual modules inspired by neuroscience, applications of HIT for programming household devices, tutor programs to teach problem solving for some local courses. A competition for adding such new functions and applications to the HIT platform will be held at NTU; this requires creation of test data, scenarios of use, providing a specification for the interface, selection/sponsoring of the best proposed approach, and integration in the HIT. Parallel project submitted to A*STAR: Developmental Robot-Embedded Artificial Mind (DREAM), aimed at controlling a real android head, focused on comparison of general cognitive architectures; includes collaboration with 3 leading groups in cognitive modeling (Berkeley, Michigan and University of Memphis), plus KAIST (Korea) on natural perception.

Word games Word games were popular before computer games took over. Word games are essential to the development of analytical thinking skills. Until recently computer technology was not sufficient to play such games. The 20 questions game may be the next great challenge for AI, much easier for computers than the Turing test; a World Championship with human and software players? Finding the most informative questions requires understanding of the world. Performance of various models of semantic memory and episodic memory may be tested in this game in a realistic, difficult application. Asking questions to understand precisely what the user has in mind is critical for search engines and many other applications. Creating large-scale semantic memory is a great challenge: ontologies, dictionaries (Wordnet), encyclopedias, collaborative projects (Concept Net) …
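The idea of "most informative question" can be illustrated with a small sketch. It is not the HIT implementation: it assumes a toy semantic memory (the hypothetical `CONCEPTS` table), truthful yes/no answers, and equally likely candidates, and it scores each question by the entropy of its yes/no split, so the best question is the one that divides the remaining candidates most evenly.

```python
import math

# Hypothetical toy semantic memory: concept -> properties answered "yes".
CONCEPTS = {
    "salamander": {"animal", "amphibian"},
    "frog": {"animal", "amphibian"},
    "tiger": {"animal", "mammal"},
    "oak": {"tree"},
    "rose": {"flower"},
    "tulip": {"flower"},
}


def entropy(p):
    """Binary entropy (in bits) of a yes/no answer with P(yes) = p."""
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def best_question(candidates, concepts):
    """Return the property whose yes/no split of the candidates is most even.

    Assumes a non-empty candidate list and a uniform prior over candidates.
    """
    props = sorted(set().union(*(concepts[c] for c in candidates)))

    def score(prop):
        yes = sum(prop in concepts[c] for c in candidates)
        return entropy(yes / len(candidates))

    return max(props, key=score)


def filter_candidates(candidates, concepts, prop, answer):
    """Keep only the candidates consistent with the user's yes/no answer."""
    return [c for c in candidates if (prop in concepts[c]) == answer]


candidates = list(CONCEPTS)
question = best_question(candidates, CONCEPTS)            # "animal" splits 3 vs 3
remaining = filter_candidates(candidates, CONCEPTS, question, True)
```

With real semantic memories the hard part is the one the slide names: the property table itself, which has to come from ontologies, dictionaries and similar resources.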

(Diagram labels: query; semantic memory; applications, e.g. 20 questions game; humanized interface; store; part-of-speech tagger & phrase extractor; verification; on-line dictionaries; parser; manual.)

Puzzle generator Semantic memory may invent a large number of word puzzles that the avatar presents. The application selects a random concept from all concepts in the memory and searches for a minimal set of features necessary to uniquely define it. If many subsets are sufficient for a unique definition, one of them is selected randomly. It is an amphibian, it is orange and has black spots. What do you call this animal? A salamander. It has charm, it has spin, and it has charge. What is it? If you do not know, ask Google! The Quark page comes at the top …
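The selection procedure described on this slide can be sketched as follows. This is only an illustration under stated assumptions: the `MEMORY` table, the function names, and the brute-force search over feature subsets are hypothetical and are not taken from the project; a real semantic memory would need something more efficient than enumerating subsets.

```python
import random
from itertools import combinations

# Hypothetical toy semantic memory: concept -> set of features.
MEMORY = {
    "salamander": {"amphibian", "orange", "black spots"},
    "frog": {"amphibian", "green", "jumps"},
    "tiger": {"mammal", "orange", "black stripes"},
    "ladybug": {"insect", "red", "black spots"},
}


def is_unique(memory, concept, features):
    """True if no other concept in memory has all of the given features."""
    return all(
        not features <= other
        for name, other in memory.items()
        if name != concept
    )


def minimal_feature_sets(memory, concept):
    """All smallest feature subsets that single out the concept (brute force)."""
    feats = sorted(memory[concept])
    for size in range(1, len(feats) + 1):
        hits = [
            set(combo)
            for combo in combinations(feats, size)
            if is_unique(memory, concept, set(combo))
        ]
        if hits:
            return hits
    return []  # the concept cannot be distinguished from some other concept


def make_puzzle(memory, rng=random):
    """Pick a random concept and one of its minimal defining feature sets."""
    concept = rng.choice(sorted(memory))
    options = minimal_feature_sets(memory, concept)
    if not options:
        return None
    return concept, rng.choice(options)
```

In this toy memory no single feature identifies the salamander, but any two of its three features do, so the generator picks one of those pairs at random, in the spirit of the slide's example.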

Developmental Robot-Embedded Artificial Mind (DREAM) Ng Geok See, Wlodzislaw Duch, Michel Pasquier, Quek Hiok Chai, Abdul Wahab, Shi Daming, John Heng. Centre for Computational Intelligence, Nanyang Technological University

Objectives and Motivations • To develop a robotic head endowed with a complex cognitive processor that recognizes and interacts with humans using natural means of communication. • To facilitate integration of research areas in perception (signal processing, computer vision), real-time control, natural language processing and cognitive modeling. • To create new applications for educational games, office assistants, chatterbot interfaces, etc. • To provide training of graduate students in a new field of high potential importance for the Singapore economy. • This project is at the heart of cognitive robotics, a very important field not yet pursued in Singapore.

Related work Android receptionists: Inkha at King’s College London; the Carnegie Mellon University receptionist Valerie. Although the functionality of these receptionists is very limited, these projects have been very popular, including a note in “Nature”.

DREAM architecture (diagram labels: Web/text/databases interface; text to speech; NLP functions; natural input modules; talking head; behavior control; cognitive functions; control of devices; affective functions; specialized agents). DREAM concentrates on the cognitive functions + real-time control; we plan to adopt software from the HIT project for perception, NLP, and other functions.

Research Partners Main goal: integration of perception with cognitive architectures, tests of 4 architectures and creation of new architecture for android head control. • Brain Science Research Center of the Korea Advanced Institute of Science and Technology headed by Soo-Young Lee. Need his expertise for perception and brain-inspired computational models. • SOAR by John Laird, University of Michigan. His experience will be helpful in interfacing SOAR with natural perception and motor control of our android. • The “Conscious” Software Research Group headed by Stan Franklin, Institute of Intelligent Systems, University of Memphis; we will use his Intelligent Distribution Agent (IDA) for solving problems that require complex reasoning. • Shruti by Lokendra Shastri, International Computer Science Institute, Berkeley; we will use Shruti for understanding natural language commands and for formation of episodic memories as a result of interaction with users.

Some applications • DREAM will serve as an application platform for human-computer interactions, for example in projects where evolution of language and development of mind through natural interactions with people is investigated (at present text-based interfaces are used). • Interface to word games, such as trivia games, educational quizzes, games requiring reasoning, and the 20 questions game. • Natural interface to information resources, such as structured internet databases (MIT Start system), encyclopedias, or transportation. • Receptionist, science museums, office mates ... • An interface to control household devices. • Taking care of old people, reminding them of their schedule, talking to them to enhance their memories and collect their life stories (in collaboration with an Alzheimer clinic in Bad Aibling, Bayern, Germany). • Creating an alter-ego by mimicking the behavior of people, understanding their stories (forming episodic memories) and asking additional questions to gather more information. • Creating realistic instincts for robotic pets.

Emovere: A Neuro-Cognitive Computational Framework for Research on Emotions David Cho, Quek Hiok Chai, W. Duch, Ng Geok See, A. Wahab, John Taylor (King’s College) and Looi Chee Kit (NIE/LSL)

Motivations • Humans can express emotions easily, but from a computational point of view emotions are not easy to understand. • Emotions are an important factor in intelligent behavior, including problem solving; they can help to focus attention on correct reasoning. • Understanding how to capture real emotions in an artificial system is a challenging problem. • Research on computational approaches to emotions is a state-of-the-art basic research topic. • HIT, DREAM and intelligent tutor projects will benefit from affective computing.

Objectives • Neurocognitive computational framework for emotion • Encoding of Emotional Tags • Encoding of Episodic Memory using Emotional Tags and Emotional Expressions. • Information flow between memory modules, computational model similar to the brain info flow • Fusion of Multiple Modal Inputs • Facial, gesture, body posture, prosody and lexical content in speech. • Application • Implementation and validation of the model in an Intelligent Tutoring System.

Emotions using visual cues: face, gesture, and body posture. • Emotional face processing • Feedforward sweep through primary visual cortices ending up in associative cortices. • Projections at various levels of the visual (primary) and the associative cortices to the amygdala. • Activation of the prefrontal cortex initiates a re-prioritization of the salience of this face within the prefrontal cortex area. • The amygdala generates or simulates a motor response, effectively providing a simulation of the other person’s emotional state. • Emotional gesture processing is similar to face processing. • Emotional body posture understanding: • Body movements accompany specific emotions. • Coding schemata for the analysis of body movements and postures will be investigated.

Emotions using auditory cues: linguistic and prosody • Speech carries a significant amount of information about the emotional state of the speaker in the form of its prosody or paralinguistic content. • Temporal recurrent spiking networks have already been used to identify prosodic attitudes; even using only fundamental frequency, 6 attitudes were distinguished with 82% accuracy. • Primary and high-level auditory cortices are involved in the extraction and perceptual processing of various prosodic cues. • The amygdala and the pre-frontal cortex appear to be responsible for translating these prosodic cues into emotional information regarding the speech source.

Affect-based Cognitive Skill Instruction in an Intelligent Tutoring System • Intelligent Tutoring Systems (ITS) • Integrating characteristics of human tutoring into ITS performance. • Providing the student with a more personalized and friendly environment for learning according to his/her needs and progress. • A platform to extend the emotional modeling to real-life experiments with affect-driven instruction. • Will provide a reference for the use of affect in intelligent tutoring systems.

Human information retrieval 3 levels of text processing: recognition, semantic, episodic. First: local recognition of terms; we bring a lot of background knowledge to reading the text and ignore misspellings and small mistakes. Second: larger units require semantic interpretation: discovering and understanding the meaning of concepts composed of several terms, defining the word sense of ambiguous words, expanding acronyms, etc. Third: episodic level of processing, or what is the whole record or text about? Knowing the category of the text/paragraph helps in unique interpretation at the recognition and semantic levels. Used now for medical XML annotation; a future basis for a semantic net. A large-scale effort to create the background knowledge is needed.
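How the category of a text can resolve an ambiguous term may be shown with a minimal sketch of acronym expansion. Everything here is an assumption made for illustration: the `ACRONYMS` table, the category names, and the function are hypothetical and do not describe the medical annotation system mentioned on the slide.

```python
# Hypothetical background knowledge: acronym -> {text category: expansion}.
ACRONYMS = {
    "MS": {"neurology": "multiple sclerosis", "cardiology": "mitral stenosis"},
    "RA": {"rheumatology": "rheumatoid arthritis", "cardiology": "right atrium"},
}


def expand_acronyms(text, category):
    """Expand known acronyms using the category of the whole text.

    Tokens are split on whitespace; surrounding punctuation is preserved.
    Acronyms with no expansion for this category are left unchanged.
    """
    out = []
    for token in text.split():
        core = token.strip(".,;:()")
        expansion = ACRONYMS.get(core, {}).get(category)
        out.append(token.replace(core, expansion) if expansion else token)
    return " ".join(out)


print(expand_acronyms("Patient with MS.", "neurology"))
print(expand_acronyms("Patient with MS.", "cardiology"))
```

The same token receives a different expansion depending on the episodic-level category, which is the dependency between levels that the slide points to.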

IDoCare: Infant Development and Care for development of perfect babies! Problem: about 5-10% of all children have a developmental disability that causes problems in their speech and language development. Identification of congenital hearing loss in the USA is at 2½ years of age! Solution: permanent monitoring of babies in the crib, stimulation, recording and analysis of their responses, providing guidelines for their perceptual and cognitive development, calling for expert help if needed. Key sensors: suction response (basic method in developmental psychology), motion detectors, auditory and visual monitoring. Potential: the market for baby monitors (Sony, BT...) is billions of $; so far they only let parents hear or see the baby and play ambient music. W. Duch, D.L. Maskell, M.B. Pasquier, B. Schmidt, A. Wahab, School of Computer Engineering, Nanyang Technological University

Perceptual learning for adults Games that sharpen the mind & senses, not only sensorimotor coordination. Sensory: hear, see, smell and also remember and think better! Intensive practice with a limited set of stimuli that are increasingly difficult to distinguish. Results: development of perfect musical pitch, sound localization, sound recognition, accent recognition … Requires: understanding brain mechanisms to assure generalization. Cognitive: speed of reaction (common) and working memory span. Perceptual learning is the specific and relatively permanent modification of perception and behavior following sensory experience. The subconscious learning process involves structural and/or functional changes in primary sensory cortices.

Other projects Brain as Complex System (BRACS), global brain simulation (EU project just submitted, coordinated by J.G. Taylor, KCL, UK). Goal: autonomous robots, understanding how higher-level cognitive functions arise from neural interactions in a simplified brain architecture. Brain-Inspired Model of Skill Learning: From Conscious Cognition to Subconscious Actions (submitted to ARC). Goal: using brain information flow simulations to understand the dynamics of skill learning, on the example of car driving. Understanding creativity and implementing it in artificial systems. Modeling the brain processes leading to the creation of new, interesting words; understanding the basis of creativity using psychological experiments, brain imaging and computer simulations. Ex: find a good name for a company, organization, or a product.
