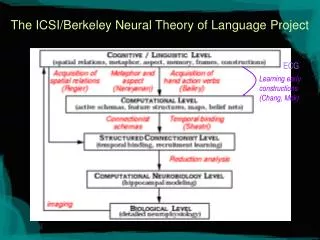

The ICSI/Berkeley Neural Theory of Language Project

Learning early constructions (Chang, Mok). The ICSI/Berkeley Neural Theory of Language Project. ECG. Moving from Spatial Relations to Verbs. Open class vs. closed class. How do we represent verbs (say, of hand motion)? Can we build models of verbs based on motor control primitives?

Presentation Transcript

Learning early constructions (Chang, Mok). The ICSI/Berkeley Neural Theory of Language Project. ECG.

Moving from Spatial Relations to Verbs
• Open class vs. closed class
• How do we represent verbs (say, of hand motion)?
• Can we build models of verbs based on motor control primitives?
• If so, how can models overcome central limitations of Regier’s system?
  • Inference
  • Abstract uses

Coordination
• PATTERN GENERATORS, separate neural networks that control each limb, can interact in different ways to produce various gaits.
• In ambling (top) the animal must move the fore and hind leg of one flank in parallel.
• Trotting (middle) requires movement of diagonal limbs (front right and back left, or front left and back right) in unison.
• Galloping (bottom) involves the forelegs, and then the hind legs, acting together.
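The limb groupings described for the three gaits can be written down as a small phase table. This is only an illustrative sketch: the limb names, the `GAITS` table, the phase values (0.0 and 0.5 of a stride cycle), and the `limbs_in_unison` helper are assumptions made for the example, not part of the original slides or of any pattern-generator model.

```python
# Hypothetical phase table: the fraction of the stride cycle at which each
# limb starts its step. The values are illustrative and chosen only to match
# the slide's description of which limbs move together.
GAITS = {
    "amble": {"front_left": 0.0, "hind_left": 0.0,
              "front_right": 0.5, "hind_right": 0.5},
    "trot": {"front_left": 0.0, "hind_right": 0.0,
             "front_right": 0.5, "hind_left": 0.5},
    "gallop": {"front_left": 0.0, "front_right": 0.0,
               "hind_left": 0.5, "hind_right": 0.5},
}


def limbs_in_unison(gait):
    """Group limbs that share a phase, ordered by phase within the cycle."""
    groups = {}
    for limb, phase in GAITS[gait].items():
        groups.setdefault(phase, []).append(limb)
    return [sorted(limbs) for _, limbs in sorted(groups.items())]


if __name__ == "__main__":
    for gait in GAITS:
        print(gait, limbs_in_unison(gait))
```

Under these assumptions, the same four limb controllers yield different gaits purely by changing their relative phases, which is the point the slide makes about interacting pattern generators.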

Multiple, chronically implanted, intracranial microelectrode arrays would be used to sample the activity of large populations of single cortical neurons simultaneously. The combined activity of these neural ensembles would then be transformed by a mathematical algorithm into continuous three-dimensional arm-trajectory signals that would be used to control the movements of a robotic prosthetic arm. A closed control loop would be established by providing the subject with both visual and tactile feedback signals generated by movement of the robotic arm.

A New Picture Rizzolatti et al. 1998

The fronto-parietal networks Rizzolatti et al. 1998

F5 Mirror Neurons Gallese and Goldman, TICS 1998

Category Loosening in Mirror Neurons (~60%). Observed: A, precision grip; B, whole-hand prehension. Action: C, precision grip; D, whole-hand prehension. (Gallese et al., Brain 1996)

Panels: A (full vision), B (hidden), C (mimicking), D (hidden mimicking). Umiltà et al., Neuron 2001

F5 Audio-Visual Mirror Neurons Kohler et al. Science (2002)

Summary of Fronto-Parietal Circuits
Motor-Premotor/Parietal Circuits
• PMv (F5ab) – AIP circuit: “grasp” neurons fire in relation to the hand prehension movements necessary to grasp an object.
• F4 (PMC, behind the arcuate) – VIP circuit: transforms peri-personal space coordinates so that movements can be directed toward objects.
• PMv (F5c) – PF circuit: F5c contains different mirror circuits for grasping, placing, or manipulating an object.
Together, these suggest a cognitive representation of the grasp that is active in action imitation and action recognition.

Evidence in Humans for Mirror, General Purpose, and Action-Location Neurons
• Mirror: Fadiga et al. 1995; Grafton et al. 1996; Rizzolatti et al. 1996; Cochin et al. 1998; Decety et al. 1997; Decety and Grèzes 1999; Hari et al. 1999; Iacoboni et al. 1999; Buccino et al. 2001.
• General Purpose: Perani et al. 1995; Martin et al. 1996; Grafton et al. 1996; Chao and Martin 2000.
• Action-Location: Bremmer et al. 2001.

FARS (Fagg-Arbib-Rizzolatti-Sakata) Model
[Figure labels: AIP; Dorsal Stream: Affordances (“Ways to grab this ‘thing’”); Task Constraints (F6); Working Memory (46?); Instruction Stimuli (F2); Ventral Stream: Recognition (“It’s a mug”); IT; PFC]
AIP extracts the set of affordances for an attended object. These affordances highlight the features of the object relevant to physical interaction with it.
Source: Itti, CS564 - Brain Theory and Artificial Intelligence.

MULTI-MODAL INTEGRATION The premotor and parietal areas, rather than having separate and independent functions, are neurally integrated not only to control action, but also to serve the function of constructing an integrated representation of: Actions, together with objects acted on, and locations toward which actions are directed. In these circuits sensory inputs are transformed in order to accomplish not only motor but also cognitive tasks, such as space perception and action understanding.

Modeling Motor Schemas
• Relevant requirements (Stromberg, Latash, Kandel, Arbib, Jeannerod, Rizzolatti)
  • Should model coordinated, distributed, parameterized control programs required for motor action and perception.
  • Should be an active structure.
  • Should be able to model concurrent actions and interrupts.
  • Should model hierarchical control (from higher-level motor centers down to muscle extensors/flexors).
• Computational model called x-schemas (http://www.icsi.berkeley.edu/NTL)

An Active Model of Events
• At the computational level, actions and events are coded in active representations called x-schemas, which are extensions of stochastic Petri nets.
• x-schemas are fine-grained action and event representations that can be used for monitoring and control as well as for inference.

Model Review: Stochastic Petri Nets. Basic Mechanism.
[Figure: Petri net showing a resource arc, a precondition arc, and an inhibition arc, with numeric labels 3, 2, 1 and [1], [1]]
Firing function: conjunctive, logistic, exponential family.

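Model Review: Firing Semantics
[Figure: the net before firing, with numeric labels 3, 2, 1]

Model Review: Result of Firing
[Figure: the net after firing, with numeric labels 1, 2, 1, 1, 1]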
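The three arc types named in the Model Review slides can be illustrated with a small token-passing sketch. It assumes the usual Petri net reading, which the slides do not spell out: a resource arc consumes tokens, a precondition arc only tests for them, and an inhibition arc blocks firing when its place holds enough tokens. Only the conjunctive firing rule is shown; the logistic and exponential-family variants, and all stochastic timing, are left out. The names `Transition`, `enabled`, `fire`, and the place names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Transition:
    """A transition with the three input arc types (names are hypothetical)."""
    name: str
    resource: dict = field(default_factory=dict)      # place -> tokens consumed
    precondition: dict = field(default_factory=dict)  # place -> tokens tested, not consumed
    inhibition: dict = field(default_factory=dict)    # place -> token count that blocks firing
    outputs: dict = field(default_factory=dict)       # place -> tokens produced


def enabled(marking, t):
    """Conjunctive rule: every input condition must hold and no inhibitor is active."""
    has_resources = all(marking.get(p, 0) >= w for p, w in t.resource.items())
    has_preconditions = all(marking.get(p, 0) >= w for p, w in t.precondition.items())
    inhibited = any(marking.get(p, 0) >= w for p, w in t.inhibition.items())
    return has_resources and has_preconditions and not inhibited


def fire(marking, t):
    """Return the new marking after firing t; the input marking is left unchanged."""
    if not enabled(marking, t):
        raise ValueError(f"transition {t.name!r} is not enabled")
    new_marking = dict(marking)
    for place, weight in t.resource.items():
        new_marking[place] = new_marking.get(place, 0) - weight  # consume resources
    for place, weight in t.outputs.items():
        new_marking[place] = new_marking.get(place, 0) + weight  # deposit results
    return new_marking


if __name__ == "__main__":
    step = Transition(
        name="step",
        resource={"energy": 2},
        precondition={"ready": 1},
        inhibition={"blocked": 1},
        outputs={"done": 1},
    )
    before = {"energy": 3, "ready": 1, "blocked": 0}
    print(fire(before, step))
```

In this sketch the `ready` token survives firing while two `energy` tokens are used up, and placing a token in `blocked` would disable the transition.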

Active representations
[Figure labels: walker at goal; energy; walker=Harry; goal=home]
• Many inferences about actions derive from what we know about executing them
• Representation based on stochastic Petri nets captures dynamic, parameterized nature of actions
• Generative model: action, recognition, planning, language
• Walking:
  • bound to a specific walker with a direction or goal
  • consumes resources (e.g., energy)
  • may have termination condition (e.g., walker at goal)
  • ongoing, iterative action
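The four properties listed for walking can be sketched as a plain loop. This is not the x-schema formalism itself, only a simplified, deterministic illustration: the `walk` function, the `distance` and `cost_per_step` parameters, and the `"done"` / `"suspended"` status labels are assumptions introduced for the example.

```python
def walk(walker, goal, distance, energy, cost_per_step=1):
    """Iterate a 'step' action until the goal is reached or energy runs out.

    walker, goal  -- bindings, e.g. walker="Harry", goal="home"
    distance      -- hypothetical number of steps remaining to the goal
    energy        -- resource consumed by each step
    """
    steps = 0
    # Ongoing, iterative action: keep stepping while the termination
    # condition (walker at goal) is false and the resource is available.
    while distance > 0 and energy >= cost_per_step:
        energy -= cost_per_step  # each step consumes energy
        distance -= 1
        steps += 1
    status = "done" if distance == 0 else "suspended"
    return {"walker": walker, "goal": goal, "steps": steps,
            "energy": energy, "status": status}


if __name__ == "__main__":
    print(walk("Harry", "home", distance=3, energy=5))
    print(walk("Harry", "home", distance=5, energy=2))
```

Even in this reduced form, inferences such as "if Harry runs out of energy he does not get home" fall out of simulating the action, which is the sense in which inferences derive from knowledge of execution.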