Parallel FFT Implementation on CELL Processor

This project modifies an existing FFT algorithm to exploit the CELL processor's parallel processing capabilities, with the aim of speeding up medical imaging devices such as MRI scanners. A recursive version runs on the PPU and an iterative version runs on the SPUs.

Presentation Transcript

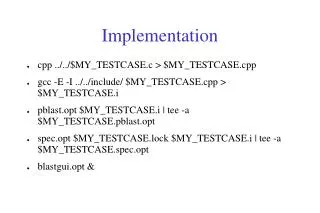

[Poster diagram labels: Preprocessing, Post-processing]

Senior Project – Computer Science – 2007
1D FFTs on the CELL Processor
Gene Davison
Advisor – Professor Burns

Background

IBM's CELL processor is a new approach to multicore processing. It contains 1 PPU (PowerPC Processor Unit) for standard processing and 8 SPUs (Synergistic Processor Units) that the PPU can use to perform calculations in parallel.

FFTs, or Fast Fourier Transforms, are a family of algorithms for computing the Discrete Fourier Transform (DFT). Used extensively in a wide variety of applications, such as signal processing in MRIs, the FFT computes the DFT in O(n lg n) time, improving on the O(n²) time of the straightforward implementation.

Abstract

The goal was to take an existing FFT algorithm and modify it to take advantage of the parallel processing capabilities of the CELL processor. The design maximizes the share of the work done in parallel, with the goal of approaching the theoretical speedup predicted by Amdahl's Law. Such an implementation could be used to speed up the results of medical imaging devices such as MRIs, or the saved processing time could instead be spent on improving the quality of such machines' output.

Implementation

To take full advantage of the parallel computation the CELL processor offers, the FFT algorithm had to be adjusted so that the majority of the work could be done in parallel with no interdependence. To obtain this property, I chose to implement a recursive version on the PPU, so that executing code on the SPUs could happen naturally as part of the algorithm. In this version, the recursive call does not invoke the same FFT implementation again; it invokes the code on the SPUs instead. This allows the data to be broken into eighths and computed in parallel. The data is then recombined after the SPUs have completed their computation.

The code on the SPUs has a different concern. Rather than parallelization of computation, the constraint on the SPUs is limited memory space. To compensate for this, I chose a straightforward iterative implementation for the SPUs, which limits the amount of memory required for execution.
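The split, compute, and recombine structure described above can be sketched as follows. This is an illustrative sketch in Python, not the project's Cell SDK code, and every name in it (`iterative_fft`, `parallel_fft`, `naive_dft`, `NUM_WORKERS`) is hypothetical. It assumes the eighths are formed by an interleaved (decimation-in-time) split, which the poster does not specify, and it uses a thread pool only as a stand-in for the 8 SPUs, so it shows the dispatch pattern rather than real speedup.

```python
import cmath
from concurrent.futures import ThreadPoolExecutor

NUM_WORKERS = 8  # stands in for the 8 SPUs (assumption for illustration)


def naive_dft(x):
    """Straightforward O(n^2) DFT, used here only as a reference."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * t * k / n) for t in range(n))
            for k in range(n)]


def iterative_fft(x):
    """Iterative radix-2 FFT; len(x) must be a power of two.

    No recursion, so working memory stays at one copy of the input,
    which is the property the SPU-side code is after.
    """
    n = len(x)
    a = list(x)
    # Bit-reversal permutation puts inputs in butterfly order.
    j = 0
    for i in range(1, n):
        bit = n >> 1
        while j & bit:
            j ^= bit
            bit >>= 1
        j |= bit
        if i < j:
            a[i], a[j] = a[j], a[i]
    # Butterfly passes over blocks of size 2, 4, ..., n.
    size = 2
    while size <= n:
        half = size // 2
        w_step = cmath.exp(-2j * cmath.pi / size)
        for start in range(0, n, size):
            w = 1 + 0j
            for k in range(half):
                u = a[start + k]
                v = a[start + k + half] * w
                a[start + k] = u + v
                a[start + k + half] = u - v
                w *= w_step
        size *= 2
    return a


def parallel_fft(x):
    """Split into eighths, transform each independently, then recombine."""
    n = len(x)
    if n < NUM_WORKERS or n & (n - 1):
        raise ValueError("length must be a power of two and at least 8")
    # Chunk r holds x[r], x[r + 8], x[r + 16], ...; chunks are independent.
    chunks = [x[r::NUM_WORKERS] for r in range(NUM_WORKERS)]
    with ThreadPoolExecutor(max_workers=NUM_WORKERS) as pool:
        partial = list(pool.map(iterative_fft, chunks))
    # Recombine: X[k] = sum over r of W_n^(r*k) * X_r[k mod (n/8)].
    m = n // NUM_WORKERS
    out = []
    for k in range(n):
        acc = 0j
        for r in range(NUM_WORKERS):
            acc += cmath.exp(-2j * cmath.pi * r * k / n) * partial[r][k % m]
        out.append(acc)
    return out
```

Calling `parallel_fft(samples)` on a list whose length is a power of two (at least 8) should agree with `naive_dft(samples)` up to floating-point rounding. Only the recombination loop runs serially after the eight sub-transforms finish, and that serial portion is what Amdahl's Law says will bound the achievable speedup.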