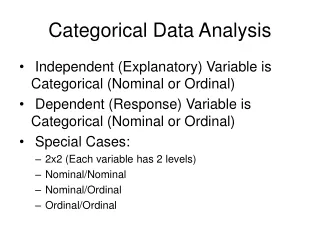

Categorical Data Analysis

Categorical Data Analysis Week 2

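Binary Response Models
• binary and binomial responses
  • binary: y assumes values of 0 or 1
  • binomial: y is the number of “successes” in n “trials”
• distributions
  • Bernoulli: $\Pr(y \mid p) = p^{y}(1-p)^{1-y}$, $y \in \{0, 1\}$
  • Binomial: $\Pr(y \mid n, p) = \binom{n}{y} p^{y}(1-p)^{n-y}$, $y = 0, 1, \dots, n$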

Transformational Approach
• linear probability model
• use grouped data (events/trials): $\hat{p}_i = y_i / n_i$
• “identity” link: $\eta_i = p_i$
• linear predictor: $\eta_i = x_i'\beta$
• problem: predictions can fall outside [0,1]

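The Logit Model
• logit transformation: $\operatorname{logit}(p) = \log\frac{p}{1-p} = x'\beta$
• inverse logit: $p = \frac{\exp(x'\beta)}{1+\exp(x'\beta)}$
• ensures that p is in [0,1] for all values of x and β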
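The two transformations on this slide can be sketched in a few lines. This is a Python illustration rather than Stata, and the function names `logit` and `inv_logit` are chosen here for the example.

```python
import math

def logit(p):
    """Log odds of a probability p strictly between 0 and 1."""
    return math.log(p / (1.0 - p))

def inv_logit(eta):
    """Map a linear predictor eta on the real line back to a probability."""
    return 1.0 / (1.0 + math.exp(-eta))

# However extreme the linear predictor, the fitted probability stays inside (0, 1).
for eta in (-5.0, 0.0, 5.0):
    print(eta, inv_logit(eta))
```

Applying `inv_logit(logit(p))` returns `p`, which is what makes the logit usable as a link function.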

The Logit Model
• odds and odds ratios are the key to understanding and interpreting this model
• the log odds transformation is a “stretching” transformation that maps probabilities to the real line

The Logit Transformation
• properties of the logit: approximately linear in p near p = 0.5

Odds, Odds Ratios, and Relative Risk
• odds of “success” is the ratio: odds $= \frac{p}{1-p}$
• consider two groups with success probabilities $p_1$ and $p_2$
• odds ratio (OR) is a measure of the odds of success in group 1 relative to group 2: OR $= \frac{p_1/(1-p_1)}{p_2/(1-p_2)}$

Odds Ratio
• 2 × 2 table: rows X = 0, 1; columns Y = 0, 1
• OR is the cross-product ratio (compare the x = 1 group to the x = 0 group)
• odds of y = 1 are 4 times as high when x = 1 as when x = 0

Odds Ratio
• equivalent interpretation
• odds of y = 1 when x = 0 are 0.225 times the odds when x = 1
• the proportional reduction in the odds is 1 − 0.225 = 0.775
• odds of y = 1 are 77.5% lower when x = 0 than when x = 1

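Log Odds Ratios
• Consider the model: $\operatorname{logit}(p) = \beta_0 + \beta_1 D$
• D is a dummy variable coded 1 if group 1 and 0 otherwise.
• group 1: $\operatorname{logit}(p_1) = \beta_0 + \beta_1$
• group 2: $\operatorname{logit}(p_2) = \beta_0$
• LOR: $\beta_1$; OR: $\exp(\beta_1)$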

Relative Risk
• similar to OR, but works with rates
• relative risk or rate ratio (RR) is the rate in group 1 relative to group 2: RR $= p_1 / p_2$
• OR → RR as p → 0
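The convergence of OR toward RR for rare outcomes can be checked numerically. The sketch below is Python, not Stata, and the probabilities are made up for illustration: group 1 always has twice the rate of group 2.

```python
def odds(p):
    """Odds of success for probability p."""
    return p / (1.0 - p)

def odds_ratio(p1, p2):
    return odds(p1) / odds(p2)

def relative_risk(p1, p2):
    return p1 / p2

# Hypothetical rates: RR is held at 2 while the outcome becomes rarer.
for p2 in (0.2, 0.02, 0.002):
    p1 = 2.0 * p2
    print(p2, relative_risk(p1, p2), odds_ratio(p1, p2))
```

With a common outcome (p2 = 0.2) the OR is noticeably larger than the RR of 2; as the outcome becomes rare the two nearly coincide.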

Tutorial: odds and odds ratios • consider the following data

Tutorial: odds and odds ratios
• read table:

    clear
    input educ psex f
    0 0 873
    0 1 1190
    1 0 533
    1 1 1208
    end
    label define edlev 0 "HS or less" 1 "Col or more"
    label val educ edlev
    label var educ "education"

Tutorial: odds and odds ratios
• compute odds:

    tabodds psex educ [fw=f]

• verify by hand

Tutorial: odds and odds ratios
• compute odds ratios:

    tabodds psex educ [fw=f], or

• verify by hand
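The “verify by hand” step can be sketched as follows. This is a Python check rather than Stata output; it uses the four frequencies from the tutorial's `input` block, and the variable names are chosen for this example.

```python
# counts[educ][psex], copied from the tutorial's input block
counts = {0: {0: 873, 1: 1190},
          1: {0: 533, 1: 1208}}

# Odds of psex = 1 within each education group
odds = {educ: cell[1] / cell[0] for educ, cell in counts.items()}

# Odds ratio comparing educ = 1 to educ = 0, computed two equivalent ways
odds_ratio = odds[1] / odds[0]
cross_product = (counts[1][1] * counts[0][0]) / (counts[1][0] * counts[0][1])

print(odds)
print(odds_ratio, cross_product)
```

The ratio of the two group odds and the cross-product ratio are the same quantity, so the two printed values agree.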

Tutorial: odds and odds ratios
• stat facts: variances of functions
  • used in statistical significance tests and in forming confidence intervals
• basic rule for variances of linear transformations
  • if g(x) = a + bx is a linear function of x, then $\operatorname{Var}[g(x)] = b^2 \operatorname{Var}(x)$
  • this is a trivial case of the delta method applied to a single variable
• the delta method for the variance of a nonlinear function g(x) of a single variable is $\operatorname{Var}[g(x)] \approx [g'(x)]^2 \operatorname{Var}(x)$

Tutorial: odds and odds ratios
• stat facts: variances of odds and odds ratios
  • we can use the delta method to find the variance of the odds and of the odds ratios
  • from the asymptotic (large-sample theory) perspective it is best to work with log odds and log odds ratios
  • the log odds ratio converges to normality at a faster rate than the odds ratio, so statistical tests may be more appropriate on log odds ratios (nonlinear functions of p)
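As a worked instance of the delta method for the log odds, assume the sample proportion $\hat{p}$ comes from n independent trials (the symbols below are introduced for this sketch):

```latex
\begin{align*}
\operatorname{Var}(\hat{p}) &= \frac{p(1-p)}{n}, \qquad
g(p) = \log\frac{p}{1-p}, \qquad
g'(p) = \frac{1}{p(1-p)} \\
\operatorname{Var}\bigl[g(\hat{p})\bigr]
  &\approx \bigl[g'(p)\bigr]^2 \operatorname{Var}(\hat{p})
   = \frac{1}{n\,p(1-p)}
   = \frac{1}{np} + \frac{1}{n(1-p)}
\end{align*}
```

In words, the approximate variance of a log odds is one over the expected number of successes plus one over the expected number of failures.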

Tutorial: odds and odds ratios
• stat facts:
  • the log odds ratio is the difference in the log odds for two groups
  • groups are independent
  • the variance of a difference of independent quantities is the sum of the variances, so Var(LOR) = Var(log odds in group 1) + Var(log odds in group 2)
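Putting these facts together for the tutorial data: the delta-method variance of each group's log odds is approximately 1/successes + 1/failures, and the two group variances add. The Python sketch below (not Stata output) uses the conventional 1.96 multiplier for a 95% interval, which is an assumption of this example.

```python
import math

# Cell frequencies from the tutorial's input block
a, b = 873, 1190    # educ = 0: psex = 0, psex = 1
c, d = 533, 1208    # educ = 1: psex = 0, psex = 1

log_or = math.log((d / c) / (b / a))

# Sum of the two group variances, each approximated as 1/failures + 1/successes
se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)

# Build the interval on the log scale, then exponentiate the endpoints
z = 1.96
ci = (math.exp(log_or - z * se), math.exp(log_or + z * se))

print(log_or, se, ci)
```

Forming the interval on the log scale and exponentiating keeps both endpoints positive, which an interval built directly on the OR scale would not guarantee.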

Tutorial: odds and odds ratios
• data structures: grouped or individual level
• note:
  • use frequency weights to handle grouped data
  • or we could “expand” the data by the frequency weights, resulting in individual-level data
  • model results from either data structure are the same
• expand the data and verify the following results:

    expand f

Tutorial: odds and odds ratios
• statistical modeling
• logit model (glm):

    glm psex educ [fw=f], f(b) eform

• logit model (logit):

    logit psex educ [fw=f], or

Tutorial: odds and odds ratios • statistical modeling (#1) • logit model (glm):

Tutorial: odds and odds ratios
• statistical modeling (#2)
• some ideas from alternative normalizations
  • what parameters will this model produce?
  • what is the interpretation of the “constant”?

    gen cons = 1
    glm psex cons educ [fw=f], nocons f(b) eform

Tutorial: odds and odds ratios • statistical modeling (#2)

Tutorial: odds and odds ratios
• statistical modeling (#3)
  • what parameters does this model produce?
  • how do you interpret them?

    gen lowed = educ == 0
    gen hied = educ == 1
    glm psex lowed hied [fw=f], nocons f(b) eform

Tutorial: odds and odds ratios
• statistical modeling (#3)
• are these odds ratios?
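One way to reason about the parameterizations is to note that a logit model with one binary predictor is saturated for this 2 × 2 table, so its maximum likelihood estimates reproduce the observed log odds exactly. The Python sketch below relies on that closed form instead of running `glm`; the variable names mirror the tutorial's but the code is illustrative only.

```python
import math

# counts[educ][psex] from the tutorial's input block
counts = {0: {0: 873, 1: 1190},
          1: {0: 533, 1: 1208}}
log_odds = {educ: math.log(cell[1] / cell[0]) for educ, cell in counts.items()}

# Constant plus educ: the constant is the log odds for educ = 0,
# and the educ coefficient is the log odds ratio.
b_cons = log_odds[0]
b_educ = log_odds[1] - log_odds[0]

# lowed and hied with no constant: each coefficient is a group's own log odds.
b_lowed = log_odds[0]
b_hied = log_odds[1]

print("constant + educ:", math.exp(b_cons), math.exp(b_educ))
print("lowed + hied   :", math.exp(b_lowed), math.exp(b_hied))
```

Under the no-constant coding the exponentiated coefficients are group odds, not odds ratios; their ratio recovers the odds ratio from the first coding.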

Tutorial: prediction
• fitted probabilities (after most recent model):

    predict p, mu
    tab educ [fw=f], sum(p) nostandard nofreq

Probit Model
• inverse probit is the CDF for a standard normal variable: $p = \Phi(x'\beta)$
• link function: $\Phi^{-1}(p) = x'\beta$
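The probit link and its inverse can be sketched with Python's standard library (this is an illustration, not the Stata implementation). The function names are chosen for this example; `NormalDist` requires Python 3.8 or later.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

def inv_probit(eta):
    """Probability implied by linear predictor eta: the standard normal CDF."""
    return std_normal.cdf(eta)

def probit(p):
    """Link function: the standard normal quantile of probability p."""
    return std_normal.inv_cdf(p)

for eta in (-2.0, 0.0, 2.0):
    print(eta, inv_probit(eta))
```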

Interpretation
• probit coefficients
  • interpreted on the scale of a standard normal variable (no log odds-ratio interpretation)
  • “scaled” versions of logit coefficients
• probit models
  • more common in certain disciplines (economics)
  • analogy with linear regression (normal latent variable)
  • more easily extended to multivariate distributions

Example: Grouped Data
• Swedish mortality data revisited (results table: logit model, probit model)

Swedish Historical Mortality Data • predictions

Programming
• Stata: generalized linear model (glm)

    glm y A2 A3 P2, family(b n) link(probit)
    glm y A2 A3 P2, family(b n) link(logit)

• idea of glm is to make the model linear in the link
• old days: Iteratively Reweighted Least Squares
• now: Fisher scoring, Newton-Raphson
• both approaches yield MLEs

Generalized Linear Models
• applies to a broad class of models
• iterative fitting (repeated updating), except for the linear model
• update parameters, weights W, and predicted values μ
• models differ in terms of W and μ and in their assumptions about the distribution of y
• common distributions for y include: normal, binomial, and Poisson
• common links include: identity, logit, probit, and log
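The repeated updating of parameters, weights, and predicted values can be sketched as a small iteratively reweighted least squares loop for a grouped-data logit model with one predictor. This is a minimal Python illustration, not Stata's `glm` code; the function name `irls_logit`, the starting values, and the stopping rule are assumptions of the example. The data are the tutorial's table collapsed to events/trials by `educ`.

```python
import math

# (x, events y, trials n): tutorial data grouped by educ
data = [(0.0, 1190, 2063), (1.0, 1208, 1741)]

def irls_logit(data, max_iter=25, tol=1e-10):
    """Fit logit(p) = b0 + b1*x by iteratively reweighted least squares."""
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        s_w = s_wx = s_wxx = s_wz = s_wxz = 0.0
        for x, y, n in data:
            eta = b0 + b1 * x                  # linear predictor
            mu = 1.0 / (1.0 + math.exp(-eta))  # predicted probability
            var = mu * (1.0 - mu)
            w = n * var                        # working weight
            z = eta + (y / n - mu) / var       # working response
            s_w += w
            s_wx += w * x
            s_wxx += w * x * x
            s_wz += w * z
            s_wxz += w * x * z
        # Weighted least squares of z on (1, x): solve the 2x2 normal equations
        det = s_w * s_wxx - s_wx ** 2
        new_b0 = (s_wxx * s_wz - s_wx * s_wxz) / det
        new_b1 = (s_w * s_wxz - s_wx * s_wz) / det
        step = abs(new_b0 - b0) + abs(new_b1 - b1)
        b0, b1 = new_b0, new_b1
        if step < tol:
            break
    return b0, b1

b0, b1 = irls_logit(data)
print(b0, b1, math.exp(b1))
```

Each pass recomputes μ and W from the current coefficients and then solves a weighted linear regression, which is the sense in which the model is made linear in the link.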

Latent Variable Approach
• example: insect mortality
• suppose a researcher exposes insects to dosage levels (u) of an insecticide and observes whether the “subject” lives or dies at that dosage
• the response is expected to depend on the insect’s tolerance (c) to that dosage level
• the insect dies if u > c and survives if u < c
• tolerance is not observed (survival is observed)

Latent Variables
• u and c are continuous latent variables
• examples:
  • women’s employment: u is the market wage and c is the reservation wage
  • migration: u is the benefit of moving and c is the cost of moving
• observed outcome y = 1 or y = 0 reveals the individual’s preference, which is assumed to maximize a rational individual’s utility function

Latent Variables
• assume linear utility and criterion functions
• over-parameterization = identification problem
• we can identify differences in components but not the separate components

Latent Variables • constraints: • Then: • where F(.) is the CDF of ε

Latent Variables and Standardization
• need to standardize the mean and variance of ε
• binary dependent variables lack inherent scales
• the magnitude of β is defined only in reference to the mean and variance of ε, which are unknown
• redefine ε to a common standard: $\varepsilon^* = (\varepsilon - a)/b$
• where a and b are two chosen constants

Standardization for Logit and Probit Models • standardization implies • F*() is the cdf of ε* • location a and scale b need to be fixed • setting • and

Standardization for Logit and Probit Models
• distribution of ε is standardized
  • standard normal → probit
  • standard logistic → logit
• both distributions have a mean of 0
• variances differ: 1 for the standard normal, $\pi^2/3$ for the standard logistic
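The difference in variances is why logit and probit coefficients sit on different scales. The Python sketch below computes the standard deviation of the standard logistic distribution and then compares the two CDFs after rescaling. The constant 1.702 is a commonly quoted rescaling factor and is an assumption of this example, not something taken from the slides.

```python
import math
from statistics import NormalDist

# Standard logistic: variance pi^2 / 3, so the SD is pi / sqrt(3), about 1.81
logistic_sd = math.pi / math.sqrt(3.0)

def logistic_cdf(x):
    return 1.0 / (1.0 + math.exp(-x))

# Assumption: 1.702 is a commonly quoted factor for matching the two curves
scale = 1.702
std_normal = NormalDist()
grid = [i / 100.0 for i in range(-600, 601)]
max_gap = max(abs(logistic_cdf(x) - std_normal.cdf(x / scale)) for x in grid)

print(round(logistic_sd, 3), max_gap)
```

Because the rescaled curves nearly coincide, the two models usually give similar fitted probabilities even though their coefficients differ by a roughly constant factor.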

Extending the Latent Variable Approach
• observed y is a dichotomous (binary) 0/1 variable
• continuous latent variable: $y^* = x'\beta + \varepsilon$
  • linear predictor + residual
• observed outcome: y = 1 if $y^* > 0$, and y = 0 otherwise

Notation
• conditional means of latent variables obtained from the index function: $E(y^* \mid x) = x'\beta$
• obtain probabilities from inverse link functions
  • logit model: $p = \frac{\exp(x'\beta)}{1+\exp(x'\beta)}$
  • probit model: $p = \Phi(x'\beta)$

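ML
• likelihood function: $L = \prod_i p_i^{y_i}(1-p_i)^{n_i - y_i}$
• where $n_i = 1$ if data are binary
• log-likelihood function: $\log L = \sum_i \left[ y_i \log p_i + (n_i - y_i)\log(1-p_i) \right]$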
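A minimal Python sketch of the binomial log-likelihood kernel, sum of y log p + (n − y) log(1 − p), which drops the binomial coefficient because it does not depend on the parameters. The function name `binomial_loglik` is chosen for this example, and the grouped data are the tutorial's events/trials by `educ`.

```python
import math

def binomial_loglik(p, y, n):
    """Log-likelihood kernel for events y out of n trials with probabilities p."""
    return sum(yi * math.log(pi) + (ni - yi) * math.log(1.0 - pi)
               for pi, yi, ni in zip(p, y, n))

# Grouped tutorial data: events and trials by educ
y = [1190, 1208]
n = [2063, 1741]

p_by_group = [yi / ni for yi, ni in zip(y, n)]   # one probability per group
p_pooled = [sum(y) / sum(n)] * len(y)            # a single common probability

ll_by_group = binomial_loglik(p_by_group, y, n)
ll_pooled = binomial_loglik(p_pooled, y, n)

# Binary data is the special case n_i = 1
ll_binary = binomial_loglik([0.5, 0.5], [1, 0], [1, 1])

print(ll_by_group, ll_pooled, ll_binary)
```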

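Assessing Models
• definitions:
  • $L_0$: likelihood of the null model (intercept only)
  • $L_f$: likelihood of the saturated model (a parameter for each cell)
  • $L_c$: likelihood of the current model
• grouped data (events/trials)
• deviance (likelihood ratio statistic): $D = -2\log(L_c / L_f) = -2(\log L_c - \log L_f)$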

Deviance
• grouped data:
  • if cell sizes are reasonably large, the deviance is distributed as chi-square
• individual-level data: $L_f = 1$ and $\log L_f = 0$
  • the deviance is not a “fit” statistic
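For grouped data the deviance can be written directly in terms of observed and fitted counts, D = 2 Σ [y log(y/μ̂) + (n − y) log((n − y)/(n − μ̂))] with μ̂ = n·p̂. The Python sketch below applies this to the tutorial's data grouped by `educ`; the function name and the choice of models are for illustration.

```python
import math

def deviance(y, n, p_hat):
    """Binomial deviance of fitted probabilities p_hat against the saturated model."""
    total = 0.0
    for yi, ni, pi in zip(y, n, p_hat):
        fitted = ni * pi
        if yi > 0:
            total += yi * math.log(yi / fitted)
        if ni - yi > 0:
            total += (ni - yi) * math.log((ni - yi) / (ni - fitted))
    return 2.0 * total

# Grouped tutorial data: events and trials by educ
y = [1190, 1208]
n = [2063, 1741]

# Null model: one common probability for both groups
d_null = deviance(y, n, [sum(y) / sum(n)] * 2)

# Model with educ: saturated for this table, so it fits the cells exactly
d_educ = deviance(y, n, [yi / ni for yi, ni in zip(y, n)])

print(d_null, d_educ)
```

The model with `educ` has a deviance of zero here only because it uses one parameter per cell; with more cells than parameters the deviance would be positive.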

Deviance
• deviance is like a residual sum of squares
  • larger values indicate poorer models
  • larger models have smaller deviance
• deviance for the more constrained model (Model 1): $D_1 = -2(\log L_1 - \log L_f)$
• deviance for the less constrained model (Model 2): $D_2 = -2(\log L_2 - \log L_f)$
• assume that Model 1 is a constrained version of Model 2

Difference in Deviance
• evaluate competing “nested” models using a likelihood ratio statistic
• model chi-square is a special case
• SAS, Stata, R, etc. report different statistics
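A likelihood ratio comparison of two nested models can be sketched as follows. The saturated log-likelihood cancels in the difference of deviances, so the statistic is −2(log L₁ − log L₂). This Python example compares the intercept-only model (Model 1) with the model including `educ` (Model 2) on the tutorial data; the p-value shortcut via `math.erfc` is valid only for 1 degree of freedom.

```python
import math

def binomial_loglik(p, y, n):
    """Log-likelihood kernel for events y out of n trials with probabilities p."""
    return sum(yi * math.log(pi) + (ni - yi) * math.log(1.0 - pi)
               for pi, yi, ni in zip(p, y, n))

# Grouped tutorial data: events and trials by educ
y = [1190, 1208]
n = [2063, 1741]

# Model 1 (constrained): common probability. Model 2: one probability per group.
ll_1 = binomial_loglik([sum(y) / sum(n)] * 2, y, n)
ll_2 = binomial_loglik([yi / ni for yi, ni in zip(y, n)], y, n)

lr = -2.0 * (ll_1 - ll_2)   # difference in deviance
df = 1                      # Model 2 has one more parameter than Model 1

# Upper-tail probability of a chi-square with 1 df (this identity holds for df = 1 only)
p_value = math.erfc(math.sqrt(lr / 2.0))

print(lr, df, p_value)
```

Because Model 1 here is the intercept-only model, this statistic is also the “model chi-square” for Model 2.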

Other Fit Statistics
• BIC & AIC (useful for non-nested models)
• basic idea of IC: penalize log L for the number of parameters (AIC/BIC) and/or the size of the sample (BIC): $IC = -2\log L + 2s \cdot df_m$
• AIC: s = 1
• BIC: s = ½ log n (n is the sample size)
• $df_m$ is the number of model parameters
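The penalty structure can be sketched with a single function, IC = −2 log L + 2·s·df_m, with s = 1 for AIC and s = ½ log n for BIC. The Python example below applies it to the intercept-only and `educ` models on the tutorial data. Taking n as the total number of individuals (3,804) instead of the number of grouped cells is an assumption of this example.

```python
import math

def binomial_loglik(p, y, n):
    """Log-likelihood kernel for events y out of n trials with probabilities p."""
    return sum(yi * math.log(pi) + (ni - yi) * math.log(1.0 - pi)
               for pi, yi, ni in zip(p, y, n))

def information_criterion(loglik, df_m, s):
    """IC = -2 log L + 2 * s * df_m."""
    return -2.0 * loglik + 2.0 * s * df_m

y = [1190, 1208]
n = [2063, 1741]
n_obs = sum(n)   # assumption: sample size counted as individuals

models = {
    "null": (binomial_loglik([sum(y) / sum(n)] * 2, y, n), 1),
    "educ": (binomial_loglik([yi / ni for yi, ni in zip(y, n)], y, n), 2),
}

aic = {name: information_criterion(ll, df, 1.0) for name, (ll, df) in models.items()}
bic = {name: information_criterion(ll, df, 0.5 * math.log(n_obs))
       for name, (ll, df) in models.items()}

print(aic)
print(bic)
```

Smaller values are preferred under both criteria; BIC penalizes the extra parameter more heavily than AIC whenever log n exceeds 2.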